Web scraping, the process of extracting data from websites, is a powerful tool for businesses, researchers, and developers alike. It enables the collection of vast amounts of information from the internet, which can be used for competitive analysis, market research, or even fueling machine learning models. However, effective web scraping requires more than just technical know-how; it demands an understanding of ethical considerations, legal boundaries, and the latest trends in technology.

What is Website Scraping

Website scraping, also known as web scraping, is the process of extracting data from websites. It involves using software or scripts to automatically access a web page, parse the HTML code of that page to retrieve the desired information, and then collect that data for further use or analysis. Web scraping is used in various fields and for numerous applications, such as data mining, information gathering, and competitive analysis.

Source: https://scrape-it.cloud/blog/web-scraping-vs-web-crawling

Tips for Effective Web Scraping

To effectively gather data through web scraping, it’s crucial to approach the process with both technical precision and ethical consideration. Here are extended tips to help ensure your web scraping efforts are successful, responsible, and yield high-quality data:

Choose the Right Tools

The choice of tools is critical in web scraping. Your selection should be based on the complexity of the task, the specific data you need to extract, and your proficiency with programming languages.

- Beautiful Soup and Scrapy are excellent for Python users. Beautiful Soup simplifies the process of parsing HTML and XML documents, making it ideal for beginners or projects requiring quick data extraction from relatively simple web pages. Scrapy, on the other hand, is more suited for large-scale web scraping and crawling projects. It’s a comprehensive framework that allows for data extraction, processing, and storage with more control and efficiency.

- Puppeteer offers a powerful API for Node.js users to control headless Chrome or Chromium browsers. It’s particularly useful for scraping dynamic content generated by JavaScript, allowing for more complex interactions with web pages, such as filling out forms or simulating mouse clicks.

- Evaluate your project’s needs against the features of these tools. For instance, if you need to scrape a JavaScript-heavy website, Puppeteer might be the better choice. For Python-centric projects or for those requiring extensive data processing capabilities, Scrapy could be more appropriate.

Respect Website Load Time

Overloading a website’s server can cause performance issues for the website and could lead to your IP being banned. To mitigate this risk:

- Implement polite scraping practices by introducing delays between your requests. This is crucial to avoid sending a flood of requests in a short period, which could strain or crash the target server.

- Scrape the website during off-peak hours if possible, when its traffic is lower. This reduces the impact of your scraping on the site’s performance and on other users’ experience.
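One way to introduce delays between requests is a small throttle object that is called before every fetch. The sketch below is only an illustration: the class name PoliteThrottle, the 2-second minimum gap, and the 1-second random jitter are assumptions, not values recommended by any particular website.

```python
import random
import time


class PoliteThrottle:
    """Enforce a minimum, slightly randomized gap between consecutive requests."""

    def __init__(self, min_delay=2.0, jitter=1.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.min_delay = min_delay      # shortest allowed gap, in seconds (assumed value)
        self.jitter = jitter            # extra random wait of 0..jitter seconds
        self._clock = clock             # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last_request = None       # time of the previous call, None before the first one

    def wait(self):
        """Pause until the gap since the previous call is long enough.

        Returns the number of seconds actually slept.
        """
        pause = 0.0
        if self._last_request is not None:
            target_gap = self.min_delay + random.uniform(0, self.jitter)
            elapsed = self._clock() - self._last_request
            pause = max(0.0, target_gap - elapsed)
            if pause > 0:
                self._sleep(pause)
        self._last_request = self._clock()
        return pause
```

In a scraping loop, you would call wait() on a single shared PoliteThrottle instance immediately before each request. The first call returns at once, and later calls sleep only for whatever part of the gap has not already elapsed.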

Stay Stealthy

Avoiding detection is often necessary when scraping websites that employ anti-scraping measures. To do so:

- Rotate user agents and IP addresses to prevent the website from flagging your scraper as a bot. This can be achieved through the use of proxy servers or VPNs and by changing the user agent string in your scraping requests.

- Implement CAPTCHA solving techniques if you’re dealing with websites that use CAPTCHAs to block automated access. Though this can be challenging and might require the use of third-party services, it’s sometimes necessary for accessing certain data.
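Changing the user agent string per request can be sketched with the standard library alone. In the example below, make_request_builder is a hypothetical helper and the user agent strings are made-up placeholders, not a current or vetted list. Proxy rotation and CAPTCHA handling are not shown.

```python
import itertools
import urllib.request


def make_request_builder(user_agents):
    """Return a function that builds requests, cycling through the given user agents."""
    if not user_agents:
        raise ValueError("at least one user agent string is required")
    rotation = itertools.cycle(user_agents)

    def build(url):
        # Each call takes the next user agent in the rotation.
        return urllib.request.Request(url, headers={"User-Agent": next(rotation)})

    return build


# Placeholder strings for illustration only.
EXAMPLE_USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) ExampleBrowser/1.0",
    "Mozilla/5.0 (X11; Linux x86_64) ExampleBrowser/2.0",
]

build_request = make_request_builder(EXAMPLE_USER_AGENTS)
request = build_request("https://example.com")
```

The resulting Request object can be passed to urllib.request.urlopen. Building the request separately from sending it keeps the rotation logic easy to test without touching the network.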

Ensure Data Accuracy

Websites frequently change their layout and structure, which can break your scraping scripts.

- Regularly check the consistency and structure of the website you are scraping. This can be done manually or by implementing automated tests that alert you to changes in the website’s HTML structure.

- Validate the data you scrape both during and after the extraction process. Ensure that the data collected matches the structure and format you expect. This might involve checks for data completeness, accuracy, and consistency.
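The validation checks described above could look something like the following sketch. The field names (title, price, url) and the price format are assumptions chosen for illustration; a real project would substitute the fields and formats it actually expects.

```python
import re

# Assumed schema: every record should have these fields (hypothetical names).
REQUIRED_FIELDS = ("title", "price", "url")
# Assumed format: digits with an optional one- or two-digit decimal part.
PRICE_PATTERN = re.compile(r"^\d+(\.\d{1,2})?$")


def validate_record(record):
    """Return a list of problems found in one scraped record (empty if it passed)."""
    problems = []
    # Completeness: every required field must be present and non-blank.
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None or not str(value).strip():
            problems.append(f"missing {field}")
    # Format: check values that are present against the expected shape.
    price = str(record.get("price") or "").strip()
    if price and not PRICE_PATTERN.match(price):
        problems.append(f"unexpected price format: {price!r}")
    url = str(record.get("url") or "").strip()
    if url and not url.startswith(("http://", "https://")):
        problems.append(f"unexpected url format: {url!r}")
    return problems


def failure_rate(records):
    """Fraction of records with at least one problem; an empty scrape counts as 1.0."""
    if not records:
        return 1.0
    failed = sum(1 for record in records if validate_record(record))
    return failed / len(records)
```

A failure rate that jumps suddenly between runs is a useful signal that the website’s HTML structure may have changed and the extraction rules need updating.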

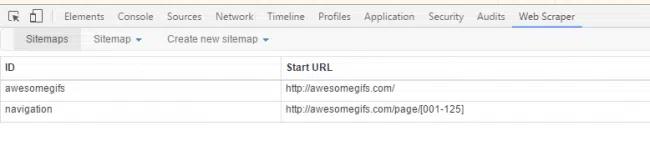

Tools for Website Scraping

In the realm of web scraping, the selection of the right tools can significantly impact the efficiency and effectiveness of your data extraction efforts. There are several robust tools and frameworks designed to cater to various needs, from simple data collection tasks to complex web crawling projects.

Beautiful Soup is a Python library that simplifies the process of parsing HTML and XML documents. It’s especially useful for small-scale projects and for those new to web scraping, providing a straightforward way to navigate and search the parse tree it creates from web pages.

Scrapy, another Python-based tool, is a more comprehensive framework suited for large-scale web scraping and crawling. It allows users to write rules to systematically extract data from websites, making it ideal for projects requiring deep data mining or the extraction of data from multiple pages and websites.

Puppeteer is a Node library which provides a high-level API to control Chrome or Chromium over the DevTools Protocol. It’s particularly useful for interacting with web pages that rely heavily on JavaScript, allowing for dynamic data extraction that mimics human browsing behavior.

In addition to these tools, PromptCloud offers specialized web scraping services that cater to businesses and individuals needing large-scale, customized data extraction solutions. PromptCloud’s services streamline the web scraping process, handling everything from data extraction to cleaning and delivery in a structured format. This can be particularly beneficial for organizations looking to leverage web data without investing in the development and maintenance of in-house scraping tools. With its scalable infrastructure and expertise in handling complex data extraction requirements, PromptCloud provides a comprehensive solution for those looking to derive actionable insights from web data efficiently.

Trends Shaping Website Scraping

AI and ML Integration

Artificial intelligence and machine learning are making it easier to interpret and categorize scraped data, enhancing the efficiency of data analysis processes.

Increased Legal Scrutiny

As web scraping becomes more prevalent, legal frameworks around the world are evolving. Staying informed about these changes is crucial for conducting ethical scraping.

Cloud-Based Scraping Services

Cloud services offer scalable solutions for web scraping, allowing businesses to handle large-scale data extraction without investing in infrastructure.

Conclusion

Web scraping is a potent tool that, when used responsibly, can provide significant insights and competitive advantages. By choosing the right tools, adhering to legal and ethical standards, and staying abreast of the latest trends, you can harness the full potential of web scraping for your projects.

To fully leverage the power of web data for your business or project, consider exploring PromptCloud’s custom web scraping services. Whether you’re looking to monitor market trends, gather competitive intelligence, or enrich your data analytics endeavors, PromptCloud offers scalable, end-to-end data solutions tailored to your specific needs. With advanced technologies and expert support, we ensure seamless data extraction, processing, and delivery, allowing you to focus on deriving actionable insights and driving strategic decisions.

Ready to transform your approach to data collection and analysis? Visit PromptCloud today to learn more about our custom web scraping services and how we can help you unlock the full potential of web data for your business. Contact us now to discuss your project requirements and take the first step towards data-driven success.

Frequently asked questions (FAQs)

Is it legal to scrape websites?

The legality of web scraping depends on several factors, including the way the data is scraped, the nature of the data, and how the scraped data is used.

- Terms of Service: Many websites include clauses in their terms of service that specifically prohibit web scraping. Ignoring these terms can potentially lead to legal action against the scraper. It’s essential to review and understand the terms of service of any website before beginning to scrape it.

- Copyrighted Material: If the data being scraped is copyrighted, using it without permission could infringe on the copyright holder’s rights. This is particularly relevant if the scraped data is to be republished or used in a way that competes with the original source.

- Personal Data: Laws like the General Data Protection Regulation (GDPR) in the European Union place strict restrictions on the collection and use of personal data. Scraping personal information without consent can lead to legal consequences under these regulations.

- Computer Fraud and Abuse Act (CFAA): In the United States, the CFAA has been interpreted to make unauthorized access to computer systems (including websites) a criminal offense. This law can apply to web scraping if the scraper circumvents technical barriers set by the website.

- Bots and Automated Access: Some websites use a robots.txt file to specify how and whether bots should interact with the site. While ignoring robots.txt is not illegal in itself, it can be considered a breach of the website’s terms of use.
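For the robots.txt point, Python’s standard library includes urllib.robotparser, which can tell you whether a given path is allowed for your bot. The sketch below parses an inline sample file so it runs offline. The rules, the "my-scraper" user agent name, and the helper build_robots_parser are all illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content, made up for this example.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_robots_parser(robots_txt):
    """Parse robots.txt text and return a ready-to-query RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_robots_parser(SAMPLE_ROBOTS)
allowed_public = parser.can_fetch("my-scraper", "https://example.com/products")
allowed_private = parser.can_fetch("my-scraper", "https://example.com/private/data")
delay = parser.crawl_delay("my-scraper")

print(allowed_public)   # True
print(allowed_private)  # False
print(delay)            # 5
```

To check a live site instead, you would call set_url() with the address of the site’s robots.txt file and then read() before querying can_fetch().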

What is scraping a website?

Scraping a website, or web scraping, refers to the process of using automated software to extract data from websites. This method is used to gather information from web pages by parsing the HTML code of the website to retrieve the content you’re interested in. Web scraping is commonly used for a variety of purposes, such as data analysis, competitive research, price monitoring, real-time data integration, and more.

The basic steps involved in web scraping include:

- Sending a Request: The scraper software makes an HTTP request to the URL of the webpage you want to extract data from.

- Parsing the Response: After the website responds with the HTML content of the page, the scraper parses the HTML code to identify the specific data points of interest.

- Extracting Data: The identified data is then extracted from the page’s HTML structure.

- Storing Data: The extracted data is saved in a structured format, such as CSV, Excel, or a database, for further processing or analysis.
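The four steps above can be sketched using only Python’s standard library. This is a minimal illustration, not a production scraper: the function and class names are hypothetical, the extraction target (the text of every h2 element) is an arbitrary choice, and the sample HTML is inline so the example runs without network access.

```python
import csv
import io
import urllib.request
from html.parser import HTMLParser


def fetch_html(url, timeout=10):
    """Step 1: send an HTTP GET request and return the response body as text."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class HeadingExtractor(HTMLParser):
    """Steps 2 and 3: parse the HTML and collect the text of every <h2> element."""

    def __init__(self):
        super().__init__()
        self._in_heading = False
        self._buffer = []
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_heading = True
            self._buffer = []

    def handle_data(self, data):
        # Text inside nested tags (such as <em>) is still part of the heading.
        if self._in_heading:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "h2" and self._in_heading:
            self.headings.append("".join(self._buffer).strip())
            self._in_heading = False


def extract_headings(html):
    parser = HeadingExtractor()
    parser.feed(html)
    parser.close()
    return parser.headings


def to_csv(headings):
    """Step 4: serialize the extracted values as CSV text with a header row."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["heading"])
    for heading in headings:
        writer.writerow([heading])
    return out.getvalue()


# Inline sample page so the example does not depend on a live website.
SAMPLE_HTML = "<html><body><h2>First</h2><p>text</p><h2>Second <em>item</em></h2></body></html>"
headings = extract_headings(SAMPLE_HTML)
csv_text = to_csv(headings)
print(csv_text)
```

For a real page, you would pass the result of fetch_html(url) to extract_headings() instead of the sample string, and write csv_text to a file or load the rows into a database.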

Web scraping can be performed using various tools and programming languages, with Python being particularly popular due to libraries such as Beautiful Soup and Scrapy, which simplify the extraction and parsing of HTML. Other tools like Selenium or Puppeteer can automate web browsers to scrape data from dynamic websites that rely on JavaScript to load content.

While web scraping can be a powerful tool for data collection, it’s important to conduct it responsibly and ethically, taking into account legal considerations and the potential impact on the websites being scraped.

How can I scrape a website for free?

Scraping a website for free is entirely possible with the use of open-source tools and libraries available today. Here’s a step-by-step guide on how you can do it, primarily focusing on Python, one of the most popular languages for web scraping due to its simplicity and powerful libraries.

Step 1: Install Python

Ensure you have Python installed on your computer. Use a Python 3 release, since Python 2 reached end of life in 2020 and is no longer supported. You can download Python from the official website.

Step 2: Choose a Web Scraping Library

For beginners and those looking to scrape websites for free, two Python libraries are highly recommended:

- Beautiful Soup: Great for parsing HTML and extracting the data you need. It’s user-friendly for beginners.

- Scrapy: An open-source and collaborative framework for extracting the data you need from websites. It is more suited for large-scale web scraping and crawling across multiple pages.

Step 3: Install the Necessary Libraries

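You can install Beautiful Soup and Scrapy using pip, the Python package installer. The example script in Step 4 also imports the requests library to download pages, so install it as well. Open your command line or terminal and run the following commands:

pip install beautifulsoup4

pip install requests

pip install Scrapy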

Step 4: Write Your Scraping Script

For a simple scraping task with Beautiful Soup, your script might look something like this:

```python
import requests
from bs4 import BeautifulSoup

# Target website
url = 'https://example.com'

# Fetch the page; stop early on a network stall or an HTTP error status
response = requests.get(url, timeout=10)
response.raise_for_status()

# Parse the HTML content
soup = BeautifulSoup(response.text, 'html.parser')

# Extract data
# Adjust tag_name and class_name based on your needs
data = soup.find_all('tag_name', class_='class_name')

# Print or process the data
for item in data:
    print(item.text)
```

Replace 'https://example.com', 'tag_name', and 'class_name' with the actual URL and HTML elements you’re interested in.

Step 5: Run Your Script

Run your script using Python. If using a command line or terminal, navigate to the directory containing your script and run:

python script_name.py

Replace script_name.py with the name of your Python file.

Step 6: Handle Data Ethically

Always ensure you’re scraping data ethically and legally. Respect the website’s robots.txt file, avoid overwhelming the website’s server with requests, and comply with any terms of service.

Additional Free Tools

For dynamic websites that heavily use JavaScript, you might need tools like:

- Selenium: Automates browsers to simulate real user interactions.

- Puppeteer: Provides a high-level API to control Chrome or Chromium over the DevTools Protocol.

Both tools allow for more complex scraping tasks, including interacting with web forms, infinite scrolling, and more.