What Web Scraping Really Means (And Why Most Definitions Are Wrong)

Web scraping is not just about extracting data. It is about building systems that can reliably collect, structure, and maintain data despite constant change. The real challenge begins after the first successful scrape, when scale, accuracy, and system failures start to surface.

Most explanations of web scraping stop at “extracting data from websites.” That definition is incomplete and, honestly, misleading. The moment you move beyond a one-time script, scraping stops being a data problem and becomes a systems problem. At this stage, teams are effectively building scalable web scraping systems, where reliability, monitoring, and data consistency matter more than extraction logic.

At a basic level, yes, web scraping involves:

- Sending requests to a website

- Parsing HTML or APIs

- Extracting structured data
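The three steps above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not a recommended production setup. The `name` and `price` class names, the `ProductParser` helper, and the sample markup are all assumptions made for the example.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch(url: str, timeout: float = 10.0) -> str:
    """Step 1: send a request and return the response body as text."""
    req = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


class ProductParser(HTMLParser):
    """Step 2: walk the HTML and pick up text inside known elements."""

    def __init__(self):
        super().__init__()
        self.records = []    # finished {"name": ..., "price": ...} dicts
        self._field = None   # field currently being read, if any
        self._current = {}

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class") or ""
        if css_class in ("name", "price"):  # hypothetical class names
            self._field = css_class

    def handle_data(self, data):
        if self._field and data.strip():
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        self._field = None
        if tag == "li" and self._current:
            self.records.append(self._current)
            self._current = {}


def extract(html: str) -> list[dict]:
    """Step 3: turn the parsed page into structured records."""
    parser = ProductParser()
    parser.feed(html)
    return parser.records


# Hypothetical page body, used here instead of a live request.
SAMPLE_HTML = """
<ul>
  <li><span class="name">Widget</span><span class="price">$9.99</span></li>
  <li><span class="name">Gadget</span><span class="price">$24.50</span></li>
</ul>
"""

records = extract(SAMPLE_HTML)
```

In a live run, `extract(fetch(url))` would replace the sample string. Note how much the parser assumes about the markup: every one of those assumptions is a point where a layout change can break it.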

But that’s the easy part.

The real complexity shows up when:

- The website layout changes silently

- Data formats shift across pages or regions

- JavaScript rendering breaks your extraction logic

- Anti-bot systems start blocking requests

This is where most “working scrapers” fail.

The Shift Most Teams Miss

At every stage, there is a gap between what teams think is happening and what is actually happening:

| Stage | What Teams Think | What Actually Happens |
| --- | --- | --- |
| Initial Setup | “We built a scraper” | You built a fragile extraction script |
| Early Success | Data starts flowing | No monitoring, no validation |
| At Scale | More sources added | Breakages increase exponentially |
| Production Use | Business depends on data | Data quality becomes unpredictable |

The gap between “script works” and “data is reliable” is where scraping projects collapse.

Why This Matters More in 2026

Web data today is:

- More dynamic (JS-heavy, infinite scroll, APIs behind sessions)

- More protected (fingerprinting, rate limiting, bot detection)

- More critical (feeding AI models, pricing systems, automation)

This means scraping is no longer just a developer task.

It’s infrastructure.

In fact, poor data quality is one of the biggest hidden risks in AI systems. According to the AI data standards checklist, 80% of AI project failures are tied to poor data quality and pipeline issues. This shift is why enterprises are investing in web scraping infrastructure, not just tools.

Your data pipeline shouldn’t depend on fragile scrapers

PromptCloud provides AI-ready data pipelines built on publicly accessible sources, with compliance documentation, source provenance, and usage controls baked in.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

How Web Scraping Actually Works (From Request to Dataset)

At a surface level, scraping looks linear. You request a page, extract elements, and store the data. In reality, production scraping is a multi-stage pipeline, where each layer introduces its own failure modes.

Each layer directly impacts web data pipeline reliability, which determines whether data can be trusted downstream.

The Real Flow

A reliable scraping system typically moves through these stages:

- Access Layer – The system decides how to reach the data:

- Direct HTTP requests

- Headless browsers for JS-heavy sites

- Authenticated sessions or APIs

This is where most teams underestimate complexity. Modern websites don’t just serve HTML. They serve stateful, personalized, and dynamic content.

- Rendering Layer – If the page relies on JavaScript, the scraper must:

- Execute scripts

- Wait for async content

- Handle lazy loading

A static parser fails here. This is why scrapers that work on day one suddenly return empty fields later.

- Extraction Layer – This is what most people think scraping is.

But extraction is fragile because:

- DOM structures are inconsistent

- Selectors break with minor UI changes

- Data formats vary across pages

The problem is not extraction logic. It’s the assumption that structure will stay stable.
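One way to soften that assumption is to try several extraction strategies in order and record which one matched. The sketch below is illustrative only: the three strategies, the markup they target, and the regex patterns are assumptions for this example, and a real system would likely use a proper HTML parser rather than regular expressions.

```python
import json
import re
from typing import Callable, Optional


def price_from_json_ld(html: str) -> Optional[str]:
    """Read the price from embedded JSON-LD, if the page has any."""
    match = re.search(
        r'<script type="application/ld\+json">(.*?)</script>', html, re.S
    )
    if not match:
        return None
    try:
        data = json.loads(match.group(1))
    except json.JSONDecodeError:
        return None
    offers = data.get("offers") if isinstance(data, dict) else None
    price = offers.get("price") if isinstance(offers, dict) else None
    return str(price) if price is not None else None


def price_from_class(html: str) -> Optional[str]:
    """Read the text of the first element with class="price"."""
    match = re.search(r'class="price"[^>]*>\s*([^<]+?)\s*<', html)
    return match.group(1) if match else None


def price_from_itemprop(html: str) -> Optional[str]:
    """Read the content attribute of an itemprop="price" tag."""
    match = re.search(r'itemprop="price"[^>]*content="([^"]+)"', html)
    return match.group(1) if match else None


# Ordered from most to least preferred (an assumption for this sketch).
STRATEGIES: list[tuple[str, Callable[[str], Optional[str]]]] = [
    ("json_ld", price_from_json_ld),
    ("css_class", price_from_class),
    ("itemprop", price_from_itemprop),
]


def extract_price(html: str) -> tuple[Optional[str], Optional[str]]:
    """Return (value, strategy_name), or (None, None) if nothing matched."""
    for name, strategy in STRATEGIES:
        value = strategy(html)
        if value:
            return value, name
    return None, None


old_layout = '<span class="price">19.99</span>'
new_layout = '<meta itemprop="price" content="19.99">'

print(extract_price(old_layout))
print(extract_price(new_layout))
```

Returning the strategy name matters as much as the value. If a source that always matched `json_ld` starts matching a fallback, that is a signal the layout changed, even though the data still looks fine.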

- Normalization Layer – Raw scraped data is rarely usable.

You need to:

- Standardize formats (dates, currencies, units)

- Resolve duplicates

- Align schemas across sources

Without this, your dataset becomes inconsistent, even if extraction is “successful.”
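As a rough sketch of what this layer does, the example below maps two differently shaped source records onto one schema, standardizes dates and prices, and drops duplicates. The source names, field mappings, and accepted date formats are hypothetical. In particular, reading `05/03/2026` as day/month is an assumption that would have to be confirmed per source.

```python
import re
from datetime import datetime
from decimal import Decimal

# Hypothetical per-source mappings from raw field names to the shared schema.
SOURCE_FIELD_MAP = {
    "shop_a": {"title": "name", "cost": "price", "updated": "date"},
    "shop_b": {"product_name": "name", "price_usd": "price", "last_seen": "date"},
}

# Formats tried in order; day/month order is an assumption, not a fact.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%b %d, %Y")


def normalize_date(raw: str) -> str:
    """Return the date as ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def normalize_price(raw: str) -> str:
    """Strip symbols and separators; assumes '.' is the decimal point."""
    digits = re.sub(r"[^\d.]", "", raw)
    return str(Decimal(digits).quantize(Decimal("0.01")))


def normalize_record(source: str, raw: dict) -> dict:
    """Rename source fields to the shared schema and standardize values."""
    mapping = SOURCE_FIELD_MAP[source]
    renamed = {target: raw[src] for src, target in mapping.items()}
    return {
        "name": renamed["name"].strip(),
        "price": normalize_price(renamed["price"]),
        "date": normalize_date(renamed["date"]),
    }


def dedupe(records: list[dict]) -> list[dict]:
    """Keep the first record per (name, date), ignoring case in the name."""
    seen, unique = set(), []
    for record in records:
        key = (record["name"].lower(), record["date"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


raw_items = [
    ("shop_a", {"title": "Laptop X", "cost": "$1,299.00", "updated": "2026-03-05"}),
    ("shop_b", {"product_name": " laptop x ", "price_usd": "1299", "last_seen": "05/03/2026"}),
]
clean = dedupe([normalize_record(source, raw) for source, raw in raw_items])
```

Both raw items extracted "successfully", yet they only become comparable after normalization. Here they collapse into a single record.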

- Validation Layer (Most Ignored, Most Critical) – This is where mature systems differ.

Validation includes:

- Schema checks (missing fields, type mismatches)

- Freshness checks (is data updated?)

- Completeness checks (did we capture everything?)

Most DIY setups skip this. That’s why bad data silently flows downstream.
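The three checks can be sketched as a small batch validator. The required fields, the 24-hour freshness window, and the 95% coverage threshold below are placeholder assumptions. Real thresholds depend on the source and on how the data is used.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical schema: field name -> accepted type(s).
REQUIRED_FIELDS = {"name": str, "price": (int, float), "scraped_at": datetime}


def schema_errors(record: dict) -> list[str]:
    """Schema check: report missing fields and type mismatches."""
    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if record.get(field) is None:
            errors.append(f"missing:{field}")
        elif not isinstance(record[field], expected):
            errors.append(f"type:{field}")
    return errors


def is_fresh(record: dict, now: datetime,
             max_age: timedelta = timedelta(hours=24)) -> bool:
    """Freshness check: was the record captured recently enough?"""
    return now - record["scraped_at"] <= max_age


def validate_batch(records: list[dict], expected_count: int, now: datetime,
                   min_coverage: float = 0.95) -> dict:
    """Run all three checks and return a report plus the usable records."""
    report = {"schema_failures": 0, "stale": 0, "valid": []}
    for record in records:
        if schema_errors(record):
            report["schema_failures"] += 1
        elif not is_fresh(record, now):
            report["stale"] += 1
        else:
            report["valid"].append(record)
    # Completeness check: how much of what we expected did we capture?
    coverage = len(records) / expected_count if expected_count else 0.0
    report["coverage"] = coverage
    report["complete"] = coverage >= min_coverage
    return report


now = datetime(2026, 1, 2, 12, 0, tzinfo=timezone.utc)
batch = [
    {"name": "A", "price": 9.99, "scraped_at": now - timedelta(hours=1)},
    {"name": "B", "price": "9.99", "scraped_at": now},               # wrong type
    {"name": "C", "price": 5.0, "scraped_at": now - timedelta(days=3)},  # stale
]
report = validate_batch(batch, expected_count=4, now=now)
```

The job that produced this batch would have reported success. The report shows one usable record out of an expected four.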

- Delivery Layer – Finally, data is pushed into:

- APIs

- Data warehouses

- Analytics tools

- ML pipelines

And this is where the cost of earlier mistakes shows up.

Where Systems Actually Break

Failures rarely surface at the moment of extraction. They show up later:

- A selector change causes silent data gaps

- A rate limit introduces partial datasets

- A schema drift breaks downstream models

- A rendering delay returns incomplete pages

And because most systems lack observability, teams realize this after decisions are already made on bad data.

The Core Insight

A scraper is not a script. It is a data pipeline with moving dependencies.

Which means every stage needs:

- Monitoring

- Fallback logic

- Change detection

This is exactly why simple tools plateau quickly when teams try to scale them across multiple sources or geographies.
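Change detection does not have to be elaborate. One possible approach, sketched below, is to fingerprint a page's structure (tags and class names, ignoring text) and compare it with the fingerprint recorded when the scraper was last known to work. This is an assumption-laden illustration, not a standard technique with a fixed definition, and `structure_fingerprint` is a name invented for this example.

```python
import hashlib
from html.parser import HTMLParser


class _StructureCollector(HTMLParser):
    """Collect the sequence of tag names and class attributes on a page."""

    def __init__(self):
        super().__init__()
        self.shape = []

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class") or ""
        self.shape.append(f"{tag}.{css_class}")


def structure_fingerprint(html: str) -> str:
    """Hash the page structure; text content does not affect the result."""
    collector = _StructureCollector()
    collector.feed(html)
    joined = "|".join(collector.shape)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def layout_changed(html: str, known_fingerprint: str) -> bool:
    """True when the structure differs from the last known-good layout."""
    return structure_fingerprint(html) != known_fingerprint


baseline = structure_fingerprint(
    '<div class="item"><span class="price">10.00</span></div>'
)

# Same layout, new price: not a structural change.
same_layout = '<div class="item"><span class="price">12.50</span></div>'
# Class renamed in a redesign: selectors targeting "price" would now miss.
new_layout = '<div class="item"><span class="amount">12.50</span></div>'

print(layout_changed(same_layout, baseline))
print(layout_changed(new_layout, baseline))
```

A whole-page fingerprint like this is noisy on listing pages where the number of items varies between runs. In practice it would more likely be applied to a single item template or to the set of distinct tag/class pairs.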

Types of Web Scraping

Most classifications of web scraping are too simplistic. They focus on how data is extracted, not how systems behave under real-world conditions.

The more useful way to classify scraping is based on system maturity and ability to handle change. Because that is where most implementations fail.

1. Static Scraping

This is the most basic form of scraping. It involves sending HTTP requests and parsing HTML using selectors.

Where it works:

- Simple websites with stable layouts

- Public pages with minimal JavaScript

- Low-frequency data collection

Where it breaks:

- Layout or DOM structure changes

- Dynamic content loading

- Inconsistent page templates

Static scraping creates a false sense of stability. It works well initially but fails silently as soon as the source evolves.

2. Browser-Based Scraping

This approach uses headless browsers to render pages and execute JavaScript before extraction.

Where it works:

- JavaScript-heavy websites

- Infinite scroll, lazy loading, interactive elements

- Content hidden behind user actions

Tradeoffs:

- Slower execution time

- Higher infrastructure cost

- Increased risk of detection and blocking

This method solves access problems but introduces operational complexity. It requires better resource management and retry logic.
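Retry logic is usually the first piece of that operational layer. The sketch below retries a flaky operation with exponential backoff. `TransientError` and `with_retries` are hypothetical names, and the delays are placeholders. The `sleep` parameter is injectable so the behavior can be checked without actually waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Raised for failures worth retrying (timeouts, rate limits, etc.)."""


def with_retries(fn: Callable[[], T], attempts: int = 4,
                 base_delay: float = 1.0, max_delay: float = 30.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying on TransientError with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure, do not hide it
            # Delay doubles each time: base, 2x base, 4x base, capped at max.
            sleep(min(max_delay, base_delay * 2 ** attempt))
    raise AssertionError("unreachable")


# Simulated page load that fails twice before succeeding.
calls = {"count": 0}


def flaky_render() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("render timed out")
    return "<html>rendered</html>"


waits: list[float] = []
page = with_retries(flaky_render, base_delay=0.5, sleep=waits.append)
```

The final `raise` is the important part. A retry wrapper that swallows the last failure and returns an empty page is exactly how partial datasets end up downstream.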

3. Pipeline-Based Scraping

This is the transition from scripts to systems. Scraping is treated as a data pipeline, not a one-time extraction task.

Key characteristics:

- Modular architecture (acquisition, extraction, validation, delivery)

- Schema enforcement and normalization

- Monitoring and alerting for failures

- Retry and fallback mechanisms

Why this matters:

- Data reliability improves significantly

- Failures are detected early

- Systems can scale across multiple sources

This is also where distributed or multi-agent scraping models are used to handle orchestration and fault tolerance.

Comparison: What Actually Differentiates These Approaches

| Approach | Setup Effort | Scalability | Failure Visibility | Data Reliability |
| --- | --- | --- | --- | --- |
| Static Scraping | Low | Low | None | Unreliable over time |
| Browser-Based Scraping | Medium | Medium | Partial | Moderate |
| Pipeline-Based Scraping | High | High | Strong | High |

Key Takeaway

The difference between these approaches is not technical complexity. It is how they handle change.

- Static systems assume stability

- Browser-based systems react to complexity

- Pipeline-based systems are designed for continuous change

Most teams fail because they start with static scraping and never evolve the system architecture, even as the use case becomes business-critical.

Need This at Enterprise Scale?

While DIY scraping works for small-scale extraction and one-off use cases, enterprise data requirements introduce continuous change, multi-source variability, and strict data quality expectations. Most enterprise teams evaluate reliability, maintenance overhead, and data SLAs to determine total cost of ownership.

Web Scraping Tools (What They Solve and Where They Break)

Most content lists tools as options. That’s not useful.

The real question is: what problem are tools actually solving, and where do they stop being enough?

What Web Scraping Tools Are Designed For

Web scraping tools are built for data extraction, not data reliability.

They help teams:

- Quickly pull data from websites

- Build scrapers without heavy engineering effort

- Prototype use cases and validate demand

This makes them effective in early stages where speed matters more than stability.

Categories of Web Scraping Tools

Different tools solve different layers of the problem. Treating them as interchangeable is a mistake.

No-Code / Visual Scrapers

These tools allow users to extract data using point-and-click interfaces.

They are typically used by:

- Non-technical teams

- Analysts validating a use case

- Small-scale scraping needs

They reduce setup time significantly but lack flexibility when complexity increases.

Developer Frameworks

These are code-based tools used to build custom scrapers.

They offer:

- Full control over extraction logic

- Integration with existing systems

- Ability to handle complex workflows

However, they shift the burden to internal teams. Every failure, change, or scaling issue must be handled manually.

Browser Automation Tools

These simulate user behavior and are used for dynamic websites.

They are necessary when:

- Content loads via JavaScript

- Interaction is required

They improve access but increase system cost and complexity.

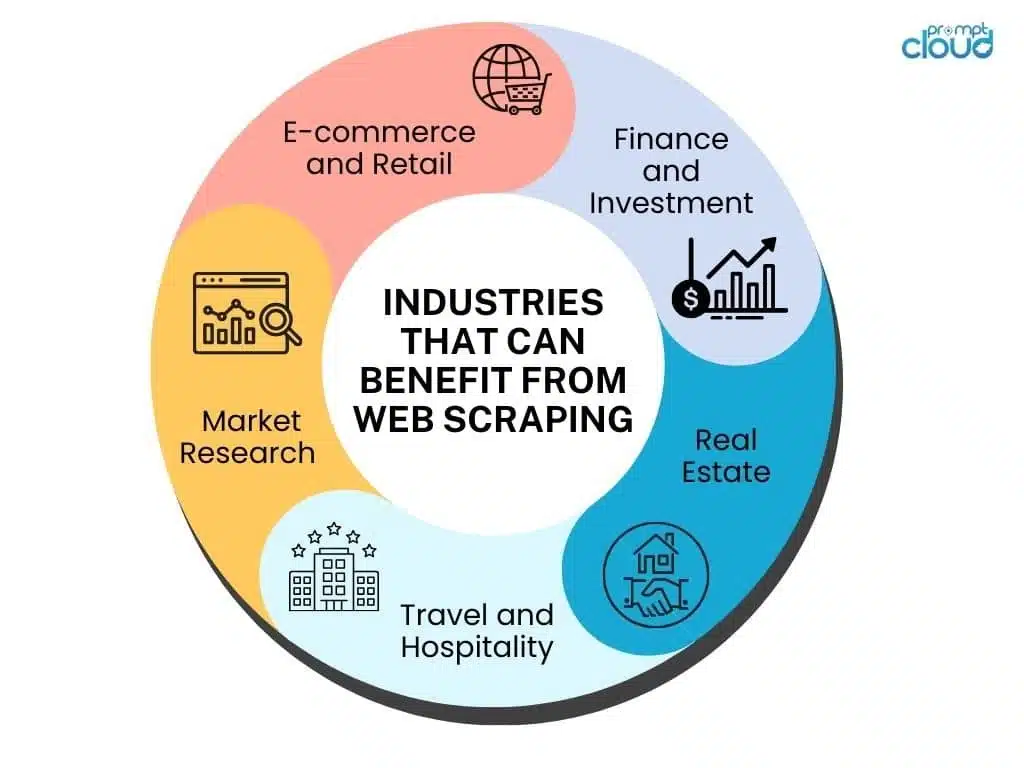

Common Web Scraping Use Cases

Most teams think of web scraping as a way to “get data.” That framing is weak.

The real value shows up when scraped data directly drives decisions, automation, or models. The use case itself is less important than how tightly it connects to action.

1. Pricing Intelligence

This is the highest-impact and most sensitive use case.

Teams monitor competitor pricing, availability, and promotions across channels to inform pricing strategy in near real time.

Why it matters: Pricing decisions become dynamic instead of reactive. Even small delays or inaccuracies here translate directly into revenue loss.

2. Market and Trend Signals

Scraping helps teams track product launches, category shifts, and customer sentiment at scale.

Why it matters: You get early signals before they show up in internal dashboards. This is especially useful for identifying emerging competitors or demand spikes.

3. AI and Model Training Data

This is where scraping is shifting from a support function to core infrastructure. Scraped data feeds LLMs, recommendation systems, and forecasting models.

Why it matters: If the data is inconsistent or stale, model performance degrades. This is no longer just a data problem. It becomes a product problem. A significant share of AI failures are tied to poor data quality, not model design.

Why Web Scraping Systems Break

Scraping systems rarely fail in obvious ways. They degrade.

Pipelines keep running. Jobs show success. Data keeps flowing. But the output slowly becomes unreliable. Missing fields, incorrect values, outdated records. The system looks healthy, but decisions start drifting.

This is not a scraping problem. It is a system design problem.

Change Is Constant, Not an Edge Case

Websites are not static systems. Layouts shift, elements move, APIs change, and anti-bot mechanisms evolve continuously.

Most teams build scrapers assuming stability. That assumption breaks first.

The result is not a crash. It is a partial extraction. Some fields stop updating. Some values get misaligned. Over time, data quality erodes without any visible failure signal.

No Visibility Into Data Quality

Most setups track system health, not data correctness.

If a job runs successfully, it is treated as success. But there is no check on whether the extracted data is:

- Complete

- Accurate

- Consistent across runs

This creates a blind spot. You don’t notice the failure because the pipeline never technically breaks.
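One simple way to close part of that blind spot is to compare field fill rates between consecutive runs. The sketch below is an illustration under assumed names and thresholds: `fill_rate_regressions` and the 10-point drop limit are not taken from any particular tool.

```python
def fill_rates(records: list[dict], fields: list[str]) -> dict[str, float]:
    """Share of records in which each field has a non-empty value."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in fields}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / total
        for field in fields
    }


def fill_rate_regressions(previous: list[dict], current: list[dict],
                          fields: list[str],
                          max_drop: float = 0.10) -> dict[str, tuple[float, float]]:
    """Fields whose fill rate fell by more than max_drop since the last run."""
    before = fill_rates(previous, fields)
    after = fill_rates(current, fields)
    return {
        field: (before[field], after[field])
        for field in fields
        if before[field] - after[field] > max_drop
    }


yesterday = [
    {"name": "A", "price": 10.0},
    {"name": "B", "price": 12.0},
    {"name": "C", "price": 8.5},
    {"name": "D", "price": 20.0},
]
# Today's job "succeeded", but half the prices came back empty.
today = [
    {"name": "A", "price": 10.0},
    {"name": "B", "price": None},
    {"name": "C", "price": 8.5},
    {"name": "D", "price": None},
]

regressions = fill_rate_regressions(yesterday, today, ["name", "price"])
```

Both runs would show as green in a job scheduler. Only the comparison exposes that `price` went from fully populated to half empty.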

Scale Amplifies Small Failures

At a small scale, issues are manageable. At a large scale, they compound.

When you move from tens to hundreds of sources:

- Variability increases

- Failures become harder to detect manually

- Fix cycles slow down

What used to be a minor issue becomes systemic. Data inconsistencies start affecting downstream systems and decisions.

How Modern Web Scraping Systems Are Designed to Handle Change

The shift is not about better tools. It is about a different system philosophy. You are no longer extracting data. You are managing a continuously changing external dependency.

Architecture Moves From Scripts to Systems

In basic setups, scraping is a single flow. Fetch, parse, store.

Modern systems break this into layers so change does not cascade. Extraction, validation, and delivery operate independently. When something breaks, it is contained and visible instead of silently corrupting the output.
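A minimal sketch of that containment idea: each record passes through named stages, and a record that fails any stage is quarantined with the stage name and the error instead of stopping the run or flowing through. The stage functions and the `PipelineResult` shape are hypothetical and only illustrate the pattern.

```python
from dataclasses import dataclass, field
from typing import Callable

Stage = Callable[[dict], dict]


@dataclass
class PipelineResult:
    delivered: list = field(default_factory=list)
    # Each entry: (stage name, original item, error message).
    quarantined: list = field(default_factory=list)


def run_pipeline(items: list[dict],
                 stages: list[tuple[str, Stage]]) -> PipelineResult:
    """Run every item through the stages, isolating failures per item."""
    result = PipelineResult()
    for item in items:
        record = item
        for name, stage in stages:
            try:
                record = stage(record)
            except Exception as exc:
                result.quarantined.append((name, item, str(exc)))
                break  # contain the failure: skip later stages for this item
        else:
            result.delivered.append(record)  # reached only if no stage failed
    return result


def parse_price(record: dict) -> dict:
    """Extraction stage: turn the raw price string into a number."""
    return {**record, "price": float(record["price"])}


def require_positive_price(record: dict) -> dict:
    """Validation stage: reject values that cannot be real prices."""
    if record["price"] <= 0:
        raise ValueError("non-positive price")
    return record


STAGES = [("extract", parse_price), ("validate", require_positive_price)]

result = run_pipeline(
    [
        {"name": "A", "price": "10.5"},
        {"name": "B", "price": "n/a"},   # fails in extraction
        {"name": "C", "price": "-1"},    # fails in validation
    ],
    STAGES,
)
```

Because each quarantined entry carries the stage name, a spike in `extract` failures points at a source change, while a spike in `validate` failures points at bad values getting through extraction.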

This is what turns scraping from a fragile task into a maintainable system.

Data Quality Becomes a First-Class Concern

Stable systems assume failure and design for detection.

Instead of trusting the scraper, they validate the output. Missing fields, abnormal values, or sudden drops in records are treated as signals, not edge cases.

This changes the operating model. You stop reacting to failures and start catching them early.
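Two of those signals, sudden drops in record count and abnormal values, can be sketched as simple comparisons against recent history. The function names and the 50% thresholds below are assumptions chosen for illustration. Suitable limits vary a lot by source.

```python
from statistics import median


def volume_dropped(history: list[int], current: int,
                   max_drop: float = 0.5) -> bool:
    """True when the record count falls well below the recent median."""
    if not history:
        return False  # no baseline yet, nothing to compare against
    baseline = median(history)
    return baseline > 0 and current < baseline * (1 - max_drop)


def abnormal_values(previous: dict[str, float], current: dict[str, float],
                    max_change: float = 0.5) -> list[str]:
    """Keys whose value moved by more than max_change relative to last run."""
    flagged = []
    for key, new_value in current.items():
        old_value = previous.get(key)
        if not old_value:
            continue  # new item or zero baseline: no ratio to compute
        if abs(new_value - old_value) / old_value > max_change:
            flagged.append(key)
    return flagged


recent_counts = [1000, 980, 1020, 995]
previous_prices = {"sku-1": 10.0, "sku-2": 20.0}
# sku-2 dropping from 20.0 to 2.0 may be a real sale or a parsing slip
# (for example a lost digit). Either way it deserves a look.
current_prices = {"sku-1": 10.5, "sku-2": 2.0}

print(volume_dropped(recent_counts, 400))
print(abnormal_values(previous_prices, current_prices))
```

Neither check proves the data is wrong. They only turn a silent change into something a person or an alerting rule can see.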

Monitoring Shifts From Jobs to Data

Running jobs successfully does not mean the system is working.

Modern setups track whether the data itself is usable. Freshness, completeness, and consistency become the real indicators of system health.

Without this, failures remain invisible until they impact decisions.

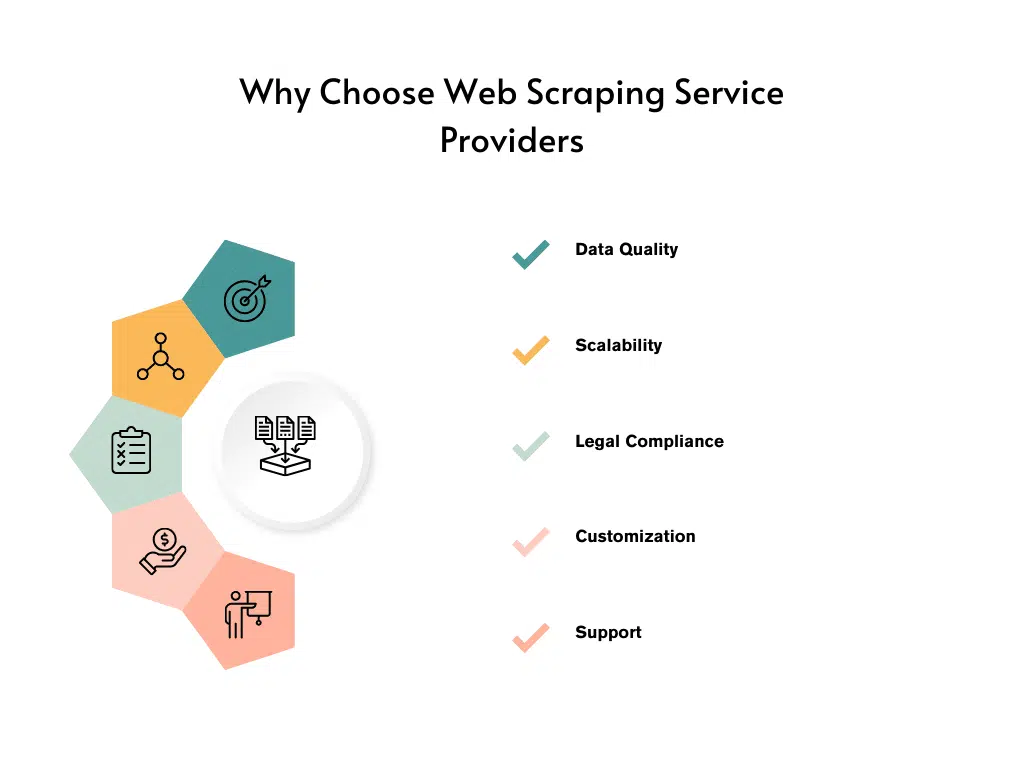

Web Scraping Tools vs Managed Services

This is where most teams make the wrong decision.

They compare tools vs services based on cost or speed of setup. That is a shallow comparison.

The real difference shows up later in maintenance burden, data reliability, and total cost of ownership.

Tools Give Control, But Also Responsibility

DIY tools and frameworks work well in the early stage.

They are flexible, fast to deploy, and give full control over logic and workflows. For small use cases or experiments, this is often the right choice.

But as usage grows, the hidden cost surfaces.

Every change in the source requires updates. Anti-bot mechanisms need handling. Failures need debugging. Over time, engineering effort shifts from building features to maintaining scrapers.

What started as a cost-saving decision becomes an ongoing operational load.

Managed Services Shift the Operating Model

Managed scraping services take a different approach.

The focus is not on extraction alone, but on delivering reliable, structured data continuously.

This includes handling:

- Source changes

- Infrastructure scaling

- Data validation and quality checks

The internal team no longer manages scraping logic. They consume data as an input, similar to any other external data system.

Where the Trade-off Actually Lies

The real decision is not tools vs services.

It is:

- Do you want to build and maintain a scraping system, or

- Do you want to consume reliable data as a product

Most teams underestimate how quickly scraping becomes a maintenance problem once it scales or becomes business-critical.

How to Choose the Right Approach for Your Use Case

Most teams don’t make a wrong choice upfront. They fail to re-evaluate the choice as the use case evolves.

What starts as a simple scraping need often becomes business-critical. The operating model stays the same. That is the problem.

Start With the Role of Data in Your System

The decision depends on how the data is used.

If the data is:

- Supporting internal analysis, tolerance for delay and gaps is higher

- Driving pricing, automation, or AI models, tolerance drops to near zero

The closer the data is to revenue or product behavior, the higher the requirement for reliability.

Evaluate Based on Failure Impact, Not Setup Effort

Most teams optimize for speed of setup. That is short-term thinking.

The better lens is: what happens when the data is wrong or delayed?

- If nothing critical breaks, a lightweight setup works

- If decisions, pricing, or models depend on it, failure cost is high

This reframes the decision from cost to risk.

Recognize the Inflection Point Early

There is always a point where:

- Number of sources increases

- Frequency of updates increases

- Data starts feeding multiple systems

At this stage, maintenance effort grows non-linearly.

If you continue with the same setup, the team spends more time fixing pipelines than using the data.

Business Benefits of Web Scraping

Web scraping is often positioned as a growth lever. That is misleading.

It only creates value when the data is timely, consistent, and trusted.

Pricing Intelligence

In pricing use cases, latency and accuracy directly affect revenue.

If competitor pricing data is delayed, decisions are reactive. If it is incorrect, decisions are wrong. Both scenarios result in measurable loss, even if it is not immediately visible.

Reliable scraping allows pricing systems to respond in near real time. That is where the value is created.

Market and Competitive Intelligence

Scraped data provides visibility into external signals that internal systems cannot capture.

This includes product launches, assortment changes, and customer sentiment.

However, without normalization and coverage, this data becomes fragmented. Instead of insight, teams get conflicting signals.

The advantage comes from consistency, not just access.

AI and Data-Driven Systems

This is where scraping becomes foundational.

Models depend on external data for training, updating, and contextual relevance. If the data pipeline is unstable, model outputs degrade.

At this stage, scraping is no longer a support function. It becomes part of the core product infrastructure.

AI Web Scraping

AI scraping is often misunderstood as a better way to extract data. That framing is incomplete.

The shift is not from manual scraping to automated scraping. It is from rule-based extraction to adaptive systems that can interpret, learn, and recover from change.

What Changes With AI Scraping

Traditional scraping depends on predefined rules. Selectors, XPath, regex. These work only as long as the structure remains predictable.

AI scraping introduces models that can:

- Interpret page structure instead of relying only on fixed selectors

- Extract meaning from semi-structured or unstructured content

- Adapt to layout variations without requiring constant reconfiguration

This reduces dependence on brittle rules and improves resilience in environments where change is constant.

Where AI Scraping Actually Helps

AI scraping is most effective in scenarios where traditional methods struggle.

Unstructured Data Extraction

Reviews, descriptions, specifications, and mixed-format content can be parsed more accurately using models that understand context.

Template Variability Across Sources

When multiple websites present the same data differently, AI models can normalize outputs without building separate logic for each source.

Change Tolerance

Instead of breaking on minor layout changes, AI-based systems can continue extracting relevant data with minimal intervention.

Where AI Scraping Does Not Solve the Problem

AI scraping is not a replacement for system design.

It does not eliminate:

- The need for validation

- Monitoring for data quality

- Handling of access restrictions and anti-bot systems

Without these layers, AI can produce outputs that look correct but are inconsistent or inaccurate.

Is Web Scraping Legal?

The legality of web scraping is not binary. It depends on execution.

Most enterprise use cases operate within acceptable boundaries because they focus on publicly available data and controlled access patterns.

Risk increases when systems are designed without constraints. Scraping behind authentication layers, ignoring platform terms, or collecting sensitive data introduces compliance issues.

The important shift is operational. Mature teams embed compliance into their systems. They define access policies, control request behavior, and maintain traceability.

Legality is not decided after the system is built. It is defined by how the system is designed.
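As one small example of building constraints into the system, the sketch below wraps Python's standard `urllib.robotparser` in a hypothetical `AccessPolicy` class that checks each URL before it is requested, picks up a crawl delay, and keeps an audit trail. Treating robots.txt as an access policy input is an assumption of this example. It is one signal among several, and the sample robots.txt content is invented.

```python
from datetime import datetime, timezone
from urllib.robotparser import RobotFileParser


class AccessPolicy:
    """Check URLs against robots.txt rules and log every decision."""

    def __init__(self, robots_txt: str, user_agent: str,
                 default_delay: float = 1.0):
        self.user_agent = user_agent
        self._robots = RobotFileParser()
        self._robots.parse(robots_txt.splitlines())
        # Use the site's Crawl-delay when present, else a default pause.
        self.delay = float(self._robots.crawl_delay(user_agent) or default_delay)
        self.audit_log: list[dict] = []

    def check(self, url: str) -> bool:
        """Return whether the URL may be fetched, recording the decision."""
        allowed = self._robots.can_fetch(self.user_agent, url)
        self.audit_log.append({
            "url": url,
            "allowed": allowed,
            "checked_at": datetime.now(timezone.utc).isoformat(),
        })
        return allowed


# Invented robots.txt content for the example.
ROBOTS_TXT = """User-agent: *
Disallow: /account/
Crawl-delay: 2
"""

policy = AccessPolicy(ROBOTS_TXT, user_agent="example-bot")

public_ok = policy.check("https://example.com/products/1")
private_ok = policy.check("https://example.com/account/orders")
```

In a real crawler, the fetch step would call `policy.check(url)` first and sleep for `policy.delay` seconds between requests, and the audit log would be written somewhere durable.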

How to Evaluate Web Scraping Providers

Vendor evaluation is where most teams optimize incorrectly.

They compare tools on surface metrics like speed, proxies, or cost per request. These are not indicators of success.

The real evaluation should focus on whether the provider can deliver reliable, decision-ready data over time. This is especially critical for teams evaluating enterprise web scraping solutions, where data reliability directly impacts revenue systems and AI models.

What Strong Providers Do Differently

They take ownership of change.

Instead of exposing raw scraping outputs, they deliver structured datasets with consistency guarantees. They handle source variability, maintain schema stability, and detect failures before they impact downstream systems.

This reduces internal maintenance and improves trust in the data.

Where Weak Providers Fall Short

They focus on extraction, not delivery.

This means:

- Breakages are passed downstream

- Data inconsistencies are not flagged

- Internal teams end up fixing issues

This creates hidden operational costs and reduces confidence in the system.

PromptCloud is built for teams that need reliable, decision-grade web data, not just extraction. It handles continuous site changes, enforces data quality checks, and delivers structured datasets with defined SLAs, so internal teams don’t spend time maintaining scrapers.

Instead of managing pipelines, you get consistent, production-ready data that can directly feed pricing systems, analytics, and AI models. This is delivered through structured data delivery systems designed for production use.

Common Myths About Web Scraping

Most discussions around web scraping are shaped by early-stage use cases. Small scripts, limited sources, short-term goals. That context creates assumptions that do not hold once scraping becomes part of a production system. The gap between perception and reality is where most failures begin.

Myth 1: Web Scraping Is a One-Time Setup

Web scraping is often treated like a build-and-forget task. A script is written, deployed, and expected to keep working.

In reality, websites behave like constantly evolving systems. Layouts shift, elements move, APIs change, and access patterns get tighter. Scrapers do not break immediately when this happens. They degrade over time.

Fields go missing, values get misaligned, and datasets lose integrity without triggering obvious failures. What appears stable is often quietly drifting away from accuracy.

Myth 2: If the Scraper Runs, the Data Is Correct

Execution success is frequently mistaken for data correctness.

A scraper completing its run only confirms that the pipeline executed. It does not guarantee that the output is valid. Data can be structurally complete but logically incorrect.

Prices may be outdated, mappings may be wrong, or attributes may be partially extracted. Without validation, these issues remain invisible until they impact decisions.

Myth 3: Proxies Solve Most Scraping Problems

Proxies are often seen as a primary solution for scraping challenges.

They help manage access and reduce blocking, but they do not address data quality. They cannot detect incorrect parsing, fix broken selectors, or ensure consistency across sources.

Focusing only on access creates a system that runs successfully but produces unreliable output.

Myth 4: Web Scraping Is Illegal

There is a widespread belief that web scraping is inherently illegal.

In practice, legality depends on how scraping is executed. Most enterprise use cases operate within acceptable boundaries by focusing on publicly available data and controlled access patterns.

Risk increases when systems bypass restrictions, ignore platform constraints, or collect sensitive data. The distinction lies in execution, not the act itself.

Organizations that embed compliance into system design operate with far fewer risks than those that treat it as an afterthought.

Myth 5: Scraping Tools Can Handle Everything

Scraping tools are effective for getting started. They simplify extraction and reduce setup time.

However, they are not designed to handle continuous change, multi-source variability, or long-term consistency. As use cases grow, limitations become visible.

Breakages increase, outputs diverge, and internal teams spend more time maintaining pipelines than using the data.

Myth 6: Scaling Scraping Is Just Adding More Infrastructure

Scaling is often misunderstood as a volume problem.

In reality, scaling introduces complexity. Each new source behaves differently. Structures vary, update frequencies change, and detection mechanisms evolve.

This makes failures harder to detect and resolve. Systems not designed for variability accumulate issues that compound over time.

Myth 7: All Data Sources Behave the Same

A common assumption is that once a scraper works for one site, it can be replicated across others.

In practice, every source has its own structure, logic, and behavior. Treating them as uniform leads to inconsistent outputs and unreliable datasets.

Mature systems account for this variability instead of forcing standardization where it does not exist.

Web scraping is often framed as a technical task. In reality, it is a system design challenge centered around change, reliability, and trust in data.

Web scraping is widely used for data extraction and automation, but its real-world complexity shows up when pages are dynamic, structures change, and teams need to keep data usable over time. IBM’s overview is relevant here because it explains how traditional web scraping works, why maintenance becomes difficult, and why responsible execution matters.

FAQs

1. How do you choose the right web scraping method for a project?

The right method depends on how the data is delivered on the website and how critical it is to your business. Simple HTML pages can be handled with basic scrapers, while dynamic or high-frequency data requires browser-based or pipeline-driven systems. The decision should be based on reliability requirements, not just ease of setup.

2. What challenges do companies face when scaling web scraping?

As scraping scales, variability across websites increases, and maintaining consistency becomes difficult. Teams struggle with handling structural changes, ensuring data quality, and managing infrastructure efficiently. These challenges often lead to higher maintenance costs and unreliable outputs if not addressed at the system level.

3. Can web scraping be used for real-time data monitoring?

Yes, but real-time scraping requires systems designed for frequent updates, low-latency extraction, and continuous monitoring. Standard scraping setups often fail in real-time scenarios because they cannot handle rapid changes or ensure consistent data delivery at high frequency.

4. What data quality issues are common in web scraping?

Common issues include missing fields, incorrect mappings, duplicate records, and outdated data. These problems often go unnoticed because pipelines continue running even when data quality degrades. Without validation and monitoring, these issues can affect downstream decisions.

5. When should a business move from DIY scraping to a managed solution?

The shift typically happens when scraped data starts impacting pricing, analytics, or product decisions. At this stage, maintenance effort increases, and data reliability becomes critical. Businesses move to managed solutions when the cost of maintaining scrapers exceeds the cost of ensuring consistent, high-quality data delivery.