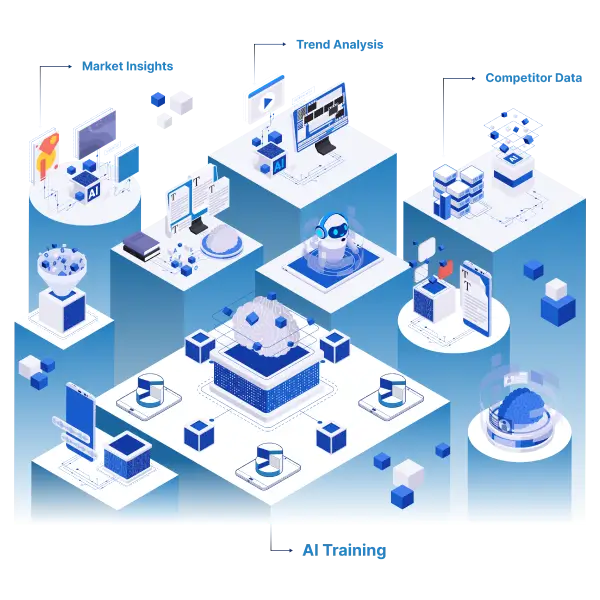

Automated Web Scraping Services – Scalable & Reliable Data Extraction

Extract High-Quality Data at Scale with Automated Web Scraping

Looking to grow your business with actionable insights? PromptCloud’s automated web scraping services provide scalable, reliable, high-quality data extraction. From real-time market intelligence to large-scale competitor tracking, we deliver customized data solutions without the technical hassles.