PromptCloud Inc, 16192 Coastal Highway, Lewes, DE 19958, USA

We are available 24/7. Contact: marketing@promptcloud.com

Web Scraping for Real Estate

Setting Expectations: Property Trends

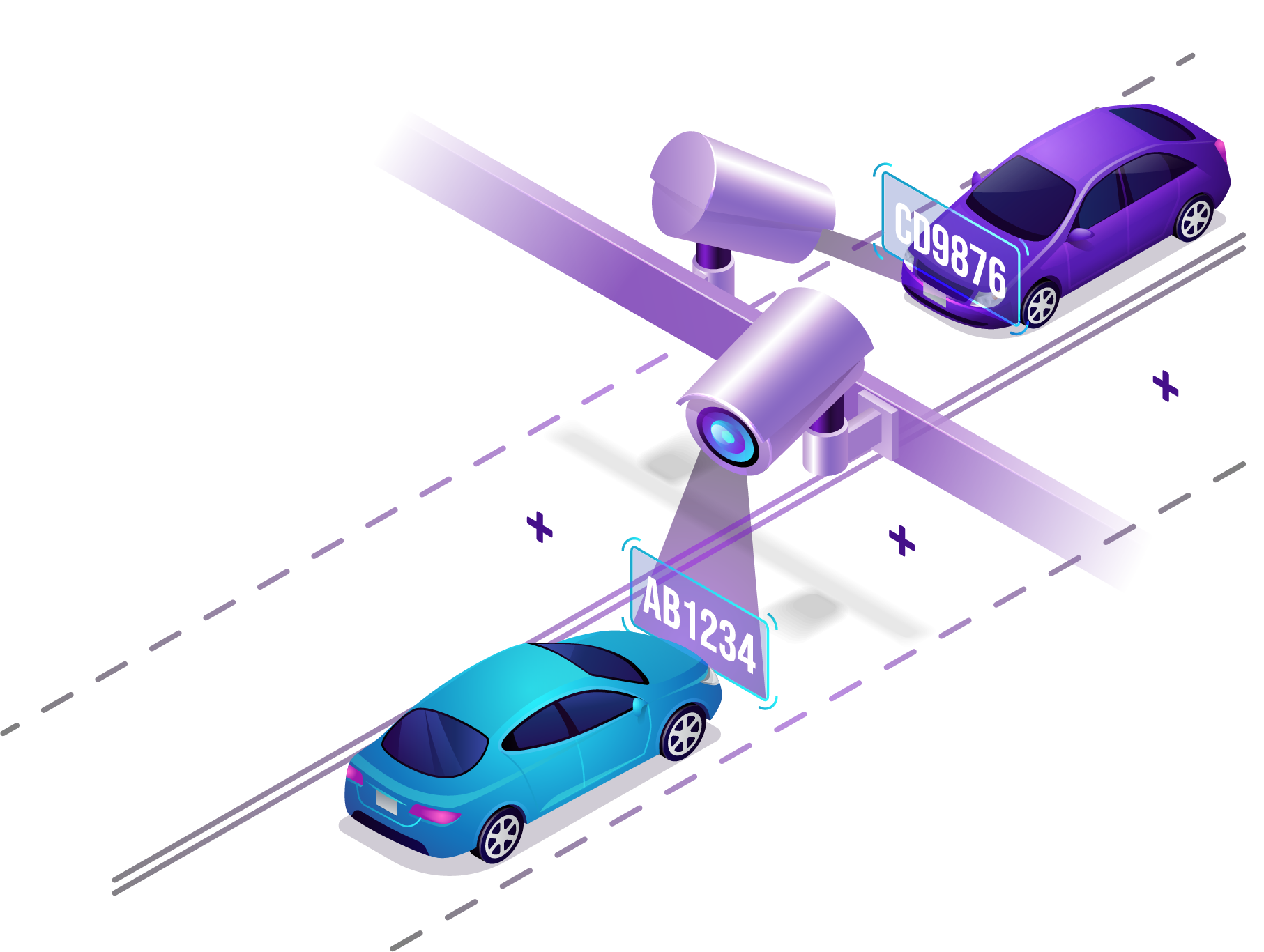

We provide a real estate data scraping service that extracts the details you need to analyze property trends. We collect property information, pricing, addresses, images, reviews, agent information, and seller profiles from classifieds, websites, and portals. Our real estate scraper gathers real-time intelligence at the frequency and in the format you require, powered by artificial intelligence, machine learning, and natural language processing techniques.

Over the past decade, we have worked with companies ranging from mid-size firms to the Fortune 500 on scraping real estate data, and we guarantee fast delivery, accuracy, and consistent web data quality. As the industry increases its reliance on data scraping solutions, we act as a catalyst, extracting publicly available primary data so that you can conduct property trend research in record time.

Implementing Real Estate Research Analytics

You can implement a property research data scraping project in a short time by following a few easy steps, and throughout you will see that our core focus is on data quality and speed of implementation.

We can fulfill your large-scale data scraping requirements, even on complex sites, in the shortest time possible and without any coding on your part. Our ready-to-use real estate scraping solutions are the result of our extensive experience building web crawlers for multiple clients across different verticals.

Use Cases of Real Estate Data Scraping

Demand Analysis

Collecting data from multiple real estate websites manually is a tedious task. Having crawled hundreds of real estate websites over the years, we can gather data on the most in-demand properties, locations, prices, and much more. Examine aggregated, high-dimensional data to understand demand fluctuations so that you can make informed business decisions.
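
As a rough illustration of what examining aggregated listing data can look like, here is a minimal Python sketch that groups listings by city and summarizes them. The records and the field names (`city`, `price`, `views`) are hypothetical assumptions made for the example, not the format of any actual data feed.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical scraped listings; the field names are illustrative assumptions.
listings = [
    {"city": "Austin", "price": 450000, "views": 1200},
    {"city": "Austin", "price": 520000, "views": 800},
    {"city": "Denver", "price": 610000, "views": 300},
]

def demand_by_city(records):
    """Group listings by city and summarize count, mean price, and total views."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec["city"]].append(rec)
    summary = {}
    for city, recs in groups.items():
        summary[city] = {
            "listings": len(recs),
            "mean_price": mean(r["price"] for r in recs),
            "total_views": sum(r["views"] for r in recs),
        }
    return summary

summary = demand_by_city(listings)
for city, stats in summary.items():
    print(city, stats)
```

Running the same summary on data from successive crawls is one simple way to see how demand shifts between locations over time.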

Property Price Comparison

We provide real-time commercial property data that you can rely on while managing listings and prices. With our real estate scraper, you get real-time property insights to fuel your pricing engine. Set the frequency for crawling pricing data (hourly, daily, or weekly) according to your needs.
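
To make the idea of feeding a pricing engine concrete, the sketch below compares one listing price against the median of comparable competitor prices. It is a minimal example under stated assumptions: the function name `price_gap` and the sample prices are hypothetical, and a real pricing engine would apply its own rules.

```python
from statistics import median

def price_gap(own_price, competitor_prices):
    """Return the relative gap between own_price and the median competitor price."""
    market = median(competitor_prices)
    return (own_price - market) / market

# Hypothetical prices for comparable properties collected in one crawl.
competitor_prices = [300000, 310000, 290000]
gap = price_gap(330000, competitor_prices)
print(f"Listing is priced {gap:.1%} relative to the market median")
```

A positive gap means the listing sits above the market median and a negative gap means it sits below. Recomputing the gap after each hourly, daily, or weekly crawl keeps the comparison current.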

Market Fluctuations

Scrape real estate data to understand market fluctuations and unlock profitable opportunities. Industry data scraping helps collect historical and current intelligence on properties, values, sales cycles, and much more. Once analyzed, this data supports predictions of how the market will perform in the near future. Simply list your real estate data sources, and we’ll get the data points you need.

Monitoring Competition

Acquire information on competitors, their offerings, and their pricing by scanning property data from multiple sites. With our data intelligence, identify the gaps in your offerings and devise strategies to stay ahead. Get property listing data from your targeted geolocations and better serve your customers with up-to-date deals. Swiftly accumulate real-time market data and react promptly to competitors’ actions.

Are you ready to move to the smarter way of acquiring ready-to-use data?

Click on ‘Get Started Now’ and walk us through your requirements. We will get you started immediately.

Top Case Studies and Use Cases from the Real Estate Industry

Scraping listings of real estate agents: Mid-scale companies

Extracting agents’ listings from multiple real estate portals to improve data strategy.

Scraping listings of real estate agents: Large-scale companies

Crawling popular US-based portals to improve business intelligence and service portfolio.

Scraping real estate listings across the country: India and US

Collecting real estate listings across the country on classifieds to connect B2B marketplaces.

Aggregating real estate listings using web scraping services

Collecting real estate listings from popular property listing sites by leveraging a web scraping solution.

Why Choose PromptCloud?

Dedicated Support

Fully Managed Service

Completely Customizable

Low Latency

Highly Scalable

No Upkeep