SEO techniques have evolved over time and backlinks and meta tags are no more the only things that help companies generate better organic traffic. Even though everyone knows that Google ranks websites based on a number of factors, using complex algorithms, all of the parameters that count in SEO rankings are not known. However, the need for structured data for SEO purposes is universally accepted and you will find a number of blogs on the web about this. This Google-page actually explains how Google tries to understand your webpage better using all the structured data that is available for it to parse. By having structured data on your website, you are leaving Google more clues to understand your website and rank it accordingly. In fact, Google has also presented examples of how your website data should look in the backend. With Google being the biggest Search Engine in the world (except Baidu in China), it is safe to say that it would be beneficial for all websites to follow this structured-data format for better visibility. And this is why websites are fast changing data formats on their pages, and this is going to benefit SEO rankings as well as web scrapers.

Structured data is beneficial for all. Even websites who want to scale up would benefit by having structured data since they would already be having a basic data layout to follow, and operations in their backend would be faster leading to lesser latency and superior customer experience.

The difference made by Structured Data

So till now, I have spoken about Structured data and how SEO practices are forcing websites to shift to it. But what is the difference between structured and unstructured data and why is it easier to parse structured data, be it for SEO ranking or web scraping?

Let’s explain this with an example. Say you are a website where people post reviews of restaurants. People rate restaurants and also post comments about the food they had in different restaurants on your website. So say you have a restaurant listed with the name- “Red Onion Restaurant”, and three people have rated the restaurant and whereas two have posted comments. Say you store this data in the form of a string-

“Red Onion Restaurant | Average Rating- 3.5 stars| 3 | “Good food, too crowded” | “Noodles was great.”

So you see that this is a single string or a single sentence that Google will be parsing for SEO, or one might be scraping for extracting data. You can understand that there could be variations in this string, depending on extra details such as location and price range for some restaurants. In such scenarios, extracting separate elements such as the average rating and the different comments might prove to be a headache, and require extra computations.

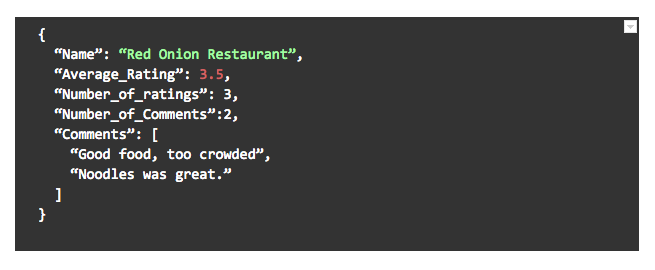

Now say the same website had been actually storing this data in a JSON object in this format-

Just imagine how easier it would have been for Google to understand the data and rank the website and how simple it would be for you to crawl the data. Even if there are extra fields for some restaurants (or if some of these fields are missing), it would be a simple check to pick up the available data. That’s how structured data is much easier to process, be it for a human eye, or a computer.

The growth of web scraping supported by structured data

Web scraping has been growing at an enormous rate since the time when people used to cut newspaper articles or copy paste from online blogs. Nowadays most of the web-scraping is done by automated or semi-automated intelligent bots who take care of most of the problems. In cases where it runs into an issue or where it has to be trained to crawl a new web-page, human intervention is required. If a web scraper is running on websites that have most of the data in a structured format, the chances of errors or the need for manual intervention is highly minimised and the speed at which the scraping bot can run is also faster.

Nothing in a website could help web scraping more than structured data. Often images and videos are present in websites, and even these might be inserted into random tags. Instead, having their links as a part of the data-JSON, and storing them elsewhere, would greatly help web-scrapers differentiate between different forms of data and crawl and store them separately and accordingly.

Structured Data can positively impact web scraping and information discovery

An important factor that people forget when talking about data is data-cleanliness. Data-cleanliness is very important since dirty data can reduce the value of data itself, or even render it useless. Un-structured data can lead to dirty data when it is being scraped, processed, or even transferred between websites or web-pages. Structured data reduces chances of dirty or duplicate data since by following a single format for new every data entry, mistakes and issues are flagged in the entry point of data itself.

Web scraping occurs in a very similar manner to how search engines parse your websites to rank you, and so it is no surprise that the interests of both are inter-related. However one has to understand the basic logic behind why structured data is preferred, no matter what the use-case. Code changes take place regularly and both the front end and back-end computations are prone to regular changes due to product-upgradations, new features, etc, but having a standard data format would go a long way to making lives easier for developers. When an API’s input and output format remains the same no matter what other changes happen on both the ends, it is much simpler for others to use it, since they know that regular code-breaks due to data-format changes won’t take place.

Web scraping, being one of the major beneficiaries of structured data, will garner further growth, as the same scraping bot can run at a much faster pace and give better accuracy rates when it is only parsing structured data as compared to when it is parsing mangled data.