Web Scraping: What It Actually Means in Real Systems

What This Guide Actually Helps You Decide

Web scraping is not just about extracting data. It is about maintaining a system that continues to deliver usable data as websites change.

This guide breaks down:

- the three approaches to web scraping (tools, frameworks, managed pipelines)

- the techniques that work on modern websites

- where scraping creates real business value

- where it fails due to data quality, scale, and maintenance

The core decision is not which tool to use. It is whether you are building a script or a system. That distinction determines whether your data pipeline holds over time.

Most explanations describe web scraping as extracting data from websites. That definition is incomplete and leads to bad decisions.

In reality, web scraping is not a one-time extraction task. It is a continuous data pipeline that must adapt to change while maintaining data reliability over time. A working scraper on day one does not mean you have a working solution. It only means the website structure hasn’t changed yet. A more accurate way to think about it:

Web scraping = continuous data collection + adaptation to change + data reliability

This shift in framing is critical because the effort is not in writing the script. The effort is in keeping the data usable over time.

Why Initial Success Is Misleading

A working scraper is often treated as a finished solution. In reality, it is only the starting point. As soon as the source changes, even slightly, the extraction logic begins to drift. The impact is not always obvious. Instead of breaking completely, the system produces incomplete or inconsistent outputs.

You may start noticing:

- certain fields missing without warning

- incorrect values being captured due to structural shifts

- duplicate records appearing across runs

These issues are difficult to detect without validation systems. Over time, they create a gap between what the system reports and what is actually happening on the source website. This is why many scraping pipelines appear stable on the surface while quietly losing reliability underneath.

The Hidden Layer Most Teams Ignore

Most discussions around web scraping focus on how to extract data. Very few address what happens after extraction. Raw scraped data is rarely usable in its initial form. It often requires:

- cleaning to remove inconsistencies

- normalization across multiple sources

- validation to ensure completeness and accuracy

Without these steps, the data cannot support analysis, reporting, or decision-making. This creates a situation where teams are technically collecting data but still relying on manual checks or assumptions to interpret it.
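As a rough illustration of those three steps, the sketch below cleans whitespace, normalizes a price string, and flags missing required fields on a single record. The field names (`title`, `price`, `url`), the `REQUIRED_FIELDS` schema, and the US-style price format are assumptions made for the example, not part of any particular pipeline.

```python
import re
from decimal import Decimal, InvalidOperation

# Hypothetical schema: the fields a downstream consumer is assumed to need.
REQUIRED_FIELDS = ("title", "price", "url")


def clean_text(value):
    """Collapse runs of whitespace and trim the ends."""
    return re.sub(r"\s+", " ", value or "").strip()


def normalize_price(raw):
    """Turn a string like '$1,299.00' into a Decimal; None if unparseable.

    Assumes US-style formatting (comma as thousands separator).
    """
    digits = re.sub(r"[^\d.]", "", raw or "")
    try:
        return Decimal(digits)
    except InvalidOperation:
        return None


def validate(record):
    """Return the names of required fields that are empty or missing."""
    return [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]


def process(raw):
    """Clean and normalize one raw record, then report validation problems."""
    record = {
        "title": clean_text(raw.get("title")),
        "price": normalize_price(raw.get("price")),
        "url": clean_text(raw.get("url")),
    }
    return record, validate(record)
```

Returning the list of problems alongside the record, rather than raising, lets a pipeline decide per source whether to drop, quarantine, or alert on incomplete rows.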

Web Scraping as Part of a Larger Data Workflow

Web scraping becomes significantly more valuable when it is treated as one component within a broader data workflow.

In most real-world use cases, the output of scraping feeds into:

- pricing and competitive intelligence systems

- market research dashboards

- lead generation pipelines

- AI models that depend on fresh external data

In these environments, the requirement is not just extraction. It is consistency. A system that delivers slightly less data but does so reliably over time is far more useful than one that captures everything inconsistently. This shift changes how web scraping should be evaluated. The question is no longer whether you can extract the data, but whether you can depend on it.

The Structural Decision Behind Every Scraping Project

At some point, every team working with web data faces a decision that shapes everything that follows. Are you building a quick extraction setup, or are you building a system that can sustain itself over time?

| Approach | Where It Works | Where It Starts to Struggle |
| --- | --- | --- |
| DIY scripts | Small datasets, stable pages | Frequent changes, scaling |
| Scraping tools | Medium complexity use cases | Custom logic, reliability |
| Managed pipelines | Ongoing, business-critical data | Requires upfront evaluation |

This is not just a technical choice. It affects maintenance effort, data quality, and how much engineering time gets spent fixing breakages instead of building new capabilities.

Key Insight

Web scraping is not defined by how you extract data. It is defined by how well your system continues to deliver accurate data as the source changes. Most failures happen not because the scraper was built incorrectly, but because it was not designed to adapt.

Most guides list tools. That doesn’t help you make a decision.

The real question is not which tool is best. It is which approach matches your data requirements over time.

Tools don’t fail immediately. They fail when:

- data volume increases

- websites become dynamic

- reliability starts to matter

The Three Categories That Define Your Choice

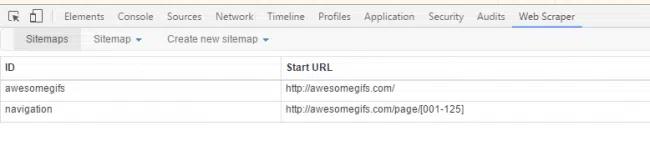

Web scraping tools fall into three practical categories. Each solves a different stage of the problem.

1. No-code / visual tools

Tools like Octoparse or ParseHub are built for quick setup. You point, click, and extract. They work well when:

- the website structure is simple

- data needs are limited

- usage is occasional

They start to struggle when logic becomes complex or when websites introduce dynamic rendering and blocking mechanisms.

2. Developer frameworks and libraries

Python libraries like BeautifulSoup, Scrapy, and Selenium give full control. They allow you to:

- handle complex page structures

- customize extraction logic

- integrate scraping into internal systems

But this control comes with responsibility. You now own:

- maintenance when sites change

- proxy management and anti-bot handling

- scheduling and monitoring

What starts as flexibility often turns into ongoing engineering overhead.

3. Managed web data services

This is where the model shifts from tools to outcomes.

Instead of building scrapers, you receive structured datasets. These systems handle:

- crawler infrastructure

- anti-bot mitigation

- schema consistency

- delivery via APIs or data feeds

This approach becomes relevant when scraping moves from experimentation to business-critical workflows.

PromptCloud operates in this category, delivering structured datasets from target websites through SLA-backed pipelines without scraper maintenance.

The Real Tradeoff Most Comparisons Ignore

Tool comparisons usually focus on features. That’s not where decisions are made. The real tradeoff is between:

- speed of setup

- level of control

- long-term reliability

You can optimize for one, sometimes two. Rarely all three.

- A no-code tool gives speed but limited control.

- A custom scraper gives control but low reliability without ongoing effort.

- A managed system gives reliability but requires upfront evaluation.

This is why teams often switch approaches as their use case matures.

When Tools Start Breaking Down

The limitations don’t appear at the beginning. They show up when requirements evolve. Common breaking points include:

- scraping multiple websites with different structures

- handling JavaScript-heavy or dynamically rendered pages

- maintaining consistent schemas across sources

- running high-frequency data collection without getting blocked

At this stage, the problem is no longer “how to scrape.” It becomes how to maintain data quality without constant intervention.

A Practical Way to Choose

Instead of starting with tools, start with your use case. If your requirement is:

- one-time extraction → use a simple tool

- recurring but low-scale → use scripts or libraries

- continuous, high-volume, business-critical → move to managed systems

Most teams make the mistake of starting with tools and then adapting their use case to fit those tools. That usually leads to rework.

Enterprise Web Scraping Solutions: What Actually Changes at Scale

When web scraping moves from experimentation to business dependency, the definition of success changes.

At a small scale, scraping is about whether data can be extracted. At an enterprise scale, it becomes about whether data can be delivered reliably, consistently, and in a format that downstream systems can trust.

This is where enterprise web scraping solutions differ fundamentally from tools and scripts.

Instead of focusing on extraction, they focus on outcomes:

- structured dataset delivery across multiple sources

- schema consistency even as websites change

- SLA-backed data delivery schedules

- data validation and quality monitoring layers

- infrastructure that handles anti-bot systems without manual intervention

This shift introduces a different operating model.

| Layer | Small-Scale Scraping | Enterprise Web Scraping Solutions |
| --- | --- | --- |
| Extraction | Script or tool-based | Managed pipelines |
| Maintenance | Manual fixes | Continuous adaptation systems |
| Data Quality | Assumed | Validated and monitored |
| Delivery | Files or exports | APIs, feeds, scheduled pipelines |
| Reliability | Variable | SLA-backed |

The key difference is not technical complexity. It is operational ownership. In small setups, teams own the scraper. In enterprise systems, teams own the outcome, while the underlying data pipeline is managed, monitored, and continuously adapted.

This is why enterprise web scraping solutions are not evaluated based on features. They are evaluated based on:

- data reliability over time

- total cost of ownership

- engineering effort required for maintenance

- ability to scale across multiple data sources

The decision is no longer about how to scrape a website. It is about how to ensure that web data remains usable as a system input.

Web Scraping Techniques: What Actually Works on Modern Websites

Most explanations of web scraping techniques are outdated. They list methods like HTML parsing or XPath without addressing how modern websites behave. Today, websites are not static documents. They are dynamic applications. Data is often loaded after the page renders, hidden behind APIs, or protected by anti-bot systems.

This changes how scraping actually works in practice.

HTML Parsing Still Works, But Only in Specific Cases

HTML parsing is the starting point for most scraping workflows. You fetch the page, parse the DOM, and extract elements using selectors. This works well when:

- the website serves complete data in the initial HTML response

- the structure is relatively stable

- there is no heavy client-side rendering

In these cases, tools like BeautifulSoup or lxml are efficient and reliable. The limitation is straightforward. If the data is not present in the HTML response, parsing alone cannot retrieve it.
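A minimal sketch of this parse-and-select flow follows. To stay dependency-free it swaps in Python's standard-library `html.parser` for BeautifulSoup or lxml, and it parses an inline `SAMPLE_HTML` string rather than a fetched page. The markup and the class names `product`, `name`, and `price` are invented for illustration.

```python
from html.parser import HTMLParser

# Invented markup standing in for a page that ships its data in the HTML.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">$9.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">$24.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collect the text of the name and price spans for each product item."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._field = None  # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self.products.append({})  # a new product row begins
        elif tag == "span" and self.products:
            for name in ("name", "price"):
                if name in classes:
                    self._field = name

    def handle_data(self, data):
        # Only keep text that sits inside a span we are tracking.
        if self._field:
            self.products[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


parser = ProductParser()
parser.feed(SAMPLE_HTML)
products = parser.products
```

Note how tightly the logic is bound to tag and class names: renaming `price` to something else on the page would leave the parser running but the field silently absent.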

JavaScript Rendering Changes the Game

Many modern websites load data dynamically using JavaScript. The HTML you receive initially is often incomplete. In these cases, you need to simulate a browser environment. This is where tools like Selenium or Puppeteer come in. They execute JavaScript, wait for the page to render, and then extract data from the fully loaded DOM.

This approach works, but it introduces tradeoffs:

- slower execution compared to direct requests

- higher infrastructure cost

- increased chances of being detected

It is effective, but not efficient at scale.

API-Based Extraction Is Often the Most Reliable Path

Behind many web interfaces, data is fetched through APIs. Instead of scraping the UI, you can intercept these requests and extract data directly from API responses, typically in JSON format. This approach offers clear advantages:

- structured data without parsing HTML

- faster response times

- fewer breakages from UI changes

However, APIs are not always accessible. They may require authentication, tokens, or have strict rate limits. Still, when available, API extraction is usually the most stable method.
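The sketch below shows the general shape of API-based extraction using only the standard library. The response layout (an `items` array with `id`, `name`, and `price` keys) is a made-up example, and `fetch_json` is defined but not called here, since any real endpoint, authentication scheme, and rate limit would be site-specific.

```python
import json
from urllib.request import Request, urlopen


def fetch_json(url, timeout=10):
    """Fetch a URL and decode its JSON body (not called in this sketch)."""
    request = Request(url, headers={"Accept": "application/json"})
    with urlopen(request, timeout=timeout) as response:
        return json.load(response)


def extract_items(payload):
    """Keep only the fields of interest from a decoded API response."""
    return [
        {"id": item["id"], "name": item["name"], "price": item.get("price")}
        for item in payload.get("items", [])
    ]


# Hypothetical response body, standing in for what an endpoint might return.
SAMPLE_RESPONSE = (
    '{"items": ['
    '{"id": 1, "name": "Widget", "price": 9.99, "internal": "x"}, '
    '{"id": 2, "name": "Gadget"}'
    "]}"
)

rows = extract_items(json.loads(SAMPLE_RESPONSE))
```

Because the data arrives already structured, there are no selectors to maintain; the remaining risk is the API's own schema changing, which `item.get("price")` tolerates for optional fields.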

Handling Pagination and Data Expansion

Real-world datasets rarely sit on a single page.

You often need to navigate:

- paginated listings

- infinite scroll interfaces

- dynamically loaded content batches

This requires building logic to:

- detect next-page patterns

- simulate scrolling behavior

- manage request sequences

The complexity here is not in extraction, but in coverage. Missing pages mean incomplete datasets.
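One way to express next-page logic is a generator that keeps following a "next" pointer until it runs out. In the sketch below, `fetch_page` is a hypothetical callable that returns a dict with `items` and `next` keys, and `FAKE_SITE` is an in-memory stand-in for a real paginated listing.

```python
def crawl_pages(fetch_page, start_url, max_pages=100):
    """Yield items from every page, following 'next' links until none remain."""
    url, seen = start_url, set()
    # Stop on a missing link, a repeated URL (loop guard), or the page cap.
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        page = fetch_page(url)
        yield from page["items"]
        url = page.get("next")


# Stand-in for a real site: three pages chained by "next" links.
FAKE_SITE = {
    "/list?page=1": {"items": ["a", "b"], "next": "/list?page=2"},
    "/list?page=2": {"items": ["c"], "next": "/list?page=3"},
    "/list?page=3": {"items": ["d", "e"], "next": None},
}

items = list(crawl_pages(FAKE_SITE.__getitem__, "/list?page=1"))
```

The `seen` set and `max_pages` cap are there for coverage safety: a listing that links back to itself or never ends should stop the crawl rather than spin forever.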

Anti-Bot Systems Are the Real Barrier

A significant portion of web traffic today is automated. According to Imperva, nearly 47% of all internet traffic comes from bots, with a large share actively involved in data extraction and monitoring activities. This is why websites continuously evolve detection systems, making access the hardest part of web scraping at scale.

The biggest technical challenge in web scraping is not parsing or rendering. It is access. Websites actively detect and block automated behavior using:

- IP rate limiting

- CAPTCHAs

- fingerprinting techniques

- behavioral analysis

To work around this, scraping systems use:

- proxy rotation

- request throttling

- header and session management

This turns scraping into an infrastructure problem, not just a coding task.
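Of the measures listed, request throttling is the simplest to sketch. The hypothetical `Throttle` class below enforces a minimum gap between requests to the same host; the injectable `clock` and `sleep` parameters are an assumption added so the behavior can be exercised without real waiting. Proxy rotation and session management are not covered here.

```python
import time


class Throttle:
    """Enforce a minimum delay between consecutive requests to the same host."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last_request = {}  # host -> time of the previous request

    def wait(self, host):
        """Block until `host` may be contacted again; return seconds waited."""
        now = self._clock()
        last = self._last_request.get(host)
        delay = 0.0
        if last is not None:
            delay = max(0.0, self.min_interval - (now - last))
        if delay:
            self._sleep(delay)
        # Record when this request is actually allowed to go out.
        self._last_request[host] = now + delay
        return delay
```

A caller would invoke `throttle.wait(host)` immediately before each request. Tracking hosts separately means a slow cadence for one site does not hold up collection from another.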

No Single Technique Is Enough

This is where most guides oversimplify. In real systems, scraping is not done using one technique. It is a combination:

- HTML parsing for simple pages

- browser automation for dynamic content

- API extraction where possible

- proxy and anti-bot handling for access

The effectiveness comes from how these techniques are combined, not which one is used.

Python Web Scraping: How It Works in Practice

Why Python Became the Default Choice

Python dominates web scraping because it removes friction at the start.

A developer can fetch a webpage, parse HTML, and extract data quickly. Libraries like BeautifulSoup, Scrapy, and Selenium simplify this process to the point where scraping looks easier than it actually is.

That early simplicity is what makes Python attractive. It is also what makes it misleading.

The Basic Workflow (and Why It Feels Easy)

At a surface level, Python scraping follows a clean flow. You request a webpage, parse the HTML, locate elements, and store the output in formats like CSV or JSON. For static pages and small datasets, this works reliably. This is why most teams get early wins. The setup is fast, the output looks correct, and the system appears stable. The issue is that this only holds true under controlled conditions.

Where Things Start Breaking

The moment scraping becomes a recurring workflow, the cracks start to show. Websites introduce JavaScript rendering, dynamic elements, and anti-bot protections. Even small layout changes can disrupt extraction logic. The script does not always fail outright. Instead, it degrades. You start seeing incomplete fields, inconsistent structures, or missing data across runs. The system continues operating, but the output becomes unreliable.

The Wrong Assumption Most Teams Make

When things break, teams often assume the problem is the code. In reality, the issue is structural. They are trying to use a script as a data pipeline, without the reliability, monitoring, and maintenance layers required to sustain it. A script is designed to run and finish. A system is designed to run continuously, adapt to change, and maintain consistency. Python alone does not solve for that.

What It Takes to Make Python Work at Scale

To make Python scraping reliable, you need layers beyond extraction. You need ways to:

- schedule and orchestrate runs

- handle failures and retries

- validate outputs across runs

- detect when data quality has changed

At this point, the complexity is no longer in writing scraping logic. It is in managing everything around it.
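As one small piece of that surrounding machinery, the sketch below wraps a task in retries with exponential backoff. The function name `with_retries`, its defaults, and the choice to retry on any exception are illustrative assumptions; a production setup would usually narrow the exception types and hand scheduling to an orchestrator.

```python
import time


def with_retries(task, attempts=4, base_delay=1.0, sleep=time.sleep):
    """Run task(); on failure wait base_delay * 2**n seconds and try again."""
    for attempt in range(attempts):
        try:
            return task()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** attempt)
```

Re-raising after the final attempt matters: a run that swallows its last failure looks healthy to a scheduler while delivering nothing.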

Where Python Fits (and Where It Doesn’t)

Python works best as an entry point. It is ideal for experimentation, small-scale data collection, and validating whether a dataset is useful. But as soon as the use case becomes business-critical, the challenge shifts. Reliability, consistency, and maintenance start to matter more than flexibility. This is where Python alone stops being sufficient.

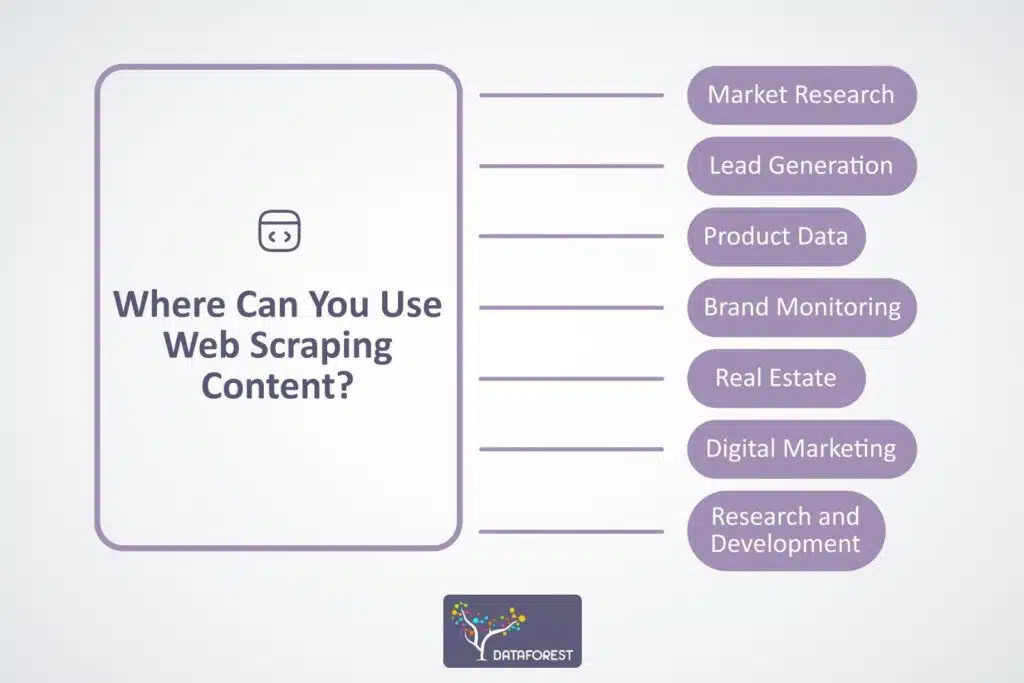

Web Scraping Use Cases: Where It Actually Drives Value

Web scraping is often positioned as a universal solution for data collection. In reality, its value is highly dependent on the use case. The difference between a successful scraping initiative and a wasted effort is not the tool or technique. It is whether the data directly feeds a decision-making system.

Source: Dataforest

High-Impact Use Cases Where Web Scraping Works

Web scraping delivers the most value when the data is:

- external (not available internally)

- fragmented across multiple sources

- changing frequently

- directly tied to business decisions

This is why it shows up in specific categories repeatedly.

Pricing and competitive intelligence

Companies track competitor pricing, discounts, and availability across marketplaces. This data feeds dynamic pricing models, promotion strategies, and revenue optimization systems.

Digital shelf monitoring

Brands track product rankings, reviews, and stock availability across ecommerce platforms. The value comes from identifying visibility gaps and reacting faster than competitors.

Market and trend analysis

Aggregating data across multiple websites allows teams to identify patterns that are not visible within a single source. This is commonly used in travel, real estate, and financial research.

Lead and company intelligence

Teams extract hiring signals, company data, or directory listings to build outbound pipelines. The effectiveness depends on data freshness and coverage.

AI and LLM data pipelines

Web scraping is increasingly used to feed external, real-time data into AI systems. Static datasets quickly become outdated, making continuous data collection essential.

Where Teams Overestimate the Value

Not every use case benefits from scraping. Web scraping becomes inefficient or unnecessary when:

- the data already exists internally

- APIs provide cleaner and more stable access

- the dataset does not change frequently

- the effort to maintain scraping exceeds the value of the data

A common mistake is using scraping as a default solution instead of evaluating whether it is the right one.

The Real Requirement Behind Every Use Case

Across all successful implementations, one pattern holds. The value does not come from collecting data. It comes from using that data consistently. If the data is:

- incomplete

- inconsistent

- delayed

then the use case breaks, regardless of how advanced the scraping setup is. This is why reliability matters more than extraction capability.

Matching Use Cases to the Right Approach

Different use cases require different levels of sophistication.

- A one-time research task can be handled with simple tools.

- A recurring dashboard might require scripts.

- A business-critical system needs a reliable pipeline.

Teams that align their approach with the importance of the use case avoid unnecessary complexity early, and avoid rework later.

Limitations of Web Scraping: Where It Breaks in Practice

Most guides position web scraping as a straightforward solution. In reality, its limitations define whether a project succeeds or becomes an ongoing drain on resources. The challenges do not appear at the start. They show up when scraping moves from experimentation to dependency.

Structural Changes Break Extraction Logic

Websites are not stable data sources. Even minor frontend updates can shift HTML structure, rename classes, or change element hierarchy. When this happens, extraction logic stops aligning with the page. The failure is rarely obvious. Scrapers may continue running while silently returning incomplete or incorrect data. Without validation, these issues go unnoticed until they impact downstream systems.

JavaScript-Heavy Websites Limit Access

Modern websites increasingly rely on client-side rendering. Data is loaded dynamically after the page initializes, which means it is not present in the raw HTML response.

This forces scrapers to use browser automation or reverse-engineer APIs.

Both approaches introduce tradeoffs. Browser automation is slower and resource-intensive. API access is often restricted or unstable. As a result, scraping dynamic websites becomes significantly more complex than static extraction.

Anti-Bot Systems Create Access Barriers

Websites actively detect and block automated traffic. This includes:

- rate limiting

- CAPTCHAs

- IP blocking

- behavioral fingerprinting

Bypassing these requires proxy rotation, request tuning, and session management. At scale, this becomes an infrastructure problem rather than a coding challenge. Teams often underestimate how much effort goes into simply maintaining access.

Data Quality Degrades Without Monitoring

Even when scraping works technically, the output can degrade over time. Common issues include:

- missing attributes

- inconsistent schemas

- duplicated records

- stale or delayed updates

The core issue is not extraction failure. It is the absence of systems that validate and enforce data quality continuously. Without these layers, scraped data cannot be trusted for analysis or decision-making.
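A continuous check of this kind can start very small. The sketch below computes per-field fill rates and a duplicate count for one run, then compares fill rates against the previous run to flag drops. The function names, the 10% `tolerance`, and the sample records are all assumptions chosen for illustration.

```python
from collections import Counter


def quality_report(records, required, key):
    """Summarise fill rate per required field and count duplicate keys."""
    total = len(records)
    filled = Counter()
    for record in records:
        for field in required:
            if record.get(field) not in (None, ""):
                filled[field] += 1
    # Count how often each non-empty key value appears in this run.
    keys = Counter(r[key] for r in records if r.get(key) not in (None, ""))
    return {
        "total": total,
        "fill_rate": {f: (filled[f] / total if total else 0.0) for f in required},
        "duplicates": sum(n - 1 for n in keys.values() if n > 1),
    }


def fill_rate_drops(previous, current, tolerance=0.10):
    """List fields whose fill rate fell by more than `tolerance` since the last run."""
    return [
        field
        for field, rate in current["fill_rate"].items()
        if previous["fill_rate"].get(field, 0.0) - rate > tolerance
    ]


# Invented sample runs: today's run has a duplicate and two missing prices.
yesterday = [{"sku": "A", "price": "9.99"}, {"sku": "B", "price": "5.00"}]
today = [
    {"sku": "A", "price": "9.99"},
    {"sku": "A", "price": "9.99"},
    {"sku": "B", "price": ""},
    {"sku": "C"},
]

previous_report = quality_report(yesterday, ["sku", "price"], "sku")
current_report = quality_report(today, ["sku", "price"], "sku")
alerts = fill_rate_drops(previous_report, current_report)
```

Comparing against the previous run, rather than a fixed threshold, catches the silent degradation case where a scraper keeps running but one field quietly stops being populated.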

Legal and Compliance Considerations

Web scraping operates within a complex legal landscape. Key factors include:

- website terms of service

- data ownership and copyright

- privacy regulations such as GDPR

Not all data is safe to collect or use. Organizations need clear governance around what is being scraped and how it is used.

When Web Scraping Stops Being Efficient

There is a point where scraping becomes more expensive to maintain than the value it generates. This usually happens when:

- multiple websites need to be monitored continuously

- data refresh frequency increases

- reliability becomes business-critical

- engineering teams spend more time fixing than building

At this stage, the problem is no longer about scraping capability. It is about operational efficiency.

When Web Scraping Makes Sense

Web scraping is one of the most powerful ways to access external data, but its value depends entirely on how it is implemented. At a small scale, it is an effective way to experiment. Teams can quickly validate ideas, collect sample datasets, and understand whether external data can improve their workflows. In these scenarios, simple tools or Python scripts are often sufficient.

As the importance of the data increases, the expectations change. It is no longer enough to extract data occasionally. The system needs to deliver consistent, structured, and reliable outputs over time. This is where most implementations start to struggle.

The decision is not whether to use web scraping. It is whether your approach can deliver structured, reliable data consistently as requirements scale.

| Scenario | Recommended Approach |
| --- | --- |
| One-time or small-scale data | Simple tools or scripts |
| Recurring but limited workflows | Custom scraping setup |
| Business-critical, high-frequency data | Managed data pipeline |

Web scraping delivers value when it is aligned with the importance of the use case. Used correctly, it becomes a strong data advantage. Used without structure, it becomes an ongoing maintenance burden.

The difference lies in how early you recognize that you are not just extracting data, but operating a system.

FAQs

1. What is the difference between web scraping and web crawling?

Web crawling focuses on discovering and navigating URLs across websites, while web scraping is concerned with extracting specific data from those pages. Crawling finds the data sources, scraping pulls the actual data. In most real-world systems, both are used together as part of a larger data pipeline.

2. How do you handle website blocks while scraping data?

Handling blocks typically involves managing request behavior rather than just changing code.

This includes rotating IP addresses, controlling request frequency, and maintaining session consistency. Advanced systems also mimic real user behavior patterns to reduce detection.

However, increasing access often increases complexity and cost, which is why many teams shift toward managed solutions at scale.

3. Can web scraping be used for real-time data collection?

Web scraping can support near real-time data collection, but it depends on how frequently the source updates and how often your system can safely request data.

High-frequency scraping increases the risk of blocks and infrastructure costs. For real-time use cases, systems often combine scraping with event triggers, APIs, or streaming pipelines.

4. How do you ensure data accuracy in web scraping?

Accuracy is not guaranteed by extraction alone. It requires validation layers. This includes schema checks, completeness monitoring, duplicate detection, and periodic audits of extracted data against source pages. Without these, data can degrade silently over time.

5. What are the alternatives to web scraping for data collection?

Web scraping is not always the best option. Alternatives include:

- official APIs provided by platforms

- data partnerships or licensed datasets

- public data feeds and open datasets

These methods are often more stable, but may have limitations in coverage, flexibility, or cost. Choosing between scraping and alternatives depends on availability, reliability requirements, and how critical the data is to your use case.