**TL;DR**

A Facebook scraper helps extract Marketplace data such as listings, prices, locations, seller trends, and product categories. But in 2026, building a simple script is not enough. Facebook’s dynamic content, login barriers, and anti-automation systems require smarter scraping strategies that balance technical execution with compliance and scale.

What is a Facebook Scraper?

Facebook Marketplace is no longer just a side feature inside a social network.

For automotive listings, rental properties, second-hand electronics, furniture, and even local grocery resale, it has become a real-time pricing marketplace.

And here’s what makes it interesting:

Much of that pricing and product data never appears on traditional ecommerce platforms.

It lives inside Marketplace listings.

If you are a retailer, reseller, auto dealer, rental aggregator, or pricing intelligence team, ignoring Marketplace means ignoring a large portion of local demand signals.

That is where a Facebook scraper becomes relevant.

A Facebook scraper is designed to extract structured data from Facebook pages, especially Marketplace listings. This includes:

- Product titles

- Asking prices

- Seller location

- Listing timestamps

- Category tags

- Description text

- Image references

However, scraping Facebook is fundamentally different from scraping a static website.

Marketplace content is:

- JavaScript-heavy

- Dynamically rendered

- Often login-protected

- Frequently updated

- Guarded by anti-bot mechanisms

Which means building a Facebook scraper in Python requires more than BeautifulSoup and a simple GET request.

In this guide, we will cover:

- What a Facebook scraper really does

- Technical architecture considerations

- Python-based approaches

- Limitations and detection risks

- Compliance boundaries

- When to build vs when to scale professionally

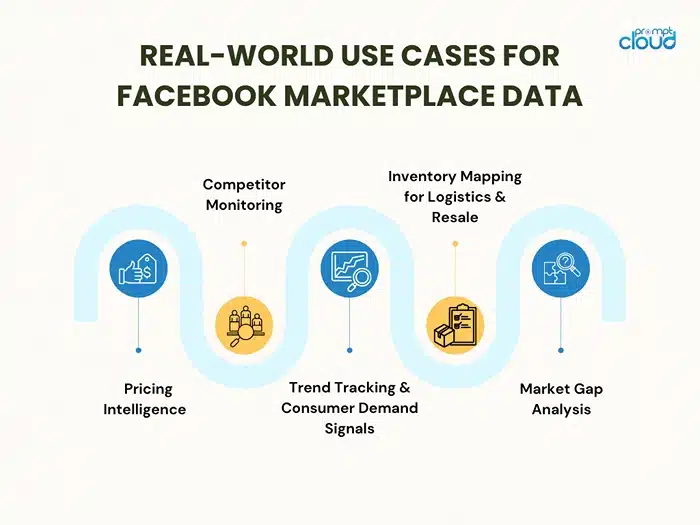

Why Facebook Marketplace Data Is Strategically Valuable in 2026

Before writing a single line of Python, you need to answer one question:

Why scrape Facebook Marketplace at all?

Because Marketplace is not just another classifieds site. It has become a high-frequency, hyperlocal, peer-driven pricing engine.

Unlike traditional ecommerce platforms:

- Listings are often informal

- Pricing is negotiable

- Inventory is fragmented

- Supply fluctuates hourly

- Demand signals are raw and unfiltered

That makes it messy.

But it also makes it powerful.

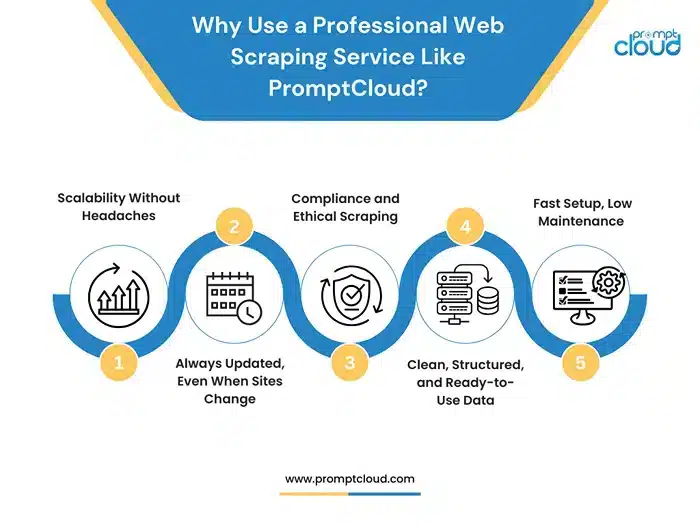

Your data collection shouldn’t stop at the browser. If your scrapers are hitting limits or you’re tired of rebuilding after every site change, PromptCloud can automate and scale it for you.

1. It Reflects True Local Market Pricing

Amazon shows listed prices. Retail sites show MSRP or controlled pricing.

Facebook Marketplace shows what people are actually willing to sell something for in your city, this week.

For example:

- Used iPhones priced differently in Mumbai vs Bangalore

- Rental furniture marked down heavily at month-end

- Used cars undercutting dealership listings

- Gym equipment spikes post-New Year

If you are building pricing intelligence models, Marketplace provides:

- Real asking price distribution

- Price elasticity signals

- Listing velocity trends

- Discount patterns over time

That data does not exist in structured retail APIs.
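To make "real asking price distribution" concrete, here is a minimal sketch that summarizes a set of already-scraped asking prices for one product in one city. The function name `price_summary` and the sample prices are illustrative assumptions, not real Marketplace data.

```python
from statistics import median, quantiles


def price_summary(prices):
    """Summarize asking prices for one product in one city."""
    if len(prices) < 2:
        raise ValueError("need at least two prices to compute quartiles")
    # quantiles(n=4) returns the three quartile cut points.
    q1, _, q3 = quantiles(prices, n=4)
    return {
        "count": len(prices),
        "min": min(prices),
        "q1": q1,
        "median": median(prices),
        "q3": q3,
        "max": max(prices),
    }


# Hypothetical asking prices (in rupees) for one phone model in one city.
sample = [18500, 19000, 20000, 20000, 21500, 23000, 26000]
summary = price_summary(sample)
```

Running the same summary per city and per week is one simple way to see the regional and seasonal gaps described above.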

2. It Captures Secondary Market Liquidity

Marketplace is where:

- Overstock gets offloaded

- Small retailers test inventory

- Individuals resell products

- Local arbitrage happens

For resale businesses, refurbishers, or liquidation buyers, this is supply discovery.

For example:

- Electronics refurbishers track bulk phone listings

- Used car aggregators monitor dealer spillover inventory

- Furniture rental startups track seasonal sell-offs

A properly designed Facebook scraper lets you:

- Monitor listing volume per category

- Track repeat sellers

- Detect bulk listing behavior

- Identify supply clusters geographically

That becomes procurement intelligence.
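As a rough illustration of the volume and bulk-listing signals above, the sketch below counts listings per category and location and flags titles that hint at bulk sales. The keyword list in `BULK_HINTS`, the record shape, and the sample rows are assumptions made for the example.

```python
from collections import Counter

# Hypothetical phrases that often signal a bulk or dealer listing.
BULK_HINTS = ("bulk", "wholesale", "lot of", "pieces", "units")


def volume_by_segment(listings):
    """Count listings per (category, location_text) pair."""
    return Counter((item["category"], item["location_text"]) for item in listings)


def looks_like_bulk(title):
    """Naive substring check; expect false positives on real data."""
    lowered = title.lower()
    return any(hint in lowered for hint in BULK_HINTS)


sample = [
    {"title": "Lot of 25 refurbished phones", "category": "electronics", "location_text": "Pune"},
    {"title": "Used phone, good condition", "category": "electronics", "location_text": "Pune"},
    {"title": "Sofa set", "category": "furniture", "location_text": "Mumbai"},
]
segments = volume_by_segment(sample)
bulk_titles = [item["title"] for item in sample if looks_like_bulk(item["title"])]
```

A keyword check like this is only a starting point. A real pipeline would likely combine it with listing frequency over time.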

3. It Surfaces Demand Before It Hits Retail

One underrated signal: what people are urgently trying to buy or sell.

If you scrape listing descriptions and categorize keywords, you can detect:

- Sudden demand for air purifiers during pollution spikes

- Generator listings during power outages

- Work-from-home furniture demand

- Seasonal product waves

Marketplace often reacts faster than structured retail platforms.

Because it is user-driven.

That makes it useful for:

- Inventory forecasting

- Regional demand modeling

- Dynamic pricing systems

- Expansion planning
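The keyword categorization step mentioned above can be sketched in a few lines. The tag names and phrases in `DEMAND_KEYWORDS` are hypothetical, and the matching is deliberately naive (whole phrases only, no stemming), so treat it as a starting point rather than a classifier.

```python
import re
from collections import Counter

# Hypothetical mapping from a demand tag to phrases that suggest it.
DEMAND_KEYWORDS = {
    "air_purifier": ["air purifier", "hepa"],
    "generator": ["generator", "inverter"],
    "wfh_furniture": ["office chair", "standing desk", "study table"],
}


def tag_description(text):
    """Return the set of demand tags whose phrases appear in the text."""
    lowered = text.lower()
    return {
        tag
        for tag, phrases in DEMAND_KEYWORDS.items()
        if any(re.search(r"\b" + re.escape(p) + r"\b", lowered) for p in phrases)
    }


def tag_counts(descriptions):
    """Count how many descriptions carry each tag."""
    counts = Counter()
    for description in descriptions:
        counts.update(tag_description(description))
    return counts


counts = tag_counts([
    "Selling HEPA air purifier, barely used",
    "Office chair and standing desk, moving out sale",
    "Diesel generator for sale",
])
```

Comparing these counts week over week, per region, is what turns raw descriptions into a demand signal.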

4. It Enables Competitor Monitoring for SMBs

Small businesses increasingly sell through Marketplace.

If you are:

- A used car dealer

- A local appliance store

- A regional electronics reseller

- A grocery reseller

Your competitors are already there.

A Facebook Marketplace scraper allows you to:

- Track competitor listing frequency

- Monitor pricing adjustments

- Detect undercutting

- Identify new entrants

This is especially critical in markets where traditional ecommerce penetration is low but Facebook adoption is high.

Data Modeling: What a Good Facebook Scraper Actually Outputs

Most people think scraping means:

Pull HTML → extract fields → dump into CSV.

That’s not useful long term.

A production-grade Facebook scraper must output normalized, analysis-ready data.

Here’s what structured output should ideally include:

| Field | Why It Matters |
| --- | --- |
| listing_id | De-duplication and tracking |
| title | NLP categorization |
| price_raw | Original text |
| price_numeric | Standardized currency value |
| currency | Multi-region normalization |
| category | Segmentation |
| location_text | Geo mapping |
| latitude/longitude (if derivable) | Heatmap analysis |
| timestamp_scraped | Freshness validation |
| listing_age | Velocity modeling |
| image_url | Visual classification |
| description_cleaned | Sentiment or feature extraction |

If you are building a serious data pipeline, this structure matters more than scraping speed.

Because downstream use cases depend on:

- Clean pricing normalization

- Consistent category mapping

- Deduplicated listings

- Timestamp accuracy

Without structured modeling, your scraper becomes noise.
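One way to pin down part of that structure is a typed record plus a price normalizer. The sketch below covers a subset of the fields from the table. The `Listing` class, `parse_price`, `build_listing`, and the symbol-to-currency mapping are assumptions for illustration. In particular, "$" is mapped to USD even though several currencies use it, and European formats such as "1.200,50" are not handled.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal
from typing import Optional

# Assumed mapping; "$" is ambiguous in practice (USD, CAD, AUD, ...).
CURRENCY_SYMBOLS = {"₹": "INR", "$": "USD", "£": "GBP", "€": "EUR"}


@dataclass(frozen=True)
class Listing:
    listing_id: str
    title: str
    price_raw: str
    price_numeric: Optional[Decimal]
    currency: Optional[str]
    category: str
    location_text: str
    timestamp_scraped: datetime


def parse_price(price_raw):
    """Return (amount, currency); amount is None when no number is present."""
    text = price_raw.strip()
    currency = next(
        (code for symbol, code in CURRENCY_SYMBOLS.items() if symbol in text), None
    )
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    if match is None:
        return None, currency  # e.g. "Free" or "Contact seller"
    return Decimal(match.group().replace(",", "")), currency


def build_listing(listing_id, title, price_raw, category, location_text):
    """Keep the raw price text and store the normalized value next to it."""
    amount, currency = parse_price(price_raw)
    return Listing(
        listing_id=listing_id,
        title=title.strip(),
        price_raw=price_raw,
        price_numeric=amount,
        currency=currency,
        category=category,
        location_text=location_text,
        timestamp_scraped=datetime.now(timezone.utc),
    )
```

Keeping `price_raw` alongside `price_numeric` means a parsing bug can be fixed later without scraping again.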

Technical Architecture of a Facebook Scraper in 2026

If you are thinking:

“I’ll just run Selenium on my laptop and pull listings.”

That works for 20 rows.

Not for 20,000.

In 2026, scraping Facebook Marketplace requires thinking like a systems engineer, not just a Python developer.

Let’s break down the actual architecture layers involved.

1. Browser Layer: You Are Not Scraping HTML — You Are Simulating a User

Facebook Marketplace is:

- Client-side rendered

- GraphQL-driven

- Session-authenticated

- Behavior-monitored

There is no clean static HTML response to parse.

So the scraper must operate in a real browser context.

Common stack:

- Playwright (recommended)

- Selenium (still widely used)

- Headful mode when necessary

- Chromium-based instances

Why headless alone is not enough:

Facebook fingerprinting systems detect:

- Headless browser flags

- Missing GPU signatures

- Suspicious navigator properties

- Predictable automation behavior

So modern scrapers must:

- Modify browser fingerprints

- Randomize interaction timing

- Simulate scroll velocity

- Load assets like a real user

If your scraper loads 200 listings in 3 seconds, you’re flagged.

Speed is the enemy of stealth.

2. Session & Authentication Layer

Marketplace content is gated behind login.

That means:

- Session cookies

- CSRF tokens

- Device trust signals

- IP consistency checks

A serious Facebook scraper must manage:

- Session persistence

- Cookie refresh cycles

- Token expiration

- Reauthentication workflows

If your login flow breaks, the scraper stops entirely.

And in 2026, Facebook frequently forces:

- Security challenges

- Suspicious login alerts

- 2FA triggers

- Checkpoint verifications

This makes unattended long-term scraping fragile without automation infrastructure.

3. Proxy & Network Layer

One IP scraping hundreds of listings repeatedly?

Blocked.

Modern scraping stacks require:

- Rotating residential proxies

- Geo-targeted IP pools

- Session-bound IP mapping

- Rate-limited request flows

Residential proxies matter because:

Facebook can detect:

- Datacenter IP ranges

- Suspicious ASN patterns

- Known proxy providers

And once an IP is flagged, that session is compromised.

But proxies introduce complexity:

- Latency increases

- Connection stability varies

- Costs scale quickly

This is where DIY projects start becoming operational problems.
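Of the items in this layer, the rate-limiting piece is the easiest to sketch without any external service. Below is a minimal throttle that enforces a minimum gap between requests. The `Throttle` class is an illustrative assumption, and the injectable `clock` and `sleep` parameters exist only so the logic can be checked without real waiting.

```python
import time


class Throttle:
    """Enforce a minimum interval (in seconds) between consecutive calls."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous call, if any

    def wait(self):
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now
```

Calling `throttle.wait()` before each page load keeps the request rate bounded regardless of how fast the rest of the code runs.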

4. Anti-Detection & Behavioral Modeling

Facebook does not rely only on rate limiting.

It uses behavioral analysis.

That means your scraper must avoid:

- Perfectly timed scrolls

- Uniform click intervals

- Zero mouse movement

- Linear navigation patterns

Human behavior is messy.

Automation must imitate that messiness.

Techniques include:

- Randomized scroll depth

- Non-linear cursor movement

- Variable idle times

- Occasional pause-and-hover behavior

Without behavioral simulation, detection risk rises sharply.

5. Data Extraction Strategy: DOM vs Network Interception

There are two core approaches:

A. DOM Parsing (UI-based scraping)

You let the page render fully, then extract elements.

Pros:

- Easier to implement

- Matches visible data

Cons:

- Slower

- DOM changes break selectors

- Heavier browser load

B. Network Interception (GraphQL capture)

Marketplace listings are fetched via background API calls (often GraphQL).

If you intercept those responses:

- You get structured JSON

- You bypass fragile DOM parsing

- Extraction becomes cleaner

But:

- Endpoints change

- Tokens expire

- Requests are signed and validated

- Reverse engineering required

In 2026, serious scrapers favor network-level interception over DOM scraping.

Because structured responses are more stable than UI markup.
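To show why structured JSON is easier to work with, here is a sketch that flattens a captured response body into listing records. The payload shape (`data.feed.edges[].node`) and its field names are invented for the example and are not Facebook's actual schema. Capturing the response from a browser session is out of scope here.

```python
import json


def extract_listings(payload_text):
    """Flatten a captured JSON payload into a list of listing dicts."""
    payload = json.loads(payload_text)
    # Hypothetical nesting; a real payload will differ and will change over time.
    feed = (payload.get("data") or {}).get("feed") or {}
    records = []
    for edge in feed.get("edges", []):
        node = edge.get("node") or {}
        if "id" not in node:
            continue  # skip ads, placeholders, or malformed entries
        records.append({
            "listing_id": str(node["id"]),
            "title": node.get("title", ""),
            "price_raw": node.get("price_text", ""),
            "location_text": node.get("location", ""),
        })
    return records


captured = json.dumps({
    "data": {"feed": {"edges": [
        {"node": {"id": 101, "title": "Used bike", "price_text": "₹4,500", "location": "Pune"}},
        {"node": {"title": "entry without an id"}},
    ]}}
})
records = extract_listings(captured)
```

Defensive lookups with `.get()` matter here because a renamed or missing key should produce an empty result you can alert on, not a crash.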

6. Storage & Pipeline Layer

Scraping is only step one.

You need:

- Deduplication logic

- Version tracking

- Historical price tracking

- Change detection

- Schema normalization

For example:

If a listing price drops from ₹20,000 to ₹18,500:

Do you overwrite?

Or do you track delta?

A professional pipeline stores:

- listing_id

- scrape_timestamp

- price_snapshot

This enables:

- Price trend charts

- Velocity scoring

- Inventory turnover modeling

Without historical tracking, you lose most of the analytical value.
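The "track delta" option can be sketched with an append-only snapshot table. The example uses SQLite from the standard library. The helper names and the ISO 8601 text timestamps are assumptions; the three columns are the ones listed above.

```python
import sqlite3


def open_store(path=":memory:"):
    """Create the snapshot table if it does not exist yet."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS price_snapshot (
               listing_id TEXT NOT NULL,
               scrape_timestamp TEXT NOT NULL,
               price_snapshot REAL NOT NULL,
               PRIMARY KEY (listing_id, scrape_timestamp)
           )"""
    )
    return conn


def record_price(conn, listing_id, scrape_timestamp, price):
    """Append a snapshot and return the change versus the previous one."""
    previous = conn.execute(
        "SELECT price_snapshot FROM price_snapshot "
        "WHERE listing_id = ? ORDER BY scrape_timestamp DESC LIMIT 1",
        (listing_id,),
    ).fetchone()
    conn.execute(
        "INSERT INTO price_snapshot VALUES (?, ?, ?)",
        (listing_id, scrape_timestamp, price),
    )
    conn.commit()
    # None means this is the first time the listing has been seen.
    return None if previous is None else price - previous[0]


conn = open_store()
first = record_price(conn, "abc", "2026-01-10T08:00:00Z", 20000)
delta = record_price(conn, "abc", "2026-01-11T08:00:00Z", 18500)
```

Because rows are never overwritten, the full price history of each listing stays available for trend and velocity analysis.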

7. Monitoring & Failure Recovery

Production scrapers fail.

Constantly.

Reasons:

- DOM structure changes

- Login invalidation

- Proxy exhaustion

- Rate-limit detection

- Captcha injection

- Geo-blocking

So a robust Facebook scraper requires:

- Logging

- Health monitoring

- Auto-restart logic

- Alerting

- Selector fallbacks

- Retry policies

If you are not monitoring scraper health, you are operating blind.
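Two of those requirements, logging and retry policies, fit in one small helper. This is a minimal sketch with exponential backoff. The function name, the default attempt count, and the logger name are assumptions, and `sleep` is injectable so the behavior can be tested without waiting.

```python
import logging
import time

logger = logging.getLogger("scraper.health")  # hypothetical logger name


def run_with_retries(task, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Run task(); on failure, log and retry with doubling delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts:
                logger.error("giving up after %d attempts: %s", attempt, exc)
                raise
            delay = base_delay * 2 ** (attempt - 1)  # 1s, 2s, 4s, ...
            logger.warning(
                "attempt %d failed (%s); retrying in %.1fs", attempt, exc, delay
            )
            sleep(delay)
```

The final failure is re-raised instead of swallowed, so an alerting layer above this helper can see it.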

Why Most DIY Facebook Scrapers Fail at Scale

Let’s be blunt.

The Python script you build this weekend:

- Will work initially

- Will break silently

- Will get flagged

- Will require maintenance

- Will not scale cleanly

The failure modes usually look like this:

- DOM selector changes → empty dataset

- IP flagged → login locked

- Session invalidated → scraper loops

- Captcha triggered → manual intervention required

- Price formatting changes → parsing errors

And suddenly your “simple scraper” becomes a full-time maintenance job. That’s why building is educational. But scaling is infrastructural.

Anti-Bot Detection Systems Facebook Uses in 2026

If you’re building a Facebook scraper, you’re not just writing code.

You’re playing against a risk engine.

Facebook’s anti-automation systems in 2026 are far more advanced than simple rate limiting. They combine behavioral modeling, network fingerprinting, browser telemetry, and account-level risk scoring.

Understanding this is critical before you write a single line of scraping logic.

1. Behavioral Fingerprinting

Facebook tracks how humans behave.

Not just what they request.

It monitors:

- Scroll velocity curves

- Mouse movement randomness

- Typing cadence

- Pause intervals

- Click heatmaps

- Navigation order

A human does not scroll in perfectly consistent 5000px increments every 2 seconds.

A bot often does.

If your scraper scrolls with mathematical precision, that’s a signal. If it loads 100 listings and never moves the mouse, that’s a signal. If it never hesitates before clicking, that’s a signal. Detection today is pattern-based, not just threshold-based. Which means even slow scraping can be flagged if the pattern looks synthetic.

2. Browser Fingerprinting

Facebook inspects your browser environment deeply.

Signals include:

- WebGL fingerprints

- Canvas fingerprint hash

- AudioContext fingerprint

- Installed fonts

- Screen resolution

- GPU metadata

- Navigator properties

- Headless browser flags

Even if you rotate IP addresses, if your browser fingerprint remains identical across sessions, you are traceable.

In 2026, serious scraping stacks randomize:

- User agents

- Browser contexts

- Fingerprint signatures

- Session storage states

But this adds complexity.

It’s no longer just about running Playwright.

It’s about running modified browser builds or stealth layers.

3. IP & Network Intelligence

Facebook’s systems classify IP traffic.

They can detect:

- Known datacenter ranges

- Suspicious ASN patterns

- Traffic velocity anomalies

- Multiple account logins from one subnet

Residential proxies reduce risk.

But even residential IPs can be flagged if behavior is abnormal.

Example:

If one IP views 1,000 listings in 10 minutes, even with human-like scroll patterns, the anomaly is visible at the network level.

Risk scoring is cumulative.

The more unusual signals you generate, the higher your risk score climbs.

4. Session & Account Risk Scoring

Facebook effectively treats every account as having a trust score.

New account scraping aggressively?

High risk.

Long-established account browsing casually?

Low risk.

But scraping activity increases account risk over time.

Facebook can trigger:

- Security checkpoints

- Forced password resets

- Suspicious login verifications

- Temporary Marketplace blocks

- Full account suspension

This is why production-level Facebook scraper implementations often separate:

- Account pools

- Usage patterns

- Regional targeting

But again, this moves you from coding into operations management.

5. GraphQL Signature Validation

Marketplace listings are fetched via internal API calls.

In 2026, many of these:

- Require signed parameters

- Include dynamic tokens

- Validate request headers

- Expire frequently

Reverse engineering these calls is possible.

Maintaining them is harder.

Facebook rotates:

- Query hashes

- Request signatures

- Header expectations

Which means network-level scraping must constantly adapt.

6. Silent Shadow Blocking

The scariest detection method?

Silent degradation.

Instead of blocking you, Facebook may:

- Show partial results

- Reduce listing counts

- Hide newer posts

- Serve cached data

Your scraper still runs.

But your data becomes incomplete.

Without monitoring and validation checks, you won’t even know.

This is why production scrapers validate:

- Expected listing counts

- Freshness timestamps

- Category volume consistency

If your scrape volume suddenly drops 40%, that’s a signal.
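A volume check like that can be as small as the sketch below, which compares the current run against the average of recent runs. The function name and the sample counts are illustrative, and the 0.4 default simply mirrors the 40% figure above.

```python
from statistics import mean


def volume_drop_alert(history, current, threshold=0.4):
    """Return True when current volume fell by at least `threshold`
    (as a fraction) relative to the mean of previous runs."""
    if not history:
        return False  # no baseline yet
    baseline = mean(history)
    if baseline <= 0:
        return False
    drop = (baseline - current) / baseline
    return drop >= threshold


# Hypothetical listing counts from the last three runs of one category.
recent_runs = [1000, 980, 1020]
alert = volume_drop_alert(recent_runs, current=600)
```

The same pattern applies to freshness: track the newest listing timestamp per run and alert when it stops advancing.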

Why This Matters Before You Build

A Facebook scraper in 2026 is not about:

“Can I extract the HTML?”

It’s about:

“Can I extract consistently, repeatedly, at scale, without detection, and without degrading data quality?”

That’s an entirely different engineering problem.

For:

- Personal experiments

- Academic prototypes

- Short-term data pulls

A DIY solution is fine.

For:

- Competitive pricing intelligence

- Regional demand tracking

- Automotive inventory analysis

- Rental market monitoring

- Resale analytics

You need infrastructure, not scripts.

The Real Cost of Building Your Own Facebook Scraper

By now, you understand something important:

Building a Facebook scraper in Python is technically possible.

The real question is whether it is operationally sustainable.

Most teams underestimate the hidden cost of scraping Facebook Marketplace at scale. Not the code cost. The maintenance cost.

Let’s break this down realistically.

1. Engineering Time Is Not a One-Time Expense

A basic prototype might take:

- 1 to 2 weeks to stabilize login flows

- 1 week to refine selectors

- 1 week to handle scrolling and pagination

- 1 week to normalize and store data

That’s already four to five weeks of engineering bandwidth.

But the real cost starts after deployment.

You will need ongoing work for:

- DOM changes

- Session expiration handling

- Anti-bot bypass updates

- Proxy rotation management

- Data validation and monitoring

- Freshness checks

- Error recovery systems

If Facebook changes something every 3 to 6 weeks, your team must react every 3 to 6 weeks.

That means scraping becomes a recurring engineering commitment, not a one-time build.

2. Data Quality Becomes the Bigger Risk

Even if your script runs successfully, that does not mean your data is correct.

Marketplace scraping introduces subtle issues:

- Duplicate listings

- Relisted items with new timestamps

- Price changes not reflected in previous scrape

- Missing categories

- Inconsistent location formats

- Truncated descriptions

Without normalization pipelines, your analytics may become unreliable.

And pricing intelligence built on inconsistent data leads to flawed business decisions.

Scraping is not just about extraction.

It is about validation, structuring, and quality assurance.
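For the duplicate and relisting issues above, one common approach is a content fingerprint that ignores the listing ID. The sketch below hashes a normalized title, price, and location. The choice of those three fields is an assumption, and a relisted item with a changed price will deliberately count as a new entry.

```python
import hashlib
import re


def content_fingerprint(title, price_numeric, location_text):
    """Hash normalized content so relisted copies map to the same key."""
    normalized = "|".join([
        re.sub(r"\s+", " ", title).strip().lower(),
        f"{float(price_numeric):.2f}",
        re.sub(r"\s+", " ", location_text).strip().lower(),
    ])
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def deduplicate(listings):
    """Keep the first listing seen for each content fingerprint."""
    seen = {}
    for item in listings:
        key = content_fingerprint(
            item["title"], item["price_numeric"], item["location_text"]
        )
        seen.setdefault(key, item)
    return list(seen.values())


sample = [
    {"listing_id": "1", "title": "Wooden  Study Table", "price_numeric": 3500, "location_text": "Pune"},
    {"listing_id": "2", "title": "wooden study table ", "price_numeric": 3500.0, "location_text": " pune"},
    {"listing_id": "3", "title": "Wooden Study Table", "price_numeric": 3000, "location_text": "Pune"},
]
unique = deduplicate(sample)
```

In the sample, listings 1 and 2 collapse into one record, while listing 3 survives because its price differs.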

3. Legal and Compliance Pressure Is Growing

In 2026, data regulation environments are tightening globally.

Scraping platforms like Facebook requires careful boundaries:

- Avoiding personally identifiable information

- Respecting usage terms

- Avoiding account abuse

- Ensuring commercial data usage compliance

Even if you scrape only listing-level data, your organization must consider:

- Storage policies

- Access controls

- Retention timelines

- Data processing transparency

If you are building internal dashboards for pricing analysis, risk may be manageable.

If you are commercializing Marketplace-derived insights, scrutiny increases.

That distinction matters.
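On the "avoiding personally identifiable information" boundary, a field allowlist plus basic redaction is a reasonable first line of defense. The sketch below is an assumption-heavy example, not legal guidance: the allowlist reuses the listing-level field names from the data model earlier in this guide, and the phone pattern deliberately over-matches (it will also redact some dates and long numbers).

```python
import re

# Listing-level fields only; anything else (seller names, profile links) is dropped.
ALLOWED_FIELDS = {
    "listing_id", "title", "price_raw", "price_numeric", "currency",
    "category", "location_text", "timestamp_scraped", "image_url",
    "description_cleaned",
}

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Conservative: any run of 9+ digit-like characters counts as a phone number.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub_record(record):
    """Drop non-allowlisted fields and redact contact details in free text."""
    clean = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    description = clean.get("description_cleaned")
    if isinstance(description, str):
        description = EMAIL_RE.sub("[redacted-email]", description)
        description = PHONE_RE.sub("[redacted-phone]", description)
        clean["description_cleaned"] = description
    return clean


raw = {
    "listing_id": "42",
    "title": "Office chair",
    "seller_name": "Example Person",
    "description_cleaned": "Call +91 98765 43210 or mail a.b@example.com",
}
clean = scrub_record(raw)
```

Running the scrub before storage, not after, keeps personal data out of backups and logs as well.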

4. Scaling Changes Everything

Scraping 500 listings per week is manageable.

Scraping:

- 50,000 listings per day

- Across 20 cities

- In 5 categories

- With hourly refresh

Is infrastructure.

Now you need:

- Browser orchestration

- Queue management

- Distributed workers

- Proxy pools

- Retry logic

- Monitoring dashboards

- Alerting systems

- Structured storage

At that point, you are not building a Facebook scraper.

You are building a data platform.
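The numbers above translate into a concrete workload. As a back-of-the-envelope sketch, the code below enumerates one job per city, category, and hour and puts them on an in-process queue. The city and category names are placeholders, and a real deployment would use a distributed queue instead of `queue.Queue`.

```python
from itertools import product
from queue import Queue


def build_jobs(cities, categories, hours=range(24)):
    """Create one scrape job per (city, category, hour) combination."""
    jobs = Queue()
    for city, category, hour in product(cities, categories, hours):
        jobs.put({"city": city, "category": category, "hour": hour})
    return jobs


cities = [f"city_{i}" for i in range(20)]          # placeholder names
categories = [f"category_{i}" for i in range(5)]   # placeholder names
jobs = build_jobs(cities, categories)

jobs_per_day = jobs.qsize()              # 20 * 5 * 24
listings_per_job = 50_000 / jobs_per_day
```

That works out to 2,400 jobs per day at roughly 21 listings each, every one of which can fail independently. This is why queueing, retries, and monitoring stop being optional at this scale.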

When a DIY Facebook Scraper Makes Sense

Let’s be clear.

There are legitimate cases where building your own Facebook scraper is the right move.

It makes sense when:

- You are validating a business idea

- You need a short-term dataset

- You are conducting academic research

- You want to test pricing signals in one region

- You have in-house scraping expertise

- You are comfortable maintaining it

In those cases, Python with Playwright or Selenium works.

You can extract titles, prices, locations, and timestamps effectively.

For experimental work, it is powerful.

When It’s Smarter to Think Long-Term

But if your objective is:

- Continuous pricing intelligence

- Automotive inventory tracking

- Resale supply chain optimization

- Local commerce analytics

- Competitive monitoring across regions

- Data integration into BI systems

Then reliability becomes more important than raw extraction.

And reliability requires:

- Managed infrastructure

- Continuous monitoring

- Structured data pipelines

- Compliance-aware workflows

- Scalable architecture

That is where managed web scraping services become less about convenience and more about strategic stability.

Facebook Scraper in 2026: It’s Not About Code. It’s About Strategy.

The idea of building a Facebook scraper in Python sounds technical.

But in 2026, it is fundamentally strategic.

Because Marketplace is not just a webpage.

It is a live signal engine for:

- Hyperlocal pricing

- Secondary market liquidity

- Product turnover velocity

- Regional supply-demand imbalance

- Informal retail competition

And the organizations that harness that signal properly gain an advantage that traditional ecommerce data cannot provide.

However, scraping Facebook is no longer a hobby-level task.

It requires:

- Intelligent browser automation

- Anti-detection awareness

- Data modeling discipline

- Legal sensitivity

- Infrastructure maturity

The smart question is no longer: “Can we scrape Facebook?” The smarter question is: “Can we operationalize Facebook Marketplace data reliably and responsibly?” That is the difference between building a script and building intelligence. And that is where this discussion truly begins to matter.

Explore More

If you’re exploring structured marketplace intelligence or industry-specific scraping use cases, these resources provide deeper context:

- Automotive marketplace intelligence use case.

- Grocery pricing and inventory data scraping.

- Custom web scraping services for complex platforms.

- Using big data for hospitality demand forecasting.

For a technical understanding of the browser automation and headless scraping frameworks often used to build a Facebook scraper in Python, refer to the official Playwright documentation. It provides foundational knowledge for handling dynamic, JavaScript-heavy websites similar to Facebook Marketplace.

FAQs

Is it legal to build a Facebook scraper for Marketplace data?

Scraping Facebook Marketplace operates in a gray area. While listing-level data may be publicly visible, Facebook’s Terms of Service restrict automated access. Businesses should avoid collecting personal data and ensure compliance with regional data protection laws before using scraped data commercially.

Can I scrape Facebook Marketplace without logging in?

In most cases, no. Facebook restricts Marketplace visibility for logged-out users. A working Facebook scraper typically requires authenticated sessions, which introduces additional complexity such as cookie handling, session persistence, and bot detection mitigation.

What data can a Facebook Marketplace scraper safely extract?

A compliant Facebook scraper should focus only on listing-level fields such as product titles, prices, categories, approximate locations, timestamps, and image URLs. Personal data such as names, phone numbers, or profile links should be avoided to reduce legal and compliance risks.

Why does Facebook Marketplace scraping break so often?

Facebook frequently updates its front-end structure, runs A/B tests, and rotates dynamic class names. Since there are no stable APIs for Marketplace, scraping scripts relying on DOM selectors are prone to failure after layout updates or anti-automation adjustments.

When should businesses use a managed scraping service instead of building in-house?

If the goal is continuous monitoring across multiple cities, categories, and time intervals, maintaining a DIY scraper becomes resource-intensive. In such cases, using a professional data provider ensures scalability, monitoring, and structured output without constant engineering maintenance.