In Artificial Intelligence (AI) and Machine Learning (ML), the availability and quality of training data largely determine what a model can learn. Web scraping has become an increasingly popular way to source the large and varied datasets that effective model training requires. This guide walks through the process of using web-scraped data to train AI models, with attention to both technical effectiveness and ethical practice.

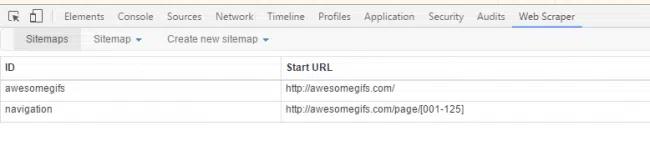

The Art of Web Scraping

At its core, web scraping is the practice of programmatically extracting data from websites. This information can range from simple text to complex datasets including images, prices, and operational data. The key to effective web scraping lies in using specialized tools and scripts that automate the data extraction process, thereby enabling the collection of substantial amounts of data efficiently.
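
To make the idea concrete, here is a minimal sketch of programmatic extraction. It uses Python's built-in `html.parser` so it runs without extra dependencies. The sample HTML, the `product`, `name`, and `price` class names, and the `ProductParser` class are all made up for illustration; a real page would need its own selectors.

```python
from html.parser import HTMLParser

# Hypothetical page content; in practice this would be the body of an HTTP response.
SAMPLE_HTML = """
<html><body>
  <div class="product"><span class="name">Kettle</span><span class="price">$24.99</span></div>
  <div class="product"><span class="name">Toaster</span><span class="price">$39.50</span></div>
</body></html>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span class="name"> and <span class="price"> elements."""

    def __init__(self):
        super().__init__()
        self.records = []    # one dict per product block
        self._field = None   # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "div" and cls == "product":
            self.records.append({})
        elif tag == "span" and cls in ("name", "price") and self.records:
            self._field = cls

    def handle_data(self, data):
        if self._field:
            self.records[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def extract_products(html):
    """Parse an HTML string and return a list of product dicts."""
    parser = ProductParser()
    parser.feed(html)
    return parser.records


products = extract_products(SAMPLE_HTML)
print(products)
# [{'name': 'Kettle', 'price': '$24.99'}, {'name': 'Toaster', 'price': '$39.50'}]
```

The same pattern scales from one page to many: fetch the page, parse it, and keep only the fields you need.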

Image Source: Bridging Points Media

Navigating Legal and Ethical Boundaries

A critical aspect of web scraping is navigating the legal and ethical boundaries associated with data collection. It is imperative to respect website terms of service, copyright laws, and data privacy regulations. In certain jurisdictions, unauthorized data extraction can lead to legal challenges, making it essential to approach web scraping with a thorough understanding of these constraints.
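
One small, automatable part of this is honoring a site's `robots.txt`. The sketch below uses Python's standard `urllib.robotparser` against an inline, made-up `robots.txt`; the `example-bot` user agent and the URLs are placeholders. Passing this check does not by itself establish that scraping is permitted, since terms of service and privacy rules still need a separate review.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would download it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_policy(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


policy = build_policy(ROBOTS_TXT)

# Ask whether a given user agent may fetch a given URL.
allowed = policy.can_fetch("example-bot", "https://example.com/products/1")
blocked = policy.can_fetch("example-bot", "https://example.com/private/data")

# Seconds the site asks crawlers to wait between requests (None if unspecified).
delay = policy.crawl_delay("example-bot")
```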

Data Collection for AI Training

- Identifying Data Needs

Start by identifying the type of data your AI model requires. This could be textual data, images, videos, or specific information like product prices or weather forecasts. The nature of your AI project will dictate the type of data needed.

- Choosing the Right Tools

Several Python tools and libraries facilitate web scraping: BeautifulSoup for parsing HTML, Scrapy for crawling at scale, and Selenium for pages that need a real browser to render JavaScript. The choice depends on the complexity of the task and the structure of the website.

- Handling Data Formats

Data scraped from websites often comes in various formats (HTML, JSON, XML). Converting this data into a structured form like CSV, Excel, or a database format is essential for further processing.
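
As a rough illustration of that conversion, the following sketch turns a JSON payload into CSV text using only the standard library. The payload, the field names, and the `json_to_csv` helper are invented for the example; missing fields are written as empty strings, which is one possible convention rather than a rule.

```python
import csv
import io
import json

# Hypothetical scraped payload; note the second record has no "tags" field.
RAW_JSON = (
    '[{"name": "Kettle", "price": "24.99", "tags": ["kitchen"]},'
    ' {"name": "Toaster", "price": "39.50"}]'
)


def json_to_csv(raw_json, fieldnames):
    """Convert a JSON array of objects into CSV text with the given columns."""
    records = json.loads(raw_json)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    for record in records:
        # Keep only the requested columns; fill gaps with an empty string.
        writer.writerow({key: record.get(key, "") for key in fieldnames})
    return buffer.getvalue()


csv_text = json_to_csv(RAW_JSON, ["name", "price"])
print(csv_text)
```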

- Data Preprocessing

Once the data is collected, it must be cleaned and preprocessed. This step involves removing duplicates, handling missing values, and transforming data into a format suitable for AI model training.
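
A minimal sketch of such a cleaning pass is shown below. The `clean_records` helper and the sample records are hypothetical. It drops rows with missing required fields and treats rows as duplicates when those fields match case-insensitively; whether to drop or impute missing values depends on the project.

```python
def clean_records(records, required=("name", "price")):
    """Strip whitespace, drop rows missing required fields, and remove duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        # Normalize string values by trimming surrounding whitespace.
        normalized = {
            key: (value.strip() if isinstance(value, str) else value)
            for key, value in record.items()
        }
        # Skip rows where any required field is absent or empty.
        if any(not normalized.get(field) for field in required):
            continue
        # Two rows are duplicates if their required fields match ignoring case.
        key = tuple(str(normalized[field]).lower() for field in required)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(normalized)
    return cleaned


raw = [
    {"name": " Kettle ", "price": "$24.99"},
    {"name": "kettle", "price": "$24.99"},   # duplicate of the first row
    {"name": "Toaster", "price": ""},        # missing price
    {"name": "Blender", "price": "$55.00"},
]
cleaned = clean_records(raw)
```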

- Feature Engineering

Feature engineering involves creating predictive features from raw data. This step is crucial in transforming scraped data into a meaningful dataset that can provide real insights when fed into an AI model.
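
For example, a scraped product listing might be turned into numeric and boolean features along these lines. The feature names and the `make_features` helper are illustrative assumptions, and the "sale" check is deliberately crude (it would also match words like "wholesale").

```python
import re


def price_to_float(text):
    """Extract the first number from a price string such as '$1,024.50'."""
    match = re.search(r"\d+(?:\.\d+)?", text.replace(",", ""))
    return float(match.group()) if match else None


def make_features(record):
    """Derive simple model inputs from a raw scraped record."""
    title = record["title"]
    return {
        "price": price_to_float(record["price"]),
        "title_word_count": len(title.split()),
        "title_has_digit": any(ch.isdigit() for ch in title),
        "is_discounted": "sale" in title.lower(),  # crude substring check
    }


features = make_features({"title": "Steel Kettle 1.7L Sale", "price": "$1,024.50"})
```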

- Training AI Models

With the preprocessed data, you can start training your AI models. Depending on the project, this could involve supervised learning, unsupervised learning, or deep learning techniques.
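
To show what supervised training looks like in its simplest form, here is a tiny nearest-centroid classifier written in plain Python. It is a toy stand-in, not a recommendation: the data, labels, and function names are invented, and a real project would more likely use an established ML library.

```python
def train_centroids(rows, labels):
    """Compute the mean feature vector (centroid) for each label."""
    sums, counts = {}, {}
    for row, label in zip(rows, labels):
        acc = sums.setdefault(label, [0.0] * len(row))
        for i, value in enumerate(row):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, row):
    """Return the label whose centroid is closest to the row (squared distance)."""
    def sq_dist(center):
        return sum((a - b) ** 2 for a, b in zip(row, center))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))


# Hypothetical features: [price, title_word_count]
X = [[10.0, 2.0], [12.0, 3.0], [95.0, 8.0], [110.0, 9.0]]
y = ["budget", "budget", "premium", "premium"]

model = train_centroids(X, y)
```

Calling `predict(model, [15.0, 2.0])` then assigns a new row to whichever class centroid it sits closest to.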

- Model Evaluation and Refinement

After training, evaluate the model’s performance using appropriate metrics. Based on this evaluation, you might need to refine the model or revisit your data preprocessing steps.
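
Which metrics are appropriate depends on the task. For a classifier, accuracy, precision, and recall are common starting points, and the sketch below computes them by hand on made-up labels. The `evaluate` helper is hypothetical.

```python
def evaluate(y_true, y_pred, positive):
    """Compute accuracy, precision, and recall for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical held-out labels and model predictions.
y_true = ["premium", "budget", "premium", "budget", "premium"]
y_pred = ["premium", "premium", "budget", "budget", "premium"]

metrics = evaluate(y_true, y_pred, positive="premium")
```

Low scores on held-out data are often a signal to go back to the scraping and cleaning steps, not only to the model.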

Challenges and Best Practices


- Data Quality and Bias

One major challenge with web-scraped data is ensuring quality and avoiding bias. It’s important to source data from diverse and reliable websites to avoid skewing the AI model’s outcomes.
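
One simple, partial check for source skew is to measure how much of the dataset each domain contributes. The sketch below assumes each record carries a `url` field; the 50% threshold and the helper names are arbitrary choices for illustration.

```python
from collections import Counter
from urllib.parse import urlparse


def source_shares(records):
    """Return the fraction of records contributed by each domain."""
    counts = Counter(urlparse(record["url"]).netloc for record in records)
    total = sum(counts.values())
    return {domain: n / total for domain, n in counts.items()}


def dominant_sources(records, max_share=0.5):
    """List domains that contribute more than max_share of the dataset."""
    shares = source_shares(records)
    return sorted(domain for domain, share in shares.items() if share > max_share)


# Hypothetical scraped records, three of four from the same site.
records = [
    {"url": "https://a.example/item/1"},
    {"url": "https://a.example/item/2"},
    {"url": "https://a.example/item/3"},
    {"url": "https://b.example/item/9"},
]
skewed = dominant_sources(records)
```

A balanced source mix does not guarantee an unbiased dataset, but a heavily skewed one is an early warning.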

- Scalability and Efficiency

Scraping and processing large datasets can be resource-intensive. Efficient coding practices, parallel processing, and choosing the right tools can help manage this.
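
Because fetching pages is mostly waiting on the network, a thread pool is one common way to parallelize it. In this sketch `fetch` is a stand-in that returns a fake page body instead of making a real request, and the worker count is an arbitrary example value; a real crawler should also respect each site's rate limits.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch(url):
    """Stand-in for a real HTTP request; returns a fake page body."""
    return f"<html>{url}</html>"


def fetch_all(urls, max_workers=4):
    """Fetch many URLs concurrently and return a {url: body} mapping."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as the input URLs.
        return dict(zip(urls, pool.map(fetch, urls)))


urls = ["https://example.com/a", "https://example.com/b", "https://example.com/c"]
pages = fetch_all(urls)
```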

- Continuous Monitoring

Websites frequently update their structure and content. Regularly monitor and update your scraping scripts to ensure a consistent data flow.
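
A lightweight way to notice a layout change is to track how often each expected field comes back non-empty. The sketch below flags fields whose fill rate drops under a threshold; the 90% cutoff, the field names, and the helper functions are assumptions for illustration.

```python
def field_fill_rates(records, expected_fields):
    """Return the fraction of records in which each expected field is non-empty."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in expected_fields}
    return {
        field: sum(1 for record in records if record.get(field)) / total
        for field in expected_fields
    }


def broken_fields(records, expected_fields, min_rate=0.9):
    """List fields that are filled less often than min_rate, a hint the page changed."""
    rates = field_fill_rates(records, expected_fields)
    return sorted(field for field, rate in rates.items() if rate < min_rate)


# Hypothetical batch where the price selector has mostly stopped matching.
batch = [
    {"name": "Kettle", "price": "$24.99"},
    {"name": "Toaster", "price": ""},
    {"name": "Blender"},
    {"name": "Mixer", "price": None},
]
alerts = broken_fields(batch, ["name", "price"])
```

Running a check like this after every scrape makes silent breakage visible before it reaches the training set.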

Conclusion

Web-scraped data offers a treasure trove of information for training AI models. However, it requires careful planning, ethical consideration, and technical proficiency to leverage effectively. By following the steps outlined in this guide, practitioners can harness the power of web data to fuel innovative AI solutions.
