Finding and gathering information across the World Wide Web is difficult at scale. This is where web crawlers come in. Web crawlers, also known as spiders or bots, are programs that search engines and other web applications use to systematically traverse and catalog the web.

In this article, we look at how web crawlers work, the challenges and limitations they face, and the trends likely to shape them in the future.

Understanding Web Crawlers and their Role

A web crawler is a program that explores the web by moving from one webpage to another along hyperlinks. Its main job is to collect and organize the information found on those pages so that search engines can index and retrieve it efficiently.

Web crawlers discover new web content, keep search engine indices up to date, and supply data for applications such as web analysis, data mining, and content recommendation systems.

The Functionality of Web Crawlers

Web crawlers employ a series of steps to perform their tasks effectively:

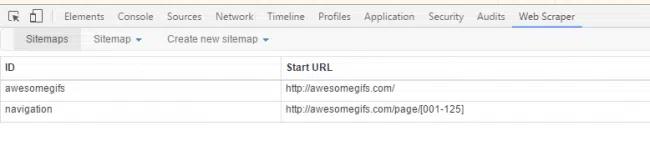

- Seed URLs: The crawler starts with a set of seed URLs, which typically consist of popular websites or user-defined starting points.

- HTTP Requests: The crawler sends HTTP requests to retrieve the webpages at the seed URLs.

- Parsing and Extraction: After obtaining the webpage, the crawler analyzes the HTML code to gather pertinent data, including links, textual content, images, and metadata.

- URL Frontier: The extracted URLs are added to a priority queue called the “URL frontier.” This queue holds the URLs yet to be visited.

- Visiting and Crawling: The crawler selects the next URL from the URL frontier and repeats the process from the HTTP Requests step. This continues until the URL frontier is empty or a predetermined limit is reached.

- Indexing: As the crawler fetches webpages, it stores the collected data, building an index that enables fast retrieval and search capabilities.
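
The steps above can be sketched as a short loop. This is a minimal illustration rather than a production crawler: the `fetch` parameter is a hypothetical hook standing in for the HTTP request, the `example.com` pages are made up so the example runs without network access, and the frontier is a simple first-in, first-out queue instead of the priority queue described above.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects href values from <a> tags and the text found on the page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.text_parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)

    def handle_data(self, data):
        if data.strip():
            self.text_parts.append(data.strip())


def crawl(seed_urls, fetch, max_pages=100):
    """Crawl from the seeds; `fetch(url)` returns HTML or None (assumed hook)."""
    frontier = deque(seed_urls)  # URL frontier: URLs waiting to be visited
    seen = set(seed_urls)        # prevents queueing the same URL twice
    index = {}                   # toy index: url -> extracted text

    while frontier and len(index) < max_pages:
        url = frontier.popleft()
        html = fetch(url)                          # HTTP request step
        if html is None:
            continue
        parser = LinkExtractor()
        parser.feed(html)                          # parsing and extraction
        index[url] = " ".join(parser.text_parts)   # indexing
        for href in parser.links:
            # Resolve relative links and drop #fragments before queueing.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute.startswith(("http://", "https://")) and absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return index


# Made-up pages so the sketch runs offline.
PAGES = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b#top">B</a> home',
    "https://example.com/a": '<a href="/">back</a> page a',
    "https://example.com/b": "page b",
}
index = crawl(["https://example.com/"], PAGES.get)
```

In a real crawler, `fetch` would issue an HTTP request with timeouts and error handling, and the index would live in a search-oriented store rather than a dictionary.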

Challenges and Limitations of Web Crawlers

While web crawlers are powerful tools, they face various challenges and limitations:

- Crawl Exhaustion: The web is vast and constantly evolving, making it impossible for crawlers to cover every webpage. Limited resources and crawl budget constraints further restrict their exploration capabilities.

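- Crawl Traps: Some websites, deliberately or by accident, expose an effectively unbounded set of URLs, which leads to infinite loops or redundant crawling. Examples include pagination traps, dynamically generated URLs (such as endless calendar pages or session IDs embedded in links), and heavily JavaScript-dependent pages.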

- Dynamic Content: Webpages with rapidly changing or personalized content pose a challenge to crawlers. Such content may be generated by client-side scripts or retrieved through AJAX requests, requiring advanced crawling and rendering techniques.

- Legal and Ethical Issues: Web crawling can violate a website's terms of service or raise privacy concerns. Respectful crawling behavior, such as adhering to robots.txt directives and rate limits, reduces the risk of legal disputes and of overloading the sites being crawled.
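
Two of these challenges, crawl traps and robots.txt compliance, lend themselves to small guards that run before a URL enters the frontier. The sketch below is one possible approach under stated assumptions: the session parameter names, the depth limit, and the repeated-segment heuristic are illustrative choices, not standard values. The robots.txt check uses Python's standard `urllib.robotparser` module.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser

# Assumed names of query parameters that only carry session state.
SESSION_PARAMS = {"sessionid", "sid", "phpsessid"}
MAX_DEPTH = 5  # assumed cap on the number of path segments


def canonicalize(url):
    """Map trivially different URLs to one form so they are crawled once."""
    parts = urlsplit(url)
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in SESSION_PARAMS
    )
    path = parts.path or "/"
    # Lowercase scheme and host, sort the query, and drop the fragment.
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), "")
    )


def looks_like_trap(url, max_depth=MAX_DEPTH):
    """Heuristic: very deep paths or a path segment repeated more than twice."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    too_deep = len(segments) > max_depth
    repeated = any(segments.count(s) > 2 for s in segments)
    return too_deep or repeated


def make_robots_checker(robots_txt, user_agent):
    """Return a function telling whether `user_agent` may fetch a given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return lambda url: parser.can_fetch(user_agent, url)


# Example robots.txt content and bot name, both made up for illustration.
ROBOTS_TXT = "User-agent: *\nDisallow: /private/\n"
allowed = make_robots_checker(ROBOTS_TXT, "ExampleBot")
```

A crawler would typically call `canonicalize` first, skip the URL if `looks_like_trap` or the robots check rejects it, and only then add it to the frontier. Heuristics like these can reject legitimate pages, so thresholds are usually tuned per site.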

Future Trends and Advancements in Web Crawling

The field of web crawling continues to evolve to meet the demands of a dynamic web ecosystem. Several advancements and trends are shaping the future of web crawling:

- Focused Crawling: Focused crawling aims to prioritize specific topics or domains of interest, enabling crawlers to explore more efficiently and gather relevant data for targeted applications or research.

- Deep Learning and Natural Language Processing: Incorporating deep learning and natural language processing into crawling algorithms can improve a crawler's ability to understand web pages and extract valuable information from them, which in turn supports more precise indexing and deeper content analysis.

- Distributed Crawling: Because the web is so large, a single crawler can cover only a small part of it. Distributed crawling has multiple crawlers work together to explore a broader section of the web; strategies such as parallel crawling, load balancing, and decentralized architectures are being explored.

- Crawl Optimization: Researchers are constantly improving crawling algorithms and strategies to maximize efficiency and overcome challenges like crawl traps and crawl exhaustion. Techniques such as adaptive crawling, site-specific crawling policies, and intelligent scheduling are being developed.
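
Two of these ideas can be illustrated in a few lines. The sketch below shows a frontier that pops the most topic-relevant URL first (focused crawling) and a function that assigns each host to one worker (a simple form of distributed crawling). The keyword-count score and the hash-based partitioning are assumptions chosen for brevity; real systems often use trained relevance models and more elaborate partitioning schemes. The class and function names are hypothetical.

```python
import hashlib
import heapq
from urllib.parse import urlsplit


class FocusedFrontier:
    """URL frontier that returns the most topic-relevant URL first."""

    def __init__(self, topic_keywords):
        self.keywords = [k.lower() for k in topic_keywords]
        self._heap = []
        self._seen = set()
        self._counter = 0  # tie-breaker: equal scores pop in insertion order

    def score(self, url, anchor_text=""):
        """Count how many topic keywords appear in the URL or anchor text."""
        haystack = f"{url} {anchor_text}".lower()
        return sum(1 for k in self.keywords if k in haystack)

    def push(self, url, anchor_text=""):
        if url in self._seen:
            return
        self._seen.add(url)
        # heapq is a min-heap, so negate the score to pop the highest first.
        entry = (-self.score(url, anchor_text), self._counter, url)
        heapq.heappush(self._heap, entry)
        self._counter += 1

    def pop(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


def assign_worker(url, num_workers):
    """Send every URL of a host to the same worker via a stable hash."""
    host = urlsplit(url).netloc.lower()
    digest = hashlib.sha256(host.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_workers


frontier = FocusedFrontier(["python", "crawler"])
frontier.push("https://example.com/cooking")
frontier.push("https://example.com/python-crawler-guide")
frontier.push("https://example.com/news", anchor_text="Python release notes")
```

Keeping each host on a single worker makes it easier to enforce per-site rate limits, at the cost of uneven load when a few hosts dominate the crawl.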

Conclusion

Web crawlers are the backbone of search engines and numerous other web applications. Understanding how they operate, the obstacles they face, and where they are headed is essential to making effective use of the information available on the web.

As web crawlers continue to evolve and adapt to the ever-changing web, they will remain central to efficient information retrieval and analysis.