Scraping a website is no longer a daunting task. Technically skilled professionals can guide you through it, and learning how to scrape a website is just a click away.

Website scraping is usually done to extract information from various websites in order to formulate a strategy against competitors. Before starting, it is important to understand the purpose behind it. In most cases the goal is to give a business the critical data it needs about its competitors. With the various computer programs available, data can be scraped easily and conveniently from the websites of close competitors, and this information helps business owners make major decisions and develop a marketing strategy. When scraping yields pricing information, businesses can develop a pricing strategy that tracks industry and market changes. Pricing a product too high or too low causes problems, so it is important to strike the right balance.

Data was scraped from competitors' websites in earlier times too, but it was done manually, a practice that is now obsolete. The manual method was cumbersome and tedious, especially when every web page had to be studied in detail, and this was the main reason it was abandoned. The task became even harder, and sometimes impossible, when time was limited, because many websites run to thousands of pages. Manual scraping was also unreliable, since mistakes due to negligence were likely.

When website scraping is automated, a great deal of time and energy is saved and accuracy improves considerably. Using a DaaS (Data as a Service) offering or scraping software, information from several product pages can be obtained in a matter of minutes. Moreover, the chance of inaccurate or unreliable data is far lower than with manual collection. Pricing shifts can also be tracked using data mining software.

Rate parity can be maintained only when prices are monitored constantly across different platforms and channels and a suitable decision is taken based on them. It is also important to track the various distribution channels, as this helps you arrive at a price that is in line with the target market. Rate parity also ensures pricing transparency. It boosts customer loyalty and encourages clients to buy through your own site instead of third-party sites offering lower prices. Thus, gleaning this information from competitors' sites proves to be quite advantageous.
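To make the idea of monitoring prices across channels concrete, here is a minimal Python sketch. It assumes the prices have already been scraped; the function name `find_parity_violations`, the channel names, and the 1% tolerance are all hypothetical choices made for illustration, not part of any particular tool.

```python
def find_parity_violations(own_price, channel_prices, tolerance=0.01):
    """Return the channels that undercut our own price.

    own_price      -- price on our own site (assumed to be positive)
    channel_prices -- mapping of channel name -> price seen on that channel
    tolerance      -- fraction of own_price we are willing to ignore
    The result maps each offending channel to the gap in percent.
    """
    violations = {}
    for channel, price in channel_prices.items():
        # Positive gap means the channel is cheaper than our own site.
        gap = (own_price - price) / own_price
        if gap > tolerance:
            violations[channel] = round(gap * 100, 1)
    return violations


# Hypothetical prices collected from three third-party channels.
scraped_prices = {
    "marketplace_a": 100.0,
    "marketplace_b": 92.0,
    "reseller_c": 99.5,
}
violations = find_parity_violations(100.0, scraped_prices)
print(violations)
```

In this example only `marketplace_b` is flagged, because it is the only channel priced more than 1% below the direct price. A real monitoring job would run a check like this on every scrape and alert someone when the result is not empty.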

With the DaaS industry revolution, there is no need to spend a lot of money or time manually watching competitors' pricing policies. Price monitoring helps you avoid additional costs and keeps you from losing customers to other distribution channels that offer more competitive prices than yours. If you strike a balance and offer a price that is competitive with your rivals, you will gain more customers. Price monitoring not only boosts the company's name but also helps enhance brand loyalty.

Web scraping, a method of collecting data online from various websites, is widely popular among e-business professionals. It is performed by a computer program that extracts web content from the display output of other sites or programs. Such systems, which are designed to crawl content from numerous web sources, are called website scrapers. This raises the question of why web scraping is done and why it has become common practice in today's market.

Many online businesses need a steady pool of information for their core operations. If you run an online commerce business, say online gifting, you need to collect information about gift items from different companies and brands. You have to bring together the product name, feature description, and price of each item in your online portal. If this sounds like an easy job, remember that you are not collecting information about a handful of products; you may have to cover as many as a thousand products in different categories. You can imagine how time-consuming this is and why people turn to data crawling and extraction services.
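As a rough illustration of what such an extraction program does, the sketch below pulls the product name, description, and price out of a page using only Python's standard library. The HTML layout (a `div` with class `product` containing `span` elements named `name`, `description`, and `price`) is an assumption made up for this example; a real site will use its own markup, and the page would be downloaded first rather than embedded as a string.

```python
from html.parser import HTMLParser


class ProductParser(HTMLParser):
    """Collects product records from a hypothetical listing layout."""

    FIELDS = {"name", "description", "price"}

    def __init__(self):
        super().__init__()
        self.products = []    # finished product records
        self._current = None  # record currently being built
        self._field = None    # field whose text we are inside

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "div" and css_class == "product":
            self._current = {}
        elif self._current is not None and css_class in self.FIELDS:
            self._field = css_class

    def handle_data(self, data):
        if self._current is not None and self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if self._field:
            # Closing the span that held a field value.
            self._field = None
        elif tag == "div" and self._current is not None:
            # Closing the product container: the record is complete.
            self.products.append(self._current)
            self._current = None


def extract_products(html):
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.products


# Made-up sample page standing in for a downloaded gift catalog.
SAMPLE_HTML = """
<div class="product">
  <span class="name">Ceramic Mug</span>
  <span class="description">350 ml, dishwasher safe</span>
  <span class="price">12.50</span>
</div>
<div class="product">
  <span class="name">Scented Candle</span>
  <span class="description">Lavender, 40 hour burn time</span>
  <span class="price">9.99</span>
</div>
"""

products = extract_products(SAMPLE_HTML)
for product in products:
    print(product["name"], product["price"])
```

The same parser can be fed a thousand pages in a loop, which is where the time saving over manual copying comes from.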

The process of collecting website information does not stop here. You also have to save the data systematically, which makes the whole process troublesome. Copying data from numerous websites and pasting it correctly into an Excel sheet is taxing, and you cannot rule out human mistakes. Even a slight error may go uncorrected or, if corrected, take a great deal of additional time. To solve these problems of web data collection, crawler bots come to the rescue. Web scraping has become a necessity because human data entry sometimes fails to meet the requirements.
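Saving scraped data systematically can be as simple as writing each record to a CSV file that Excel can open. The sketch below is one possible way to do it with Python's built-in `csv` module; the column names and sample records are assumptions for illustration, and an in-memory buffer stands in for a real file so the example is self-contained.

```python
import csv
import io

# Hypothetical column layout for the scraped product records.
FIELDNAMES = ["name", "description", "price"]


def write_products_csv(products, out_file):
    """Write one row per product, with a header row first."""
    writer = csv.DictWriter(out_file, fieldnames=FIELDNAMES)
    writer.writeheader()
    for product in products:
        writer.writerow(product)


def read_products_csv(in_file):
    """Read the rows back as a list of dictionaries."""
    return list(csv.DictReader(in_file))


# Made-up records, as a scraper might produce them.
records = [
    {"name": "Ceramic Mug", "description": "350 ml, dishwasher safe", "price": "12.50"},
    {"name": "Scented Candle", "description": "Lavender, 40 hour burn time", "price": "9.99"},
]

# Use open("products.csv", "w", newline="") in place of the buffer to
# write an actual file.
buffer = io.StringIO()
write_products_csv(records, buffer)

buffer.seek(0)
round_trip = read_products_csv(buffer)
print(round_trip == records)
```

Because the `csv` module quotes fields that contain commas, a description such as "350 ml, dishwasher safe" stays in one column, which is exactly the kind of detail that tends to go wrong with manual copy and paste.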

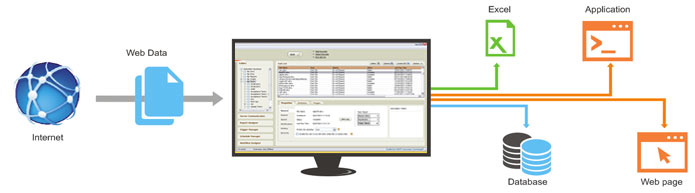

Web scraping software is built to meet the core requirements of business people and others who need a large data resource. For reliable data mining, you need a program that extracts web content quickly, accurately, and in an organized way. Website scrapers are designed to navigate through numerous web pages, extract information from them, and store it safely for future use.

For any business, collecting and keeping important information is crucial. Whether you are analyzing in-house growth or reviewing competitors' performance, you need web scraping software that extracts the information you want. For a cosmetics firm, for example, it is highly important to maintain a proper and regularly updated product catalog, which calls for an automated system. This is where web content extraction tools come in.

Gone are the days of working manually. You need to be updated in real time, or you will struggle to survive under the sheer pressure of competition.

Image Credits: habiledata