Web crawlers play a vital role in indexing and organizing the vast amount of information on the internet. They traverse web pages, gather data, and make it searchable. This article explains how a web crawler works, covering its components, its step-by-step operation, and the main types of crawler.

What is a Web Crawler?

A web crawler, also known as a spider or bot, is an automated program that methodically navigates websites. It starts with a seed URL and then follows HTML links to visit other web pages, building up a network of interconnected pages that can be indexed and analyzed.

The Purpose of a Web Crawler

A web crawler’s main objective is to gather information from web pages and build a searchable index for efficient retrieval. Major search engines such as Google, Bing, and Yahoo rely heavily on web crawlers to construct their search databases. By systematically examining web content, search engines can provide users with relevant and current search results.

Web crawlers are not limited to search engines. Organizations also use them for tasks such as data mining, content aggregation, website monitoring, and even cybersecurity.

The Components of a Web Crawler

A web crawler comprises several components working together to achieve its goals. Here are the key components of a web crawler:

- URL Frontier: This component manages the collection of URLs waiting to be crawled. It prioritizes URLs based on factors like relevancy, freshness, or website importance.

- Downloader: The downloader retrieves web pages based on the URLs provided by the URL frontier. It sends HTTP requests to web servers, receives responses, and saves the fetched web content for further processing.

- Parser: The parser processes the downloaded web pages, extracting useful information such as links, text, images, and metadata. It analyzes the page’s structure and extracts the URLs of linked pages to be added to the URL frontier.

- Data Storage: The data storage component stores the collected data, including web pages, extracted information, and indexing data. This data can be stored in various formats like a database or a distributed file system.
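To make the URL frontier more concrete, here is a minimal sketch of one way it could be built: a priority queue with de-duplication. The class name `URLFrontier`, its methods, and the convention that a lower priority number is crawled first are all assumptions made for illustration; a production frontier would typically also handle persistence and per-site scheduling.

```python
import heapq
from itertools import count


class URLFrontier:
    """Priority queue of URLs waiting to be crawled, with de-duplication.

    Hypothetical illustration: names and the "lower number pops first"
    convention are assumptions, not part of any specific crawler.
    """

    def __init__(self):
        self._heap = []            # entries are (priority, sequence, url)
        self._seen = set()         # every URL ever added, to avoid re-queuing
        self._sequence = count()   # tie-breaker so equal priorities stay FIFO

    def add(self, url, priority=1.0):
        """Queue a URL unless it was added before. Returns True if queued."""
        if url in self._seen:
            return False
        self._seen.add(url)
        heapq.heappush(self._heap, (priority, next(self._sequence), url))
        return True

    def pop(self):
        """Return the most urgent URL, or None when the frontier is empty."""
        if not self._heap:
            return None
        _, _, url = heapq.heappop(self._heap)
        return url

    def __len__(self):
        return len(self._heap)
```

The priority value is where factors such as relevancy, freshness, or website importance would be encoded; how to compute it is left open here.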

How Does a Web Crawler Work?

With the components in place, here is the step-by-step process a web crawler follows:

- Seed URL: The crawler starts with a seed URL, which could be any web page or a list of URLs. This URL is added to the URL frontier to initiate the crawling process.

- Fetching: The crawler selects a URL from the URL frontier and sends an HTTP request to the corresponding web server. The server responds with the web page content, which is then fetched by the downloader component.

- Parsing: The parser processes the fetched web page, extracting relevant information such as links, text, and metadata. It also identifies the new URLs found on the page and hands them over for link analysis.

- Link Analysis: The crawler prioritizes and adds the extracted URLs to the URL frontier based on certain criteria like relevance, freshness, or importance. This helps to determine the order in which the crawler will visit and crawl the pages.

- Repeat Process: The crawler continues the process by selecting URLs from the URL frontier, fetching their web content, parsing the pages, and extracting more URLs. This process is repeated until there are no more URLs to crawl, or a predefined limit is reached.

- Data Storage: Throughout the crawling process, the collected data is stored in the data storage component. This data can later be used for indexing, analysis, or other purposes.
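The steps above can be sketched as a single loop. This is a simplified illustration using only the Python standard library, not a definitive implementation: the frontier is a plain first-in, first-out queue with no prioritization, storage is an in-memory dictionary, and `fetch` is a hypothetical callable supplied by the caller that takes a URL and returns the page HTML as a string (or `None` on failure). The names `crawl`, `LinkExtractor`, and `max_pages` are likewise assumptions.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed_url, fetch, max_pages=100):
    """Breadth-first crawl starting at seed_url.

    fetch is a hypothetical callable: URL in, HTML string (or None) out.
    Returns a dict mapping each crawled URL to its HTML.
    """
    frontier = deque([seed_url])   # seed URL initiates the frontier
    seen = {seed_url}              # URLs already queued, to avoid repeats
    storage = {}                   # stands in for the data storage component

    # Repeat until the frontier is empty or the page limit is reached.
    while frontier and len(storage) < max_pages:
        url = frontier.popleft()   # select the next URL
        html = fetch(url)          # fetching
        if html is None:
            continue
        storage[url] = html        # data storage

        parser = LinkExtractor()   # parsing
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links against the current page, drop #fragments.
            absolute, _fragment = urldefrag(urljoin(url, href))
            is_web_link = absolute.startswith(("http://", "https://"))
            if is_web_link and absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)

    return storage
```

Passing `fetch` in as a parameter keeps the loop independent of any particular HTTP client. For a quick experiment it could be a dictionary lookup over a few hand-written pages; for real pages it could wrap a call such as `urllib.request.urlopen`.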

Types of Web Crawlers

Web crawlers come in several varieties, each suited to particular use cases. Here are a few commonly used types:

- Focused Crawlers: These crawlers operate within a specific domain or topic and crawl pages relevant to that domain. Examples include topical crawlers used for news websites or research papers.

- Incremental Crawlers: Incremental crawlers focus on crawling new or updated content since the last crawl. They utilize techniques like timestamp analysis or change detection algorithms to identify and crawl modified pages.

- Distributed Crawlers: In distributed crawlers, multiple instances of the crawler run in parallel, sharing the workload of crawling a vast number of pages. This approach enables faster crawling and improved scalability.

- Vertical Crawlers: Vertical crawlers target specific types of content or data within web pages, such as images, videos, or product information. They are designed to extract and index specific types of data for specialized search engines.
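As an illustration of the change detection that incremental crawlers rely on, the sketch below compares a hash of each page's content with the hash recorded on the previous crawl. The function names and the in-memory `fingerprints` dictionary are assumptions for the example. Hashing the raw HTML is the simplest possible approach and will also flag cosmetic changes, so a real crawler might hash only the extracted text or use HTTP headers instead.

```python
import hashlib


def content_fingerprint(html):
    """Return a SHA-256 hex digest of the page content."""
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def has_changed(url, html, fingerprints):
    """Report whether a page differs from the last crawl, then record it.

    fingerprints is a dict mapping URL -> digest from the previous crawl
    (a hypothetical stand-in for persistent storage). A URL that has
    never been seen counts as changed.
    """
    new_digest = content_fingerprint(html)
    changed = fingerprints.get(url) != new_digest
    fingerprints[url] = new_digest
    return changed
```

Pages for which `has_changed` returns `False` can skip re-parsing and re-indexing, which is where an incremental crawler saves work.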

How often should you crawl web pages?

The frequency of crawling web pages depends on several factors, including the size and update frequency of the website, the importance of the pages, and the available resources. Some websites may require frequent crawling to ensure the latest information is indexed, while others may be crawled less frequently.

For high-traffic websites or those with rapidly changing content, more frequent crawling is essential to maintain up-to-date information. On the other hand, smaller websites or pages with infrequent updates can be crawled less frequently, reducing the workload and resources required.
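One simple way to turn this into a schedule is to adapt the revisit interval per page: shorten it when the page was found changed and lengthen it when it was not. The sketch below is only an assumption about how such a policy might look; the halving and doubling factors and the one-hour and thirty-day bounds are illustrative values, not recommendations.

```python
from datetime import timedelta

# Illustrative bounds; suitable values depend on the site and on resources.
MIN_INTERVAL = timedelta(hours=1)
MAX_INTERVAL = timedelta(days=30)


def next_interval(current, changed):
    """Halve the interval if the page changed, double it otherwise.

    The result is clamped to the range [MIN_INTERVAL, MAX_INTERVAL].
    """
    proposed = current / 2 if changed else current * 2
    return max(MIN_INTERVAL, min(MAX_INTERVAL, proposed))
```

With this policy, a rapidly changing page converges toward the minimum interval, while a static page drifts toward the maximum and consumes fewer crawl resources.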

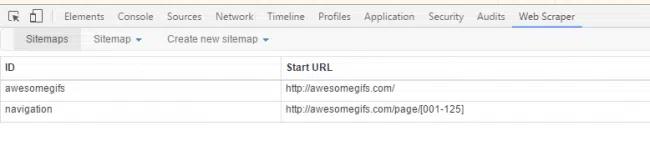

In-House Web Crawler vs. Web Crawling Tools

Before building a web crawler, it’s crucial to assess the complexity, scalability, and resources involved. Building a crawler from the ground up can be time-consuming, since it involves managing concurrency, operating distributed systems, and solving infrastructure problems.

Alternatively, using web crawling tools or frameworks can provide a faster and more efficient solution. These tools offer features like customizable crawling rules, data extraction capabilities, and data storage options. By leveraging existing tools, developers can focus on their specific requirements, such as data analysis or integration with other systems.

However, it’s crucial to consider the limitations and costs associated with using third-party tools, such as restrictions on customization, data ownership, and potential pricing models.

Conclusion

Search engines rely heavily on web crawlers to organize and catalog the vast amount of information on the internet. Understanding the mechanics, components, and types of web crawlers gives a clearer picture of the technology behind this fundamental process.

Whether you build a web crawler from scratch or use existing web crawling tools, choose the approach that fits your specific needs, taking into account scalability, complexity, and the resources at your disposal. With these factors in mind, you can use web crawling effectively to gather and analyze valuable data for your business or research.

At PromptCloud, we specialize in web data extraction, sourcing data from publicly available online resources. Get in touch with us at sales@promptcloud.com