
PromptCloud Inc, 16192 Coastal Highway, Lewes, Delaware 19958, USA


Scrape and Extract Stock Market Data

Scrape financial data

EMAIL : sales@promptcloud.com

INDIA CONTACT : +91 80 4121 6038

Applications of financial data

- Financial data and rating services: Our crawlers can be used for monitoring several websites to keep track of financial news. With this in place, changes in the financial state of corporations that can potentially impact the market can be detected in near real time.

- Financial advisory: If you are looking to build a financial recommendation platform, web crawling is a practical way to fetch the data you need. For example, you could crawl the interest rates for various products on bank websites and recommend the relevant ones to your consumers.

- Risk mitigation services: Web crawling can be used to extract content from the sites of regulatory bodies (government, courts, etc.) to mitigate the risk of lending in case of natural disasters, crimes (for example, identity theft) and policy changes.

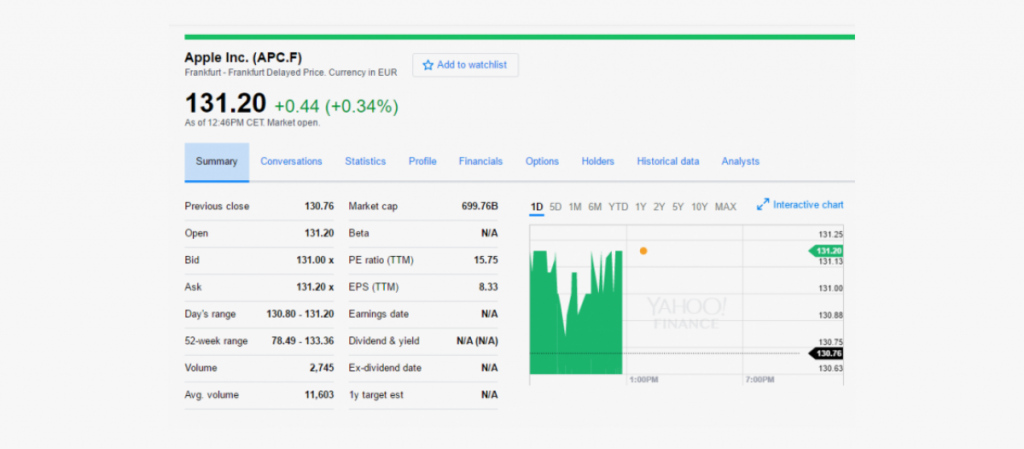

- Investment firms: Making safe investments is the key to survival as an investment firm. Scraping financial data can help you detect micro-trends that inform such investments and share trading. The financial performance data needed for this can be scraped from sites like Yahoo Finance, Google Finance and Bloomberg Markets.

- Compliance management: Staying compliant with the policies and regulations set by financial regulators is important if you want to keep your business out of legal trouble. You can use web crawling to stay up to date with changing policies and laws and avoid the consequences of missing them.
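Several of the applications above (tracking financial news, watching regulatory pages) come down to noticing when a page's content changes between crawls. The following is a minimal sketch of that idea, not a description of our crawlers: it fingerprints page text with a hash and reports URLs whose fingerprint differs from the last one seen. The function names and the example URL are hypothetical, and the page text is passed in directly instead of being fetched.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Return a SHA-256 digest of the text with whitespace normalized."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def detect_changes(previous: dict[str, str], pages: dict[str, str]) -> list[str]:
    """Return URLs whose content fingerprint differs from the stored one.

    `previous` maps URL -> last seen fingerprint and is updated in place.
    A URL seen for the first time counts as changed.
    """
    changed = []
    for url, text in pages.items():
        digest = fingerprint(text)
        if previous.get(url) != digest:
            changed.append(url)
            previous[url] = digest
    return changed


# Hypothetical snapshots; a real crawler would supply the fetched page text.
URL = "https://regulator.example/policy"
seen: dict[str, str] = {}
first = detect_changes(seen, {URL: "Rate cap: 5%"})    # new page
second = detect_changes(seen, {URL: "Rate cap:  5%"})  # whitespace-only change
third = detect_changes(seen, {URL: "Rate cap: 6%"})    # real content change
```

Normalizing whitespace before hashing keeps cosmetic markup changes from triggering alerts; a production setup would likely compare only the relevant section of a page.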

The requirements

To get started, all you need to do is tell us your requirements: sources, crawl frequency, data points, and the delivery format and method. With this information, our team will run a feasibility check to make sure your requirements are legally and technically feasible. With our fully managed web crawling service, you don’t need to be involved in any of the technical aspects. Common data points associated with stock market data are:

Previous Close

Open

Bid, Ask

Day’s Range

Average Volume

Market Cap

Beta

PE Ratio

EPS

Earnings Date

Dividend & Yield

Ex-Dividend Date
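To illustrate how these data points might look once structured, here is a rough sketch of a record type with one field per data point, plus a helper that turns scraped strings such as "1,234.50" or "2.5B" into numbers. The class name, field names, `symbol` field and suffix handling are assumptions made for illustration; actual field names and formats depend on the source site and your requirements.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed magnitude suffixes, as commonly seen in displayed financial figures.
_SUFFIXES = {"K": 1e3, "M": 1e6, "B": 1e9, "T": 1e12}


def parse_number(raw: str) -> Optional[float]:
    """Convert a displayed figure to a float, or None if it is not numeric."""
    text = raw.strip().replace(",", "")
    if not text or text.upper() in {"N/A", "-"}:
        return None
    multiplier = 1.0
    if text[-1].upper() in _SUFFIXES:
        multiplier = _SUFFIXES[text[-1].upper()]
        text = text[:-1]
    try:
        return float(text) * multiplier
    except ValueError:
        return None


@dataclass
class StockQuote:
    symbol: str  # hypothetical identifier, not one of the listed data points
    previous_close: Optional[float] = None
    open: Optional[float] = None
    bid: Optional[float] = None
    ask: Optional[float] = None
    days_range_low: Optional[float] = None
    days_range_high: Optional[float] = None
    average_volume: Optional[float] = None
    market_cap: Optional[float] = None
    beta: Optional[float] = None
    pe_ratio: Optional[float] = None
    eps: Optional[float] = None
    earnings_date: Optional[str] = None
    dividend: Optional[float] = None
    dividend_yield: Optional[float] = None
    ex_dividend_date: Optional[str] = None


# Made-up values for a made-up ticker.
quote = StockQuote(
    symbol="EXMP",
    previous_close=parse_number("1,234.50"),
    market_cap=parse_number("2.5B"),
    beta=parse_number("N/A"),
)
```

Keeping every field optional reflects that not every source publishes every data point.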

Crawler setup for scraping financial data

Once the feasibility check confirms that the requirement is feasible, our team proceeds to the next step: crawler setup. Setting up the crawler is the most complicated and technically demanding part of web crawling. Our dedicated technical team takes care of it, and it typically takes a few days to complete. Data starts flowing in once the crawler is up and running. This initial data might contain noise, such as unwanted HTML tags and text that were scraped along with the data. To remove it, the data dump file is run through a cleansing setup. Finally, the data is structured to ensure compatibility with databases and analytics systems.
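As a rough idea of what a cleansing step can involve, the sketch below strips HTML tags and script or style content from scraped field values using only Python's standard library. It is an illustrative example under simple assumptions, not the cleansing setup described above; the class, function and field names are hypothetical.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect visible text, ignoring tags and script/style content."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self._chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self._chunks.append(data)

    def text(self) -> str:
        # Join the collected chunks and collapse runs of whitespace.
        return " ".join(" ".join(self._chunks).split())


def clean_html(fragment: str) -> str:
    """Return the visible text of an HTML fragment."""
    parser = _TextExtractor()
    parser.feed(fragment)
    parser.close()
    return parser.text()


# Made-up noisy record of the kind a first crawl might produce.
raw_record = {
    "symbol": "<b>EXMP</b>",
    "eps": "<span class='v'> 4.20 </span><script>track()</script>",
}
clean_record = {key: clean_html(value) for key, value in raw_record.items()}
```

After this step the values are plain strings, ready to be converted to typed fields and loaded into a database or analytics system.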

Data delivery

The data delivery formats and methods are just as customizable as our crawling solution. You can choose between XML, JSON and CSV as the data format and receive the data via our API, Amazon S3, Dropbox, Box or FTP.
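To show how the three formats differ for the same data, here is a small sketch that serializes a list of records to JSON, CSV and XML with Python's standard library. The record fields, element names (`records`, `record`) and function names are assumptions for illustration and do not describe the actual layout of delivered files.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET


def to_json(records: list[dict]) -> str:
    """Serialize records as a JSON array."""
    return json.dumps(records, indent=2)


def to_csv(records: list[dict]) -> str:
    """Serialize records as CSV, using the first record's keys as the header."""
    if not records:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=list(records[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_xml(records: list[dict]) -> str:
    """Serialize records as XML, one <record> element per record."""
    root = ET.Element("records")
    for record in records:
        item = ET.SubElement(root, "record")
        for key, value in record.items():
            ET.SubElement(item, key).text = str(value)
    return ET.tostring(root, encoding="unicode")


# Made-up record for a made-up ticker.
records = [{"symbol": "EXMP", "open": 101.2, "eps": 4.2}]
json_text = to_json(records)
csv_text = to_csv(records)
xml_text = to_xml(records)
```

JSON and XML preserve nesting if records grow more complex, while CSV is usually the easiest to load into spreadsheets and relational tables.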
