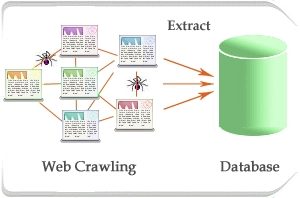

There are many reasons you might need to pull data or information from other sites, and the process usually begins by checking whether the site has an official API. Very few people realize that every website already supports a form of structured data automatically: its HTML. Pulling data right from the HTML is known as HTML scraping, and it is an awesome way of gleaning data and information from third-party websites.

Any webpage content you can view can be scraped without much trouble. If a website gives a visitor's browser a way to download content and display it in a structured manner, then that same content can also be accessed programmatically. This is why HTML scraping works so well.
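As a minimal illustration of HTML already being structured data, the Python sketch below uses only the standard library's `html.parser` to turn an HTML `<table>` into rows of cell text. It assumes simple, well-formed table markup (no nested tables); the class name `TableRows`, the helper `table_rows`, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class TableRows(HTMLParser):
    """Collects the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self) -> None:
        super().__init__()
        self.rows = []      # finished rows, each a list of cell strings
        self._row = None    # cells of the row currently open, or None
        self._cell = None   # text fragments of the cell currently open, or None

    def _finish_cell(self) -> None:
        # Close the open cell (if any) and store its whitespace-trimmed text.
        if self._cell is not None and self._row is not None:
            self._row.append("".join(self._cell).strip())
        self._cell = None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._finish_cell()  # tolerate a missing </td> before the next cell
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th"):
            self._finish_cell()
        elif tag == "tr" and self._row is not None:
            self._finish_cell()
            self.rows.append(self._row)
            self._row = None


def table_rows(html: str) -> list:
    """Return the table rows found in an HTML string as lists of cell text."""
    parser = TableRows()
    parser.feed(html)
    parser.close()
    return parser.rows
```

For example, `table_rows("<table><tr><th>Name</th><th>Price</th></tr><tr><td>Widget</td><td>$4.99</td></tr></table>")` returns `[['Name', 'Price'], ['Widget', '$4.99']]`.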

Before diving into HTML scraping, it is worth inspecting the browser's network traffic. Site owners have a few tricks up their sleeve to thwart this kind of access, but most of them can be worked around.

Before moving on to how HTML scraping works, we should understand why it is needed at all. Why scrape, when you could look for RSS feeds, APIs, or other traditional forms of structured data? It is important to understand that, compared with APIs, websites matter far more to the organizations that run them.

This comes down mostly to maintenance: organizations put their effort into the websites that lots of visitors see, rather than into safeguarding their structured data feeds. Twitter showed this publicly when it clamped down on its developer ecosystem. API feeds often change or move without any prior warning. Sometimes this is deliberate, but mostly it happens because no one in the organization is responsible for maintaining the structured data, so it is rarely noticed if a feed gets severely mangled or goes offline. If the website itself has problems or stops working, on the other hand, that is a highly visible problem that has to be dealt with immediately.

Rate limiting is another factor that usually needs careful thought when working with APIs, but on public websites it virtually doesn't exist. Apart from occasional sign-up pages or CAPTCHAs, many business websites fail to build any defenses against unwanted automated access. A single website can often be scraped for four hours straight without anyone noticing. You are unlikely to be mistaken for a DDoS attack unless you are making concurrent requests; in the logs, if anyone is looking at all, you will show up as just an avid visitor or enthusiast.
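The simplest way to keep your traffic looking like a single visitor's is to make requests strictly one at a time with a pause between them. Below is a minimal Python sketch of that idea using only the standard library. The 2-second default delay, the `USER_AGENT` string, and the function names are assumptions for illustration, not values any particular site requires; the fetcher is passed in as a parameter so the pacing logic can be tried without touching the network.

```python
import time
from typing import Callable, Dict, Iterable
from urllib.request import Request, urlopen

# Hypothetical User-Agent string; substitute whatever identifies your client.
USER_AGENT = "Mozilla/5.0 (compatible; example-scraper)"


def fetch(url: str, timeout: float = 10.0) -> str:
    """Download one page and decode it, falling back to UTF-8 if no charset is declared."""
    req = Request(url, headers={"User-Agent": USER_AGENT})
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


def crawl_politely(
    urls: Iterable[str],
    delay: float = 2.0,
    fetcher: Callable[[str], str] = fetch,
) -> Dict[str, str]:
    """Fetch each URL strictly one after another, sleeping `delay` seconds in between."""
    pages: Dict[str, str] = {}
    for i, url in enumerate(urls):
        if i > 0:
            time.sleep(delay)  # no concurrency: at most one request in flight at a time
        pages[url] = fetcher(url)
    return pages
```

Calling `crawl_politely(["https://example.com/page/1", "https://example.com/page/2"])` would fetch those two placeholder URLs roughly two seconds apart, returning a dict of URL to page HTML.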

Another advantage of HTML scraping is that you can access most websites anonymously. A site administrator has only a few ways to track your behavior, which is beneficial if you want to gather data privately. APIs often require you to register to get a key, and that key must be sent along with every request. With simple, straightforward HTTP requests, however, you stay essentially anonymous apart from your cookies and IP address, which can themselves be spoofed.

HTML scraping is universally available: there is no need to wait for a site to open up an API or to contact anyone in the organization. Simply spend some time browsing the website at a leisurely pace until you find the data you want, then work out the basic patterns for accessing it.

Now it is time to put on your professional scraper's hat and dive in. Initially, it may take some time to figure out how the data is structured and how it can be accessed, just as it does when reading up on an API. Unlike APIs, though, websites come with no documentation, so you need to be a little smarter about it and use some clever tricks.

Some of the most commonly used tricks are:

Data Fetching

The first step is data fetching. Begin by finding the endpoints: the URLs that return the data you need. If you are fairly sure what data you want and how it should be structured to match your requirements, you will only need a particular subset of the site, and you can then browse it using the site's own navigation tools.
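One way to speed up the hunt for endpoints is to collect every link on a navigation page and look for the URL patterns that lead to the data. The Python sketch below does this with the standard library's `html.parser` and `urllib.parse.urljoin`; the `LinkCollector` class, the `find_links` helper, and the example URLs are hypothetical, and it assumes the links you care about are ordinary `<a href="...">` tags rather than ones built by JavaScript.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects absolute URLs from every <a href="..."> on a page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links = []  # absolute URLs in the order they appear

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links such as "/search?q=shoes" against the page URL.
                self.links.append(urljoin(self.base_url, value))


def find_links(html: str, base_url: str) -> list:
    """Return all link targets in `html`, resolved against `base_url`."""
    parser = LinkCollector(base_url)
    parser.feed(html)
    parser.close()
    return parser.links
```

Feeding it the HTML of a category page and its URL gives a list of candidate endpoints that you can then group by path or query parameter.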

GET Parameter

Pay attention to the URLs and watch how they change as you click between sections and as those sections divide into subsections. Alternatively, you can go straight to the site's search functionality. Type in some terms and watch the URL again to see how it changes based on what you searched for. You will probably see a GET parameter such as q that changes with your search term. Remove any other GET parameters from the URL that aren't needed, until only the ones required to load the data are left. The query string always begins with a “?”.
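Setting a search parameter and stripping the ones you don't need can be done with the standard library's `urllib.parse`, which also takes care of the leading “?” and the URL encoding. This is a minimal sketch: the parameter names `q`, `page`, `utm_source`, and `sessionid`, as well as the helper names `set_param` and `keep_params`, are hypothetical examples of what you might find on a real site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def set_param(url: str, name: str, value: str) -> str:
    """Return `url` with GET parameter `name` set to `value`, replacing any old value."""
    parts = urlsplit(url)
    params = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True) if k != name]
    params.append((name, value))
    return urlunsplit(parts._replace(query=urlencode(params)))


def keep_params(url: str, wanted: set) -> str:
    """Strip every GET parameter whose name is not in `wanted`."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True) if k in wanted]
    # urlunsplit only adds the leading "?" when a query string remains.
    return urlunsplit(parts._replace(query=urlencode(kept)))
```

For example, `keep_params("https://example.com/search?q=shoes&utm_source=news&sessionid=abc123&page=2", {"q", "page"})` returns `"https://example.com/search?q=shoes&page=2"`. If the trimmed URL still loads the same data, the removed parameters were not needed.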

By now you have probably started to come across the data you want to access, but there may be pagination issues to deal with that keep you from seeing all of the data at once. Like many APIs, websites split results across pages so that a single request doesn't slam the database. Clicking the next page often adds an offset parameter to the URL that determines which slice of the data is shown. Together, these steps will help you succeed at HTML scraping.
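Once you have spotted the offset parameter, walking through every page is a short loop. The Python sketch below assumes the parameter is literally named `offset`, that each full page holds 20 items, and that a short or empty page means you have reached the end; all three are assumptions to check against the actual site. `fetch_items` is a hypothetical callback that downloads one page and returns the items scraped from it.

```python
from typing import Callable, List
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_param(url: str, name: str, value: object) -> str:
    """Return `url` with GET parameter `name` set to `value`, replacing any old value."""
    parts = urlsplit(url)
    params = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True) if k != name]
    params.append((name, str(value)))
    return urlunsplit(parts._replace(query=urlencode(params)))


def paginate(
    url: str,
    fetch_items: Callable[[str], List],
    page_size: int = 20,
    param: str = "offset",
    max_pages: int = 100,
) -> List:
    """Collect items page by page, bumping the offset until a page comes back short."""
    items: List = []
    for page in range(max_pages):  # hard cap guards against endless pagination
        page_url = with_param(url, param, page * page_size)
        batch = fetch_items(page_url)
        items.extend(batch)
        if len(batch) < page_size:  # a short or empty page means no more results
            break
    return items
```

With a search URL such as `https://example.com/search?q=shoes`, the loop requests `...&offset=0`, `...&offset=20`, `...&offset=40`, and so on, stopping at the first page with fewer than 20 items.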