In the ever-expanding digital universe, data reigns supreme. At the heart of this data-centric world lies a crucial process known as data extraction. Data extraction techniques involve retrieving data from various sources – be it a database, a website, or a cloud storage system. This extraction process is foundational in transforming raw data into valuable insights, propelling businesses and organizations forward in an increasingly competitive landscape.

The significance of data extraction cannot be overstated in today’s data-driven era. It serves as the first step in the data processing pipeline, enabling organizations to gather and consolidate data from disparate sources. This aggregated data becomes the bedrock for informed decision-making, trend analysis, and strategic planning. From enhancing customer experiences to driving operational efficiencies, the applications of data extraction tools span a vast array of industries. Systematically reviewing the available tools and services helps businesses choose the right fit.

Our post delves into the various techniques employed to extract data, the tools that facilitate this process, and the diverse use cases where data extraction plays a pivotal role. Whether you are a data enthusiast, a business professional, or someone curious about the mechanics of data extraction, this page aims to provide a thorough and insightful overview of this vital extraction process. Join us on this journey to uncover how data extraction is reshaping the way we understand and utilize information in our digital world.

Data Extraction Definition

Data extraction is the process of retrieving data from various data sources, which may include databases, websites, cloud services, and numerous other repositories. It is a critical first step in the broader data processing cycle, which encompasses data transformation and data loading. In essence, data extraction lays the groundwork for data analysis and business intelligence activities. The process can be automated or manual, depending on the complexity of the data and the source from which it is being extracted. A systematic review of the available data extraction tools is a prerequisite for the best outcome.

At its core, data extraction is about converting data into a usable format for further analysis and processing. It involves identifying and collecting data from relevant sources, which is then typically moved to a data warehouse or a similar centralized repository. In the context of data analysis, extraction allows for the consolidation of disparate data sources, making it possible to uncover hidden insights, identify trends, and make data-driven decisions.

Types of Data Extraction

Data extraction methodologies vary based on the nature of the data source and the type of data being extracted. The three primary types of data extraction are:

Structured Data Extraction:

- This involves extracting data from structured sources like databases or spreadsheets.

- Structured data is highly organized and easily searchable, often stored in rows and columns with clear definitions.

- Examples include SQL databases, Excel files, and CSV files.
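
As a rough illustration of structured extraction, the sketch below parses CSV text with Python's standard `csv` module. The sample data and the column names (`order_id`, `customer`, `amount`) are assumptions made up for this example.

```python
import csv
import io

# Hypothetical sample data standing in for a CSV export.
SAMPLE_CSV = """order_id,customer,amount
1001,Acme,250.00
1002,Globex,99.50
1003,Acme,410.25
"""

def extract_rows(csv_text):
    """Parse CSV text into a list of dicts keyed by the header row."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return list(reader)

rows = extract_rows(SAMPLE_CSV)

# Because the columns are clearly defined, filtering and aggregating is direct.
acme_total = sum(float(r["amount"]) for r in rows if r["customer"] == "Acme")
print(len(rows), acme_total)
```

Since every row shares the same columns, no interpretation step is needed before the data can be queried.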

Unstructured Data Extraction:

- Unstructured data extraction deals with data that lacks a predefined format or organization.

- This type of data is usually text-heavy and includes information like emails, social media posts, or documents.

- Extracting unstructured data often requires more complex processes, like natural language processing (NLP) or image recognition.
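
Full NLP pipelines are beyond a short example, so the sketch below swaps in a much simpler technique: pattern matching with Python's `re` module to pull email-like strings out of free text. The sample message and the deliberately loose pattern are assumptions for illustration only.

```python
import re

# Hypothetical free-text message. The pattern is deliberately simple and
# will not match every valid email address.
TEXT = """Hi team, please contact jane.doe@example.com about the invoice.
You can also copy billing@example.org. Thanks!"""

EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

def extract_emails(text):
    """Return email-like strings in order of appearance, without duplicates."""
    seen = []
    for match in EMAIL_PATTERN.findall(text):
        if match not in seen:
            seen.append(match)
    return seen

emails = extract_emails(TEXT)
print(emails)
```

Pattern matching like this only works when the target has a recognizable shape. Extracting meaning, sentiment, or entities from text generally calls for NLP tooling instead.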

Semi-Structured Data Extraction:

- Semi-structured data extraction is a blend of structured and unstructured data extraction methods.

- This type of data is not as organized as structured data but contains tags or markers to separate semantic elements and enforce hierarchies of records and fields.

- Examples include JSON, XML files, and some web pages.
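
To make the idea of tags and hierarchies concrete, here is a minimal sketch that flattens a nested JSON document into rows using Python's standard `json` module. The payload and its field names (`orders`, `customer`, `items`) are hypothetical.

```python
import json

# Hypothetical nested JSON payload; field names are illustrative only.
PAYLOAD = """
{
  "orders": [
    {"id": 1, "customer": {"name": "Acme"},
     "items": [{"sku": "A1", "qty": 2}, {"sku": "B7", "qty": 1}]},
    {"id": 2, "customer": {"name": "Globex"},
     "items": [{"sku": "A1", "qty": 5}]}
  ]
}
"""

def flatten_orders(payload_text):
    """Turn nested order records into flat rows, one per line item."""
    data = json.loads(payload_text)
    rows = []
    for order in data.get("orders", []):
        customer = order.get("customer", {}).get("name")
        for item in order.get("items", []):
            rows.append({
                "order_id": order["id"],
                "customer": customer,
                "sku": item["sku"],
                "qty": item["qty"],
            })
    return rows

flat_rows = flatten_orders(PAYLOAD)
print(flat_rows)
```

The keys act as the markers that separate fields, while the nesting carries the hierarchy that a flat table has to spell out row by row.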

Understanding these different types of data extraction is crucial for choosing the right method and tools. The choice depends on the nature of the data source and the intended use of the extracted data, with each type posing its unique challenges and requiring specific strategies for effective extraction. A systematic review of data extraction software is a prerequisite for the best outcome.

Data Extraction Techniques

Data extraction techniques vary in complexity and scope, depending on the source of data and the specific needs of a project. Understanding these techniques in data science is key to efficiently harnessing and leveraging data.

Manual vs Automated Data Extraction:

Manual Data Extraction:

- Involves human intervention to retrieve data. This might include copying data from documents, websites, or other sources manually.

- It is time-consuming and prone to errors, so it is best suited to small-scale or one-time projects where automated extraction is not feasible.

- Manual extraction lacks scalability and is often less efficient.

Automated Data Extraction:

- Utilizes data extraction software to automatically extract data, minimizing human intervention.

- More efficient, accurate, and scalable compared to manual extraction.

- Ideal for large datasets and ongoing data extraction needs.

- Automated extraction techniques include web scraping, API extraction, and ETL processes.

Web Scraping:

- Web scraping involves extracting data from websites.

- It automates the process of collecting structured web data, making it faster and more efficient than manual extraction.

- Web scraping is used for various purposes, including price monitoring, market research, and sentiment analysis.

- This technique requires consideration of legal and ethical issues, such as respecting website terms of service and copyright laws.
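
The sketch below shows the core of a scraper: turning HTML markup into structured records. To stay self-contained it uses Python's built-in `html.parser` rather than a dedicated scraping library, and it parses a hard-coded snippet instead of downloading a page. The markup and the class names (`product`, `name`, `price`) are assumptions for this example.

```python
from html.parser import HTMLParser

# Hypothetical HTML snippet standing in for a downloaded product page.
PAGE = """
<ul>
  <li class="product"><span class="name">Widget</span> <span class="price">$9.99</span></li>
  <li class="product"><span class="name">Gadget</span> <span class="price">$24.50</span></li>
</ul>
"""

class PriceParser(HTMLParser):
    """Collect (name, price) pairs from spans with class 'name' and 'price'."""

    def __init__(self):
        super().__init__()
        self._field = None    # which span we are currently inside, if any
        self._current = {}    # fields gathered for the current <li>
        self.products = []

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li":
            # Only keep items where both fields were found.
            if {"name", "price"} <= self._current.keys():
                price = float(self._current["price"].lstrip("$"))
                self.products.append((self._current["name"], price))
            self._current = {}

parser = PriceParser()
parser.feed(PAGE)
products = parser.products
print(products)
```

A real scraper would also need to fetch pages politely and cope with markup changes, which is where the legal and ethical points above come into play.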

API Extraction:

- API (Application Programming Interface) extraction uses APIs provided by data holders to access their data.

- This method is structured and efficient, and because it relies on an interface the provider has published, it typically stays within the provider’s terms of service.

- API extraction is commonly used to retrieve data from social media platforms, financial systems, and other online services.

- It can provide real-time, up-to-date data access, subject to the provider’s rate limits, and is well suited to dynamic data sources.
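
As a sketch of API extraction, the code below walks a paginated endpoint until it runs out of records. The endpoint URL, the `page` and `per_page` query parameters, and the list-shaped JSON response are all assumptions, since real APIs differ in how they paginate and authenticate. The demonstration uses a stand-in fetch function so that it runs without network access.

```python
import json
from urllib.parse import parse_qs, urlencode, urlparse
from urllib.request import urlopen

def http_get_json(url):
    """Fetch a URL and decode the JSON body (standard library only)."""
    with urlopen(url, timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))

def extract_all(base_url, fetch=http_get_json, page_size=100):
    """Walk a paginated endpoint until it returns an empty page.

    Assumes a hypothetical API that accepts `page` and `per_page`
    query parameters and returns a JSON list.
    """
    records, page = [], 1
    while True:
        query = urlencode({"page": page, "per_page": page_size})
        batch = fetch(f"{base_url}?{query}")
        if not batch:
            return records
        records.extend(batch)
        page += 1

# Offline demonstration: a stand-in for a live API with two pages of data.
def fake_fetch(url):
    page = int(parse_qs(urlparse(url).query)["page"][0])
    pages = {1: [{"id": 1}, {"id": 2}], 2: [{"id": 3}]}
    return pages.get(page, [])

api_records = extract_all("https://api.example.com/items", fetch=fake_fetch, page_size=2)
print(api_records)
```

Passing the fetch function as a parameter keeps the pagination logic testable and makes it easy to add authentication headers or rate-limit handling in one place.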

Database Extraction:

- Involves extracting data from database management systems using queries.

- Commonly used with relational (SQL) databases, NoSQL databases, and cloud databases.

- Database extraction requires knowledge of query languages like SQL or specialized database tools.
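
A minimal sketch of query-based extraction, using Python's built-in `sqlite3` module with an in-memory database. The `customers` table and its contents are made up for this example.

```python
import sqlite3

# Build a small in-memory database to query (hypothetical schema and data).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO customers (name, country) VALUES (?, ?)",
    [("Acme", "US"), ("Globex", "DE"), ("Initech", "US")],
)

def extract_by_country(connection, country):
    """Extract matching rows with a parameterized SQL query."""
    cursor = connection.execute(
        "SELECT id, name FROM customers WHERE country = ? ORDER BY id",
        (country,),
    )
    return cursor.fetchall()

us_customers = extract_by_country(conn, "US")
print(us_customers)
```

The `?` placeholder passes the filter value separately from the SQL text, which avoids SQL injection when the value comes from user input.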

ETL Processes:

- ETL stands for Extract, Transform, Load.

- It’s a three-step process where data is extracted from various sources, transformed into a suitable format, and then loaded into a data warehouse or other destination.

- The transform phase includes cleansing, enriching, and reformatting the data.

- ETL is essential in data integration strategies, ensuring data is actionable and valuable for business intelligence and analytics.
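
The three steps can be sketched as three small functions. This is an illustrative toy pipeline under stated assumptions, not a production design: the CSV input, the cleansing rules, and the `customers` target table are all invented, and an in-memory SQLite database stands in for the data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with messy names and a missing value.
RAW = """name,signup_date,revenue
 alice ,2024-01-05,100.5
BOB,2024-02-11,
carol,2024-03-02,75
"""

def extract(text):
    """Extract: read the raw CSV into dict rows."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: drop rows without revenue, tidy names, convert types."""
    cleaned = []
    for row in rows:
        if not row["revenue"]:
            continue  # cleansing: skip incomplete records
        name = row["name"].strip().title()
        cleaned.append((name, row["signup_date"], float(row["revenue"])))
    return cleaned

def load(rows, connection):
    """Load: write the cleaned rows into the target table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS customers (name TEXT, signup_date TEXT, revenue REAL)"
    )
    connection.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    connection.commit()

warehouse = sqlite3.connect(":memory:")  # stand-in for a data warehouse
load(transform(extract(RAW)), warehouse)
loaded = warehouse.execute(
    "SELECT name, revenue FROM customers ORDER BY name"
).fetchall()
print(loaded)
```

Keeping each phase in its own function makes it possible to test the transform rules without touching the source or the destination.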

Each of these techniques serves a specific purpose in data extraction and can be chosen based on the data requirements, scalability needs, and complexity of the data sources.

Tools for Data Extraction

Data extraction tools are specialized software solutions designed to facilitate the process of retrieving data from various sources. These tools vary in complexity and functionality, from simple web scraping utilities to comprehensive platforms capable of handling large-scale, automated data extractions. The primary goal of these tools is to streamline the data extraction process, making it more efficient, accurate, and manageable, especially when dealing with large volumes of data or complex data structures.

Criteria for Choosing Tools

When selecting a data extraction tool, consider the following factors:

- Data Requirements: The complexity and volume of data you need to extract.

- Ease of Use: Whether the tool requires technical expertise or is user-friendly for non-developers.

- Scalability: The tool’s ability to handle increasing amounts of data.

- Cost: Budget considerations and the tool’s pricing model.

- Integration Capabilities: How well the tool integrates with other systems and workflows.

- Compliance and Security: Ensuring the tool adheres to legal standards and data privacy regulations.

- Support and Community: Availability of customer support and a user community for guidance.

Choosing the right tool depends on balancing these criteria with your specific data extraction needs and the strategic objectives of your project.

Use Cases of Data Extraction

Market Research:

- Data extraction is pivotal in market research for gathering vast amounts of information from different sources like social media, forums, and competitor websites.

- It helps in identifying market trends, customer preferences, and industry benchmarks.

- By analyzing the extracted data, businesses can make informed decisions on product development, marketing strategies, and target market identification.

Competitive Analysis:

- In competitive analysis, data extraction is used to monitor competitors’ online presence, pricing strategies, and customer engagement.

- This includes extracting data from competitors’ websites, customer reviews, and social media activity.

- The valuable insights gained enable businesses to stay ahead of the curve, adapting to market changes and competitor strategies effectively.

Customer Insights:

- Data extraction aids in understanding customer behavior by gathering data from various customer touchpoints like e-commerce platforms, social media, and customer feedback forms.

- Analyzing this data provides valuable insights into customer needs, satisfaction levels, and purchasing patterns.

- This information is crucial for tailoring products, services, and marketing campaigns to meet customer expectations better.

Financial Analysis:

- In financial analysis, data extraction is used to gather information from financial reports, stock market trends, and economic indicators.

- This data is crucial for performing financial forecasting, risk assessment, and investment analysis.

- By extracting and analyzing financial data, companies can make better financial decisions, assess market conditions, and predict future trends.

In each of these use cases, data extraction plays a fundamental role in collecting and preparing data for deeper analysis and decision-making. The ability to efficiently and accurately extract relevant data is a key factor in gaining actionable insights and maintaining a competitive edge in various industries.

Best Practices in Data Extraction

Ensuring Data Quality:

- Importance of Accuracy and Integrity: The value of extracted data hinges on its accuracy and integrity. High data quality is crucial for reliable analysis and informed decision-making.

- Verification and Validation: Implement processes to verify and validate extracted data. This includes consistency checks, data cleaning, and using reliable data sources.

- Regular Updates: Data should be regularly updated to maintain its relevance and accuracy, especially in fast-changing environments.

- Avoiding Data Bias: Be mindful of biases in data collection and extraction processes. Ensuring a diverse range of data sources can mitigate biases and enhance the quality of insights.
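
Verification and validation can start with simple per-record checks. The sketch below flags malformed fields and duplicates. The field names (`email`, `date`, `amount`) and the specific rules are assumptions chosen for illustration, and a real pipeline would tailor them to its own data.

```python
from datetime import datetime

def validate_record(record):
    """Return a list of problems found in one extracted record (empty if clean)."""
    problems = []
    email = record.get("email")
    if not email or "@" not in email:
        problems.append("invalid email")
    try:
        datetime.strptime(record.get("date", ""), "%Y-%m-%d")
    except (TypeError, ValueError):
        problems.append("invalid date")
    try:
        if float(record.get("amount", "")) < 0:
            problems.append("negative amount")
    except (TypeError, ValueError):
        problems.append("non-numeric amount")
    return problems

def split_valid(records):
    """Separate clean records from rejected ones, treating repeat emails as duplicates."""
    seen, valid, rejected = set(), [], []
    for record in records:
        problems = validate_record(record)
        if not problems and record["email"] in seen:
            problems.append("duplicate")
        if problems:
            rejected.append((record, problems))
        else:
            seen.add(record["email"])
            valid.append(record)
    return valid, rejected

# Hypothetical extracted records: one clean, one duplicate, one malformed.
sample = [
    {"email": "a@example.com", "date": "2024-05-01", "amount": "10.0"},
    {"email": "a@example.com", "date": "2024-05-02", "amount": "12.0"},
    {"email": "bad", "date": "2024-13-01", "amount": "x"},
]
valid_records, rejected_records = split_valid(sample)
print(len(valid_records), [problems for _, problems in rejected_records])
```

Keeping the rejected records alongside the reasons, rather than silently dropping them, makes it easier to trace quality problems back to their source.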

Ethical Considerations:

- Compliance with Laws and Regulations: Adhere to legal frameworks governing data collection, such as GDPR in Europe or CCPA in California. This includes respecting copyright laws and terms of service of websites.

- Respecting Privacy: Ensure that personal data is extracted and used in a manner that respects individual privacy rights. Obtain necessary consents where required.

- Transparency and Accountability: Maintain transparency in data extraction practices. Be accountable for the methods used and the handling of the extracted data.

Data Security:

- Protecting Extracted Data: Data extracted, especially personal and sensitive data, must be securely stored and transmitted. Implement robust security measures to prevent unauthorized access, breaches, and data loss.

- Encryption and Access Control: Use encryption for data storage and transmission. Implement strict access controls to ensure that only authorized personnel can access sensitive data.

- Regular Security Audits: Conduct regular security audits and updates to identify vulnerabilities and enhance data protection measures.

- Data Anonymization: Where possible, anonymize sensitive data to protect individual identities. This is particularly important in fields like healthcare and finance.
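
One common building block is replacing direct identifiers with keyed hashes. Strictly speaking this is pseudonymization rather than full anonymization, since anyone holding the key can recompute the mapping. The sketch below uses Python's `hmac` and `hashlib` modules, with a placeholder key and hypothetical field names.

```python
import hashlib
import hmac

# Assumption: in practice the key would come from a secrets manager,
# not from source code.
SECRET_KEY = b"replace-with-a-secret-key"

def pseudonymize(value, key=SECRET_KEY):
    """Replace an identifier with a keyed SHA-256 digest.

    The same input always maps to the same digest, so records can still
    be joined, but the original value is not stored.
    """
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()

def scrub(record, sensitive_fields=("email", "name")):
    """Return a copy of the record with sensitive fields pseudonymized."""
    return {
        field: pseudonymize(value) if field in sensitive_fields else value
        for field, value in record.items()
    }

record = {"name": "Jane Doe", "email": "Jane.Doe@example.com", "plan": "pro"}
scrubbed = scrub(record)
print(scrubbed["plan"], len(scrubbed["email"]))
```

Whether this is sufficient depends on the regulation and the data involved. Indirect identifiers such as postcodes or birth dates can still allow re-identification and may need separate treatment.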

Adhering to these best practices not only ensures the quality and reliability of the data but also builds trust with stakeholders and protects the reputation of the entity conducting the extraction.

In Summary

In today’s fast-paced digital world, data is more than just information; it’s a powerful asset that can drive innovation, inform strategic decisions, and offer competitive advantages. With that in mind, we’ve explored the multifaceted realm of data extraction, covering its techniques, tools, and diverse use cases across areas like market research, competitive analysis, customer insights, and financial analysis.

A quality data extraction solution is pivotal in transforming raw data into actionable insights. From ensuring data accuracy and integrity to adhering to ethical considerations and maintaining robust data security, the best practices in data extraction set the foundation for reliable and effective data utilization.

PromptCloud: Your Partner in Data Extraction Excellence

Exploring data extraction in depth makes clear how important it is to select a reliable partner to guide you through the processes involved. This is precisely where PromptCloud shines. Known for our customized data extraction solutions, we are dedicated to meeting your unique data requirements with precision. Our services are built to handle even the most complex and extensive web scraping assignments, delivering high-quality, well-structured data that serves as the foundation for informed, strategic business decisions.

Whether you’re looking to gain in-depth market insights, monitor your competitors, understand customer behavior, or manage vast amounts of healthcare data, PromptCloud is equipped to transform your data extraction challenges into opportunities.

Ready to unlock the full potential of data for your business? Connect with PromptCloud today. Our team of experts is poised to understand your requirements and provide a solution that aligns perfectly with your business goals. Harness the power of data with PromptCloud and turn information into your strategic asset. Contact us at sales@promptcloud.com

Frequently Asked Questions

What is a data extraction tool?

A data extraction tool is software or a service designed to retrieve data from various sources, such as databases, websites, PDFs, and other digital documents, and convert it into a usable format. These tools are critical in the fields of data analysis, business intelligence, and web scraping, as they help automate the process of collecting data, reducing the need for manual data entry and ensuring more accurate and efficient data retrieval. Their key capabilities include:

- Automation: They automate the process of extracting data from structured or unstructured sources, saving time and reducing errors associated with manual extraction.

- Data Transformation: These tools often have the capability to transform extracted data into a format that is more suitable for analysis. For example, they can normalize unstructured data into structured data that can be easily queried and analyzed.

- Integration: Data extraction tools typically offer features that allow the extracted data to be directly fed into data warehouses, databases, or business intelligence tools for immediate use.

- Scalability: They can handle large volumes of data efficiently, making them ideal for businesses that need to process substantial amounts of information regularly.

What is the best data extraction tool?

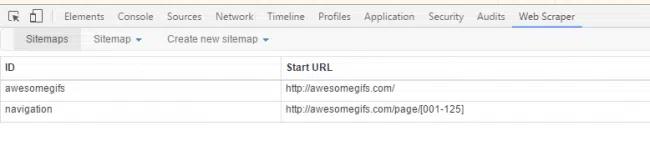

Choosing the “best” data extraction tool often depends on your specific needs, such as the type of data you’re working with, the complexity of the tasks, the volume of data, and your budget. Here are some of the top data extraction tools available, each with its own strengths that might make it the best fit for different scenarios:

1. Octoparse

- Strengths: Octoparse is a user-friendly, no-code web scraping tool that makes it easy to extract data from websites. It is particularly beneficial for individuals or businesses that lack a programming background but require robust data extraction capabilities. It also offers both cloud-based and local extraction options and handles dynamic websites that use AJAX and JavaScript.

- Best For: Non-technical users needing detailed web scraping capabilities without coding.

2. Import.io

- Strengths: Import.io specializes in converting web data into structured data. It is well-suited for large-scale web data extraction and offers a comprehensive suite of data integration, transformation, and automation tools.

- Best For: Businesses that need to extract and analyze large volumes of data from various web sources.

3. UiPath

- Strengths: Primarily known for its Robotic Process Automation (RPA) capabilities, UiPath excels at automating data extraction from websites and various business applications. It integrates well with enterprise systems and is a good choice for automating complex workflows.

- Best For: Enterprises that want to automate across various systems and require extensive workflow integration.

4. ParseHub

- Strengths: ParseHub is a powerful tool for extracting data from websites that use JavaScript, AJAX, cookies, etc. It offers a visual approach to data extraction, making it accessible to users without extensive coding knowledge.

- Best For: Users who need to scrape complex and dynamically changing websites.

5. Scrapy

- Strengths: As an open-source and fully customizable framework, Scrapy allows developers to write their own crawl and scraping code. It handles large amounts of data and complex data extraction, making it suitable for developers looking for a highly flexible and scalable tool.

- Best For: Programmers and developers who need a customizable and powerful scraping solution.

6. Beautiful Soup

- Strengths: This Python library is great for quick turnaround projects that require scraping of HTML and XML documents. It is favored for its ease of use and the ability to work with other Python libraries for data manipulation and more.

- Best For: Data scientists and developers working in Python who need a straightforward tool for occasional scraping tasks.

Is Excel a data extraction tool?

Yes, Excel can be considered a data extraction tool, although it is primarily known as a spreadsheet application. Excel offers several features that allow users to extract and manipulate data effectively for various purposes. Here’s how Excel functions as a data extraction tool:

1. Importing Data

Excel can import data from various sources, including other spreadsheets, CSV files, text files, and web pages. It can also connect to external databases such as SQL Server and MySQL, either through ODBC (Open Database Connectivity) or through its dedicated database connectors.

2. Data Queries

Using Power Query (found under Get & Transform Data in recent Excel versions), users can perform sophisticated data manipulation and extraction tasks. Power Query allows you to transform, merge, and enhance data from various sources before loading it into your spreadsheet for analysis.

3. Data Connections

Excel allows you to establish live connections to external data sources, enabling you to refresh data in your spreadsheet as it changes at the source. This feature is particularly useful for keeping your data up-to-date without manually re-importing it.

4. Automating Data Tasks

With Excel’s VBA (Visual Basic for Applications) scripting, users can automate the extraction and processing of data, making Excel a flexible tool for repetitive tasks and complex data operations.

Limitations of Excel as a Data Extraction Tool:

While Excel is powerful for many data extraction and manipulation tasks, it has some limitations compared to specialized data extraction tools:

- Scalability: Excel is not ideal for handling extremely large datasets, as performance can significantly degrade with very large files or complex operations.

- Real-time Data Processing: Although Excel can connect to live data sources, it isn’t primarily designed for real-time data processing and can be less efficient than tools specifically built for that purpose.

- Complex Web Scraping: Excel can pull data from web pages, but it is limited in its ability to handle complex web scraping needs, especially when dealing with dynamic content that requires interaction such as clicking or entering data.

What are the three data extraction techniques?

Data extraction is a fundamental process in data management and analysis, involving the retrieval of data from various sources. There are several techniques for data extraction, but here are three primary methods commonly used across different industries:

1. Batch Data Extraction

Batch data extraction involves collecting data in batches at scheduled intervals. This method is used when real-time data is not necessary but periodic updates are needed. Data is often extracted at off-peak times to minimize impact on system performance. This technique is ideal for scenarios where it’s acceptable to work with data that might not be up-to-the-minute, such as daily sales reports or monthly inventory checks.

Advantages:

- Reduces the load on the source system during peak hours.

- Simplifies the management of data flows by dealing with large volumes of data at known times.

Disadvantages:

- Data is not real-time and may be outdated depending on the frequency of extraction.

- Scheduling must be managed carefully to avoid conflicts or system overloads.
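
As a small sketch of the batch idea, the generator below pulls a query result in fixed-size chunks using `fetchmany` from Python's `sqlite3` module. The `sales` table is hypothetical, and the scheduling itself (for example a nightly job that calls this function) is assumed to live outside the code.

```python
import sqlite3

def extract_in_batches(connection, query, batch_size=1000):
    """Yield lists of rows so the full result set never sits in memory at once."""
    cursor = connection.execute(query)
    while True:
        batch = cursor.fetchmany(batch_size)
        if not batch:
            break
        yield batch

# Hypothetical source table with seven rows.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE sales (id INTEGER PRIMARY KEY, amount REAL)")
source.executemany(
    "INSERT INTO sales (amount) VALUES (?)",
    [(float(i),) for i in range(1, 8)],
)

batch_sizes = [
    len(batch)
    for batch in extract_in_batches(
        source, "SELECT id, amount FROM sales ORDER BY id", batch_size=3
    )
]
print(batch_sizes)
```

Processing in chunks keeps memory use predictable when the scheduled run has to move a large volume of data.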

2. Real-Time Data Extraction

Real-time data extraction, as the name suggests, involves extracting data as soon as it becomes available. This method is crucial for applications where immediate data is essential, such as in financial trading, real-time analytics, or monitoring systems. Real-time extraction ensures that the data used for processing and decision-making is current.

Advantages:

- Provides up-to-date information, allowing for timely decision-making.

- Enables immediate response to operational events, enhancing dynamic business processes.

Disadvantages:

- More complex and costly to implement and maintain.

- Can put a significant load on the source system, affecting performance.

3. Incremental Data Extraction

Incremental data extraction involves extracting only data that has changed since the last extraction. Changes can be tracked through timestamps, logs, or trigger-based systems. This technique is efficient for managing data in large databases where continually extracting all data (as in full extraction) is impractical due to volume and resource constraints.

Advantages:

- Reduces the volume of data transported, improving performance and reducing resource usage.

- Ensures that the data warehouse or destination is regularly updated without the need for full reloads.

Disadvantages:

- Requires a reliable method of tracking changes in the source data, which might not always be available.

- More complex to implement than a full extraction, as it requires mechanisms to detect and extract only the changes.
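
A minimal sketch of timestamp-based incremental extraction: each run asks only for rows changed after the previous run's high-water mark. It assumes the source table has a reliable `updated_at` column stored as ISO 8601 text, and the `products` table and its rows are made up for this example.

```python
import sqlite3

def extract_changes(connection, last_watermark):
    """Return rows changed after `last_watermark`, plus the new watermark."""
    rows = connection.execute(
        "SELECT id, name, updated_at FROM products "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # If nothing changed, keep the old watermark for the next run.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark

# Hypothetical source table; ISO 8601 strings sort in chronological order.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)")
db.executemany(
    "INSERT INTO products VALUES (?, ?, ?)",
    [
        (1, "Widget", "2024-01-01T09:00:00"),
        (2, "Gadget", "2024-01-02T10:30:00"),
        (3, "Gizmo", "2024-01-03T08:15:00"),
    ],
)

first_run, watermark = extract_changes(db, "2024-01-01T12:00:00")
second_run, watermark_after = extract_changes(db, watermark)
print([row[0] for row in first_run], watermark, second_run)
```

The watermark has to be stored between runs. If the source lacks a trustworthy change timestamp, logs or triggers are the usual alternatives, as noted above.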