In the fast-paced world of information, businesses are diving headfirst into the realm of data-driven insights to shape their strategic moves. Let’s explore the captivating universe of data scraping—a crafty process that pulls information from websites, laying the groundwork for essential data gathering.

Come along as we navigate the intricacies of data scraping, revealing a variety of tools, advanced techniques, and ethical considerations that add depth and meaning to this game-changing practice.

Image Source: https://www.collidu.com/

Data Scraping Tools

Embarking on a data scraping adventure requires acquainting oneself with a variety of tools, each with its own quirks and applications:

- Web scraping software: Dive into programs like Octoparse or Import.io, offering users, regardless of technical expertise, the power to extract data effortlessly.

- Programming languages: The dynamic duo of Python and R, coupled with libraries like Beautiful Soup (Python) or rvest (R), takes center stage for crafting custom scraping scripts.

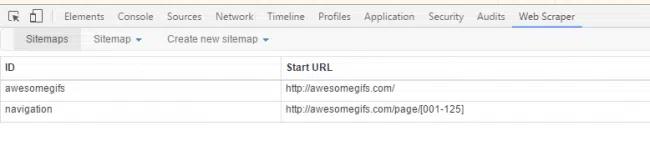

- Browser extensions: Tools such as Web Scraper or Data Miner provide nifty in-browser options for those quick scraping tasks.

- APIs: Some websites generously offer APIs, streamlining structured data retrieval and reducing reliance on traditional scraping techniques.

- Headless browsers: Meet Puppeteer and Selenium, automation tools that drive a real browser, optionally without a visible window, and simulate user interaction to extract dynamically rendered content.

Each tool boasts unique advantages and learning curves, making the selection process a strategic dance that aligns with project requirements and the user’s technical prowess.
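To make the API option a little more concrete, here is a minimal Python sketch that uses only the standard library. The endpoint `https://api.example.com/products`, the query parameters, the user-agent value, and the `items` / `name` / `price` fields are all hypothetical; a real API will document its own URL and response shape. The response is a canned string, so nothing is actually sent over the network.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint and user-agent, for illustration only.
API_URL = "https://api.example.com/products"
USER_AGENT = "example-scraper/0.1 (contact@example.com)"


def build_request(base_url: str, params: dict) -> Request:
    """Build a GET request with query parameters and an identifying user-agent."""
    url = f"{base_url}?{urlencode(params)}"
    return Request(url, headers={"User-Agent": USER_AGENT, "Accept": "application/json"})


def parse_products(payload: str) -> list[dict]:
    """Pull name/price pairs out of a JSON response body (assumed shape)."""
    data = json.loads(payload)
    return [
        {"name": item["name"], "price": float(item["price"])}
        for item in data.get("items", [])
    ]


# Canned body standing in for urllib.request.urlopen(request).read().
sample_payload = (
    '{"items": [{"name": "Widget", "price": "9.99"},'
    ' {"name": "Gadget", "price": 24.5}]}'
)

request = build_request(API_URL, {"category": "tools", "page": 1})
products = parse_products(sample_payload)
print(request.full_url)
print(products)
```

Because the API returns structured JSON, there is no HTML to untangle, which is why an API is usually the first thing to look for before writing a scraper.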

Mastering Data Scraping Techniques

Efficient data scraping is an art that involves several techniques ensuring a smooth collection process from diverse sources. These techniques include:

- Automated Web Scraping: Unleash bots or web crawlers to gracefully gather information from websites.

- API Scraping: Harness the power of Application Programming Interfaces (APIs) to extract data in a structured format.

- HTML Parsing: Navigate the web page landscape by analyzing the HTML code to extract the necessary data.

- Data Point Extraction: Precision matters—identify and extract specific data points based on predetermined parameters and keywords.

- CAPTCHA Solving: Use solving tools or services to get past the CAPTCHA challenges that websites set up to block automated scraping.

- Proxy Servers: Don different IP addresses to dodge IP bans and rate limiting while scraping copious amounts of data.

Applied with care, these techniques make data extraction targeted and efficient while staying within the legal boundaries of web scraping.
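As a small illustration of HTML parsing and data point extraction, the sketch below uses Python's built-in `html.parser` module. The HTML fragment, the `name` and `price` class names, and the `SpanTextExtractor` helper are made up for this example; a real page will need its own selectors, and a library such as Beautiful Soup would typically make this shorter.

```python
from html.parser import HTMLParser

# Hypothetical page fragment; real pages use different tags and classes.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span> <span class="price">$9.99</span></li>
  <li class="product"><span class="name">Gadget</span> <span class="price">$24.50</span></li>
</ul>
"""


class SpanTextExtractor(HTMLParser):
    """Collect the text inside every <span> whose class matches a target."""

    def __init__(self, target_class: str):
        super().__init__()
        self.target_class = target_class
        self._capturing = False
        self.values: list[str] = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "span" and dict(attrs).get("class") == self.target_class:
            self._capturing = True
            self.values.append("")

    def handle_data(self, data):
        # Append text only while inside a matching <span>.
        if self._capturing:
            self.values[-1] += data

    def handle_endtag(self, tag):
        if tag == "span":
            self._capturing = False


def extract(html: str, target_class: str) -> list[str]:
    parser = SpanTextExtractor(target_class)
    parser.feed(html)
    parser.close()
    return [value.strip() for value in parser.values]


names = extract(SAMPLE_HTML, "name")
prices = [float(p.lstrip("$")) for p in extract(SAMPLE_HTML, "price")]
print(dict(zip(names, prices)))
```

The parser walks the markup once and keeps only the predetermined data points (names and prices), ignoring everything else on the page.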

Best Practices for Quality Results

To achieve top-notch results in data scraping, adhere to these best practices:

- Respect robots.txt: Play by the rules outlined in each website's robots.txt file and access only the paths it allows.

- User-Agent String: Send a user-agent string that honestly identifies your scraper, so web servers know who is making the requests.

- Throttling Requests: Implement pauses between requests to lighten the server load and reduce the risk of the dreaded IP block.

- Avoiding Legal Issues: Navigate the landscape of legal standards, data privacy laws, and website terms of use with finesse.

- Error Handling: Design robust error handling to navigate unexpected website structure changes or server hiccups.

- Data Quality Checks: Regularly comb through and clean the scraped data for accuracy and integrity.

- Efficient Coding: Employ efficient coding practices to create scalable, maintainable scrapers.

- Diverse Data Sources: Enhance the richness and reliability of your dataset by collecting data from multiple sources.
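Several of these practices can be sketched together in Python with the standard library alone: checking robots.txt, pausing between requests, and retrying after transient errors. Everything named here is an assumption made for illustration: the robots.txt content, the `example-scraper/0.1` user-agent, the `example.com` URLs, and the `Throttle` and `fetch_with_retry` helpers. The loop only prints what it would fetch, so no network access is involved.

```python
import time
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-scraper/0.1"  # hypothetical identifier

# Hypothetical robots.txt; normally loaded from the site with
# RobotFileParser.set_url(...) followed by .read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


class Throttle:
    """Enforce a minimum delay between consecutive requests."""

    def __init__(self, delay_seconds, clock=time.monotonic, sleep=time.sleep):
        self.delay = delay_seconds
        self._clock = clock
        self._sleep = sleep
        self._last = None

    def wait(self) -> float:
        """Sleep if the previous request was too recent; return time slept."""
        slept = 0.0
        if self._last is not None:
            remaining = self.delay - (self._clock() - self._last)
            if remaining > 0:
                self._sleep(remaining)
                slept = remaining
        self._last = self._clock()
        return slept


def fetch_with_retry(fetch, url: str, attempts: int = 3):
    """Call fetch(url), retrying on OSError (urllib's URLError is a subclass)."""
    last_error = None
    for _ in range(attempts):
        try:
            return fetch(url)
        except OSError as error:
            last_error = error
    raise last_error


rules = load_rules(ROBOTS_TXT)
throttle = Throttle(rules.crawl_delay(USER_AGENT) or 1.0)
for path in ["/products", "/private/admin"]:
    url = "https://example.com" + path
    if rules.can_fetch(USER_AGENT, url):
        throttle.wait()
        print("would fetch", url)
    else:
        print("skipping (disallowed by robots.txt)", url)
```

Passing the clock and sleep functions into `Throttle` is a design choice that keeps the delay logic easy to test without actually waiting; in a real scraper the `fetch` argument would wrap something like `urllib.request.urlopen`.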

Ethical Considerations in the World of Data Scraping

While data scraping unveils invaluable insights, it must be approached with ethical diligence:

- Respect for Privacy: Treat personal data with the utmost privacy considerations, aligning with regulations like the GDPR.

- Transparency: Keep users informed if their data is being collected and for what purpose.

- Integrity: Avoid any temptation to manipulate scraped data in misleading or harmful ways.

- Data Utilization: Use data responsibly, ensuring it benefits users and steers clear of discriminatory practices.

- Legal Compliance: Abide by laws governing data scraping activities to sidestep any potential legal repercussions.

Image Source: https://dataforest.ai/

Data Scraping Use Cases

Explore the versatile applications of data scraping in various industries:

- Finance: Uncover market trends by scraping financial forums and news sites. Track asset and competitor pricing to spot investment opportunities.

- Hotel: Aggregate customer reviews from different platforms to analyze guest satisfaction. Keep tabs on competitors’ pricing for optimal pricing strategies.

- Airline: Collect and compare flight pricing data for competitive analysis. Track seat availability to inform dynamic pricing models.

- E-commerce: Scrape product details, reviews, and prices from different vendors for market comparison. Monitor stock levels across platforms for effective supply chain management.
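Once prices have been collected, the comparison step common to these use cases can be fairly simple. The sketch below assumes the scraped records have already been normalized into dictionaries; the vendor names, products, and prices are invented sample data, and `compare_prices` is a hypothetical helper rather than part of any library.

```python
from statistics import median

# Hypothetical scraped records; vendors and prices are made up.
scraped = [
    {"vendor": "shop-a", "product": "Widget", "price": 9.99},
    {"vendor": "shop-b", "product": "Widget", "price": 11.50},
    {"vendor": "shop-c", "product": "Widget", "price": None},  # failed extraction
    {"vendor": "shop-a", "product": "Gadget", "price": 24.50},
    {"vendor": "shop-b", "product": "Gadget", "price": 22.00},
]


def compare_prices(records):
    """Group valid prices by product; report cheapest vendor and median price."""
    by_product = {}
    for record in records:
        price = record["price"]
        # Basic quality check: skip missing or nonsensical prices.
        if not isinstance(price, (int, float)) or price <= 0:
            continue
        by_product.setdefault(record["product"], []).append((price, record["vendor"]))

    summary = {}
    for product, offers in by_product.items():
        cheapest_price, cheapest_vendor = min(offers)
        summary[product] = {
            "cheapest_vendor": cheapest_vendor,
            "cheapest_price": cheapest_price,
            "median_price": median(price for price, _ in offers),
            "offers": len(offers),
        }
    return summary


summary = compare_prices(scraped)
for product, stats in summary.items():
    print(product, stats)
```

The same grouping pattern applies to hotel rates or flight fares: swap the `product` key for a room type or route and the rest stays the same.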

Conclusion: Striking a Harmonious Balance in Data Scraping

As we venture through the vast world of data scraping, finding that sweet spot is key. With the right tools, savvy techniques, and a dedication to doing things right, both businesses and individuals can tap into the true power of data scraping.

When we handle this game-changing practice with responsibility and openness, it not only sparks innovation but also plays a role in shaping a thoughtful and flourishing data ecosystem for everyone involved.

FAQs:

What is data scraping?

Data scraping is the extraction of information from websites, allowing individuals or businesses to gather valuable data for purposes such as market research, competitive analysis, or trend monitoring. It’s like having a detective that sifts through web content to uncover hidden gems of information.

Is it legal to scrape data?

The legality of data scraping depends on how it’s done and whether it respects the terms of use and privacy regulations of the targeted websites. Generally, scraping public data for personal use may be legal, but scraping private or copyrighted data without permission is likely to be unlawful. It’s crucial to be aware of and adhere to the legal boundaries to avoid potential consequences.

What techniques are used in data scraping?

Data scraping techniques encompass a range of methods, from automated web scraping using bots or crawlers to leveraging APIs for structured data extraction. HTML parsing, data point extraction, captcha solving, and proxy servers are among the various techniques employed to efficiently collect data from diverse sources. The choice of technique depends on the specific requirements of the scraping project.

Is data scraping easy?

Whether data scraping is easy depends on the complexity of the task and the tools or techniques involved. For those without technical expertise, user-friendly web scraping software or outsourcing to web scraping service providers can simplify the process. Choosing to outsource allows individuals or businesses to leverage the expertise of professionals, ensuring accurate and efficient data extraction without delving into the technical intricacies of the scraping process.