Given the abundance of data available online, two approaches are widely used to gather information: web scraping, carried out with software tools or through web scraping service providers, and traditional data collection.

Although both aim to gather data, the two approaches differ notably in their procedures, limitations, and benefits.

This article aims to provide a comparative guide on web scraping and traditional data collection, highlighting their pros and cons.

Web Scraping

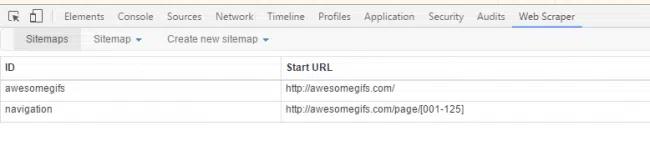

Web scraping involves automating the extraction of data from websites by utilizing software tools, commonly referred to as web scrapers or web crawlers, or with the help of web scraping service providers. These tools navigate web pages and collect targeted information efficiently. The process of web scraping typically follows these steps:

- Identifying the target website: The initial step involves pinpointing the website from which data extraction is needed, whether it’s a single webpage or an entire site.

- HTML parsing: Once the target is identified, the web scraper retrieves the page and parses its HTML code, exposing the elements that hold data, such as text, images, tables, and links.

- Data extraction: With the HTML parsed, the web scraper uses techniques such as XPath, CSS selectors, or regular expressions to pull out the specific information needed.

- Data storage: The extracted data is then neatly organized and saved in a format like CSV, Excel, or a database, making it ready for us to analyze further or smoothly integrate into other systems.
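
The parsing, extraction, and storage steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a production scraper: the `SAMPLE_HTML` string is a hypothetical stand-in for a downloaded page, the class and function names are invented for this example, and Python's built-in `html.parser` module is used in place of XPath or CSS selectors so that the sketch runs without third-party libraries.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical page content standing in for a fetched web page (an assumption).
SAMPLE_HTML = """
<html><body>
  <table id="prices">
    <tr><th>Product</th><th>Price</th></tr>
    <tr><td>Widget</td><td>9.99</td></tr>
    <tr><td>Gadget</td><td>24.50</td></tr>
  </table>
  <a href="/products/widget">Widget details</a>
  <a href="/products/gadget">Gadget details</a>
</body></html>
"""


class TableAndLinkParser(HTMLParser):
    """Collects table rows and link targets while walking the HTML."""

    def __init__(self):
        super().__init__()
        self.rows = []     # completed table rows, each a list of cell strings
        self.links = []    # href values found on <a> tags
        self._row = None   # row currently being built, or None
        self._cell = None  # text fragments of the current cell, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_data(self, data):
        # Only keep text that appears inside a table cell.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def scrape_to_csv(html):
    """Parse the HTML, extract rows and links, and serialize the rows as CSV."""
    parser = TableAndLinkParser()
    parser.feed(html)
    parser.close()
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(parser.rows)
    return parser.rows, parser.links, buffer.getvalue()


rows, links, csv_text = scrape_to_csv(SAMPLE_HTML)
print(csv_text)
```

In a real project the CSV text would typically be written to a file or loaded into a database, and the sample string would be replaced by pages retrieved from the target website.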

Web scraping offers several advantages such as:

- Automation: Web scraping allows for the automated and efficient collection of data from multiple sources, saving time and effort.

- Real-time data: Because it pulls data directly from live websites, web scraping provides access to real-time or recently updated information, which is crucial for businesses dealing with fast-changing data.

- Large-scale data collection: Web scraping enables the collection of a large volume of data from multiple websites, making it ideal for research purposes or market analysis.
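
As a rough illustration of automated collection across several sources, the sketch below loops over a list of pages, extracts a price from each with a regular expression, and timestamps every record. The URLs, the `FAKE_PAGES` content, and the `fetch` helper are all hypothetical placeholders; a real scraper would swap in an HTTP client and would need to respect each site's terms of use.

```python
import re
from datetime import datetime, timezone

# Hypothetical pages keyed by URL; in practice these would be fetched over HTTP.
FAKE_PAGES = {
    "https://shop-a.example/item": '<span class="price">$19.99</span>',
    "https://shop-b.example/item": '<span class="price">$18.49</span>',
    "https://shop-c.example/item": "<p>Out of stock</p>",
}

# Matches a dollar price inside an element with class="price" (assumed markup).
PRICE_PATTERN = re.compile(r'class="price">\$(\d+\.\d{2})<')


def fetch(url):
    """Stand-in fetcher (an assumption); replace with a real HTTP client."""
    return FAKE_PAGES[url]


def collect_prices(urls, fetch_page=fetch):
    """Visit every URL and return one timestamped record per source."""
    records = []
    for url in urls:
        match = PRICE_PATTERN.search(fetch_page(url))
        records.append({
            "url": url,
            # None marks a page where no price could be found.
            "price": float(match.group(1)) if match else None,
            "collected_at": datetime.now(timezone.utc).isoformat(),
        })
    return records


records = collect_prices(sorted(FAKE_PAGES))
for record in records:
    print(record["url"], record["price"])
```

Passing the fetcher in as a parameter keeps the collection loop independent of how pages are retrieved, so the same code can be rerun on a schedule to keep the data current.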

Traditional Data Collection

Traditional data collection typically means gathering information manually through surveys, interviews, observations, or existing datasets. These methods depend heavily on human effort and can be time-consuming and resource-intensive. Some common traditional data collection methods include:

- Surveys: Surveys involve gathering data by asking questions through paper forms, phone calls, emails, or online forms. Surveys can offer valuable insights, but their effectiveness is limited by factors such as respondent bias, inaccurate answers, and small sample sizes.

- Interviews: Interviews involve one-on-one interactions with individuals or groups to gather data. Although they yield valuable qualitative insights and deeper understanding, interviews require a substantial time commitment and may not faithfully represent the entire sample.

- Observations: Observational data collection involves directly observing and recording behaviors or events. This method can be useful for gathering real-time data but may be limited by the time and effort required for observation and potential observer bias.

- Existing datasets: Traditional data collection may also involve accessing pre-existing datasets, such as government records, research publications, or public databases. While existing datasets can provide valuable insights, this approach is often limited by the availability of relevant datasets and the need for manual extraction or integration.

Traditional data collection methods have their own set of limitations, including:

- Time-consuming: Traditional data collection methods often involve manual processes that can be time-consuming, especially when dealing with large-scale data collection.

- Limited data volume: With traditional methods, it can be challenging to collect large volumes of data from multiple sources simultaneously.

- Potential biases: Human intervention in traditional data collection methods can introduce biases, such as respondent bias in surveys or observer bias in observations.

- Limited scalability: Traditional methods may struggle to scale up, especially when dealing with large and complex datasets or frequent data updates.

Comparison: Pros and Cons

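To better understand the differences between web scraping and traditional data collection methods, let’s compare the pros and cons discussed in this article:

- Web scraping pros: automation, access to real-time data, and large-scale collection from multiple websites.

- Web scraping cons: it demands ethical considerations.

- Traditional data collection pros: in-depth, qualitative insights from surveys, interviews, and observations.

- Traditional data collection cons: it is time-consuming, limited in data volume and scalability, and prone to human bias.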

Conclusion

Web scraping and traditional data collection each have their pros and cons. Web scraping offers automation, real-time data access, and scalability, but demands ethical considerations. Traditional methods provide in-depth insights but can be time-consuming.

The choice depends on project needs, resources, and ethics. By weighing these factors, researchers and businesses can make informed data-driven decisions.

Why wait any longer? Opting for web scraping service providers to handle your large-scale data extraction projects could prove to be the smartest choice you make. Contact us now at sales@promptcloud.com