Managing change in web scraping is not about fixing breakages. It is about designing systems that expect them.

Every scraping system eventually breaks. Not because the code is bad. Not because the proxies failed. But because the world changes.

HTML layout changes. Website structure changes. Pagination shifts. JSON fields move. A div gets renamed. A class disappears. A JavaScript layer replaces static markup. And suddenly, the system that worked yesterday is producing incomplete or corrupted data today.

Most teams treat scraper maintenance as a reactive task. Something breaks, someone patches selectors, and the job runs again. That approach works at small scale. It collapses at production scale.

Managing change in web scraping is not a debugging problem. It is a systems design problem. The real challenge is not that websites evolve. The challenge is that scraping systems are often built as static extraction scripts with no schema versioning, no automated validation, no regression testing, and no backward compatibility planning.

If you do not design for change, change will design your downtime. Let’s break down the ten operational challenges that make managing change in web scraping systems harder than most teams expect.

In large-scale scraping environments, unmanaged structural change accounts for roughly 40–60% of recurring scraper maintenance effort. Teams that implement automated change detection and schema versioning reduce reactive maintenance incidents significantly.

Challenge 1: Website Structure Changes Break Extraction Logic

Why this is the starting point of most failures

Managing change in web scraping almost always begins with website structure changes. A minor HTML layout change, a renamed CSS class, or a modified pagination pattern can invalidate hardcoded selectors without triggering a visible system failure.

The scraper runs. The job completes. But the extracted fields no longer represent the intended data.

Unlike infrastructure outages, structure changes rarely produce immediate crashes. They produce subtle degradation. Fields return empty. Nested attributes shift. Lists truncate early. JSON responses restructure without warning.

If your scraping system is tightly coupled to specific DOM patterns, every structural adjustment on the source site becomes a maintenance event.

What actually changes on the source side

Website evolution happens continuously, not just during redesigns. Common triggers include:

- A/B testing variants modifying HTML layout

- Frontend refactoring that renames classes or IDs

- JavaScript rendering replacing static markup

- New dynamic loading mechanisms

- Pagination logic shifting from page numbers to cursor-based APIs

These are normal product updates for the website owner. They are destabilizing events for unmanaged scraping systems.

Why teams underestimate the risk

Most teams assume breakage will be obvious. They expect errors when selectors fail. In reality, selectors often still match something — just not the right node.

This creates the most dangerous scenario in managing change in web scraping: silent correctness drift.

The pipeline appears healthy. The output is structurally valid. But the data is incomplete, misaligned, or inconsistent.

How to reduce structural fragility

Web scraping system maintenance becomes manageable when structural volatility is assumed, not ignored. Mature teams implement:

- DOM change detection snapshots

- Structural signature monitoring

- Automated validation comparing record completeness against baselines

- Regression testing after pipeline updates

- Fallback extraction logic for known volatility zones
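As a rough illustration of structural signature monitoring, the sketch below hashes the set of tag paths found on a page, so two fetches can be compared without looking at their text. It is a minimal example using only the Python standard library. The names `structural_signature` and `_TagPathCollector` are hypothetical, and the choice to include class names while ignoring text and other attributes is an assumption, not a prescribed method.

```python
import hashlib
from html.parser import HTMLParser


class _TagPathCollector(HTMLParser):
    """Records the tag path (e.g. 'div.product/span.price') of every opening tag."""

    # Void elements never get a closing tag, so they must not stay on the stack.
    VOID = {"area", "base", "br", "col", "embed", "hr", "img", "input",
            "link", "meta", "source", "track", "wbr"}

    def __init__(self):
        super().__init__()
        self.stack = []
        self.paths = set()

    def handle_starttag(self, tag, attrs):
        classes = dict(attrs).get("class") or ""
        label = tag + "".join("." + c for c in sorted(classes.split()))
        self.paths.add("/".join(self.stack + [label]))
        if tag not in self.VOID:
            self.stack.append(label)

    def handle_endtag(self, tag):
        # Pop back to the most recent matching open tag, tolerating sloppy HTML.
        for i in range(len(self.stack) - 1, -1, -1):
            if self.stack[i].split(".")[0] == tag:
                del self.stack[i:]
                break


def structural_signature(html: str) -> str:
    """Hash of the page's tag/class structure; text content is ignored."""
    collector = _TagPathCollector()
    collector.feed(html)
    collector.close()
    joined = "\n".join(sorted(collector.paths))
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()
```

Storing the signature per source and run makes a renamed class or an extra wrapper show up as a changed hash even when the job completes normally. Sites running A/B variants will legitimately produce more than one signature, so in practice the comparison is probably against a set of known signatures rather than a single value.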

Challenge 1 covers structural changes that invalidate selectors. Challenge 2 is harder: selectors that still match but now return the wrong value.

Challenge 2: HTML Layout Changes Create Silent Data Drift

How layout tweaks become data problems

Not every change breaks a selector completely. In many cases, HTML layout changes simply shift the hierarchy of elements. A price moves from one container to another. A description field gains an extra wrapper. A badge element is inserted before a title.

The selector still returns a value. It is just the wrong value.

Managing change in web scraping becomes difficult because layout shifts often degrade data accuracy instead of stopping extraction. This is harder to detect than a crash.

You do not get a failure alert. You get polluted output.

Where this shows up in production

HTML layout changes typically introduce:

- Truncated text fields

- Incorrect attribute mapping

- Duplicated nested values

- Missing optional metadata

- Overwritten fields due to ambiguous selectors

Because the pipeline does not break, teams often discover the issue through downstream complaints rather than change detection systems.

That delay increases cleanup cost.

Why scraper maintenance alone is not enough

Most teams approach web scraping system maintenance as a manual fix cycle. Something looks wrong, selectors are adjusted, and the job is redeployed.

This works for isolated incidents. It fails when you operate across dozens or hundreds of domains where layout volatility is constant.

Managing change in web scraping requires automated validation layers that check output integrity continuously. Without data consistency checks tied to expected baselines, layout shifts will remain invisible.

How to detect layout-induced drift early

You reduce layout-related risk by implementing:

- Field-level completeness monitoring

- Historical comparison of scraping performance metrics

- Automated validation for unexpected value distributions

- Regression testing against prior output samples

- Change detection systems that flag structural divergence
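Field-level completeness monitoring can be sketched in a few lines. The example below assumes records are plain dictionaries and that a baseline of expected completeness per field already exists. The function names and the 5-point `max_drop` threshold are illustrative assumptions.

```python
def field_completeness(records, fields):
    """Share of records with a non-empty value for each field."""
    total = len(records)
    result = {}
    for field in fields:
        filled = sum(1 for r in records if r.get(field) not in (None, "", [], {}))
        result[field] = filled / total if total else 0.0
    return result


def completeness_alerts(current, baseline, max_drop=0.05):
    """Fields whose completeness fell more than max_drop below the baseline.

    Returns {field: (baseline_value, current_value)} for each offending field.
    """
    return {
        field: (expected, current.get(field, 0.0))
        for field, expected in baseline.items()
        if expected - current.get(field, 0.0) > max_drop
    }
```

A layout shift that leaves `price` empty on half the records would not crash the job, but it would surface here as a drop from the baseline value to 0.5.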

Challenge 3: No Automated Change Detection Systems

Why manual monitoring fails at scale

Managing change in web scraping becomes unmanageable the moment your system depends on humans noticing anomalies.

Many teams still rely on visual inspection, ad hoc QA checks, or downstream complaints to detect breakage. That works when you scrape a handful of sites. It collapses when you manage dozens or hundreds of pipelines.

Website structure changes happen continuously. Without automated change detection systems, your only monitoring layer is human intuition.

That is not a system. It is a gamble.

What typically goes undetected

When change detection is not automated, you miss:

- Gradual record count drops

- Partial extraction failures

- Field-level null rate increases

- Unexpected shifts in value distribution

- Silent removal of optional attributes

Because nothing technically crashes, alerts are never triggered.

Managing change in web scraping requires measuring structural stability and data consistency in real time, not waiting for consumers to escalate issues.

What automated detection should monitor

Effective change detection systems track:

- DOM structure signatures across runs

- Schema stability over time

- Field completeness percentages

- Distribution shifts in key attributes

- Unexpected pipeline updates
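For distribution shifts in a categorical attribute (stock status, currency, category), one simple option is the total variation distance between the baseline and current value shares. This is one possible metric among several, not the only way to measure drift. The function names are hypothetical, and any alert threshold would need tuning per source.

```python
from collections import Counter


def category_shares(values):
    """Fraction of records taking each distinct value (None counts as a value)."""
    counts = Counter(values)
    total = sum(counts.values())
    return {k: v / total for k, v in counts.items()} if total else {}


def total_variation_distance(baseline_values, current_values):
    """0.0 means identical distributions, 1.0 means no overlap at all."""
    p = category_shares(baseline_values)
    q = category_shares(current_values)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))
```

If a field that was almost never empty suddenly returns `None` for half the batch, the distance jumps even though every record is still structurally valid.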

Detection systems are only as good as the baselines behind them — which is why Challenge 6 matters just as much as the tooling.

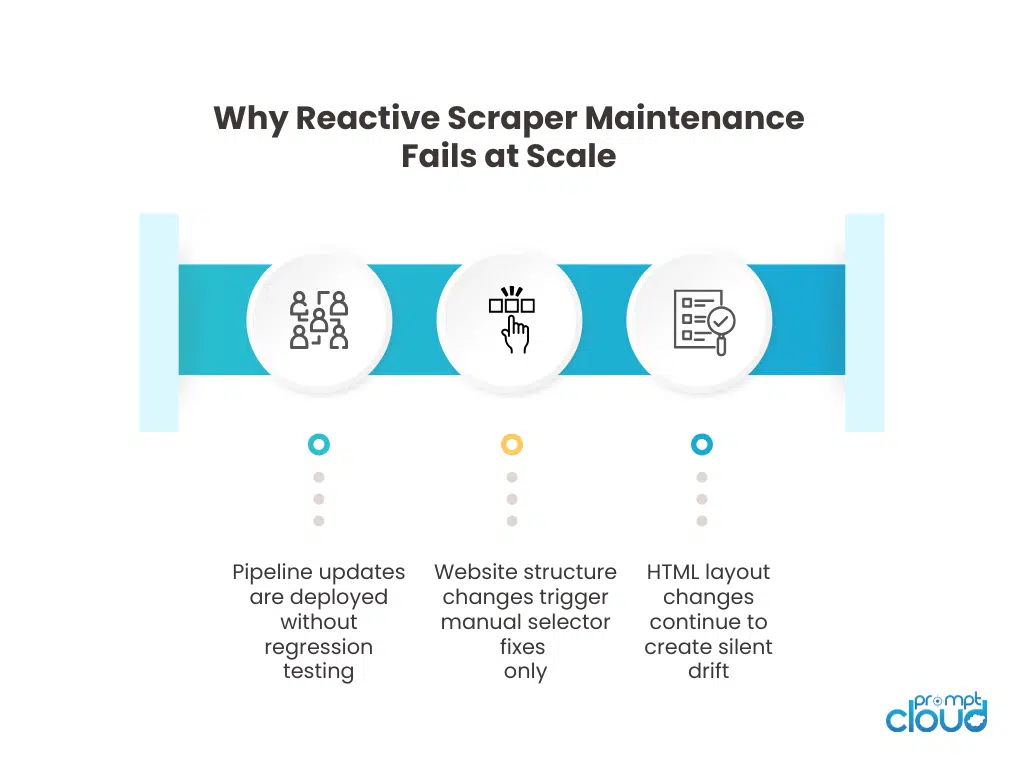

Figure 1: Comparison between reactive scraper maintenance and structured change management in web scraping systems.

Challenge 4: Regression Testing Is Rarely Implemented

Why pipeline updates introduce new risks

Every time you modify extraction logic to handle HTML layout changes, you introduce the possibility of breaking something else.

Managing change in web scraping does not stop at adapting to new structures. It requires ensuring that your fix does not corrupt previously stable fields.

Yet most scraping systems do not implement regression testing. Updates are deployed directly into production.

This creates cumulative fragility.

What regression failure looks like

Without regression testing, pipeline updates can cause:

- Previously stable fields to disappear

- Backward compatibility issues in historical datasets

- Inconsistent record structures across batches

- Reprocessing errors during backfills

- Data consistency checks failing after redeployment

These issues are often discovered after consumers ingest the updated data.

That is already too late.

How to institutionalize safe updates

Managing change in web scraping becomes safer when pipeline updates follow structured testing workflows:

- Snapshot comparison between old and new output

- Field-level delta validation

- Controlled staging environments

- Automated data consistency checks before release

- Backward compatibility validation for downstream consumers
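Snapshot comparison and field-level delta validation can be sketched as a diff between the stable extractor's output and the candidate's output. The sketch assumes both versions were run against the same stored pages (otherwise legitimate site updates would appear as changes) and that each record has a unique key such as `url`. The function name and report shape are illustrative.

```python
def field_deltas(stable, candidate, key="url"):
    """Compare candidate output with a stable snapshot, record by record.

    Returns the keys of records the candidate lost, plus, for every field
    whose value differs, the keys of the records where it differs.
    """
    stable_by_key = {r[key]: r for r in stable}
    candidate_by_key = {r[key]: r for r in candidate}
    report = {
        "missing_records": sorted(set(stable_by_key) - set(candidate_by_key)),
        "changed_fields": {},
    }
    for record_key, old in stable_by_key.items():
        new = candidate_by_key.get(record_key)
        if new is None:
            continue
        for field in old.keys() | new.keys():
            if old.get(field) != new.get(field):
                report["changed_fields"].setdefault(field, []).append(record_key)
    return report
```

A release check could then require that `changed_fields` contains only the fields the fix was meant to touch and that `missing_records` is empty.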

Challenge 5: Backward Compatibility Is Treated as Optional

Why structural evolution breaks consumers

Managing change in web scraping is not only about adapting to website structure changes. It is also about protecting the systems that consume your data.

When a field is renamed, removed, or retyped without compatibility planning, downstream systems break even if extraction is technically correct. Dashboards fail. Models reject inputs. ETL jobs error out.

Web scraping system maintenance becomes chaotic when structural evolution is treated as internal plumbing instead of a contract with consumers.

Where compatibility failures appear

Backward compatibility issues commonly surface as:

- Data warehouse ingestion errors

- BI dashboards displaying null values

- Machine learning feature mismatches

- Historical datasets becoming structurally inconsistent

- API consumers receiving unexpected field formats

These failures are rarely immediate. They often surface hours or days after a pipeline update. That delay increases investigation cost.

How to manage compatibility during change

Managing change in web scraping requires treating schema updates as versioned releases:

- Introduce additive changes before deprecating fields

- Maintain dual-field support during transition periods

- Document migration timelines clearly

- Enforce schema versioning across batches

- Validate consumer impact before rollout
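Dual-field support during a transition period might look like the sketch below: the renamed field is emitted under both names, and each record carries its schema version. The rename (`price` to `list_price`), the version string, and the function name are all hypothetical examples.

```python
SCHEMA_VERSION = "2.1"                 # hypothetical version label
RENAMES = {"price": "list_price"}      # old name -> new name (illustrative)


def to_transitional_record(record):
    """Emit renamed fields under both old and new names during the deprecation window."""
    out = dict(record)
    for old_name, new_name in RENAMES.items():
        if new_name in out and old_name not in out:
            out[old_name] = out[new_name]
    out["schema_version"] = SCHEMA_VERSION
    return out
```

Consumers that still read `price` keep working while they migrate. Once the documented migration window closes, removing the entry from `RENAMES` and bumping the version retires the old name.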

Experiencing These Challenges?

Many organizations begin web scraping with internal scripts, but maintaining crawler infrastructure, handling anti-bot protections, and monitoring data quality quickly becomes a full-time operational task.

Challenge 6: No Baseline for Data Consistency Checks

Why you cannot detect drift without reference

You cannot manage change in web scraping if you do not know what “normal” looks like.

Many teams implement automated validation but fail to define stable baselines. They check whether a field exists, but not whether it behaves consistently over time.

Without baselines, change detection systems generate noise or miss meaningful drift entirely.

What baseline failure looks like

When data consistency checks lack historical reference points, you see:

- False positives triggered by normal seasonal variation

- Missed detection of gradual field degradation

- Inconsistent thresholds across domains

- Over-reliance on manual interpretation

- Alert fatigue from unstable metrics

Website structure changes often create subtle shifts rather than immediate breaks. Without historical baselines, these shifts blend into normal variance.

What effective baseline monitoring requires

Managing change in web scraping at scale requires:

- Historical completeness metrics per field

- Expected record count ranges by source

- Stable value distribution references

- Change detection systems tied to statistical thresholds

- Automated validation comparing current batch vs prior stable version
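A statistical threshold for expected record counts can start as simply as a band around the historical mean. The sketch below assumes a list of record counts from previous stable runs of one source. The three-standard-deviation band is an assumption, and it does not model seasonality, so sources with strong weekly or seasonal cycles would need a baseline built from comparable periods.

```python
from statistics import mean, stdev


def expected_range(history, k=3.0):
    """Band of mean +/- k sample standard deviations over prior stable runs."""
    mu = mean(history)
    sigma = stdev(history) if len(history) > 1 else 0.0
    return (mu - k * sigma, mu + k * sigma)


def is_anomalous(value, history, k=3.0):
    """True when the current value falls outside the expected band."""
    low, high = expected_range(history, k)
    return not (low <= value <= high)
```

With a history of `[1000, 1020, 980, 1010, 990]`, a run returning 700 records falls outside the band, while 1005 does not.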

Challenge 7: Pipeline Updates Are Deployed Without Controlled Environments

Why production becomes the testing ground

Managing change in web scraping requires frequent pipeline updates. Selectors evolve. Extraction rules adjust. Parsing logic is refined. But in many systems, these updates are deployed directly into production.

There is no staging layer. No isolated test runs. No controlled rollout.

This turns live data consumers into unwitting testers.

When HTML layout changes force urgent scraper maintenance, teams often push quick fixes. Under time pressure, proper regression testing and automated validation are skipped. The update solves one problem and introduces another.

What uncontrolled deployment leads to

Without controlled environments, pipeline updates commonly result in:

- Inconsistent outputs between runs

- Mixed schema versions in storage

- Partial data overwrites

- Temporary data gaps during redeployments

- Backfill inconsistencies

The instability compounds because production data is continuously overwritten. There is no safe comparison point. Managing change in web scraping becomes chaotic when updates lack release discipline.

How to introduce release control

Stable systems treat scraping pipelines like software products. They implement:

- Staging environments that mirror production

- Shadow runs comparing new output against stable output

- Controlled rollouts by source or segment

- Rollback mechanisms for failed updates

- Change logs documenting pipeline updates
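A controlled rollout by source can be approximated with deterministic hash bucketing: each source is assigned a stable bucket from 0 to 99, and the new extractor is enabled only for buckets below the current rollout percentage. The function names and source identifiers here are hypothetical.

```python
import hashlib


def rollout_bucket(source_id: str) -> int:
    """Stable bucket in [0, 100) derived from the source identifier."""
    digest = hashlib.sha256(source_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def use_new_extractor(source_id: str, rollout_percent: int) -> bool:
    """True when this source falls inside the current rollout percentage."""
    return rollout_bucket(source_id) < rollout_percent
```

Because the bucket depends only on the source identifier, raising the percentage from 10 to 50 keeps every source that was already on the new extractor, and setting it back to 0 acts as a rollback switch.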

Challenge 8: Change Propagates Faster Than Documentation

Why undocumented evolution creates technical debt

Scraper maintenance often happens under pressure. A website structure change is detected, selectors are adjusted, and the pipeline is restored.

Documentation is postponed.

Over time, managing change in web scraping becomes difficult not because of complexity, but because no one remembers why certain logic exists.

Extraction rules accumulate edge-case handling. Conditional parsing logic grows. Hardcoded exceptions multiply. Without documentation, every future change becomes riskier.

What undocumented change causes

Lack of documentation leads to:

- Engineers hesitant to modify legacy logic

- Duplicate parsing rules layered over old ones

- Hidden dependencies between fields

- Accidental removal of critical fallback logic

- Increasing time spent debugging scraper maintenance tasks

This slows down response to HTML layout changes and pipeline updates.

How to keep change sustainable

Managing change in web scraping requires operational memory. Sustainable systems include:

- Version-controlled extraction logic

- Structured change logs for every update

- Clear mapping between website structure changes and pipeline updates

- Documentation of schema versioning decisions

- Defined ownership for each data source

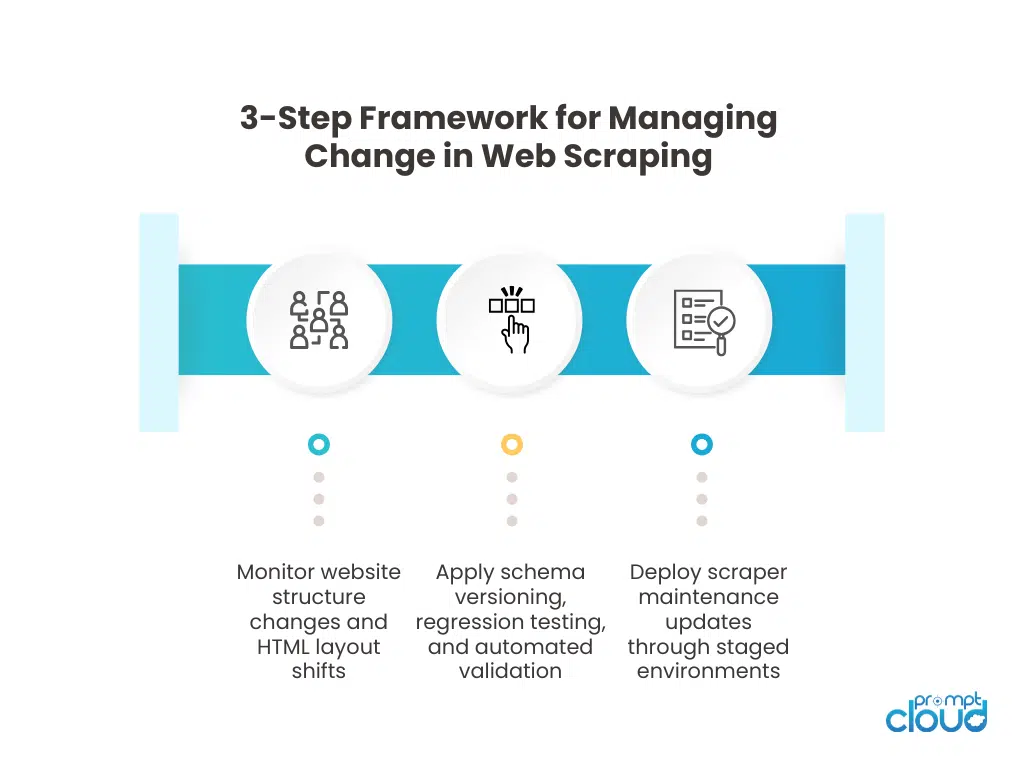

Figure 2: Three-step operational framework for managing change in web scraping systems from detection to controlled release.

Challenge 9: No Lineage Visibility During Structural Change

Why debugging becomes slow and uncertain

When website structure changes break extraction logic, the first question is simple: when did this start?

If you cannot answer that quickly, managing change in web scraping becomes investigative work instead of operational response.

Many scraping systems do not track lineage properly. They store final output but do not attach metadata about scraper version, schema versioning state, extraction logic version, or timestamped pipeline updates.

Without lineage, every incident becomes guesswork.

What breaks when lineage is missing

Absence of lineage visibility causes:

- Inability to identify which pipeline update introduced drift

- Manual log inspection across multiple runs

- Uncertainty about whether corruption affects one batch or historical data

- Delays in rolling back to a stable version

- Weak root cause analysis for HTML layout changes

When managing change in web scraping, you must know which code version produced which data version. Otherwise, recovery becomes slow and risky.

What effective lineage tracking requires

Strong lineage controls include:

- Capturing scraper version per batch

- Tagging schema versioning state in metadata

- Storing extraction timestamps

- Logging pipeline updates with structured identifiers

- Linking change detection systems to specific runs
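Lineage tagging can be as light as attaching a small metadata block to every record in a batch. The sketch below assumes dictionary records. The `_lineage` key, the field names, and the `tag_batch` function are illustrative choices rather than an established convention.

```python
from datetime import datetime, timezone


def tag_batch(records, *, scraper_version, schema_version, run_id, extracted_at=None):
    """Return copies of the records with lineage metadata attached to each one."""
    lineage = {
        "scraper_version": scraper_version,
        "schema_version": schema_version,
        "run_id": run_id,
        # Default to the current UTC time in ISO 8601 form.
        "extracted_at": extracted_at or datetime.now(timezone.utc).isoformat(),
    }
    # Copy the lineage dict per record so later edits to one do not leak into others.
    return [{**record, "_lineage": dict(lineage)} for record in records]
```

With this in place, the question "when did this start?" becomes a query over `scraper_version` and `extracted_at` instead of a search through logs.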

Challenge 10: Change Management Is Treated as an Event, Not a System

Why reactive maintenance never stabilizes

The biggest mistake in managing change in web scraping is treating each website structure change as an isolated incident.

Teams patch selectors. Adjust extraction logic. Deploy fixes. Move on.

Until the next change.

This cycle never ends because the web is not static. HTML layout changes, frontend refactors, content management system upgrades, and new rendering patterns are continuous.

If web scraping system maintenance is reactive, your operational load scales with the number of sources.

What event-driven maintenance looks like

Reactive systems show predictable patterns:

- Frequent emergency pipeline updates

- Escalations triggered by downstream teams

- Manual validation after every major source change

- Growing technical debt in extraction logic

- Increasing effort per source over time

The cost curve rises as coverage expands.

What systemic change management looks like

Managing change in web scraping becomes sustainable only when it is designed as an ongoing discipline:

- Change detection systems monitoring structure continuously

- Automated validation gating every pipeline update

- Regression testing as a release requirement

- Backward compatibility enforced through schema versioning

- Data consistency checks tied to baselines

- Lineage tracking embedded in every run

| Challenge | What changes | What breaks | How to catch it early | What to implement |
| --- | --- | --- | --- | --- |
| 1. Website structure changes break extraction logic | DOM hierarchy, pagination patterns, JS rendering, A/B variants | Selectors fail or pull wrong nodes, coverage drops | Change detection systems on DOM signatures, record-count baselines | Structural signatures, fallback extraction, source-level monitoring |
| 2. HTML layout changes create silent data drift | Wrapper divs, reordered elements, inserted badges/labels | Wrong values mapped to fields, truncation, null creep | Data consistency checks on distributions, field completeness trends | Automated validation, field-level drift checks, regression snapshots |
| 3. No automated change detection systems | Continuous site evolution without explicit notice | Delayed detection, issues found by downstream teams | Baseline-aware anomaly detection, completeness and freshness monitors | Change detection systems, pipeline alerts with severity |
| 4. Regression testing is rarely implemented | Fixes for site changes and pipeline updates | Fix resolves one area but breaks another | Golden sample comparisons, diff-based output checks | Regression testing suite, staged releases, rollback paths |
| 5. Backward compatibility is treated as optional | Field rename/removal/type shifts, schema evolution | ETL breaks, dashboards misread fields, model feature mismatch | Contract tests, consumer impact checks before deploy | Schema versioning, deprecation windows, dual-write transitions |
| 6. No baseline for data consistency checks | Natural variance vs real drift not separated | False positives or missed drift, alert fatigue | Source-segment baselines, seasonality-aware thresholds | Baseline models, per-source expectations, SLA tracking |
| 7. Pipeline updates deployed without controlled environments | Hotfixes pushed directly into prod | Mixed outputs, gaps during redeploy, overwrite issues | Shadow runs, canary releases, staging validation | Staging env, controlled rollout, rollback automation |
| 8. Change propagates faster than documentation | Quick fixes, growing edge-case logic | Knowledge loss, risky edits, duplicate rules | Change logs tied to releases, incident notes | Versioned docs, runbooks, ownership per source |
| 9. No lineage visibility during structural change | Multiple scraper versions, evolving schemas | Slow root cause analysis, unsafe backfills | Traceability by batch, version tags, provenance metadata | Lineage + provenance tagging, run correlation, audit trails |
| 10. Change management treated as an event, not a system | Ongoing site volatility at scale | Permanent firefighting, rising maintenance cost curve | Trend dashboards across sources, incident rate tracking | End-to-end change discipline: detection, testing, contracts, observability |

If you want to go deeper

- Black Friday scraping stress test lessons – Why high-traffic events expose weaknesses in managing change in web scraping systems and scraper maintenance discipline.

- AI data quality metrics for production pipelines – Explains how automated validation and data consistency checks prevent silent structural drift.

- Structuring and labeling web data for LLM systems – Shows why schema versioning and backward compatibility matter when scraped data feeds AI workflows.

- Data lineage and provenance in web data pipelines – Covers how traceability accelerates root cause analysis during pipeline updates and website structure changes.

The Martin Fowler article on Evolutionary Database Design explains how systems must evolve schemas safely without breaking consumers. The principles directly apply to schema versioning and backward compatibility in web scraping systems.

PromptCloud manages structural change across scraping systems spanning ecommerce, travel, and intelligence use cases operating at scale.

“Before structured change management, maintenance consumed nearly a quarter of our engineering sprint capacity. With versioning and validation controls, that dropped materially.”

Head of Data Engineering

Global Retail Platform

What separates successful teams is structural discipline. They design pipelines that assume change and enforce backward compatibility from day one. This is why managed web scraping services require automated validation, schema control, and production-grade change management. Organizations reaching this realization often evaluate whether to continue reactive maintenance or adopt a managed, reliability-first model.

FAQs

1. Why is managing change in web scraping harder than initial setup?

Initial setup assumes a stable structure. Ongoing operations must handle continuous website structure changes, HTML layout shifts, and evolving data contracts without disrupting downstream systems.

2. How does schema versioning reduce scraper maintenance risk?

Schema versioning allows you to introduce changes incrementally, maintain backward compatibility, and track which structural version produced each dataset. This prevents silent downstream breakage during pipeline updates.

3. What is the role of regression testing in web scraping system maintenance?

Regression testing compares new extraction output against previously stable snapshots. It ensures that fixes for website structure changes do not corrupt unrelated fields or introduce new inconsistencies.

4. How do change detection systems improve reliability?

Change detection systems monitor structural signatures, field completeness, and distribution shifts. They catch drift early instead of relying on downstream complaints or manual inspection.

5. What is the biggest mistake teams make when handling HTML layout changes?

The most common mistake is treating each change as a one-off fix instead of designing a systematic approach with automated validation, version control, lineage tracking, and controlled release workflows.