Why Standard Data Quality Checks Fall Short for AI

If your AI model starts recommending discounts on products that sold out two days ago, or your sentiment classifier swings positive without any obvious cause, the problem is almost certainly not your model architecture. It is your data.

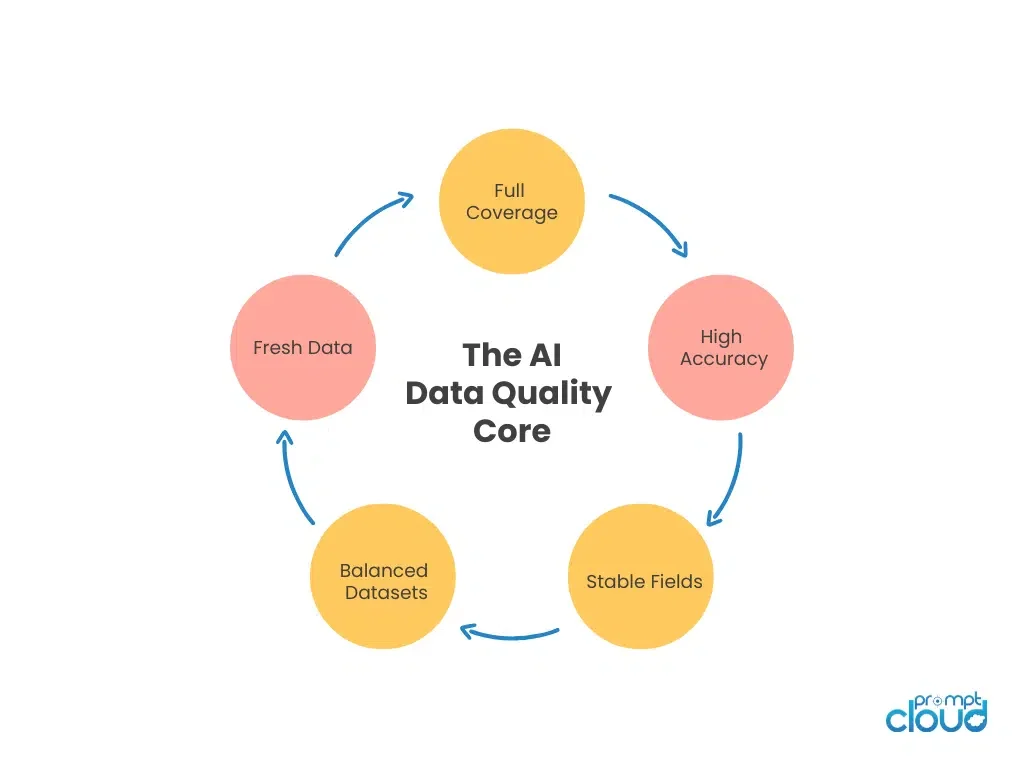

Data quality metrics for AI pipelines are not the same as data quality metrics for spreadsheets or analytics dashboards. A clean CSV with no null values can still destroy a production model if the records are six weeks stale, the coverage skips entire product categories, or one source quietly dominates everything else in the dataset. In AI, quality is not a binary pass or fail. It is a set of conditions that determine whether your model learns the right patterns or picks up noise dressed as signal.

Three metrics drive most of this: freshness, bias, and completeness. They are not independent checkboxes. They interact, they compound, and when one slips, the others tend to follow. This guide breaks down what each one means in a real AI context, how to measure each with precision, and how to build a monitoring framework that catches drift before it reaches your training environment. Whether you are building recommendation engines, sentiment classifiers, or demand forecasting models that depend on web data for AI, these three metrics are the difference between a pipeline that holds up and one that silently erodes.

Most traditional data quality programs are built to check for null values, duplicate rows, and formatting errors. These checks matter, but they were designed for analytics workflows where a human reviews the output before any decision is made. AI pipelines remove that human checkpoint. The model ingests the data, learns from it, and starts making predictions at scale. Every distortion in the input gets amplified across every inference the model produces.

According to a survey by Dimensional Research, 96% of organizations encounter data quality problems when training AI models. A separate VentureBeat analysis found that 87% of data science projects never reach production, with inadequate data quality as the leading cause. These are not edge cases. They reflect a structural gap between how most teams think about data quality and what AI systems actually require from their inputs.

IBM’s research on AI data quality reinforces this point: leading organizations are moving away from one-off cleansing initiatives and toward continuous quality monitoring embedded in pipelines, with accountability defined across data domains. The shift is not cosmetic. It reflects how differently AI systems behave when trained on data that drifts versus data that is actively maintained.

The gap between clean data and AI-ready data comes down to three properties that standard tooling rarely tracks: whether the data still reflects current reality, whether it fairly represents the full world the model needs to understand, and whether every field and record the model depends on is actually present at the depth required. Each one shapes what the model learns. Together, they determine whether a model that performs well in testing holds up when it encounters real-world conditions.

Your pipeline is only as reliable as the data coming into it.

Get structured, schema-ready web data delivered to your exact specifications, across any source, at whatever cadence your use case demands.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

Freshness: Does Your Data Still Reflect the World Your Model Will Operate In?

Freshness is not just about having recent data. It is about alignment between your dataset and the conditions your model will actually face during inference. An AI model trained on pricing data from six weeks ago is not learning about the market as it exists today. It is learning about a market that has already moved. And during inference, it will act on that outdated understanding as if nothing has changed.

Web data shifts faster than most teams account for. Prices change hourly on competitive marketplaces. Product listings expire and get relisted. Review volume spikes overnight following a viral post or a major product event. If your pipeline does not keep pace with the source, the model starts operating in a version of reality that no longer exists. The predictions look reasonable until they stop being reasonable, and by that point the damage has already reached production.

Freshness has three practical layers, each worth tracking independently.

Collection frequency is the rhythm of your extraction. Some use cases need hourly refreshes. Others work well with daily or weekly pulls. The right cadence depends entirely on how quickly the underlying source changes. Pricing data for electronics ages far faster than a product description for furniture. Applying a single threshold across all data types is a common mistake that creates blind spots in the most time-sensitive categories.

Propagation delay is the time between raw extraction and usable data inside your pipeline. Even when the crawler runs on schedule, delays accumulate in transformation, validation, and schema mapping steps before anything reaches the model. Teams often measure extraction timestamps while ignoring the hours lost downstream, which gives a false sense of freshness at the pipeline entry point.

Staleness tolerance defines the acceptable lag for each specific use case. A two-hour delay on travel fare data can break a forecasting model. The same delay for a real estate catalog is completely acceptable. Defining tolerance thresholds per data type is what separates teams with reliable freshness controls from teams who are essentially guessing.
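As a rough sketch of how these layers can be expressed in code, the snippet below scores freshness per data type against its own tolerance and reports the propagation delay separately. The record fields (`extracted_at`, `available_at`), the data type names, and the tolerance values are illustrative assumptions, not a prescribed schema.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical tolerances per data type; real values depend on source volatility.
STALENESS_TOLERANCE = {
    "travel_fare": timedelta(hours=1),
    "real_estate_listing": timedelta(days=7),
}


def propagation_delay(record):
    """Time lost between raw extraction and the record becoming usable."""
    return record["available_at"] - record["extracted_at"]


def freshness_score(records, now):
    """Share of records per data type whose age is within that type's tolerance."""
    within, total = {}, {}
    for rec in records:
        dtype = rec["data_type"]
        total[dtype] = total.get(dtype, 0) + 1
        # Age is measured from extraction, so downstream delay counts against freshness.
        if now - rec["extracted_at"] <= STALENESS_TOLERANCE[dtype]:
            within[dtype] = within.get(dtype, 0) + 1
    return {dtype: within.get(dtype, 0) / n for dtype, n in total.items()}


# Illustrative records: the same two-hour age fails one type and passes another.
now = datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc)
records = [
    {"data_type": "travel_fare",
     "extracted_at": now - timedelta(minutes=30),
     "available_at": now - timedelta(minutes=10)},
    {"data_type": "travel_fare",
     "extracted_at": now - timedelta(hours=2),
     "available_at": now - timedelta(hours=1)},
    {"data_type": "real_estate_listing",
     "extracted_at": now - timedelta(hours=2),
     "available_at": now - timedelta(hours=1)},
]

scores = freshness_score(records, now)
worst_delay = max(propagation_delay(rec) for rec in records)
```

Keeping the tolerance table keyed by data type is the point of the design: a single global threshold would either flag the real estate record needlessly or let the stale fare through.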

Measuring freshness in practice means tracking the age distribution of records across your full dataset on a continuous basis, not just at the moment of ingestion. A healthy pipeline shows a tight cluster of recent timestamps. A wide spread signals uneven crawl coverage, inconsistent update schedules, or a source that has changed its behavior without anyone noticing.
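One way to look at the age distribution, sketched here with the Python standard library only, is to summarize record ages by median, 95th percentile, and maximum. The function name and the sample timestamps are assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone
from statistics import median, quantiles


def age_summary(timestamps, now):
    """Summarize record ages in hours: median, 95th percentile, and maximum."""
    ages = sorted((now - ts).total_seconds() / 3600 for ts in timestamps)
    # quantiles(n=20) returns 19 cut points in 5% steps; the last is the 95th percentile.
    p95 = quantiles(ages, n=20, method="inclusive")[-1]
    return {"median_h": median(ages), "p95_h": p95, "max_h": ages[-1]}


# Illustrative data: five recent records and one that is two days old.
now = datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc)
timestamps = [now - timedelta(hours=h) for h in (1, 1, 2, 2, 3, 48)]
summary = age_summary(timestamps, now)
```

A median that looks healthy next to a much larger 95th percentile is the "wide spread" pattern: most records are recent, but a tail of the dataset has stopped updating.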

For teams running pipelines across multiple sources simultaneously, maintaining even freshness is particularly challenging. Multi-agent scraping frameworks distribute crawling load across sources in parallel, which helps maintain consistent freshness across categories that would otherwise lag behind higher-priority feeds.

Bias in Web Data: Where Your Dataset Leans and Why Your Model Follows

Bias in web-sourced data is rarely intentional. It is structural. Certain brands update their product listings more frequently than others. Some regions generate more reviews by volume. A few marketplaces publish data in richer formats that happen to get scraped more reliably. None of this is deliberate, but all of it shapes what the model learns to treat as normal, and what it learns to overlook.

The clearest way to think about bias in training data is as imbalance: imbalance in representation, frequency, and emphasis. Even small distortions compound over training cycles. A recommendation model will surface what it saw most often. A classifier will struggle with categories it barely encountered. A forecasting model will over-index on dominant signals while missing weaker ones that matter in production.

Table 1: Common Types of Bias in Web-Sourced AI Training Data

| Bias Type | How It Shows in Raw Web Data | How It Affects AI Output |
|---|---|---|
| Category Bias | Certain product types or brands dominate record counts | Model favors dominant labels; minority categories get misclassified |
| Source Bias | One marketplace supplies the majority of records | Model learns one platform’s patterns and generalizes poorly to others |
| Geographic Bias | Strong coverage in select regions, weak or absent elsewhere | Forecasting and sentiment outputs skew toward well-covered regions |
| Temporal Bias | Data spikes in certain periods with gaps in others | Model misreads seasonality or learns time-restricted behavior as permanent |
| User Bias | Reviews dominated by power users or a specific demographic | Sentiment outputs reflect one audience group, not the broader market |

A practical bias audit starts with four questions. Which categories appear most often relative to their actual market share? Which sources are contributing the most records? Which geographic zones dominate the coverage? Which time windows have gaps that could distort the model’s understanding of seasonal patterns? The answers do not need to be perfect. They need to be known before the model trains on them.

Quantifying bias means building structured views rather than relying on intuition. Count records per category and compare against expected market proportions. Calculate source contribution share to see whether one platform is responsible for more than half your dataset. Look at the ratio of positive to negative sentiment entries to catch emotional skew. Review geographic spread normalized against actual market size. Each of these is a measurable signal, not an estimate.
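A minimal sketch of two of these structured views follows: source contribution share, and category representation relative to an expected market share. The source names, category names, and the 50/50 expected split are hypothetical values chosen only to make the arithmetic easy to follow.

```python
from collections import Counter


def share(records, key):
    """Fraction of records contributed by each distinct value of `key`."""
    counts = Counter(rec[key] for rec in records)
    total = sum(counts.values())
    return {value: count / total for value, count in counts.items()}


def representation_ratio(observed, expected):
    """Observed share divided by expected share; 1.0 means proportional."""
    return {k: observed.get(k, 0.0) / expected[k] for k in expected}


# Illustrative dataset: 10 records, skewed toward one source and one category.
records = (
    [{"source": "marketplace_a", "category": "electronics"}] * 6
    + [{"source": "marketplace_b", "category": "electronics"}] * 2
    + [{"source": "marketplace_b", "category": "furniture"}] * 1
    + [{"source": "marketplace_c", "category": "furniture"}] * 1
)

source_share = share(records, "source")
category_share = share(records, "category")

# Assumed market proportions; in practice these come from an external reference.
expected_market = {"electronics": 0.5, "furniture": 0.5}
ratios = representation_ratio(category_share, expected_market)

dominant_sources = [s for s, v in source_share.items() if v > 0.5]
```

A ratio well above 1.0 marks a category the model will see more often than the market warrants, and a ratio well below 1.0 marks one it will barely encounter.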

The downstream impact of source-level bias becomes especially visible in domain-specific models. In HR data analytics, training data that overrepresents certain job boards or candidate profiles can skew recruitment models toward patterns that reflect one source’s demographics rather than the actual labor market. The same dynamic plays out in real estate data analytics, where overrepresentation of premium listing platforms can distort property valuation and demand models.

The goal of bias measurement is not to force artificial balance. Real markets are not symmetrical and training data should not pretend they are. The goal is to understand the degree of imbalance before the model learns it as ground truth, so the pipeline and any weighting adjustments can be designed around the actual shape of the data rather than a corrected version that does not reflect reality.

Completeness: Why a Full Record Count Does Not Mean Complete Data

Completeness sounds like the simplest metric to verify. Count the rows, check that fields are populated, move on. In practice, completeness in AI training data is multidimensional, and teams who treat it as a row count tend to discover the real gaps at the worst possible moment: midway through feature engineering, or during model evaluation when a segment collapses that was never properly represented in training.

A dataset can be complete in terms of record count while being critically incomplete in attribute richness. You can have full entity coverage for one product category and almost no depth on three others. You can have detailed fields for every record from one time window and missing data from an entire quarter. You can have thousands of rows that all technically pass schema validation but contain placeholder values in fields the model was counting on for signal.

Completeness operates across five dimensions that need to be evaluated independently rather than as a single combined score.

Record coverage is the count of unique entities in the dataset compared to the expected population. If you are building a pricing model for consumer electronics but your dataset covers only 40% of listed SKUs, the missing 60% is not neutral. The model does not know what it does not see.

Field coverage measures how consistently each attribute is populated across records. A price field missing from 30% of records does not just create gaps. It forces downstream workarounds in feature engineering that introduce noise the model will later treat as signal.

Depth of information goes beyond whether a field exists to whether it contains enough detail to be useful. A product description field containing a single sentence is technically populated but practically hollow for any model learning semantic patterns from that content.

Temporal coverage checks the continuity of data across time windows. Missing weeks or months in a time-series dataset do not show up as obvious errors in a schema validation. They show up as distorted seasonality and unexplained model behavior in production, long after the gap was introduced.

Source variability coverage checks whether the dataset draws from a wide enough range of platforms and sellers. Low source diversity creates models that generalize poorly because they have only been trained on one version of how a market behaves.
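The five dimensions can each be reduced to a small, separate measurement. The sketch below is one possible shape for those checks, assuming records are plain dictionaries with hypothetical `sku`, `price`, `description`, `source`, and `collected_on` fields, and assuming a hand-picked set of placeholder strings.

```python
from datetime import date, timedelta

# Hypothetical placeholder values that pass schema checks but carry no signal.
PLACEHOLDERS = {"", "n/a", "tbd", "-"}


def is_populated(value):
    return value is not None and str(value).strip().lower() not in PLACEHOLDERS


def record_coverage(records, expected_ids, id_key="sku"):
    """Share of the expected entity population present in the dataset."""
    seen = {rec[id_key] for rec in records}
    return len(seen & set(expected_ids)) / len(expected_ids)


def field_coverage(records, fields):
    """Population rate for each field across all records."""
    n = len(records)
    return {f: sum(is_populated(rec.get(f)) for rec in records) / n for f in fields}


def depth_coverage(records, field, min_words):
    """Share of records whose text field has at least `min_words` words."""
    deep = sum(
        1 for rec in records
        if is_populated(rec.get(field)) and len(str(rec[field]).split()) >= min_words
    )
    return deep / len(records)


def missing_days(observed_days, start, end):
    """Calendar days in [start, end] with no collected data."""
    present = set(observed_days)
    span = (end - start).days + 1
    days = (start + timedelta(days=i) for i in range(span))
    return [d for d in days if d not in present]


def source_count(records, source_key="source"):
    return len({rec[source_key] for rec in records})


records = [
    {"sku": "A1", "price": 19.99, "source": "marketplace_a",
     "collected_on": date(2024, 1, 1),
     "description": "Compact wireless speaker with twelve hour battery life"},
    {"sku": "A2", "price": None, "source": "marketplace_a",
     "collected_on": date(2024, 1, 3), "description": "Speaker"},
]
expected_skus = ["A1", "A2", "A3", "A4", "A5"]

rec_cov = record_coverage(records, expected_skus)
fld_cov = field_coverage(records, ["price", "description"])
depth = depth_coverage(records, "description", min_words=5)
gaps = missing_days([r["collected_on"] for r in records], date(2024, 1, 1), date(2024, 1, 3))
sources = source_count(records)
```

The results are deliberately left as five separate values rather than one blended score, since a strong number on one dimension can otherwise hide a weak one on another.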

Completeness can also degrade silently when source page structures change without notice. CMS-driven sources are particularly prone to this. Understanding how automated extraction handles WordPress-based content is one practical example of catching field-level gaps at the source before they propagate into the training dataset.

Because these gaps tend to surface late, during feature engineering or model evaluation, the fix at that stage is expensive. The solution is a completeness audit built into the pipeline as a standing check, not bolted on after the fact when a model is already underperforming.

How Freshness, Bias, and Completeness Compound Each Other

Most teams treat these three metrics as separate concerns with separate owners. This works at small scale. As the pipeline grows and the data sources multiply, these metrics start influencing each other in ways that no single-metric view will catch.

Freshness affects bias. When one source updates more frequently than others, it naturally becomes overrepresented in any recent snapshot of the dataset. That imbalance is source bias. The model does not learn the broader market pattern. It learns the update rhythm of whichever source happened to refresh most recently.
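A small worked example makes the effect concrete. Assume two hypothetical sources that each track ten products, where one refreshes hourly and the other once a day. Counting records in a 24-hour snapshot gives a very different picture from counting distinct entities.

```python
from collections import Counter


def record_share(records):
    """Share of raw records contributed by each source."""
    counts = Counter(rec["source"] for rec in records)
    total = sum(counts.values())
    return {src: c / total for src, c in counts.items()}


def entity_share(records):
    """Share of distinct (source, entity) pairs contributed by each source."""
    entities = {(rec["source"], rec["entity_id"]) for rec in records}
    counts = Counter(src for src, _ in entities)
    total = sum(counts.values())
    return {src: c / total for src, c in counts.items()}


# Hypothetical 24-hour snapshot: source_a refreshes hourly, source_b once a day.
snapshot = [
    {"source": "source_a", "entity_id": i, "hour": h}
    for i in range(10) for h in range(24)
]
snapshot += [{"source": "source_b", "entity_id": i, "hour": 0} for i in range(10)]

by_record = record_share(snapshot)
by_entity = entity_share(snapshot)
```

Both sources cover the same number of products, yet the faster one supplies 240 of the 250 records. Comparing the two shares side by side is one way to tell update rhythm apart from genuine market weight.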

Bias affects completeness. When a dataset leans toward certain categories or regions, coverage appears stronger than it actually is. You have full records for one slice of the market and sparse records for everything else. The aggregate completeness score looks acceptable while hiding serious gaps at the segment level where the model will be evaluated.
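The masking effect is easy to reproduce. In this sketch, with made-up category names and counts, the aggregate population rate for a price field looks healthy while one segment is mostly empty.

```python
from collections import defaultdict


def coverage_by_segment(records, field, segment_key):
    """Population rate of `field` within each segment."""
    filled = defaultdict(int)
    total = defaultdict(int)
    for rec in records:
        segment = rec[segment_key]
        total[segment] += 1
        if rec.get(field) is not None:
            filled[segment] += 1
    return {segment: filled[segment] / total[segment] for segment in total}


# Hypothetical dataset: 90 well-populated electronics records, 10 sparse furniture ones.
records = (
    [{"category": "electronics", "price": 99.0}] * 90
    + [{"category": "furniture", "price": 250.0}] * 2
    + [{"category": "furniture", "price": None}] * 8
)

overall = sum(rec["price"] is not None for rec in records) / len(records)
per_segment = coverage_by_segment(records, "price", "category")
```

An overall rate of 92% would pass most thresholds, while the furniture segment sits at 20%. Reporting completeness per segment, not only in aggregate, is what exposes the gap.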

Completeness affects freshness. A dataset can refresh on schedule and still be incomplete if certain attributes have stopped appearing or if some categories thin out over time without triggering any alert. A record with a new timestamp but an empty price field is not fresh in any meaningful sense, so high refresh frequency does not guarantee a usable dataset. Both conditions need to be tracked independently and cross-checked.

The practical consequence of these interactions is that pipelines can drift in ways that no single metric would catch on its own. A small coverage gap becomes a representation imbalance. A few stale fields become a steady tilt in outputs. Within weeks, the model is operating on a version of the data that no longer maps to the environment it is supposed to understand. Monitoring all three together is not just good practice. It is the only way to catch the compounding effects before they surface as production failures.

Building a Data Quality Monitoring Framework for AI Pipelines

A pipeline that only checks data quality during periodic audits is a pipeline that discovers problems after they have already done damage. The goal of a monitoring framework is continuous visibility: knowing the condition of your data at every stage, with defined thresholds that trigger action before any issue reaches the training environment.

Think of data quality monitoring the same way engineering teams approach observability. You do not wait for a service to go down before checking its health. You track indicators continuously, store baselines, and alert on deviations. Data quality deserves the same treatment, with the same level of instrumentation.

A practical monitoring framework runs on three layers.

The measurement layer calculates freshness scores, representation ratios, and completeness percentages across the full dataset on a continuous basis. It captures how each metric changes over time so you can see trends, not just point-in-time values. A single snapshot tells you where things stand today. A trend tells you whether things are improving, stable, or quietly degrading.
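To illustrate the difference between a snapshot and a trend, the sketch below fits a least-squares slope to a stored history of one metric. The daily values and the 0.90 threshold are invented for the example, and the helper name is an assumption.

```python
def trend_slope(values):
    """Least-squares slope of a metric over equally spaced observations."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations to compute a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    numerator = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


# Hypothetical daily field-population rates for one critical field.
history = [0.97, 0.96, 0.95, 0.94, 0.93]
threshold = 0.90

slope = trend_slope(history)
passes_today = history[-1] >= threshold
degrading = slope < 0
```

Every value in this history clears the threshold, so a point-in-time check would report nothing. The negative slope is the early signal that the metric is heading toward a breach.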

The expectation layer defines acceptable thresholds for each metric, specific to each data type and use case. For freshness: what percentage of records must have been updated within the last 24 hours for this category? For completeness: what is the minimum field population rate before records are considered usable for training? For bias: what is the maximum source contribution share before weighting adjustments are required? These thresholds should not be universal. They should be calibrated to the actual volatility and importance of each data type.
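Per-type thresholds can live in a small, explicit structure. The following is a sketch under assumed names and numbers: the `Expectation` fields mirror the three questions above, and the threshold values for each data type are placeholders rather than recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expectation:
    min_fresh_share_24h: float   # share of records updated in the last 24 hours
    min_field_population: float  # minimum population rate for required fields
    max_source_share: float      # largest share any single source may contribute


# Hypothetical thresholds, calibrated per data type rather than globally.
EXPECTATIONS = {
    "electronics_price": Expectation(0.95, 0.98, 0.50),
    "furniture_catalog": Expectation(0.60, 0.90, 0.60),
}


def violations(data_type, measured):
    """Return the names of metrics that fall outside the type's expectations."""
    exp = EXPECTATIONS[data_type]
    failed = []
    if measured["fresh_share_24h"] < exp.min_fresh_share_24h:
        failed.append("freshness")
    if measured["field_population"] < exp.min_field_population:
        failed.append("completeness")
    if measured["source_share"] > exp.max_source_share:
        failed.append("bias")
    return failed


measured = {"fresh_share_24h": 0.80, "field_population": 0.99, "source_share": 0.55}
price_failures = violations("electronics_price", measured)
catalog_failures = violations("furniture_catalog", measured)
```

The same measurements fail two checks for the volatile pricing feed and none for the slower catalog, which is the behavior a universal threshold cannot provide.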

The alerting layer sends signals when values fall outside acceptable ranges. A freshness score dropping below threshold, a completeness rate falling on a critical field, or a single source share climbing beyond acceptable limits are all clear signals that warrant immediate investigation. The value is catching the signal early, before the issue propagates downstream through transformation, feature engineering, and into the model.

A well-designed framework also connects the three metrics so that alerts on one automatically prompt checks on the others. A drop in freshness should trigger a source bias review. A completeness drop should prompt a check for schema changes at the source. A spike in source bias should lead to a review of coverage and time windows. These connections turn individual metric alerts into a coordinated diagnostic system that surfaces root causes, not just symptoms.
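The cross-metric connections described above can be captured as a simple lookup from an alert to the follow-up checks it should prompt. The check names here are hypothetical labels; what each check actually does would depend on the pipeline.

```python
# Mapping from an alerting metric to the follow-up checks it should prompt.
# The pairings follow the text above; the check names are illustrative.
FOLLOW_UP_CHECKS = {
    "freshness": ["source_bias_review"],
    "completeness": ["source_schema_change_check"],
    "bias": ["coverage_review", "time_window_review"],
}


def plan_checks(alerts):
    """Return de-duplicated follow-up checks in first-seen order."""
    planned = []
    for alert in alerts:
        for check in FOLLOW_UP_CHECKS.get(alert, []):
            if check not in planned:
                planned.append(check)
    return planned


todo = plan_checks(["freshness", "bias"])
```

Keeping the mapping as data rather than burying it in alert handlers makes the diagnostic paths easy to review and extend as new interactions are discovered.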

Organizations that implement continuous quality monitoring tend to see fewer model failures than those relying on static validation policies. The investment in monitoring pays back in fewer retraining cycles, more predictable model behavior, and faster debugging when issues do arise.

How PromptCloud Helps Teams Maintain AI-Ready Data Quality at Scale

Maintaining freshness, bias control, and completeness across large-scale web data pipelines requires infrastructure that most teams cannot build and operate in-house. Crawling thousands of sources, handling schema changes across platforms, adapting to anti-scraping measures, and delivering clean structured data on a reliable schedule is a full engineering function on its own.

PromptCloud is built to handle exactly this layer. The platform delivers structured, validated web data to AI pipelines across industries, with crawl schedules calibrated to source volatility so that pricing data, product catalogs, job listings, and review feeds stay current without manual intervention. Coverage is built across geographies and source categories from the start, rather than patched together reactively when bias shows up in model outputs.

For teams building training datasets for large language models, recommendation systems, or domain-specific classifiers, PromptCloud provides the data infrastructure that keeps freshness, representation, and field-level completeness within defined parameters. Pipelines are monitored for schema drift, coverage gaps, and extraction failures, with structured outputs delivered in formats that plug directly into training workflows without additional transformation overhead.

The result is a data layer that teams can trust to hold up in production. Clean records, consistent coverage, and delivery on a schedule that matches the actual pace of change in the underlying sources. When the data layer is reliable, model development can focus on what it should: building systems that learn the right patterns rather than debugging why the inputs stopped reflecting reality.

Data Quality Is Not a Pre-Training Checklist

The most common mistake teams make with data quality is treating it as a one-time gate before training starts. Run the checks, clean the data, feed it to the model, and move on. This approach works exactly once: at launch, before the pipeline evolves and the real world changes underneath it.

Freshness, bias, and completeness are not static properties of a dataset. They shift every time a source changes its update schedule, every time a new category starts generating volume, every time a region that was well-covered begins producing fewer records. A pipeline that was high quality at launch can degrade silently over months while producing outputs that look normal until they stop being normal entirely.

The teams that build reliable AI systems treat data quality as infrastructure, not as a pre-launch task. They monitor continuously, define thresholds with intention, connect the three core metrics into a single diagnostic view, and respond to drift before it compounds. That discipline separates AI pipelines that hold up under real-world conditions from ones that only work in the controlled environment of initial testing.

The model matters. But the data it learns from matters more. And the systems that maintain that data matter just as much as either.

Frequently Asked Questions

1. What are data quality metrics for AI and why do they matter?

Data quality metrics for AI are measurable indicators that determine whether a dataset is fit for training or powering an AI model. The most critical are freshness (how current the data is), bias (whether certain sources, categories, or regions are overrepresented), and completeness (whether all required records and fields are present at the required depth). These metrics matter because AI models amplify whatever patterns exist in the training data. A model trained on stale, skewed, or incomplete data does not just produce wrong answers. It produces systematically wrong answers at scale, and those errors compound over every inference it makes.

2. How is data freshness measured in an AI pipeline?

Data freshness is measured by tracking the timestamp of each record against a defined acceptable lag window for that specific data type. In practice, this involves three checks: the age distribution of records across the full dataset, the time between a real-world change at the source and when that change appears in the pipeline, and the propagation delay between raw extraction and the point where data becomes usable for training. A continuous freshness score is calculated as the percentage of records updated within the target window. When this score drops below the defined threshold for a data type, it signals a need to adjust crawl frequency, investigate a pipeline delay, or check whether the source has changed its update behavior.

3. What causes bias in web-sourced training data?

Bias in web-sourced training data is almost always structural rather than intentional. It emerges when certain sources update more frequently than others and therefore contribute disproportionately to any snapshot of the dataset. It emerges when specific categories or brands have more active listing practices than competitors. It emerges when geographic coverage reflects where more digital commerce activity occurs rather than where the actual target market sits. It also emerges when review data is dominated by a subset of highly active users whose preferences do not represent the broader audience. None of these causes require a deliberate decision. They happen naturally in any large-scale web data collection unless active steps are taken to monitor and adjust representation.

4. How do you fix data completeness issues in AI training datasets?

Fixing completeness issues starts with identifying which of five dimensions is failing: record coverage (missing entities), field coverage (unpopulated attributes), depth of information (shallow field values), temporal coverage (gaps in time-series data), or source variability coverage (too few platforms represented). Once the dimension is identified, the fix depends on the root cause. Missing records usually require expanded crawl coverage. Field gaps often result from schema changes at the source that the extraction logic has not caught up with. Temporal gaps need historical backfills. Source diversity gaps require adding new feeds. Completeness checks should be embedded in the pipeline as standing validation rules, not run as ad hoc audits when a model starts underperforming.

5. What is the difference between data accuracy and data completeness in machine learning?

Data accuracy refers to whether the values in a record correctly reflect reality. A price field that contains the wrong number has an accuracy problem. Data completeness refers to whether all required records and fields exist in the dataset at all. A missing price field is a completeness problem. In machine learning, both matter, but they fail in different ways. An accuracy failure introduces wrong signal that the model learns. A completeness failure introduces absence of signal, which means the model has no information for certain patterns and will generalize poorly to cases it never encountered during training. Completeness failures are often harder to detect because missing data rarely throws an error. It simply leaves gaps in what the model can learn.

6. How does source bias in training data affect model predictions?

When a training dataset is dominated by one source, the model learns the patterns, vocabulary, pricing norms, and sentiment tone of that source rather than the broader market. This shows up in several ways. A recommendation model trained heavily on one marketplace will favor product attributes that are common on that platform. A sentiment classifier trained on reviews from one platform will misread the tone of reviews from others. A pricing model trained on a single retailer’s data will produce recommendations that reflect that retailer’s strategy, not the competitive landscape. Source bias does not produce obvious errors. It produces subtly skewed outputs that look plausible until the model is tested against data from sources it has rarely seen.

7. What is data drift and how does it relate to freshness in AI pipelines?

Data drift refers to a change in the statistical properties of the data your model receives during inference compared to what it was trained on. Stale training data is one of the primary contributors to data drift. When training data becomes stale, the real-world environment continues evolving while the model’s understanding of it stays fixed. The gap between what the model expects and what it encounters grows over time. This produces a specific type of drift where the model performs well on data from the period it was trained on and progressively worse on data from further into the future. Monitoring freshness continuously is one of the most direct ways to catch data drift before it degrades model performance in production.

8. How do you build a data quality monitoring framework for AI?

A data quality monitoring framework for AI has three functional layers. The measurement layer continuously calculates freshness scores, bias ratios, and completeness percentages across the dataset and stores those values over time to enable trend analysis. The expectation layer defines acceptable thresholds for each metric, calibrated per data type and use case rather than using universal standards. The alerting layer sends signals when values fall outside defined ranges and connects alerts across metrics so that a freshness drop triggers a bias check and a completeness decline triggers a schema review. The framework works best when it is integrated into the pipeline itself rather than run as a separate validation step, and when alerts on one metric automatically prompt investigation of the others.

9. What percentage of AI projects fail due to poor data quality?

Industry research consistently points to data quality as a leading cause of AI project failure. A survey by Dimensional Research found that 96% of organizations encounter data quality problems when training AI models. A VentureBeat analysis found that 87% of data science projects never reach production, with inadequate data as the primary reason. Gartner research has estimated that poor data quality costs organizations an average of $12.9 million annually, and that number does not account for the indirect costs of failed AI initiatives, delayed deployments, or the resources spent debugging models when the underlying data issue was never identified.

10. How are freshness, bias, and completeness connected in an AI data pipeline?

These three metrics interact in ways that make isolated monitoring insufficient. Freshness affects bias because sources that update more frequently naturally become overrepresented in recent snapshots of the dataset, creating source-level imbalance. Bias affects completeness because a dataset that leans toward certain categories or regions appears more complete than it is, masking serious coverage gaps in underrepresented segments. Completeness affects freshness because a pipeline that refreshes on schedule but stops populating certain fields correctly is not actually delivering fresh data in any meaningful sense. Monitoring all three metrics together, with alerts on one metric triggering checks on the others, is the only way to catch the compounding effects of these interactions before they produce production failures.