What Alternative Data Actually Means for Institutional Investors

Quarterly earnings tell you what already happened. Alternative data shows you what is happening right now — in web traffic, consumer spending, hiring activity, and supply chains. For hedge funds, the edge is no longer in which data you can access. It is in how you collect it, how clean it is, and whether your web scraping infrastructure can deliver it at the quality and cadence institutional decisions require. This guide covers the data types that move markets, why web scraping is the collection layer funds rely on most, and what separates investable signal from noise when the stakes are measured in basis points.

Traditional financial analysis has a timing problem. Earnings reports land 45 to 90 days after the period they describe. By then, the market has already moved. The investors who moved first were reading different signals — and sourcing them through methods that fall outside the standard data stack.

Alternative data is any information that reflects real-world economic activity before it appears in official filings. It captures what companies are actually doing — not what they report — and what consumers are spending on, not what sentiment surveys suggest.

For hedge funds operating at institutional scale, that distinction drives material alpha. Research teams at multi-strategy and quantitative funds do not treat alt-data as a supplementary layer. It is a primary input in how they build conviction, size positions, and manage portfolio risk on a continuous basis.

The clearest way to understand the distinction

Traditional data answers: What did this company report?

Alternative data answers: What is this company actually doing right now?

For funds targeting early-stage alpha, that second question is the only one that matters. The challenge is answering it consistently — at scale, with clean data, in near real time. That is where alternative data web scraping becomes the infrastructure question behind the investment question.

Stop building positions on data that’s already 90 days old.

Get structured, schema-ready web data delivered to your exact specifications, across any source, at whatever cadence your use case demands.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

The Alternative Data Sources That Drive Real Investment Decisions

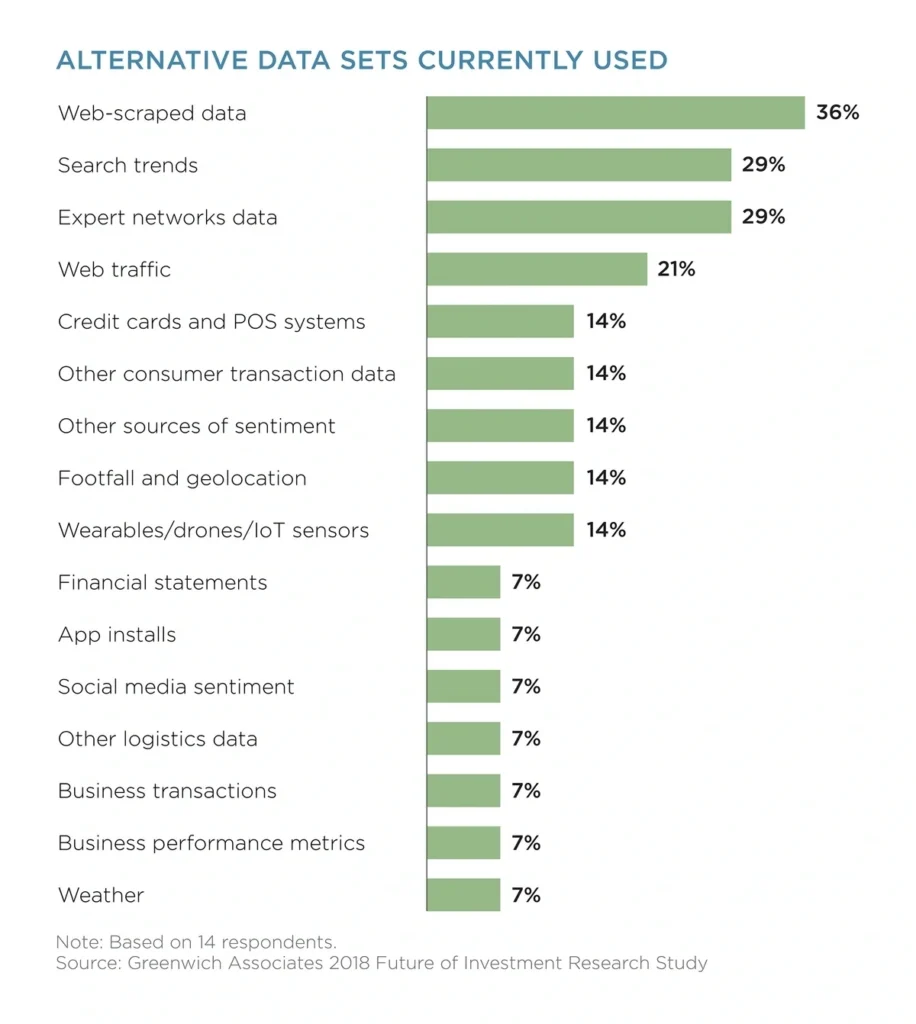

Not all alt-data is equal. Hedge funds focus on sources that are structured enough to model, frequent enough to matter, and differentiated enough to carry edge. Below are the eight categories that institutional investors consistently prioritise — and the specific signals they extract from each.

1. Web Traffic and Search Demand

Web visit data from providers like SimilarWeb and Semrush reflects demand momentum before revenue materialises. Funds track unique visitors, time-on-site, add-to-cart behaviour, and branded search volume. A sustained traffic inflection at a retailer or SaaS company often precedes an earnings beat by six to ten weeks.
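One way to turn "sustained traffic inflection" into a testable rule is to require several consecutive weeks of week-over-week growth above a minimum rate. The sketch below assumes weekly visit counts have already been exported from a traffic panel into a plain list. The function name, the three-week window, and the 5% threshold are hypothetical choices, not a provider API or a calibrated rule.

```python
from typing import Sequence


def sustained_inflection(
    weekly_visits: Sequence[float],
    min_weeks: int = 3,
    min_growth: float = 0.05,
) -> bool:
    """Return True if each of the last `min_weeks` week-over-week growth rates
    is at least `min_growth` (a hypothetical 'sustained inflection' rule)."""
    if len(weekly_visits) < min_weeks + 1:
        return False  # not enough history to measure min_weeks growth rates
    recent = weekly_visits[-(min_weeks + 1):]
    for prev, curr in zip(recent, recent[1:]):
        if prev <= 0:
            return False  # growth is undefined from a zero or negative base
        if (curr - prev) / prev < min_growth:
            return False
    return True


# Usage: a flat period followed by three consecutive weeks of >= 5% growth.
visits = [100_000, 101_000, 100_500, 106_000, 112_000, 119_000]
print(sustained_inflection(visits))  # True
```

Requiring consecutive weeks, rather than one large jump, is what filters out one-off spikes such as press coverage.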

2. Credit Card and Transaction Data

Aggregated, anonymised spending data is one of the highest-signal alt-data categories. Providers like Yodlee and Second Measure show category-level purchase trends, shifts in discretionary vs. essential spending, and early winners and losers in retail. Funds use this data to build revenue estimates that are often more accurate than sell-side consensus.

3. Job Postings and Hiring Trends

A company’s hiring footprint reflects its operational roadmap. Rapid growth in engineering or supply chain roles signals product expansion or geographic entry. Sudden hiring freezes — especially in customer-facing divisions — often precede guidance cuts. Job posting data is also one of the more accessible categories for scraping at scale from public job boards.

4. Web-Scraped E-Commerce Intelligence

Product pricing, stock availability, promotional depth, and inventory restocking cycles change hourly on major retail platforms. Funds use alternative data web scraping to track these signals in near real time across thousands of SKUs. A pattern of sustained stockouts on a brand’s top products often signals stronger-than-expected demand, while deep discounting can flag inventory stress.
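The two signals in that last sentence reduce to simple daily aggregates over scraped listing snapshots. This is a minimal sketch: the `SkuSnapshot` record and both function names are hypothetical, and a real pipeline would compute these per brand and per day and watch the trend rather than a single reading.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class SkuSnapshot:
    """One scraped observation of a product listing (hypothetical schema)."""
    sku: str
    in_stock: bool
    list_price: float
    sale_price: float


def stockout_rate(snapshots: Iterable[SkuSnapshot]) -> float:
    """Share of observed SKUs that were out of stock."""
    snaps = list(snapshots)
    if not snaps:
        return 0.0
    return sum(not s.in_stock for s in snaps) / len(snaps)


def avg_discount_depth(snapshots: Iterable[SkuSnapshot]) -> float:
    """Mean fractional discount (sale vs. list price) over SKUs with a valid list price."""
    depths = [
        (s.list_price - s.sale_price) / s.list_price
        for s in snapshots
        if s.list_price > 0
    ]
    return sum(depths) / len(depths) if depths else 0.0


day = [
    SkuSnapshot("A1", False, 50.0, 50.0),
    SkuSnapshot("A2", True, 40.0, 30.0),
    SkuSnapshot("A3", False, 20.0, 20.0),
    SkuSnapshot("A4", True, 80.0, 60.0),
]
print(stockout_rate(day), avg_discount_depth(day))  # 0.5 0.125
```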

5. Customer Reviews and Product Ratings

Review velocity and sentiment trends on platforms like Amazon, G2, and Trustpilot reveal shifts in product quality and consumer satisfaction before they appear in churn numbers or net promoter data. A fund tracking a consumer tech company can detect early product-market fit issues months before they surface in quarterly results.
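Review velocity and a sentiment trend can start as a weekly rollup. The sketch below assumes reviews have already been scraped into (date, star rating) pairs and uses the mean star rating as a crude stand-in for sentiment; a production system would more likely score the review text with a sentiment model. The function name is hypothetical.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, Iterable, List, Tuple


def weekly_review_stats(
    reviews: Iterable[Tuple[date, float]],
) -> Dict[Tuple[int, int], Tuple[int, float]]:
    """Roll (review_date, star_rating) pairs up by ISO week.

    Returns {(iso_year, iso_week): (review_count, mean_rating)} in week order.
    The weekly count is review velocity; the mean rating is a crude sentiment proxy.
    """
    buckets: Dict[Tuple[int, int], List[float]] = defaultdict(list)
    for review_date, rating in reviews:
        iso_year, iso_week, _ = review_date.isocalendar()
        buckets[(iso_year, iso_week)].append(rating)
    return {
        week: (len(ratings), sum(ratings) / len(ratings))
        for week, ratings in sorted(buckets.items())
    }


reviews = [
    (date(2024, 1, 1), 5.0),
    (date(2024, 1, 3), 4.0),
    (date(2024, 1, 8), 2.0),
    (date(2024, 1, 9), 1.0),
    (date(2024, 1, 10), 3.0),
]
print(weekly_review_stats(reviews))
# {(2024, 1): (2, 4.5), (2024, 2): (3, 2.0)}
```

In this toy data, velocity rises while the mean rating falls, which is the kind of combination that would warrant a closer look.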

6. OTA and Travel Demand Signals

Booking patterns across airlines, hotels, and car rental platforms indicate both macroeconomic strength and company-specific performance. Funds tracking hospitality or travel-adjacent companies use online travel agency (OTA) aggregation to monitor occupancy trends, pricing power, and seasonal demand shifts, often scraping aggregator pages directly to build proprietary datasets.

7. Supply Chain and Shipment Data

Customs filings and shipping manifest data reveal real operational movement — import volumes, supplier relationships, restocking cycles, and geographic concentration risk. This category is especially valuable for tracking manufacturing companies and large retailers where inventory dynamics directly impact earnings.

8. App Usage and Engagement Metrics

For consumer tech and fintech companies, daily active user trends, session length, and uninstall rates proxy for product momentum. App usage data sourced through panel providers or aggregated platforms can tell a fund whether a company is growing its engaged base or simply inflating installs with low-quality acquisition.

Need This at Enterprise Scale?

While internal scraping works for a few sources, institutional-scale collection introduces problems, such as schema drift and freshness SLAs, that compound fast. Most research teams compare a managed service with an internal build to determine the true cost of ownership.

Why Web Scraping Is the Collection Layer Behind Alternative Data

Most hedge funds do not buy alternative data in a clean, pre-packaged form. They build it. And the most common method of doing that — especially for web traffic, e-commerce pricing, job postings, and review data — is web scraping.

Web scraping extracts structured information from publicly accessible web pages at scale and on a defined cadence. For a fund tracking 200 retailers across pricing, stock availability, and promotional activity, manual monitoring is not feasible. A well-configured scraping pipeline delivers that data daily or hourly, normalised and ready for analysis.
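To make "structured and normalised" concrete, here is a minimal sketch of the parsing stage only, using Python's standard-library `html.parser`. It assumes the page HTML has already been fetched; fetching, JavaScript rendering, scheduling, and retries are out of scope, and the CSS class names are hypothetical.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional, Tuple


class ProductPageParser(HTMLParser):
    """Capture the text of elements whose CSS class maps to a field name.

    The class names below are hypothetical. Every real site needs its own
    selectors, and those selectors break when the site's markup changes.
    """

    FIELD_CLASSES = {"product-price": "price", "stock-status": "availability"}

    def __init__(self) -> None:
        super().__init__()
        self.fields: Dict[str, str] = {}
        self._current: Optional[str] = None

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        classes = (dict(attrs).get("class") or "").split()
        for css_class, field in self.FIELD_CLASSES.items():
            if css_class in classes:
                self._current = field

    def handle_endtag(self, tag: str) -> None:
        self._current = None  # stop capturing when any element closes

    def handle_data(self, data: str) -> None:
        if self._current and data.strip():
            self.fields[self._current] = data.strip()


def parse_product(html: str) -> Dict[str, object]:
    """Turn raw page HTML into a normalised record: price as float, stock as bool."""
    parser = ProductPageParser()
    parser.feed(html)
    parser.close()
    raw_price = parser.fields.get("price", "")
    price = float(raw_price.replace("$", "").replace(",", "")) if raw_price else None
    availability = parser.fields.get("availability", "").strip().lower()
    return {"price": price, "in_stock": availability == "in stock"}


sample = (
    '<div><span class="product-price">$1,299.00</span>'
    '<p class="stock-status">In Stock</p></div>'
)
print(parse_product(sample))  # {'price': 1299.0, 'in_stock': True}
```

The normalisation step (stripping currency symbols and thousands separators, mapping availability text to a boolean) is what makes the output comparable across retailers.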

According to PromptCloud’s State of Web Scraping 2026 report, over 67% of financial services teams using alternative data cite data freshness and collection reliability as their top infrastructure concerns — ahead of cost and vendor availability. This reflects a shift: the bottleneck is no longer access to data sources. It is the ability to collect consistently, at scale, without signal degradation.

Why funds build scraping infrastructure rather than relying solely on data vendors

Proprietary datasets carry more edge than commodity purchases. When the same vendor sells the same dataset to 30 funds, the information advantage erodes. Custom scraping pipelines built around specific investment theses generate data that competitors cannot easily replicate.

The practical challenge is that web scraping at institutional scale requires significant engineering. Dynamic rendering, anti-bot protections, IP rotation, schema drift, and data validation are ongoing problems. Many funds that begin with internal scraping infrastructure eventually move to managed collection providers to maintain freshness and reliability without absorbing the operational overhead.

How Hedge Funds Integrate Alternative Data Into Their Investment Process

Alternative data is only useful when it feeds into a repeatable research framework. Below is how institutional investment teams actually embed these signals — from the earliest stages of idea generation through to portfolio exit decisions.

Idea Generation

Analysts scan alt-data dashboards for early inflection points: a retailer with a 30% week-over-week traffic spike, a logistics company showing shipment acceleration, or a fintech app gaining session time. These signals form the basis of new investment ideas before any earnings data confirms the trend.

Thesis Validation

Once an idea shows potential, the team triangulates multiple datasets to test conviction. If web traffic is rising, does credit card transaction data show the same pattern? Is hiring growing in customer success and fulfilment roles? Do reviews show improving satisfaction scores? Convergence across data types strengthens the thesis. Divergence prompts reassessment.
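The convergence test can be expressed as a directional check across datasets. This is a deliberately simple sketch: it assumes each dataset has already been reduced to one period-over-period change for the same company and period, and it treats mixed or flat directions as divergence. Real triangulation would also weigh each source's reliability and lag; the dataset names are hypothetical.

```python
from typing import Dict


def triangulate(signal_changes: Dict[str, float], tolerance: float = 0.0) -> str:
    """Classify whether independent datasets agree on direction.

    `signal_changes` maps a dataset name to its period-over-period change
    (e.g. 0.08 for +8%). Changes within +/- `tolerance` count as flat.
    Returns "converge_up", "converge_down", or "diverge".
    """
    if not signal_changes:
        raise ValueError("need at least one signal")
    directions = set()
    for change in signal_changes.values():
        if change > tolerance:
            directions.add(1)
        elif change < -tolerance:
            directions.add(-1)
        else:
            directions.add(0)
    if directions == {1}:
        return "converge_up"
    if directions == {-1}:
        return "converge_down"
    return "diverge"


print(triangulate({"web_traffic": 0.12, "card_spend": 0.07,
                   "cs_hiring": 0.20, "review_rating": 0.03}))  # converge_up
print(triangulate({"web_traffic": 0.12, "card_spend": -0.04}))  # diverge
```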

Revenue Forecasting

Alt-data inputs feed quantitative models that generate forward estimates. Funds building proprietary revenue models for consumer-facing companies often achieve materially better forecast accuracy than sell-side consensus by incorporating real-time transaction and traffic data rather than management guidance alone.
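At its simplest, this step is a regression of reported revenue on an alt-data proxy, followed by a "nowcast" from the current quarter's proxy before results are published. The sketch below uses a one-variable ordinary least squares fit in plain Python with made-up illustrative numbers; real models use longer histories, multiple inputs, seasonality adjustments, and out-of-sample testing.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = a + b * x. Returns (a, b)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has no variance")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


# Hypothetical history: observed panel spend ($m) vs. reported revenue ($m) per quarter.
panel_spend = [10.0, 12.0, 11.0, 14.0]
reported_rev = [200.0, 240.0, 220.0, 280.0]
a, b = fit_line(panel_spend, reported_rev)
nowcast = a + b * 15.0  # current quarter's panel spend, observed before the report
print(round(nowcast, 1))  # 300.0
```

Comparing the nowcast with consensus, rather than using it in isolation, is where any forecasting edge would show up.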

Portfolio Monitoring

Once a position is held, alternative data becomes a continuous early-warning system. A sudden drop in web traffic, a negative review surge, or a freeze in hiring across key divisions can all flag developing problems weeks before a preannouncement or earnings release.
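An early-warning layer can start as plain threshold rules over the signals named above. The rule set, metric names, and thresholds below are hypothetical placeholders; in practice thresholds would be calibrated per company and per metric against historical volatility.

```python
from typing import Dict, List, Tuple

# Hypothetical alert rules: metric name -> (direction, threshold as a fraction).
# "drop" fires when the metric falls by more than the threshold;
# "rise" fires when it increases by more than the threshold.
ALERT_RULES: Dict[str, Tuple[str, float]] = {
    "web_visits": ("drop", 0.20),
    "negative_reviews": ("rise", 0.50),
    "open_roles_key_divisions": ("drop", 0.40),
}


def check_alerts(
    baseline: Dict[str, float],
    current: Dict[str, float],
    rules: Dict[str, Tuple[str, float]] = ALERT_RULES,
) -> List[str]:
    """Compare current readings with a baseline and return triggered alerts."""
    alerts = []
    for metric, (direction, threshold) in rules.items():
        base = baseline.get(metric)
        now = current.get(metric)
        if base is None or now is None or base == 0:
            continue  # missing data should be caught by a separate freshness check
        change = (now - base) / base
        if direction == "drop" and change < -threshold:
            alerts.append(f"{metric} down {abs(change):.0%}")
        elif direction == "rise" and change > threshold:
            alerts.append(f"{metric} up {change:.0%}")
    return alerts


baseline = {"web_visits": 50_000, "negative_reviews": 40, "open_roles_key_divisions": 120}
current = {"web_visits": 37_000, "negative_reviews": 70, "open_roles_key_divisions": 110}
print(check_alerts(baseline, current))  # ['web_visits down 26%', 'negative_reviews up 75%']
```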

Exit Signals

Alt-data is as useful on the exit side as the entry side. Declining engagement, competitor share gains visible through e-commerce pricing data, or deteriorating supply chain signals help funds exit positions before the market reacts to public information.

The Real Challenges Hedge Funds Face With Alternative Data

Access to alternative data is no longer the barrier it was five years ago. The challenge has shifted to quality, consistency, and integration. These are the obstacles that separate funds producing durable alpha from those generating expensive noise.

| Challenge | Why It Matters | What It Looks Like in Practice |
|---|---|---|
| Noise vs. signal | Most alt-data arrives raw and context-free | Traffic spikes from press coverage misread as demand acceleration |
| Data freshness | Stale data loses its predictive edge completely | Pricing scraped weekly is useless for high-frequency trading decisions |
| Schema drift | Website structure changes break scraping pipelines silently | Weeks of missing data before the gap is detected |
| Multi-source integration | Real signal requires triangulation across datasets | Merging job data with traffic data requires consistent entity resolution |
| Compliance and privacy | Regulations on data collection tighten every year | Using PII-adjacent data without proper legal review creates regulatory exposure |
| Cost vs. edge | Commodity datasets erode quickly as more funds access them | Paying for the same vendor feed as 30 competitors produces no edge |
| Internal infrastructure | Scraping at scale requires dedicated engineering resources | Funds without managed pipelines lose data reliability as source sites evolve |

The infrastructure gap is especially pronounced for mid-sized funds. Building and maintaining a scraping operation that delivers clean, fresh, reliable data across dozens of sources requires ongoing engineering investment that competes with alpha-generating activities. This is why managed data collection has become a procurement consideration alongside raw data vendor agreements.

What Separates Funds That Generate Alpha From Alternative Data vs. Those That Don’t

The gap is rarely about which data sources a fund uses. Most sophisticated funds have access to similar vendor catalogues. The gap is operational.

Funds that consistently extract alpha from alternative data share three characteristics. First, they build proprietary collection pipelines around specific investment theses rather than buying generic datasets. A fund with a thesis on regional grocery chains will build a price and stock scraper for 15 specific retailers rather than paying for a broad retail data feed that includes 10,000 SKUs across 500 chains.

Second, they invest in data normalisation before analysis. Raw alt-data — whether scraped or purchased — arrives inconsistently structured. Timestamps vary, currencies differ, entity names do not match ticker symbologies. Funds with strong data engineering teams process this into consistent, analysis-ready formats before any signal extraction begins.
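The three problems named above (timestamps, currencies, entity names) map to three normalisation steps. This sketch assumes ISO 8601 timestamps with UTC offsets, a static FX table, and a hand-maintained entity-to-ticker map; all reference values, the "Acme" entity, and the ACME ticker are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical reference data; a real pipeline would load FX rates per date
# and maintain a curated entity master rather than a hard-coded dict.
FX_TO_USD = {"USD": 1.0, "EUR": 1.10, "GBP": 1.25}
ENTITY_TO_TICKER = {"acme corp": "ACME", "acme corporation": "ACME", "acme inc.": "ACME"}


@dataclass(frozen=True)
class NormalisedRecord:
    ticker: str
    observed_at_utc: datetime
    price_usd: float


def normalise(entity: str, timestamp_iso: str, price: float,
              currency: str) -> Optional[NormalisedRecord]:
    """Map a raw observation to a ticker, a UTC timestamp, and a USD price.

    Returns None when the entity or currency cannot be resolved, so the record
    can be routed to review instead of silently entering the dataset.
    """
    ticker = ENTITY_TO_TICKER.get(entity.strip().lower())
    rate = FX_TO_USD.get(currency.upper())
    if ticker is None or rate is None:
        return None
    observed = datetime.fromisoformat(timestamp_iso)
    if observed.tzinfo is None:
        # A timestamp without an offset is ambiguous; reject it loudly.
        raise ValueError("timestamp must include a UTC offset")
    return NormalisedRecord(ticker, observed.astimezone(timezone.utc), round(price * rate, 2))


print(normalise("  Acme Corporation ", "2024-03-01T09:30:00+01:00", 20.0, "eur"))
```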

Third, they treat freshness as a first-order requirement. A credit card transaction dataset updated monthly may be sufficient for some analyses. But for funds making position decisions around product launches, supply chain events, or competitive pricing shifts, daily or sub-daily refresh cadences are necessary. Building infrastructure that guarantees this without manual intervention is a competitive advantage in itself.
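Guaranteeing freshness without manual intervention implies checking it automatically. A minimal sketch, assuming each source has a maximum acceptable age and the pipeline records the timestamp of each source's last successful delivery; the source names and SLA values are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def stale_sources(
    last_delivery: Dict[str, datetime],
    max_age: Dict[str, timedelta],
    now: datetime,
) -> List[str]:
    """Return the sources whose latest delivery is older than their freshness SLA.

    A source with an SLA but no recorded delivery is treated as stale.
    """
    stale = []
    for source, sla in max_age.items():
        delivered = last_delivery.get(source)
        if delivered is None or now - delivered > sla:
            stale.append(source)
    return sorted(stale)


now = datetime(2024, 6, 3, 12, 0, tzinfo=timezone.utc)
sla = {
    "retailer_prices": timedelta(hours=6),
    "job_postings": timedelta(days=1),
    "card_panel": timedelta(days=31),
}
last = {
    "retailer_prices": now - timedelta(hours=9),
    "job_postings": now - timedelta(hours=20),
}
print(stale_sources(last, sla, now))  # ['card_panel', 'retailer_prices']
```

Treating a never-delivered source as stale matters: a pipeline that silently stops writing is exactly the failure a freshness check exists to catch.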

Building the Infrastructure to Source Alternative Data at Scale

For hedge funds serious about alternative data web scraping, the architecture question is as important as the data strategy question. A few structural decisions determine whether a scraping programme delivers consistent, high-quality data or becomes an engineering maintenance burden.

- Define the thesis before the dataset. The most wasteful alt-data investments come from collecting broadly and hoping for insight. Define the specific investment thesis, identify which behavioural signals would confirm or reject it, then build collection infrastructure for those signals only.

- Prioritise data freshness over breadth. A narrow dataset refreshed daily carries more edge than a broad dataset refreshed weekly. For most investment applications, temporal precision outperforms coverage.

- Plan for schema drift from day one. Websites change structure regularly. Production-grade scraping pipelines need automated detection for schema changes and alerting when data stops flowing as expected.

- Validate before you model. Implement data quality checks — completeness, consistency, range validation — as a pipeline stage before data reaches analysts. Bad data that reaches a model is harder to detect than bad data caught at ingestion.

- Evaluate managed collection vs. internal build. For funds without dedicated data engineering teams, managed web scraping providers offer a faster path to clean, reliable data without the infrastructure overhead. The trade-off is reduced customisation. Evaluate based on the specificity of your thesis and the volume of sources you need to monitor.
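Schema-drift detection and validation before modelling can share one ingestion gate. The sketch below assumes a hypothetical per-source contract (required fields, a plausible price range, an expected row count) and returns human-readable problems suitable for alerting; the thresholds are placeholders.

```python
from typing import Dict, List, Sequence

# Hypothetical contract for one scraped source: required fields and a plausible price range.
REQUIRED_FIELDS = ("in_stock", "price", "sku")
PRICE_RANGE = (0.01, 10_000.0)


def validate_batch(
    rows: Sequence[Dict[str, object]],
    expected_rows: int,
    min_completeness: float = 0.9,
) -> List[str]:
    """Run ingestion checks on one scraped batch and return the problems found.

    An empty list means the batch may proceed to analysts and models.
    """
    problems: List[str] = []

    # Completeness: a sudden drop in row count often means pages stopped parsing.
    if expected_rows > 0 and len(rows) / expected_rows < min_completeness:
        problems.append(f"completeness {len(rows)}/{expected_rows} below threshold")

    # Schema drift: required fields arriving empty suggest selectors no longer match.
    for field in REQUIRED_FIELDS:
        missing = sum(1 for r in rows if r.get(field) is None)
        if missing:
            problems.append(f"field '{field}' missing in {missing} rows")

    # Consistency: a field should keep its type between runs (price as a number).
    prices = [r["price"] for r in rows if r.get("price") is not None]
    wrong_type = [p for p in prices if not isinstance(p, (int, float))]
    if wrong_type:
        problems.append(f"{len(wrong_type)} prices with non-numeric type")

    # Range validation: implausible values usually indicate a parsing error.
    low, high = PRICE_RANGE
    out_of_range = [p for p in prices if isinstance(p, (int, float)) and not low <= p <= high]
    if out_of_range:
        problems.append(f"{len(out_of_range)} prices outside {low}-{high}")

    return problems


batch = [
    {"sku": "A1", "price": 19.99, "in_stock": True},
    {"sku": "A2", "price": None, "in_stock": False},
    {"sku": "A3", "price": "19,99", "in_stock": True},
    {"sku": "A4", "price": 0.0, "in_stock": True},
]
for problem in validate_batch(batch, expected_rows=10):
    print(problem)
```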

Putting Alternative Data Web Scraping to Work

The funds generating durable alpha from alternative data today are not doing so because they have access to more sources than their peers. They are doing so because their collection infrastructure is more reliable, their normalisation is more rigorous, and their models are built on data that is fresher and more specific to their thesis.

Alternative data web scraping is the mechanism that makes this possible for the sources that matter most: e-commerce pricing, job postings, review sentiment, OTA trends, and web engagement. These signals exist in public data. The edge lies in the consistency and precision with which you extract them.

If your fund is currently relying on quarterly filings and vendor datasets that 30 other funds also subscribe to, the opportunity cost is real and growing. The market prices in public information faster than ever. What moves first is what your competitors are not yet reading — and that data, for most of the signals that matter, lives on the open web.

If You Want to Explore More

Here are four PromptCloud articles that align with the role of alternative data:

- See how large real estate datasets are collected in our guide to scraping Home.com data.

- Compare internal tools vs managed solutions in scraper tool vs web scraping service.

- Understand high-value collection strategies in scrape Google vs targeted scraping.

- Learn how travel listings influence pricing intelligence in Expedia listings competitor pricing strategy.

The CFA Institute provides a detailed overview of how investment professionals can responsibly use alternative datasets while maintaining compliance and ethical standards.

FAQs

1. Why do hedge funds rely on alternative data today?

Because traditional financial sources — earnings reports, SEC filings, analyst notes — are available to every market participant simultaneously. They provide no timing advantage. Alternative data, sourced through web scraping and behavioural signals, reflects real-world activity weeks or months before official disclosures confirm it. That time advantage is where alpha is generated.

2. Which alternative data sources offer the strongest predictive value?

Credit card transaction data and web traffic analytics consistently score highest for revenue prediction accuracy. Job posting data is particularly strong for anticipating operational shifts and product direction. E-commerce pricing and inventory signals are most valuable for companies whose stock prices are highly sensitive to margin and demand dynamics.

3. What is alternative data web scraping and how is it different from buying data?

Alternative data web scraping is the process of extracting structured information from publicly accessible websites at scale (pricing pages, job boards, review platforms, OTA listings) using automated pipelines. The difference from buying data is proprietary control: scraped datasets are built around a specific thesis and are not shared with competitors, whereas a vendor dataset is sold to every fund that subscribes. The trade-off is infrastructure cost and maintenance complexity.

4. How do hedge funds integrate alternative data into their workflow?

The most effective integration follows the investment cycle: alt-data feeds idea generation and early thesis formation, triangulation across multiple sources builds conviction, quantitative models incorporating alt-data produce forecasts, and continuous monitoring flags early warning signals once a position is held. The key structural requirement is clean, normalised data that reaches analysts in a consistent, timely format.

5. Is using alternative data legal and compliant?

Collecting and using publicly available data through web scraping is generally permissible, but the legal framework varies by jurisdiction, data type, and collection method. Funds must ensure that scraping does not violate terms of service in ways that create legal exposure, that no personally identifiable information is incorporated, and that all data vendors they work with have conducted proper legal review of their collection practices. Engaging legal counsel with expertise in financial data regulation is standard practice at institutional funds.