What is an AI-Ready Pipeline?

Most AI failures are not model failures. They are pipeline failures wearing a model’s face. When a system starts producing inconsistent results, the instinct is to retrain the model, tune hyperparameters, or blame the architecture. In most cases, the real issue is upstream: the data feeding the model was never stable, consistently structured, or properly monitored. A 2024 survey by Evidently AI found that up to 32% of production scoring pipelines experience distributional shifts within the first six months of deployment. The model did not break. The data environment changed, and the pipeline had no mechanism to detect it.

An AI-ready pipeline is not the same as a standard data pipeline. A basic data pipeline moves data from one place to another. An AI-ready pipeline does something harder: it makes that data trustworthy enough for a model to learn from repeatedly, at scale, without silently drifting.

This guide breaks down every layer of that architecture, what each one does, how they function as a connected system, and what goes wrong when any single layer is skipped or underbuilt.

Raw data is unpredictable by nature. Sources change their schema without notice. Records arrive with missing fields. Formats shift between batches. Values drift in ways that look normal until a model starts misbehaving. A standard ETL process can move that data downstream, but it cannot make the data safe for a model to learn from consistently.

An AI-ready pipeline is the system that imposes control over that unpredictability. It does not just transport data. It shapes it, tags it, validates it, tracks it, and watches it over time. Each layer has a specific job, and those jobs are deliberately ordered: every layer depends on what the previous one delivers.

An AI-ready pipeline does three things well:

- Delivers data in a consistent shape, even when sources update their structure without warning.

- Attaches context and lineage, so models know what they are learning from and teams can trace every record back to its origin.

- Enforces quality and monitors for change, so the dataset stays reliable across every training run, not just the first one.

When these three things hold, model performance becomes predictable. When any one of them breaks, performance erodes in ways that are genuinely difficult to diagnose because the failure rarely surfaces as an obvious error. It shows up as accuracy drift, strange edge cases, or retraining cycles that produce different results for no apparent reason.

Your pipeline is only as reliable as the data coming into it.

Get structured, schema-ready web data delivered to your exact specifications, across any source, at whatever cadence your use case demands.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

The High-Level Architecture of an AI-Ready Pipeline

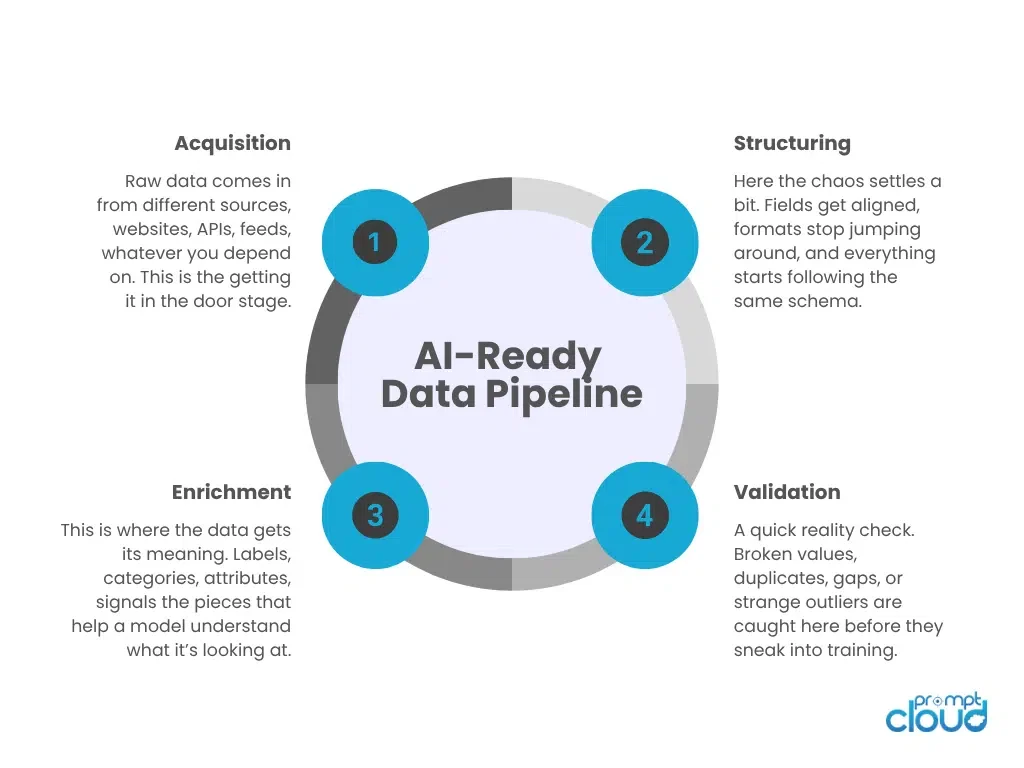

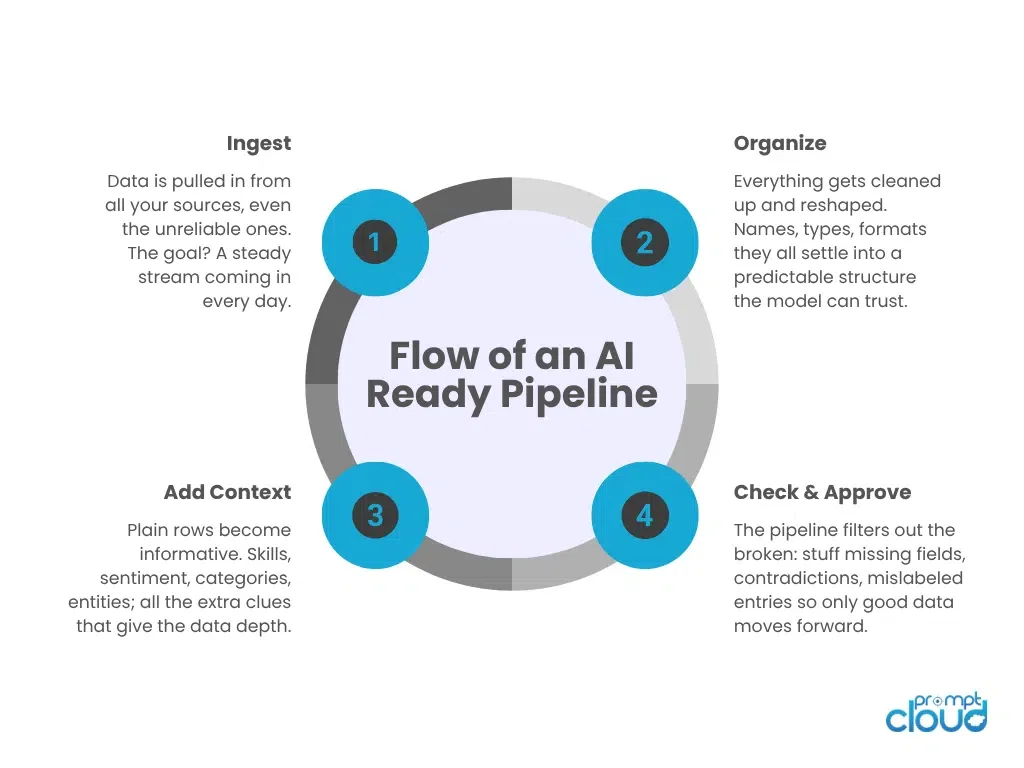

Seven layers sit between raw data and model input. Each one addresses a specific class of problem that, left unresolved, compounds downstream.

| Pipeline Layer | Core Function | What It Passes Forward |

|---|---|---|

| Acquisition | Pull raw data from multiple sources on a controlled schedule | Unfiltered, real-world input with no uniform shape yet |

| Structuring | Map raw fields into a consistent schema with unified names and formats | Structured records that behave like a single, predictable source |

| Enrichment | Add labels, attributes, and metadata for model-ready context | Context-rich data a model can interpret without guessing |

| Validation | Filter duplicates, missing values, contradictions, and outliers | Clean, de-duplicated data with far less noise entering training |

| Lineage | Attach source ID, timestamps, and transformation history to every record | Fully traceable data with a visible origin and processing path |

| Governance | Define access rules, retention schedules, versioning, and change approval | A controlled environment where datasets evolve in a managed way |

| Monitoring | Watch for drift, volume anomalies, structural changes, and failures | Alerts and signals that surface problems before the model is affected |

These layers form a chain. A weak acquisition layer sends inconsistent inputs to structuring. Poor structuring forces the model to waste capacity on formatting irregularities instead of pattern recognition. Missing lineage makes debugging nearly impossible when something goes wrong. And without monitoring, slow drift accumulates until accuracy drops without a clear cause or timeline.

A Deep Dive Into Each Layer of the AI-Ready Pipeline

Acquisition: Getting Data Through the Door Reliably

The acquisition layer has one job: making sure data arrives consistently, even when sources do not cooperate. Web sources update their layout. APIs impose rate limits or change authentication requirements. Feeds go offline without warning. The acquisition layer absorbs this instability so the rest of the pipeline does not have to.

The challenge here is not just connectivity. It is scheduling, fault tolerance, and change detection. A pipeline that pulls data successfully 80% of the time looks healthy on the surface until the missing 20% creates a training gap that biases the model toward whatever data did arrive. Reliable acquisition means building retry logic, monitoring source availability, and alerting when expected volumes fail to appear.

Structuring: Giving Data a Predictable Shape

Once data arrives, it is rarely in a form the model can use. Field names differ across sources. Date formats conflict. Numerical values arrive as strings in some feeds and integers in others. A field labelled “job_title” in one source might appear as “role” or “position” in another. The structuring layer resolves these inconsistencies.

Schema unification is the core task: mapping every source’s raw output into a single, agreed-upon structure. But structuring also includes normalisation, type coercion, deduplication at the record level, and handling schema evolution when a source adds or drops fields. Without a well-designed structuring layer, the model receives a dataset that effectively has a different shape every time it trains, and that alone is enough to make retraining cycles unreliable.

Enrichment: Adding the Context a Model Needs to Learn From

Structured data is consistent but often shallow. The enrichment layer adds depth by attaching metadata, labels, extracted signals, and contextual attributes to each record. A product listing becomes more learnable when it carries a category tag, a quality score, and extracted specifications. A job posting becomes more interpretable when it includes normalised skill labels, seniority classification, and employer industry.

Enrichment is also where entity resolution happens: linking records that refer to the same real-world object across different sources. It is where derived features are added, signals that do not exist in the raw data but can be calculated from it. Without enrichment, the model infers context from raw text alone, which produces shallower pattern recognition and weaker generalisation across segments.

Validation: Stopping Bad Records Before They Reach the Model

Validation is the quality gate. Its job is to catch records that should not enter training: duplicates, records with missing critical fields, values outside acceptable ranges, contradictions between fields, and outliers that suggest a data capture error rather than a real signal.

The reason validation matters is that errors in training data do not usually produce obvious failures. A model trained on 5% noisy records does not crash. It learns from those records and produces subtly wrong patterns that are very hard to trace back to their cause. Validation prevents this by filtering problems early, before they compound into model behaviour that is difficult to explain or correct.

The validation layer also maintains rejection logs. Knowing which records were filtered, and why, is useful both for improving upstream data quality and for auditing model behaviour after deployment.

Lineage: Keeping a Full Record of Where Data Came From

Lineage is what makes debugging possible. Every record in an AI-ready pipeline carries metadata that answers four questions: where did this record come from, when was it ingested, what transformations were applied, and which version of the pipeline produced it.

Without lineage, answering a question like “why is the model performing worse on this segment this month” becomes a forensic exercise. Teams spend days reconstructing what data was used for which training run. With lineage, that question has a direct answer: pull the records, trace them to their source, identify when the source changed, and understand exactly what the model learned from.

Lineage also supports compliance. Regulated industries increasingly require organisations to demonstrate that training data was collected and processed in documented, auditable ways. But even outside regulated contexts, lineage reduces the risk of silent data quality issues going undetected for months.

Governance: Defining the Rules That Keep the Pipeline Consistent

Governance is the layer that prevents the pipeline from fragmenting over time. Without it, different teams start maintaining their own versions of the same dataset. Schema changes happen informally. Retention rules are ignored. Someone removes a field that three downstream processes depend on, and no one finds out until a model training job fails without a useful error message.

A governance layer defines who can access what data, how schema changes must be proposed and approved, how long records are retained, and how dataset versions are tracked. It is not bureaucracy for its own sake. It is the mechanism that keeps a shared pipeline behaving like a shared pipeline rather than a collection of independently managed files that happen to live in the same storage bucket. For growing teams, governance also creates the audit trail that makes it possible to reproduce any historical training run exactly, which is essential when a model needs to be rolled back or a specific version needs to be defended to a stakeholder.

Monitoring: Catching the Problems That Accumulate Quietly

Even a well-designed pipeline drifts over time. Sources change their layout. The distribution of incoming values shifts. Record volumes spike or drop without obvious explanation. None of these issues announce themselves. They accumulate quietly until a training run produces a noticeably worse model, at which point the damage has already been compounding for weeks.

Monitoring is what catches these changes before they compound. A well-instrumented monitoring layer tracks schema changes at the source level, volume anomalies against expected baselines, distributional shifts in key fields, and partial ingestion events caused by upstream failures. When a threshold is breached, the team receives an alert before the affected batch reaches a training run. That window between detection and training is the difference between a scheduled retraining decision and an unplanned debugging session that can take days to resolve. An effective monitoring setup does not just watch for obvious failures. It watches for statistical patterns that indicate something is slowly changing, including value distributions that are shifting, field populations that are declining, and source latency that is creeping upward over successive ingestion cycles.

The specific failure patterns that most commonly escape detection in production environments are documented in this breakdown of why web scrapers fail in production, and most of them apply directly to what a monitoring layer needs to watch for at the acquisition stage.

How These Layers Work Together: The End-to-End Flow

Each layer feeds the next, and understanding where one ends and the other begins matters when something goes wrong, because failure almost always starts in one specific layer and propagates from there.

A record enters at acquisition as raw, unstructured output from a source: a webpage response, an API payload, a file. The structuring layer maps its fields to the agreed schema, resolving naming conflicts and type mismatches. Enrichment adds context that was not present in the raw record: a category, a quality signal, a resolved entity reference.

Before that enriched record moves forward, validation checks it against defined quality rules. If it passes, lineage metadata is attached: source ID, ingestion timestamp, transformation log, pipeline version. The record then enters the governed dataset, subject to access rules, retention schedules, and version tracking defined at the governance layer. Throughout the entire process, monitoring observed volume, schema consistency, and distributional health, alerting when something outside expected parameters appears.

When all seven layers function correctly, the model receives data that looks structurally identical across every training run. That consistency is what produces stable performance. Without it, the model trains on a different dataset each time, and no amount of architectural tuning compensates for that.

What Happens When a Pipeline Layer Fails

Every layer in this architecture has a failure mode. Understanding them helps teams prioritise where to invest and diagnose what went wrong when a model underperforms unexpectedly.

| Layer | What Breaks | Symptom You See | Impact on Models |

|---|---|---|---|

| Acquisition | Data stops arriving from one or more sources | Volume gaps, missing time windows, silent market disappearance | Skewed training patterns, bias toward remaining sources, unstable predictions |

| Structuring | Schema shifts silently across sources | Fields move, formats flip, new columns appear unmapped | Patterns fail, features degrade, accuracy drops with no code change |

| Enrichment | Labels or attributes are missing or inconsistent | Categories applied unevenly, entities left unresolved | Shallow learning, weaker segmentation, misread signals |

| Validation | Quality checks relaxed or misconfigured | Duplicates, outliers, and missing values propagate unchecked | Noisy training produces unstable, hard-to-explain predictions |

| Lineage | Source and history not captured per record | No way to trace a bad record back to its origin | Debugging becomes guesswork; compliance audits are impossible |

| Governance | Access and changes go uncontrolled | Multiple dataset versions, informal schema edits, no single truth | Experiments cannot be compared; trust in the data erodes |

| Monitoring | Drift and structural issues go untracked | Problems only surface after users complain about results | Accuracy erodes quietly; retraining becomes permanently reactive |

The most dangerous failure modes are the silent ones. A structuring issue that flips a column’s data type does not throw an error. A validation misconfiguration that allows 8% more nulls through does not halt a training job. Monitoring exists precisely to surface these slow-moving problems before they become model performance issues that require weeks to diagnose and reverse.

Need This at Enterprise Scale?

While in-house acquisition works for a handful of sources, enterprise AI introduces schema drift, source maintenance, and reliability overhead that compounds quickly.

Why This Pipeline Architecture Matters in Practice

The gap between AI that performs well in a controlled demo and AI that performs well in production is almost always a pipeline gap. A model trained on a stable, well-governed dataset behaves predictably. A model trained on inconsistently structured, inadequately validated data produces results that surprise even the team that built it.

Gartner’s analysis predicted that at least 30% of generative AI projects would be abandoned after proof of concept due to poor data quality and related pipeline issues. The model is rarely the bottleneck at that stage. The infrastructure feeding it is.

The downstream benefits of getting this architecture right are concrete:

- Retraining becomes predictable because the input schema and quality baseline remain stable across runs.

- Debugging becomes faster because lineage makes it possible to trace model behaviour back to specific data characteristics.

- Drift becomes manageable because monitoring catches distribution shifts before they reach a training run.

- Feature engineering improves because enriched, structured records produce cleaner signals with less manual preprocessing.

- Team coordination improves because governance prevents the fragmentation that happens when multiple people work on the same dataset without shared rules.

For teams looking to understand how well-structured pipeline data connects to broader ML preparation standards, Google’s Machine Learning Crash Course on data preparation is a useful reference for what clean, model-ready data is expected to look like at the point of consumption.

How PromptCloud Delivers AI-Ready Web Data

Most teams building AI systems on web data face the same upstream problem: the raw data they need is scattered, unstructured, and inconsistently formatted across hundreds of sources. Building and maintaining the acquisition infrastructure to handle that at scale is a significant engineering investment that competes directly with time spent on model development.

PromptCloud operates at the acquisition and structuring layers of the pipeline. The platform collects structured, schema-consistent web data from any source, at any scale, and delivers it on a defined schedule so teams can build on a reliable input baseline rather than managing the instability of web sources directly.

Every dataset delivered through PromptCloud is:

- Schema-consistent across sources, with field names, formats, and data types unified before delivery.

- Delivered on a predictable schedule, so training pipelines receive data at known intervals without manual intervention.

- Configurable to the exact structure your pipeline needs, rather than requiring downstream teams to reshape raw output before it can be used.

- Monitored for source-level changes, so schema drift at the source is caught before it reaches your pipeline.

For AI teams, this means the most unpredictable layer of the pipeline, acquisition, is handled externally, and what arrives internally is already structured and ready for enrichment, validation, and training. The result is fewer engineering cycles spent on data infrastructure and more cycles available for the model work that actually advances the product.

If your team is evaluating whether to build this infrastructure in-house or work with a managed data provider, the practical breakdown of that decision is covered in detail in the web scraping build vs. buy guide.

Your AI Is Only as Good as Its Pipeline

Models do not perform reliably by accident. They perform reliably because the system feeding them is designed to be stable. Every layer in an AI-ready pipeline: acquisition, structuring, enrichment, validation, lineage, governance, and monitoring, contributes to that stability in a specific and measurable way.

The question is not whether you need this architecture. If you are running models in production, or planning to, you already depend on it. The question is whether it was built intentionally or whether it grew informally in ways that will start showing cracks when you scale.

Building it right from the start is significantly less expensive than diagnosing and rebuilding it after a model failure surfaces a problem that has been accumulating in the pipeline for months.

Your pipeline is only as reliable as the data coming into it.

Get structured, schema-ready web data delivered to your exact specifications, across any source, at whatever cadence your use case demands.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

Frequently Asked Questions

What is an AI-ready pipeline and how is it different from a regular data pipeline?

A regular data pipeline moves data from one system to another. An AI-ready pipeline does more: it structures the data consistently, enriches it with context, validates its quality, tracks its origin, and monitors it over time. The distinction matters because machine learning models need data that is not just present but trustworthy and stable across every training run. A regular pipeline does not guarantee that. An AI-ready pipeline is specifically designed to.

What are the seven layers of an AI-ready pipeline?

The seven layers are acquisition, structuring, enrichment, validation, lineage, governance, and monitoring. Each addresses a specific class of problem: acquisition handles source reliability, structuring enforces schema consistency, enrichment adds model-relevant context, validation filters bad records, lineage tracks record origin, governance controls access and versioning, and monitoring detects drift and anomalies over time.

Why does my AI model perform inconsistently across retraining cycles?

Inconsistency between retraining runs almost always points to a problem in the structuring or acquisition layer. If the schema or volume of incoming data shifts between runs without detection, the model trains on a subtly different dataset each time. Adding lineage tracking and schema monitoring to those two layers is usually enough to diagnose the root cause.

How does schema drift affect model performance?

Schema drift occurs when the structure of incoming data changes at the source, fields move, formats flip, or new columns appear, without the pipeline detecting or accounting for it. When this happens, the features the model was trained to expect no longer arrive in the expected shape. The model does not fail with an error message. It simply learns from malformed input and produces degraded predictions. Structuring and monitoring layers exist specifically to catch drift before it reaches training.

What is data lineage and why does it matter for AI systems?

Data lineage is a record of where each piece of data came from, when it entered the pipeline, what transformations were applied to it, and which version of the pipeline produced it. For AI systems, lineage is the primary tool for debugging. When a model behaves unexpectedly, lineage lets engineers trace the behaviour back to specific data characteristics rather than guessing. It also supports compliance requirements that demand documented, auditable data provenance.

What is the difference between data validation and data monitoring in a pipeline?

Validation is a per-record check that runs as data enters the pipeline. It filters individual records that fail quality criteria: missing fields, out-of-range values, duplicates. Monitoring is a continuous, system-level process that watches the pipeline over time. It detects patterns like volume drops, distributional shifts, or schema changes across batches. Validation catches individual bad records. Monitoring catches systemic trends that validation alone would miss.

How do you prevent data pipeline failures from affecting model accuracy?

Prevention requires operating at multiple layers simultaneously. The acquisition layer needs retry logic and source availability alerts. The structuring layer needs schema versioning and change detection. The validation layer needs clearly defined quality thresholds with rejection logging. The monitoring layer needs alerts on volume anomalies and distributional shifts. No single layer is sufficient on its own. Reliable model accuracy is the output of all seven layers functioning together.

Is lineage and governance only necessary for regulated industries?

No. Lineage matters any time a team needs to debug a model or explain its behaviour to a stakeholder. Governance matters any time more than one person contributes to or consumes the same dataset. Both become essential once a team grows beyond a single data scientist working in isolation. In unregulated environments, the consequences of skipping these layers are slower debugging cycles, fragmented datasets, and gradual erosion of trust in model outputs.

How does data enrichment improve machine learning model performance?

Enrichment adds context that the raw data does not contain: category labels, extracted attributes, entity resolution links, quality scores, and derived signals. Without enrichment, a model infers these signals from raw text or numerical values alone, which produces shallower representations and weaker generalisation. Enriched data gives the model a richer input surface to learn from, which translates to better segmentation, more accurate predictions, and more interpretable features.

What is the first step to making a data pipeline AI-ready?

The most impactful first step is schema standardisation at the structuring layer. Most pipelines that underperform have inconsistent field naming and format handling across sources. Fixing that creates a stable input surface that every downstream layer can depend on. Once schema consistency is established, adding validation rules and basic lineage logging are the next highest-value investments before moving to enrichment and monitoring.