Compliance Challenges in Web Scraping 2026

Compliance issues in web scraping rarely appear at the start. They appear when scale meets scrutiny. Most teams focus on access. Robots.txt rules. Rate limits. IP rotation. But legal compliance in web scraping is no longer about whether you can fetch data. It’s about whether you can justify why you did, how you stored it, and how you use it. In 2026, compliance failures are not technical crashes. They are governance gaps discovered late.

What are the Compliance Challenges in Web Scraping?

- Can we extract the data?

- Can we avoid being blocked?

- Can we scale the crawl?

In 2026, GDPR enforcement is stricter. CCPA interpretations are broader. PDPA-like regimes in Asia have expanded. Consent expectations have evolved.

Compliance challenges no longer surface during extraction. They surface during audits, partnerships, acquisitions, or AI training reviews. That delay is what makes them dangerous. Teams often believe they are compliant because scraping works. Because robots.txt rules are respected. Because no one complained. But compliance is not silence. It is defensibility. This article breaks down the ten most common web scraping compliance challenges teams face in 2026.

Get structured, QA-verified datasets delivered with SLAs and human-in-the-loop validation.

Challenge 1: Robots.txt Rules vs Legal Compliance in Web Scraping

Many teams still equate robots.txt adherence with legal compliance in web scraping. Respecting robots.txt rules is important. It signals technical respect for crawl directives. But robots.txt is not a legal contract. It is a crawl instruction file. Compliance in 2026 goes beyond path restrictions.

Data privacy regulations focus on personal data. Copyright restrictions focus on content ownership. Terms of service violations hinge on contractual interpretation. None of these are solved purely by reading robots.txt. A scraper can fully respect robots.txt and still create regulatory exposure if it captures personal data without lawful basis or stores copyrighted content without usage rights. Robots.txt is a starting layer. It is not a shield. Teams that rely on it as a legal boundary often discover the gap only when questioned.

Next, we move into the most cited but least understood regulation affecting web scraping: GDPR compliance.

Challenge 2: GDPR Compliance Requirements for Web Scraping

Most teams think GDPR compliance in web scraping is about avoiding obvious personal identifiers. Names. Emails. Phone numbers. User IDs. That’s the surface. GDPR defines personal data more broadly. Any information relating to an identifiable person qualifies. Location signals. Device identifiers. Combined datasets that make re-identification possible. Even contextual data tied to profiles.

The compliance challenge is not just what you collect. It’s what your dataset becomes when combined.

Consent management complicates this further. Just because data is publicly visible does not automatically make it free of lawful basis requirements under GDPR. Public accessibility does not eliminate purpose limitation, data minimization, or retention obligations.

Web scraping compliance challenges in 2026 revolve around traceability. Can you demonstrate a lawful basis? Can you justify retention? Can you delete records on request? Can you document the processing purpose? If your scraping pipeline does not track source context and intended use, you will struggle to answer those questions.

Next, we’ll examine a regulation-related issue that often appears late in scaling: CCPA and data usage rights interpretation.

Challenge 3: CCPA and Data Usage Rights in Scraped Data

CCPA and similar data privacy regulations focus heavily on consumer rights.

- Right to know.

- Right to delete.

- Right to opt-out of sale.

Data usage rights become central. The definition of sale under CCPA has evolved. Data sharing for value exchange can trigger obligations even if money does not directly change hands.

This is where compliance issues appear late. A scraping pipeline may operate quietly for years. But when a partnership forms, when data is packaged as a product, or when enterprise customers demand assurances, CCPA implications surface.

The challenge is structural.

Web scraping systems are rarely designed with deletion workflows or opt-out mapping. They are optimized for ingestion, not erasure. If a data subject request arrives and you cannot trace, locate, and remove associated records, you face compliance risk. Legal compliance in web scraping is not just about collection. It is about lifecycle management.

Next, we’ll explore a common grey area: terms of service violations and contractual ambiguity.

Challenge 4: Terms of Service Violations in Web Scraping

Many websites prohibit automated access in their terms. Others allow it under conditions. Some explicitly restrict data reuse. Enforcement varies widely. Web scraping compliance challenges in 2026 are increasingly contractual. The issue is not always whether scraping is technically possible. It is whether scraping violates agreed terms in ways that create legal exposure.

This becomes especially sensitive in high-value sectors like ecommerce or social platforms. For example, competitive intelligence use cases in social media scraping often intersect with platform terms and content licensing frameworks. See applied use cases here: social media scraping for competitive intelligence

Risk is not uniform across sites. Some organizations conduct legal reviews per domain. Others rely on risk thresholds. The compliance challenge lies in inconsistency. Without standardized review processes, teams make ad-hoc decisions. Terms of service violations are rarely discovered at crawl time. They surface during disputes, audits, or escalations.

Next, we’ll examine a growing challenge: copyright restrictions in scraped content reuse.

Challenge 5: Copyright Restrictions in Scraped Content Reuse

Copyright is where many scraping teams get surprised.

Scraping publicly accessible content does not automatically grant the right to reuse it. Displaying excerpts internally is different from redistributing content. Training AI models on scraped text is different from indexing it. Replicating product descriptions or images in commercial systems introduces additional exposure.

The legal question is not “was it public?”

It is “what rights attach to this content?”

In ecommerce scraping, product specifications may be factual and less protected, but product descriptions, marketing copy, and images are often copyrighted. For example, extracting product information from ecommerce sites may seem operational, but reuse and redistribution change the compliance profile. See applied context here: extract product information from ecommerce sites

AI training data complicates this further. The compliance challenge in 2026 is not just collection. It is a reuse classification. Teams need policies defining how scraped content is stored, transformed, indexed, and redistributed. Without this clarity, copyright exposure accumulates quietly.

Compliance Pressure Increasing?

Get structured, QA-verified datasets delivered with SLAs and human-in-the-loop validation.

Next, we’ll move into something operational: consent ambiguity in publicly visible data.

Challenge 6: Consent Management and Purpose Limitation

Public visibility does not equal informed consent. A user profile visible on a website may have been created under platform-specific expectations. That user may not expect third-party scraping for analytics or AI training. Consent management becomes complex when scraping intersects with user-generated content.

Data privacy regulations emphasize purpose limitation. Even if personal data is publicly available, reprocessing it for unrelated purposes may require a lawful basis. The ambiguity lies in interpretation. Platforms often collect consent for their own processing, not necessarily for external scraping use.

This becomes especially sensitive in industries using scraped data to drive decision-making, including manufacturing intelligence or supply chain optimization. See how data accuracy and governance intersect in operational pipelines: Data Accuracy in Web Scraping (2026).

The compliance risk emerges when intent is unclear. Teams need documented purpose statements for scraped datasets. They need internal policies governing secondary use. They need traceability linking data origin to processing purpose. Without documented intent, compliance becomes reactive.

Next, we’ll examine a technical issue with legal implications: PII masking and data minimization failures.

Challenge 7: PII Masking and Data Minimization Controls

Personal data enters scraped datasets more often than teams expect.

- Usernames embedded in URLs.

- Contact details inside PDFs.

- Metadata hidden inside images.

- Customer reviews revealing phone numbers or addresses.

These exposures are rarely intentional. They are incidental. The failure mode is not capture. It is sequencing. Many teams apply masking after storage. Data is collected, stored in raw form, and then redacted downstream. That window — even if short — creates exposure. Logs, backups, and intermediate pipelines may already contain personal data before masking occurs.

In 2026, data minimization must be enforced at ingestion.

Mature pipelines:

- Run automated PII detection during extraction

- Apply redaction or pseudonymization before persistence

- Tag records containing sensitive attributes

- Log masking events as auditable actions

Detection requires pattern scanning (email regex, phone patterns, national identifiers), contextual signals (review content, forum threads), and metadata inspection (EXIF, embedded documents).

Masking is not a cleanup task. It is a structural control.

If scraped data is used downstream before minimization, compliance risk expands exponentially — especially in AI training contexts where removal after model ingestion is non-trivial.

Challenge 8: Audit Trails and Evidence Gaps

Most web scraping teams believe they are compliant because nothing has gone wrong. Until an audit happens. Audits do not ask whether scraping worked. They ask whether decisions were documented. Whether data governance processes were followed. Whether a lawful basis was assessed. Whether retention policies exist. Whether deletion workflows are functional.

This is where compliance challenges surface late. Scraping systems often lack comprehensive audit trails. They track technical logs, but not governance context. They log request timestamps, but not for processing purposes. They record crawl frequency, but not legal review status. When regulators, enterprise customers, or partners ask for documentation, teams scramble.

Compliance in 2026 requires defensibility.

- Can you show when a domain was legally reviewed?

- Can you demonstrate that robots.txt rules were evaluated?

- Can you prove that PII masking was active at ingestion?

- Can you produce logs of consent-related filtering?

If your web scraper API delivers data reliably but cannot generate compliance evidence, you have a structural weakness. See how reliability systems intersect with data handling: web scraper API for reliable data.

In enterprise engagements over the past 18 months, compliance documentation requests now arrive 3–4x earlier in procurement cycles than they did in 2023. Legal review is no longer a post-deal checkpoint — it is an entry requirement.

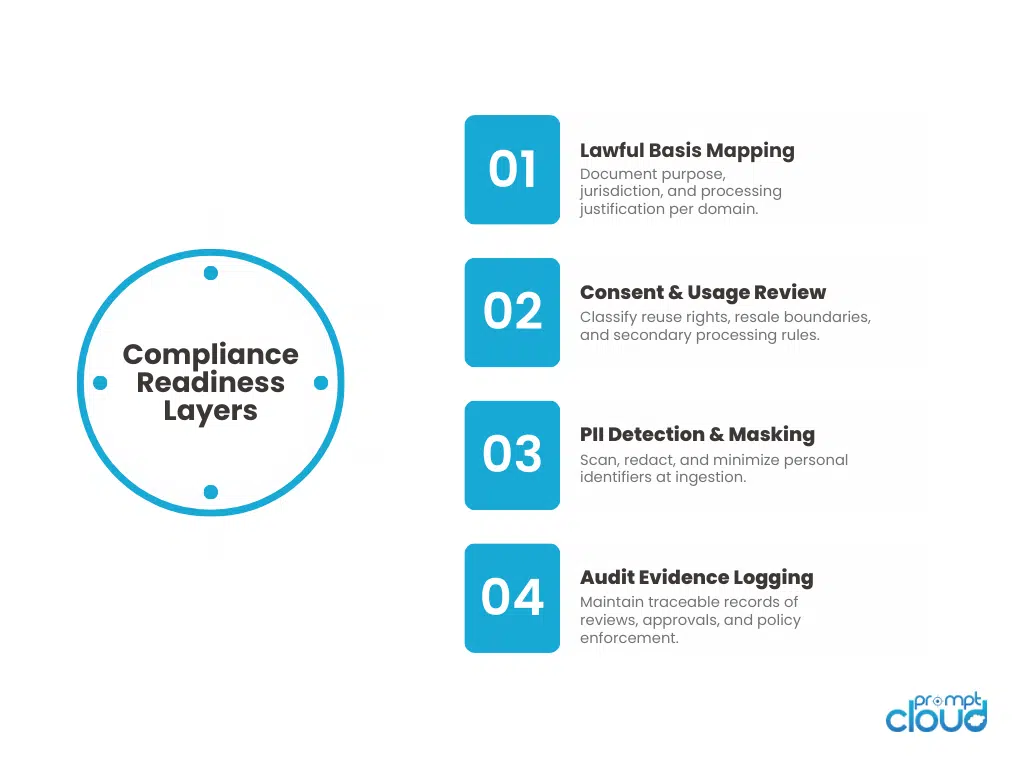

Figure 1: The four structural layers required to make web scraping operations defensible under regulatory review.

Challenge 9: Cross-Border Data Transfer Compliance Risks

Web scraping operates across borders by default. Servers may be in one country. Data subjects in another. Storage infrastructure in a third. AI processing pipelines in a fourth. Regulations differ across all of them.

GDPR imposes restrictions on cross-border transfers. Other jurisdictions have localization requirements. PDPA variants and emerging AI regulations add layers of expectation. The compliance challenge is mapping jurisdiction to a dataset. If scraped content contains personal data from EU residents, storage and processing rules change. If data is transferred outside approved regions, additional safeguards may apply.

Most scraping pipelines do not tag data by origin jurisdiction. They aggregate first and classify later. That sequencing creates risk. Compliance in 2026 demands origin awareness. Geographic tagging. Region-based processing rules. Data residency controls. Without explicit jurisdiction mapping, teams discover exposure only when expanding into new markets or onboarding enterprise clients.

Finally, we’ll address the structural issue that makes all other challenges worse.

Challenge 10: Data Governance Frameworks for Scraping at Scale

Web scraping systems often scale technically faster than they mature operationally. Crawl volume increases. Domains expand. Data products grow. AI training pipelines integrate. But governance frameworks remain static. Policies exist in documents, not in systems.

- No automated compliance gates.

- No structured review workflows.

- No recurring domain risk assessments.

- No centralized data usage registry.

That gap compounds. Legal compliance in web scraping becomes reactive. Issues are addressed after escalation. Reviews happen after contracts are signed. Retention policies are implemented after datasets are already stored. Governance must evolve with scale. Compliance is not a constraint on scraping. It is an architectural layer. If your governance framework does not move at the same speed as your technical growth, compliance challenges will always appear late. And late compliance is expensive compliance.

Compliance Risk Map: Web Scraping in 2026

| # | Compliance Challenge | Where It Usually Breaks | Why It Surfaces Late | What Mature Teams Do Differently |

| 1 | Overreliance on robots.txt | Teams treat crawl directives as legal approval | No issues until formal review | Separate technical access rules from legal assessment |

| 2 | GDPR scope misinterpretation | Personal data captured indirectly | Exposure appears during audit or complaint | Track lawful basis and purpose per dataset |

| 3 | CCPA and usage rights gaps | Data resold or shared downstream | Risk emerges during partnership or productization | Map resale, sharing, and deletion workflows clearly |

| 4 | Terms of service ambiguity | Scraping violates platform contracts | Enforcement triggered selectively | Standardize TOS review and domain risk scoring |

| 5 | Copyright exposure | Reuse of scraped content in products or AI | Content ownership disputes surface later | Classify reuse types and limit redistribution |

| 6 | Consent ambiguity | Public data reused beyond original intent | Questioned during compliance inquiry | Document processing purpose and secondary use policy |

| 7 | PII masking failures | Personal identifiers captured unintentionally | Discovered after internal or external review | Embed automated PII detection and minimization controls |

| 8 | Weak audit trails | No documentation of reviews or safeguards | Audit requests require retroactive reconstruction | Maintain governance logs and review records |

| 9 | Cross-border transfer risk | Data stored or processed across jurisdictions | Triggered during market expansion | Tag data by origin and enforce region-based policies |

| 10 | Governance lag behind scale | Crawl volume expands without policy updates | Compliance gaps widen quietly | Build governance into pipeline architecture, not documents |

When Web Scraping Becomes a Compliance Problem

Most compliance failures in web scraping don’t start with bad intent. They start with a pipeline that was built to collect, not to justify.

Early on, everything looks fine. Data lands. Dashboards move. Teams ship. Nobody asks hard questions because the output is “internal,” “experimental,” or “temporary.” Then the data becomes important. It gets shared with partners. It powers a product feature. It trains a model. It shows up in a board deck. And suddenly the standard changes.

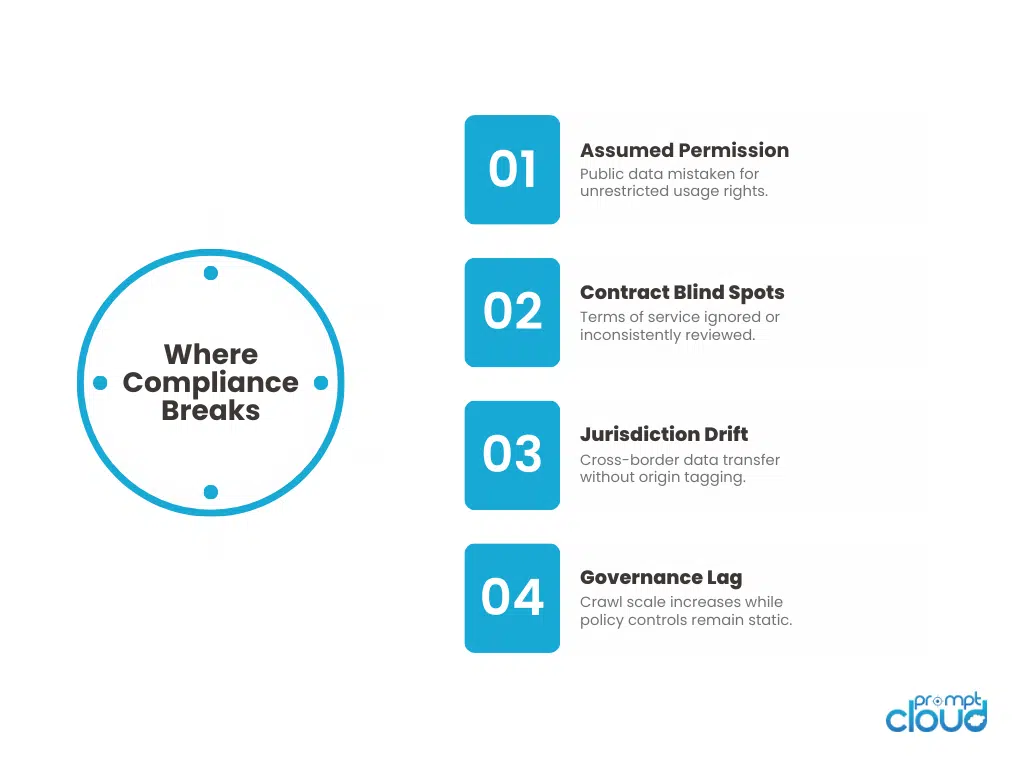

Figure 2: The four recurring pressure points where web scraping compliance challenges emerge in scaled operations.

Now you need to answer questions your system was never designed to answer:

- Why did you collect this data, and what exactly is it used for today?

- What personal data could be present, even indirectly?

- What happens when a record needs to be deleted or excluded?

- Which jurisdiction does this dataset fall under, and where is it processed?

- Can you prove that masking, minimization, and retention controls were active at the time of collection?

Get structured, QA-verified datasets delivered with SLAs and human-in-the-loop validation.

Let’s look at it together.

This is why compliance issues appear late. Not because teams ignored law, but because most pipelines treat compliance as documentation. In 2026, documentation is not enough. Enterprises want evidence. Regulators want controls. Legal teams want repeatable review, not one-off approvals.

The teams that stay out of trouble don’t “scrape less.” They build systems that can stand up to scrutiny. They separate access from permission. They tag data with purpose and jurisdiction. They design deletion and retention as real workflows. They treat PII capture as expected, not rare. They maintain audit trails that explain what happened without a human reconstruction effort.

Compliance is not a blocker to web scraping. It is a quality bar for whether your data can be used safely at scale.

If you can’t defend the dataset, the dataset is not production-ready.

The EDPB provides formal interpretations of GDPR requirements around lawful basis, consent, and data processing—critical for understanding compliance expectations in web scraping.

What separates defensible organizations is structural governance. They design traceability, consent logic, and jurisdiction mapping into their pipelines from day one. This is why compliant web data and governance solutions require built-in audit controls, lifecycle management, and documented lawful basis tracking. Organizations reaching this stage often evaluate whether their current scraping workflows are truly regulation-ready.

FAQs

1. Is web scraping legal if the data is publicly available?

Public availability does not automatically grant unrestricted usage rights. Legal compliance in web scraping depends on purpose, data type, jurisdiction, and terms of service, not just visibility.

2. Does following robots.txt guarantee compliance?

No. Robots.txt provides crawl guidance, not legal permission. Compliance also involves privacy laws, copyright restrictions, and contractual obligations.

3. How does GDPR affect web scraping teams?

GDPR impacts any scraping that involves personal data, directly or indirectly. Teams must demonstrate lawful basis, data minimization, retention control, and deletion capability.

4. What is the biggest compliance risk in scaling scraping operations?

Late discovery. Compliance gaps usually surface during audits, partnerships, or product launches after data has already been widely used.

5. How can teams prepare for compliance audits?

Maintain audit trails, document domain reviews, implement PII masking, tag data by jurisdiction, and embed governance into pipeline workflows instead of relying on policy documents alone.

PromptCloud’s scraping pipelines have passed procurement and compliance audits for enterprise clients in financial services, ecommerce, and AI platforms operating across multiple jurisdictions.

“Compliance questions used to stall our partnerships. With structured lineage and masking controls in place, audits became procedural, not disruptive.”

Director of Data Governance

Global Retail Enterprise

If Two or More of These Apply to Your Pipeline, It’s Time for a Conversation

Get structured, QA-verified datasets delivered with SLAs and human-in-the-loop validation.

If your scraping operation supports enterprise clients, AI systems, or regulated industries, defensibility is not optional.