When it comes to scraping data from the web, different web scraping tools take different approaches. Automated web scraping often uses bots to extract data from multiple pages of a website. Screen grabbing is another technique, where the aim is to capture the specific pixels selected by the user instead of delving into the underlying HTML content. Complex scraping engines are used to continuously monitor competitor websites and keep track of product prices or other frequently updated information. Both academics and companies use these systems to obtain the best data sources for their assessments.

If you want to extract data from a few webpages, the process is pretty simple. You write the code and execute it, supplying a single URL or a list of URLs. The scraper then loops over each URL and fetches the complete HTML content of each page. Based on your code’s configuration, the web scraper extracts specific data points, applies certain data corrections, and generates the results for you.
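The loop described above can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not a production scraper: the function names (`fetch_html`, `extract_title`, `scrape`) are hypothetical, the only "data point" extracted is the page title, and the only "data correction" is trimming whitespace. It also assumes pages are UTF-8 encoded.

```python
from html.parser import HTMLParser
from urllib.request import urlopen


class TitleParser(HTMLParser):
    """Collects the text inside the first <title> element of a page."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self._done = False
        self._parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "title" and not self._done:
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title" and self._in_title:
            self._in_title = False
            self._done = True

    def handle_data(self, data):
        if self._in_title:
            self._parts.append(data)

    @property
    def title(self):
        # Simple data correction: join the text chunks and trim whitespace.
        return "".join(self._parts).strip()


def fetch_html(url):
    """Download a page and decode it as text (assumes UTF-8)."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def extract_title(html):
    """Extract one data point (the page title) from raw HTML."""
    parser = TitleParser()
    parser.feed(html)
    parser.close()
    return parser.title


def scrape(urls, fetch=fetch_html):
    """Loop over each URL, fetch its HTML, and collect the extracted data."""
    results = []
    for url in urls:
        html = fetch(url)
        results.append({"url": url, "title": extract_title(html)})
    return results
```

Calling `scrape(["https://example.com/"])` would fetch that page over the network. The `fetch` parameter is there so that the download step can be swapped out, for instance with a cached or test implementation.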

While all web scrapers perform the same tasks, they can be separated into some loosely defined categories:

a). Self-built or DIY tools: Self-built tools involve writing your own code, whereas DIY web scraping tools come with a graphical user interface and allow you to create a scraping engine in a few clicks. The former may be difficult to build without software developers who have prior experience in web scraping, while the latter usually come with certain constraints.

b). Paid Software: Most DIY web scraping tools also come with a paid version that offers extra features along with support options.

c). Browser Extensions: Browser extensions are most commonly used by those who want to extract data from web pages while manually browsing through the web. In this case, you will have to select the part of a webpage that you need to extract, and the extension should be able to make it available to you in some format.

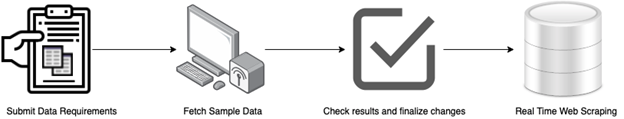

d). Cloud-based DaaS Providers: Cloud-based DaaS (Data as a Service) providers come to the rescue of enterprises that need a complete end-to-end solution. Usually, you are charged based only on the amount of data that needs to be scraped or the number of webpages that need to be parsed. You submit your data requirements and the websites that you need data from. Based on these parameters, the data is scraped and cleaned, then delivered to you in the format (CSV, JSON, XML, etc.) and through the means (S3, Dropbox, REST API, etc.) of your choosing.
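To make the idea of delivery formats concrete, here is a small sketch of how the same cleaned records could be serialized as JSON or CSV with Python's standard library. The record fields (`name`, `price`) and the helper names are assumptions made up for this illustration; a real provider's schema and delivery pipeline will differ.

```python
import csv
import io
import json


def to_json(records):
    """Serialize a list of dict records as a JSON string."""
    return json.dumps(records, indent=2)


def to_csv(records, fieldnames):
    """Serialize a list of dict records as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()
```

Either string can then be written to a file or pushed to whichever storage or API endpoint the consumer prefers.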

If you set aside the small niche group that writes its own scraping code, people mainly rely on two methods to get data: DIY web scraping tools and DaaS, or Data as a Service. The former allows people with little knowledge of coding to scrape a website. DaaS, on the other hand, functions on a subscription model like any other cloud service.

DIY Web Scraping Tool

A DIY web scraping tool allows you to scrape websites without writing a single line of code. You will, however, need to configure certain settings for every website that you want to scrape data from. If the user interface of any of these websites changes, you will need to update the configuration of your tool accordingly.

Various commercial tools are available that you can purchase and use. Platforms like extract.io and Mozenda are a few examples of such web scraping tools. You can turn to these options if the data you want is easy to scrape and small in volume. Such tools are better suited for ad hoc jobs. If you have a website or a group of websites from which you want data collected, a DIY web scraper will do the job for you in a few hours. However, complex functions such as gathering data from the open web and cleaning or normalizing it based on certain parameters cannot be performed simultaneously.

While these tools have their pros, the cons outweigh them. You should count out DIY web scrapers when:

a). The website is hard to scrape: it may be behind a CAPTCHA or a login page, or have complex JavaScript code running in the background.

b). You do not have a business team with extra time to devote to a new tool that would need regular tweaks and fixes.

c). You require more than just scraping of raw data: you need some data wrangling before it flows into your business workflow.

DaaS or Data As A Service

In this subscription model, your cloud vendor delivers data to you in a plug-and-play format. This ensures minimum disruption to your core business systems from the data stream. The service provider is responsible for maintaining the crawler so that changes in the websites being crawled are handled and errored-out pages are debugged. The service provider also handles the entire cloud infrastructure required to run such a system continuously. For enterprises dealing with large quantities of data, DaaS solutions take a lot of overhead out of the equation, thereby helping companies transform into data-driven businesses.

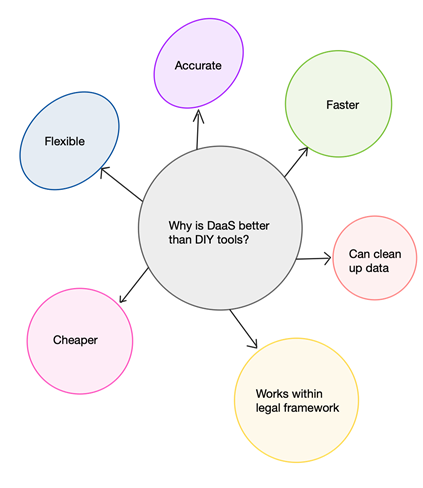

Advantages of DaaS over DIY tools

1. Pocket-Friendly

DIY web scrapers need a team for regular maintenance and updates. Frequent documentation is also needed so that errors that creep in can be caught early. Having your business team dedicate time and resources to learning and using a tool may eat into their productivity on core work. You might also need to build a larger business team, which would in turn prove more expensive than using a DaaS provider.

DaaS providers do not require you to have an in-house team and the data integration is a one-time setup that can be completed with relative ease.

2. Flexibility

Enterprises usually require custom-made scraping solutions. DIY scrapers cannot be customized easily, and you may end up chaining multiple tools together to get your actual work done. This may impact the quality of your data. Enterprise-grade DaaS solutions can accommodate custom changes to fetch the data in a specific format. This may also take the form of updates to the data scraped from a website.

3. Accurate Results

While DIY web scrapers can bring the required data, there might be inaccuracies. You never know which website will cause your DIY web scraper to pick up the wrong data and bring inaccurate results. Certain webpages can also cause your DIY web scraping tool to throw errors which will then need to be debugged manually. These errors can alter your data analysis insights and create problems in your data-driven decisions. However, professional web scraping services will ensure that you receive accurate datasets in a ready-to-consume form.

4. Faster Scraping

Large-scale web scraping tasks often cause DIY web scrapers to perform at slower speeds than what may be required for a continuous feed. DaaS providers use the right infrastructure and resources, which allow them to extract data faster and more efficiently. This usually involves scraping data from multiple sources concurrently.
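As a rough illustration of what scraping multiple sources concurrently can look like, the sketch below fetches a list of URLs in parallel using a thread pool from Python's standard library. It is a simplified example under stated assumptions: `fetch_html` and `fetch_all` are hypothetical names, pages are assumed to be UTF-8, and a real large-scale system would also need rate limiting, retries, and distributed infrastructure.

```python
from concurrent.futures import ThreadPoolExecutor
from urllib.request import urlopen


def fetch_html(url):
    """Download a page and decode it as text (assumes UTF-8)."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def fetch_all(urls, fetch=fetch_html, max_workers=8):
    """Fetch many URLs concurrently.

    Returns a dict mapping each URL to its HTML, or to the exception
    that was raised while fetching it, so one bad page does not stop the run.
    """
    urls = list(urls)

    def safe_fetch(url):
        try:
            return fetch(url)
        except Exception as exc:  # keep the error for later debugging
            return exc

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so results line up with urls.
        return dict(zip(urls, pool.map(safe_fetch, urls)))
```

Threads suit this workload because most of the time is spent waiting on the network rather than on computation. The `max_workers` value here is an arbitrary default and should be tuned to what the target sites can reasonably handle.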

5. Data Clean-up

Web scrapers usually collect the data in a dump file. If you use a DIY scraping tool, you will have to clean up the data to get it into a usable format, which means you will need additional tools for the clean-up. With a DaaS provider, however, you do not have to worry about this, since you get the data in its “ready-to-use” form.
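To give a sense of what this clean-up involves, here is a minimal sketch that normalizes a raw dump of product records: it collapses stray whitespace, converts price strings to numbers, and drops empty and duplicate entries. The field names (`name`, `price`) and the functions are hypothetical, and the price parsing assumes a comma is a thousands separator, which will not hold for every locale.

```python
import re


def clean_record(raw):
    """Normalize one raw scraped record into a consistent shape."""
    # Collapse runs of whitespace and newlines in the name.
    name = " ".join(raw.get("name", "").split())
    # Assumption: commas are thousands separators (e.g. "$1,299.50").
    price_text = raw.get("price", "").replace(",", "")
    match = re.search(r"\d+(?:\.\d+)?", price_text)
    price = float(match.group()) if match else None
    return {"name": name, "price": price}


def clean_dump(raw_records):
    """Clean every record, skipping nameless entries and duplicates."""
    seen = set()
    cleaned = []
    for raw in raw_records:
        record = clean_record(raw)
        key = (record["name"].lower(), record["price"])
        if record["name"] and key not in seen:
            seen.add(key)
            cleaned.append(record)
    return cleaned
```

Real datasets usually need many more rules than this (currencies, units, encodings, missing fields), which is where the extra tooling and effort mentioned above come in.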

6. Site Policies

Websites from which you might want to extract data can have policies that bar data scraping. A responsible DaaS provider will extract data in line with the rules and policies set by the website. This helps ensure that you do not run into legal hassles when using data scraped from the web.
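One machine-readable place where sites commonly publish crawling rules is the `robots.txt` file. The sketch below shows how a scraper might check a URL against those rules with Python's standard `urllib.robotparser` module before fetching it. The helper name `build_robots_checker` and the user agent `example-bot` are made up for this illustration. Note that `robots.txt` is only one part of a site's policies; terms of service and applicable law are not covered by a check like this.

```python
from urllib.robotparser import RobotFileParser


def build_robots_checker(robots_txt, user_agent="example-bot"):
    """Return a function that says whether a URL may be fetched.

    robots_txt is the text content of a site's robots.txt file.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())

    def allowed(url):
        return parser.can_fetch(user_agent, url)

    return allowed


# Example rules: everything under /private/ is off limits to all bots.
rules = "User-agent: *\nDisallow: /private/\n"
allowed = build_robots_checker(rules)
```

With these example rules, `allowed("https://example.com/private/report")` is `False` while `allowed("https://example.com/products")` is `True`, so the scraper can skip disallowed pages before making any request.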

What do we offer at PromptCloud?

Our team at PromptCloud offers a fully managed, enterprise-grade web scraping service. This end-to-end managed data mining service can help you use data from millions of webpages to boost your business. Instead of every company having to invest time and resources in personnel, training, tools, and infrastructure, a DaaS provider like ours takes care of every web scraping requirement that an enterprise can have.

Having completed thousands of web scraping projects for companies worldwide, we take pride in our completely customizable web scraping solution, which can be tweaked based on the problem statement at hand. Unlike other DaaS providers, we look beyond the data you need. We look at the question you are trying to answer with the data and the problem that the data should solve, so that we are also able to provide you with some “data-advice.”