DIY web scraping challenges appear small at first. They compound fast.

Building your own scraper feels efficient in the beginning. You control the logic. You own the code. You avoid vendor costs. For early experiments or one-off data pulls, a DIY web scraper works fine. The problem starts when the data stops being optional. The moment scraped data feeds pricing models, AI systems, market intelligence dashboards, or revenue-impacting workflows, the stakes change. What was once a side project becomes infrastructure.

This is where DIY web scraper limitations start to surface. The maintenance burden increases. Proxy rotation issues multiply. Anti-bot mitigation becomes an arms race. Monitoring gaps appear. Engineering bandwidth gets consumed by scraper maintenance instead of product development. DIY web scraping challenges are not about whether scraping works. They are about whether it works reliably, continuously, and at scale.

The build vs buy scraping decision is not ideological. It is operational.

Let’s break down the ten structural risks that appear when DIY scrapers are pushed into business-critical environments.

DIY web scraping challenges remain manageable at small scale. They become structural when scraped data feeds business-critical systems. Maintenance burden expands, anti-bot mitigation becomes continuous, monitoring gaps create silent failures, and engineering bandwidth shifts from innovation to upkeep. This article outlines the ten inflection points where build vs buy scraping becomes a strategic decision rather than a technical preference.

In enterprise environments we support, teams transitioning from DIY scraping to managed services typically reclaim 10–20 engineering hours per week previously spent on maintenance, proxy management, and incident response.

If your scraping layer is already operating like production infrastructure, the conversation about ownership is worth having.

Challenge 1: Maintenance Burden Expands Faster Than Expected

Why DIY scrapers start simple

Most DIY web scraping challenges do not appear on day one. At that stage, maintenance feels manageable. The problem begins when the scraper becomes business-critical. New sources are added. Fields expand. HTML structure changes. Edge cases accumulate. Suddenly the codebase is no longer a script. It is a fragile system with implicit dependencies.

DIY web scraper limitations start to show when change becomes continuous rather than occasional.

What increases the maintenance load

As scraping at scale expands, maintenance grows across multiple dimensions:

- Website structure changes requiring selector updates

- Anti-bot mitigation measures blocking requests

- Proxy rotation issues creating inconsistent responses

- Parsing adjustments for new layouts

- Pipeline updates for schema changes

- Monitoring gaps requiring manual inspection

Each of these adds engineering effort. None of them directly create product value. The maintenance burden compounds because scrapers are tightly coupled to external systems you do not control.

Why engineering bandwidth becomes constrained

When scraped data feeds AI pipelines, pricing engines, or market analytics, downtime becomes unacceptable. Engineers must prioritize keeping the scraper alive.

Over time, DIY web scraping challenges shift engineering bandwidth from innovation to upkeep. Teams spend more time patching and debugging than building features. This is the hidden cost in the build vs buy scraping conversation. Maintenance is not a one-time effort. It is a permanent operational commitment.

Challenge 2: Anti-Bot Mitigation Becomes an Ongoing Arms Race

Why blocks increase as usage grows

A DIY scraper often works quietly in the beginning. Low request volume, simple headers, minimal concurrency. It goes unnoticed.

The moment you increase frequency or expand coverage, anti-bot systems respond. Rate limits tighten. IP addresses get flagged. JavaScript challenges appear.

Where DIY web scraper limitations surface

As anti-bot systems evolve, teams encounter:

- IP bans requiring constant proxy rotation

- CAPTCHA interruptions

- Dynamic token validation

- Bot fingerprinting tied to browser behavior

Proxy rotation issues alone can consume engineering time. Sourcing stable proxies, managing pools, detecting burned IPs, and replacing them becomes a separate operational layer.

Why this drains engineering bandwidth

Anti-bot mitigation is not a core product competency for most companies. Yet DIY scrapers force teams to build internal solutions for proxy management, browser simulation, and evasion logic.

This introduces:

- Hidden scraping costs

- Infrastructure complexity

- Increased monitoring gaps

Challenge 3: Monitoring Gaps Lead to Silent Data Failures

Why DIY systems underinvest in observability

When teams build their own scrapers, monitoring is usually minimal. The script runs. Logs are stored. A success message confirms completion.

For experimental use cases, that may be enough. A scraper can complete successfully while returning incomplete, misaligned, or stale data. This is where DIY web scraper limitations become risky.

What monitoring gaps look like in production

Without structured observability, teams miss:

- Gradual record count drops

- Field-level null rate increases

- Distribution shifts in key attributes

- Freshness degradation

- Partial extraction failures masked as success

Because the scraper did not crash, no alert fires.

Data reliability risks increase silently. Downstream analytics, AI models, or pricing engines continue operating on degraded input.

What Strong Observability Requires

Addressing monitoring gaps in DIY web scraping systems typically requires:

- Automated validation layers tied to expected field distributions

- Data consistency checks across batches

- Freshness thresholds aligned to business SLAs

- Change detection systems tied to historical baselines

These layers are rarely designed into early DIY implementations but become necessary once scraping feeds business-critical workflows.

Monitoring gaps expose failures that already exist. Challenge 4 focuses on building reliability systems so fewer failures occur in the first place.

Challenge 4: Data Reliability Risks Increase as Scraping Becomes Critical

Where reliability breaks down in DIY systems

DIY web scraper limitations often surface in:

- Inconsistent extraction across sources

- Partial data updates when jobs fail mid-run

- Untracked schema shifts affecting downstream logic

- Gaps in scraping at scale due to proxy rotation issues

- Weak data consistency checks across batches

Why reliability requires system-level discipline

Reliable scraping at scale requires:

- Structured pipeline updates

- Automated validation gates

- Historical consistency monitoring

- Regression testing before deployment

- Defined SLAs for data freshness and completeness

Challenge 5: Scraping at Scale Exposes Infrastructure Limits

Why scale changes everything

DIY web scraping challenges multiply when request volume increases. What was once a simple scheduled script now requires distributed job orchestration, intelligent retry logic, proxy rotation, and rate management.

Scraping at scale is an infrastructure problem, not just a coding task.

Where DIY web scraper limitations surface at scale

As volume increases, teams face:

- Increased proxy rotation issues and IP bans

- Higher failure rates due to concurrency spikes

- Memory and CPU bottlenecks in parsing

- Queue backlogs during peak runs

- Uneven crawl coverage across sources

Without architectural planning, scaling introduces instability.

Many DIY systems rely on single-instance scripts or loosely managed containers. They are not built for coordinated distributed crawling. As scale grows, maintenance burden increases disproportionately.

Why scale amplifies hidden scraping costs

Scaling a scraper means scaling:

- Infrastructure management

- Anti-bot mitigation layers

- Monitoring systems

- Data validation logic

- Error handling and retries

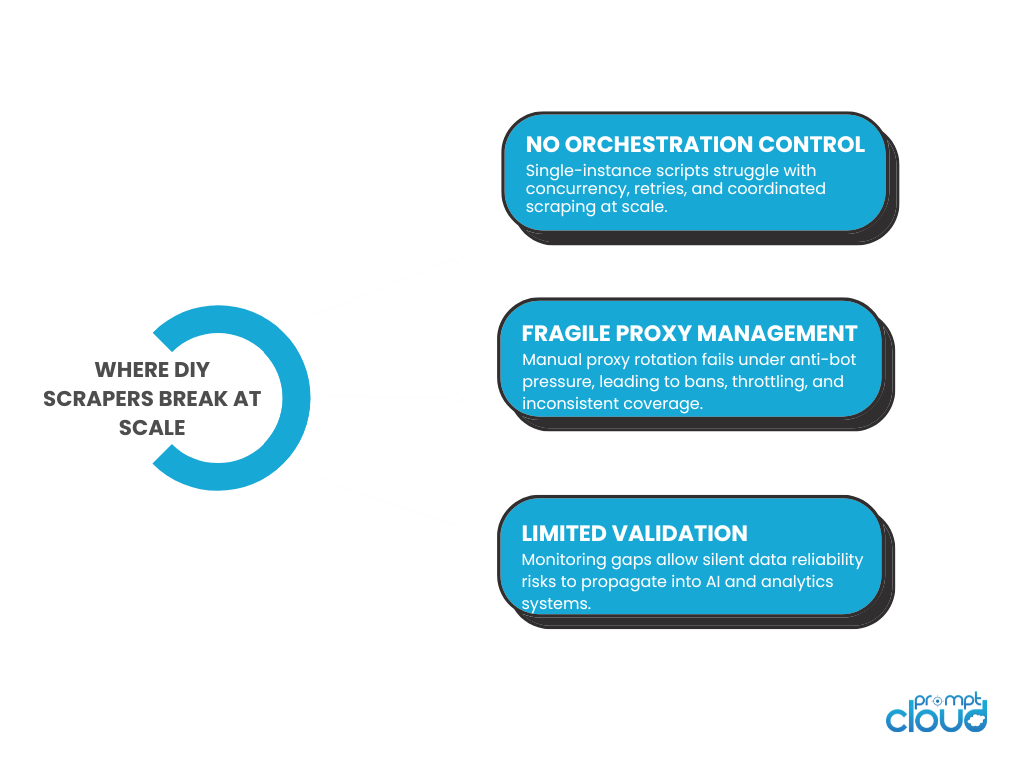

Figure 1: Structural gaps that expose DIY web scraper limitations in business-critical environments.

Experiencing These Challenges?

If your scraping layer is already operating like production infrastructure, the conversation about ownership is worth having.

Teams across ecommerce, AI, and analytics organizations have migrated from internal scrapers to managed infrastructure once maintenance and reliability began affecting product velocity.

“Scraper maintenance was absorbing nearly an entire sprint every quarter. Offloading infrastructure stabilized our data and freed engineering bandwidth for roadmap delivery.”

Director of Engineering

Global Marketplace

Challenge 6: Compliance Risks Are Often Overlooked

Where compliance risk appears

As scraping operations grow, teams must consider:

- Terms-of-service constraints

- Data usage boundaries

- Jurisdictional data regulations

- Access controls for stored datasets

- Audit trails for extraction logic

DIY web scraper limitations surface because compliance engineering is rarely built into early architectures.

Many teams do not maintain structured logs tying extraction runs to specific logic versions. They do not track provenance. They lack documentation explaining how data was collected or transformed.

These gaps become liabilities when scrutiny increases.

Why compliance requires structural controls

Managing compliance risks requires:

- Traceable data lineage

- Version-controlled pipeline updates

- Role-based access controls

- Retention policies

- Documented change management workflows

Challenge 7: Engineering Bandwidth Gets Redirected from Core Product Work

Why scraping becomes a permanent responsibility

A DIY scraper rarely stays small. Once it proves useful, it expands. More sources are added. More fields are requested. More teams depend on the output.

At that point, scraping stops being a side task. It becomes infrastructure.

DIY web scraping challenges become visible when engineering bandwidth shifts toward scraper maintenance instead of product development. Instead of building features, teams spend cycles fixing broken selectors, tuning proxy rotation, handling anti-bot mitigation, and investigating monitoring gaps.

This shift is gradual. It often goes unnoticed until roadmap velocity slows.

Where the time actually goes

Engineering effort is consumed by:

- Debugging HTML layout changes

- Updating parsing logic for new edge cases

- Managing scraping at scale infrastructure

- Handling proxy rotation issues

- Adding automated validation after incidents

- Fixing data reliability risks exposed downstream

None of these activities directly generate product differentiation. The build vs buy scraping decision is often framed as a cost comparison. It is more accurately a focus comparison.

Why opportunity cost matters more than infrastructure cost

Hidden scraping costs are not limited to servers and proxies. The larger cost is lost opportunity.

When engineers are tied up maintaining DIY web scrapers, they are not improving AI models, optimizing user experience, or shipping new capabilities. DIY web scraper limitations become strategic when scraping evolves from experimental support to operational dependency. The question shifts from “Can we build it?” to “Should our core team be responsible for maintaining it long-term?”

Challenge 8: Schema Instability Disrupts Downstream Systems

Why structure becomes harder to control over time

As DIY scrapers evolve, schema changes accumulate. New fields are added to meet stakeholder requests. Old fields are renamed. Types are adjusted to match updated website structure changes.

Without disciplined schema control, structural drift becomes normal. DIY web scraping challenges increase because schema versioning is rarely formalized. Most teams modify the extraction logic directly and redeploy. They do not track structured schema evolution across versions.

That works until multiple downstream systems depend on stable structure.

Where DIY web scraper limitations surface

Schema instability creates:

- ETL breakages during ingestion

- BI dashboards displaying incorrect mappings

- Feature pipelines failing in AI workflows

- Historical datasets containing inconsistent field formats

- Manual data consistency checks after every update

When scraping at scale, even small structural shifts ripple across data consumers. Without backward compatibility planning, pipeline updates introduce operational risk every time a field is modified.

Why schema discipline matters in business-critical pipelines

Managing DIY web scraping challenges at this stage requires:

- Explicit schema versioning tied to releases

- Controlled migration workflows

- Backward compatibility layers

- Automated validation blocking breaking changes

- Clear documentation of structural updates

Challenge 9: Hidden Scraping Costs Accumulate Over Time

Why DIY looks cheaper at the start

The build vs buy scraping conversation usually begins with cost comparison. DIY appears inexpensive because there is no vendor invoice. The code is written internally. Infrastructure runs on existing cloud accounts.

On paper, the cost looks limited to engineering time and compute usage.

DIY web scraping challenges emerge when the hidden costs are tracked over months instead of weeks.

Where the real costs appear

Hidden scraping costs typically include:

- Ongoing scraper maintenance effort

- Proxy rotation issues requiring paid proxy pools

- Infrastructure scaling during peak loads

- Engineering time spent on anti-bot mitigation

- Monitoring and alerting system development

- Compliance and governance tooling

- Incident response after data reliability failures

These costs rarely appear in initial planning documents. They surface gradually as scraping at scale expands.

Why cost volatility increases with scale

As the number of sources grows, complexity does not scale linearly. Each additional source introduces new anti-bot behaviors, new website structure changes, and new edge cases.

Engineering bandwidth must scale alongside coverage. Monitoring gaps become more expensive to close. Data reliability risks require stronger validation.

DIY web scraper limitations become clear when cost unpredictability increases. What seemed economical at small scale becomes structurally expensive at production scale.

The build vs buy scraping decision often shifts when organizations measure long-term operational cost rather than initial development effort.

Challenge 10: DIY Scrapers Struggle to Meet Enterprise Reliability Standards

Why working is not the same as production-ready

A DIY scraper can work consistently in controlled environments. It can return data. It can run on schedule. That does not mean it meets enterprise reliability expectations.

Business-critical data pipelines require defined SLAs, measurable uptime, structured monitoring, and predictable performance.

DIY web scraping challenges become structural when reliability expectations rise beyond informal standards.

What enterprise-grade reliability requires

Production-grade scraping systems typically include:

- Documented SLAs for freshness and completeness

- Redundant infrastructure for fault tolerance

- Automated validation before data release

- Structured monitoring for data reliability risks

- Controlled pipeline updates with rollback capability

- Governance controls and audit trails

DIY web scraper limitations appear because these layers are rarely designed into early implementations.

Retrofitting enterprise reliability onto a loosely built scraper is significantly harder than designing for it from the start.

Why reliability gaps become strategic risks

When scraped data feeds pricing engines, AI systems, chatbots, or customer-facing applications, instability directly affects revenue and reputation.

A temporary failure may be manageable. Repeated inconsistencies erode trust in the data itself. At that point, the issue is no longer technical. It is organizational. DIY web scraping challenges ultimately converge on one question: is the scraping system engineered with the same rigor as the systems that depend on it?

If not, reliability will eventually become the bottleneck.

Summary of the Challenges

| Challenge | DIY web scraper limitation | What it looks like in reality | Business-critical impact | What “buy/managed” typically removes |

| 1. Maintenance burden | Scraper upkeep scales with source volatility | Constant selector fixes after website structure changes | Engineering time diverted to scraper maintenance | Dedicated maintenance + change handling |

| 2. Anti-bot mitigation | Evasion becomes continuous work | CAPTCHAs, bans, fingerprinting, throttling | Coverage drops, unstable refresh cycles | Built anti-bot mitigation + hardened delivery |

| 3. Monitoring gaps | Job-level “success” hides data failure | Silent null creep, missing fields, drift | Decisions made on degraded data | Data-level validation + observability |

| 4. Data reliability risks | Output varies across runs and sources | Partial updates, mis-mapped fields, stale values | Model and analytics integrity erodes | Reliability engineering + SLAs |

| 5. Scraping at scale | Architecture not built for concurrency | Queue backlogs, uneven coverage, retries explode | Latency spikes, missed updates | Distributed crawling + scale ops |

| 6. Compliance risks | Governance is bolted on late | Weak provenance, unclear ToS controls | Legal and reputational exposure | Compliance processes + auditability |

| 7. Engineering bandwidth | Core team becomes on-call for data | Firefighting incidents, slow product roadmap | Opportunity cost dominates | Offloads ops burden |

| 8. Schema instability | No schema versioning discipline | Types shift, fields rename, dashboards break | Downstream breakage + inconsistent history | Schema control + backward compatibility |

| 9. Hidden scraping costs | Costs are fragmented and volatile | Proxy pools, infra spikes, incident hours | Budget unpredictability | Predictable cost + managed ops |

| 10. Enterprise standards | Hard to retrofit production rigor | No SLAs, no rollback, weak QA gates | Reliability becomes bottleneck | SLA-backed delivery + mature controls |

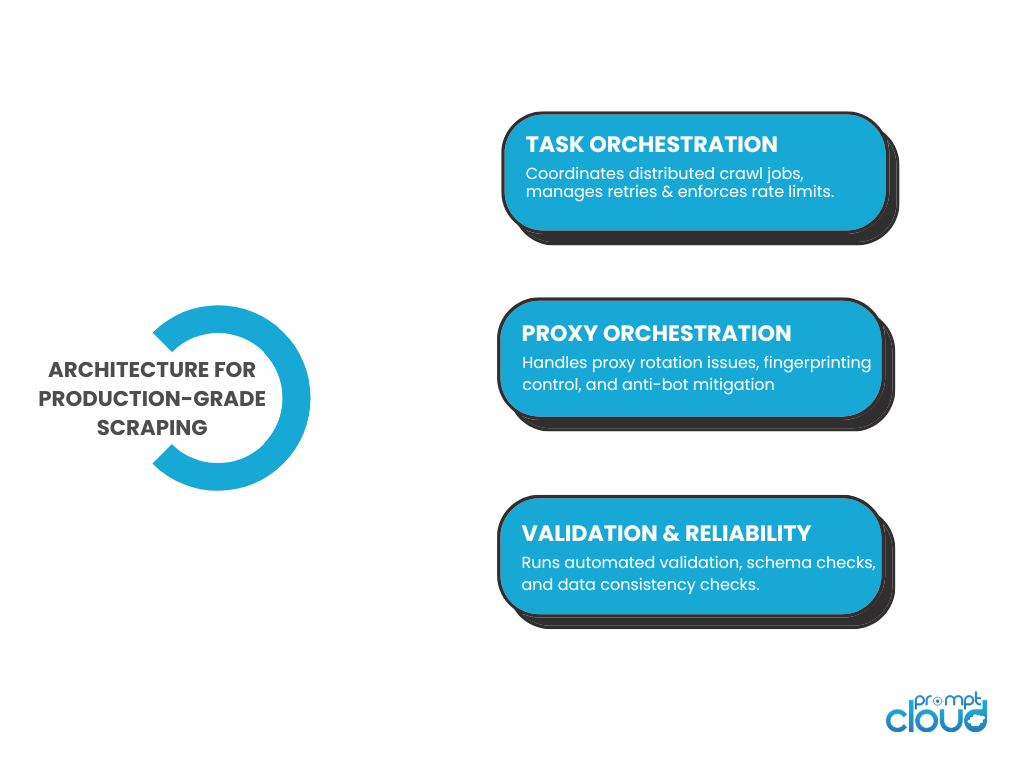

Figure 2: Core architectural components required to overcome DIY web scraping challenges in production environments.

When DIY stops being scrappy and starts being risky

DIY web scraping challenges are not about capability. Most engineering teams can build a scraper. The issue is what happens when that scraper becomes infrastructure.

At a small scale, DIY feels efficient. You control the stack. You ship quickly. You avoid vendor cost. For non-critical workloads, that tradeoff can make sense.

The risk appears when scraped data becomes embedded in pricing systems, AI models, forecasting pipelines, or customer-facing products. At that point, reliability expectations rise. Change frequency increases. Monitoring requirements expand. Compliance obligations surface. Engineering bandwidth becomes finite.

This is where DIY web scraper limitations compound.

The build vs buy scraping decision is rarely about whether scraping works. It is about whether your organization wants to operate a production-grade data acquisition layer as a permanent responsibility. That includes anti-bot mitigation, scraping at scale, schema discipline, regression testing, observability, and governance. If your team is already maintaining retry logic, rotating proxies, debugging silent drift, and writing internal validation frameworks, you have effectively built a data operations function.

The question becomes strategic. Is maintaining that function aligned with your core differentiation? Or is it absorbing engineering capacity that should be focused elsewhere? DIY web scraping challenges are manageable at low scale. They become structural at high stakes. The right answer is not universal. It depends on how critical the data is, how volatile the sources are, and how much operational rigor your organization is prepared to sustain.

What separates successful teams is operational focus. They protect engineering bandwidth and treat data reliability as infrastructure, not an experiment. This is why managed web scraping services require anti-bot resilience, structured validation, and SLA-backed delivery. Organizations reaching this realization often evaluate whether continuing DIY ownership aligns with their long-term product priorities.

But one thing is consistent: when data drives revenue or product experience, reliability must be engineered deliberately, not assumed.

If your scraping layer is already operating like production infrastructure, the conversation about ownership is worth having.

If you want to go deeper

- Synthetic data vs real web data for AI training – Why production-grade data reliability matters more than convenience when training AI systems.

- Designing AI-ready data schemas for web data – Explains how schema control and structured modeling reduce downstream breakage.

- Improving AI model accuracy with ecommerce data – Shows how data reliability risks directly affect AI performance.

- Web data extraction for chat bots – Why conversational systems require stable, validated data pipelines.

The Google Site Reliability Engineering handbook emphasizes that reliability must be designed and measured, not assumed. The same principle applies to scraping systems that support business-critical workflows.

FAQs

1. What are the biggest DIY web scraping challenges for business-critical data?

The main DIY web scraping challenges include maintenance burden, anti-bot mitigation, monitoring gaps, schema instability, hidden scraping costs, and limited engineering bandwidth. These risks increase as scraping becomes embedded in production systems.

2. When do DIY web scraper limitations become serious?

DIY web scraper limitations become serious when scraped data feeds revenue-impacting workflows such as pricing engines, AI models, forecasting tools, or customer-facing applications. At that point, reliability and compliance requirements increase significantly.

3. How do hidden scraping costs affect the build vs buy scraping decision?

Hidden scraping costs include ongoing maintenance, proxy infrastructure, monitoring development, incident response, and compliance overhead. Over time, these operational costs often exceed initial development savings.

4. Why is scraping at scale harder than expected?

Scraping at scale introduces concurrency challenges, proxy rotation issues, anti-bot defenses, and distributed infrastructure complexity. Without architectural planning, instability increases non-linearly with volume.

5. How can organizations reduce data reliability risks in scraping?

Organizations reduce data reliability risks by implementing automated validation, schema versioning, regression testing, monitoring gaps closure, and structured governance controls. Reliability must be engineered into the pipeline rather than patched after incidents.