The Twitter stream of any person contains rich social data that can unveil a lot about that person. Since Twitter data is public and the API is open for anyone to use, data mining techniques can be easily applied to find out everything from the timing patterns and the topics the person focuses on to the text patterns used to express views and thoughts.

In this study, we’ll use R to analyze the tweets posted by one of the world’s most famous celebrities, Emma Watson. First we’ll go through the exploratory analysis and then move on to text analytics.

Extracting Emma Watson’s Twitter Data

The Twitter API allows us to download up to 3,200 of a user’s most recent tweets; all we need to do is create a Twitter app to get the API key and access token. Follow the steps below to create the app:

- Open up Twitter

- Click on ‘Create New App’

- Enter the details and click on ‘Create your Twitter application’

- Click on the ‘Keys and Access Tokens’ tab and copy the API key and secret

- Scroll down and click on “Create my access token”

We’ll use the R library rtweet to download the tweets and create a data frame. Use the code given below to proceed:

[code language="r"]
install.packages("httr")
install.packages("rtweet")
library("httr")
library("rtweet")

# the name of the Twitter app created by you
appname <- "tweet-analytics"

# API key (replace the following sample with your key)
key <- "8YnCioFqKFaebTwjoQfcVLPS"

# API secret (replace the following with your secret)
secret <- "uSzkAOXnNpSDsaDAaDDDSddsA6Cukfds8a3tRtSG"

# create token named "twitter_token"
twitter_token <- create_token(
  app = appname,
  consumer_key = key,
  consumer_secret = secret)

# Downloading the tweets posted by Emma Watson
ew_tweets <- get_timeline("EmmaWatson", n = 3200)
[/code]
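Hard-coding the key and secret in a script makes them easy to leak. As a minimal sketch, the credentials could instead be read from environment variables. The helper `read_credential` and the variable names `TWITTER_API_KEY` and `TWITTER_API_SECRET` are assumptions made for this illustration, not something rtweet requires.

```r
# Hypothetical helper: read a credential from an environment variable
# and fail loudly if it has not been set.
read_credential <- function(name) {
  value <- Sys.getenv(name, unset = "")
  if (!nzchar(value)) {
    stop("Environment variable ", name, " is not set", call. = FALSE)
  }
  value
}

# Usage, once the (hypothetical) variables are defined in ~/.Renviron
# or in your shell:
# key    <- read_credential("TWITTER_API_KEY")
# secret <- read_credential("TWITTER_API_SECRET")
```

The resulting `key` and `secret` can then be passed to `create_token()` exactly as in the code above.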

Exploratory analysis

Here we’ll summarize the dataset by visualizing the following:

- Number of tweets posted from 2010 to 2018

- Tweet frequency over the months

- Tweet frequency through a week

- Tweet density through a day

- Comparison of the number of re-tweets and original tweets

Year-wise tweets

We’ll be using the amazing ggplot2 and lubridate libraries to plot charts and work with dates. Go ahead and follow the code given below to install and load the packages:

[code language="r"]
install.packages("ggplot2")
install.packages("lubridate")
library("ggplot2")
library("lubridate")
[/code]

Execute the following code to plot the count of tweets over the years by breaking down into months:

[code language="r"]
ggplot(data = ew_tweets,
       aes(month(created_at, label = TRUE, abbr = TRUE),
           group = factor(year(created_at)),
           color = factor(year(created_at)))) +
  geom_line(stat = "count") +
  geom_point(stat = "count") +
  labs(x = "Month", y = "Number of tweets", colour = "Year") +
  theme_minimal()
[/code]

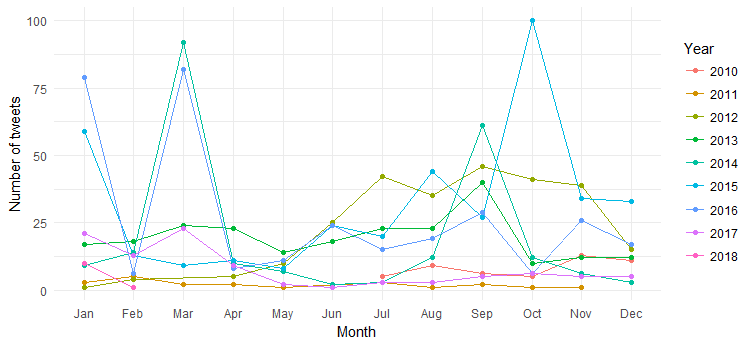

The result is the following chart:

We can see the month-wise break-up of the tweets over the years (with spikes in March 2014, October 2015 and March 2016), but the chart is difficult to interpret. Let’s now simplify it by plotting only year-wise tweet counts.

[code language="r"]
ggplot(data = ew_tweets, aes(x = year(created_at))) +
  geom_bar(aes(fill = ..count..)) +
  xlab("Year") + ylab("Number of tweets") +
  scale_x_continuous(breaks = 2010:2018) +
  theme_minimal() +
  scale_fill_gradient(low = "cadetblue3", high = "chartreuse4")
[/code]

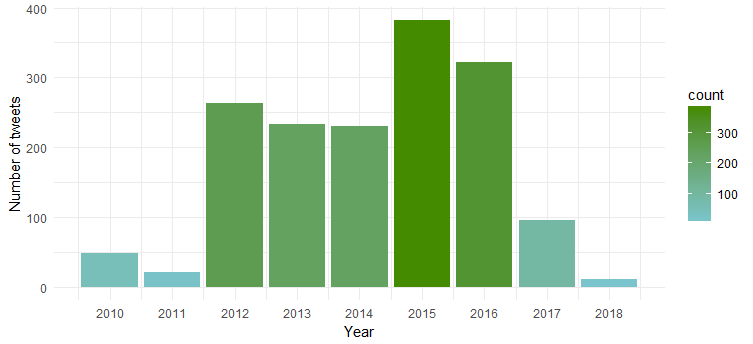

The resulting chart shows that she was most active in 2015 and 2016, while 2011 saw the least activity.
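The numbers behind the bar chart can also be checked directly. The following is a small sketch in base R that tabulates tweets per year; the timestamps are made-up stand-ins for `ew_tweets$created_at`, not real data.

```r
# Toy timestamps standing in for ew_tweets$created_at (invented values)
created_at <- as.POSIXct(
  c("2014-03-08 10:00:00", "2015-10-01 18:30:00",
    "2015-10-02 19:00:00", "2016-03-08 09:15:00"),
  tz = "UTC"
)

# Count tweets per calendar year
tweets_per_year <- table(format(created_at, "%Y"))
print(tweets_per_year)

# Year with the highest number of tweets
busiest_year <- names(tweets_per_year)[which.max(tweets_per_year)]
print(busiest_year)
```

With the real data, replacing the toy vector with `ew_tweets$created_at` should give the counts that the chart visualizes.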

Tweet frequency over the months

Let’s now find out whether she tweets evenly across the months of the year or whether there are specific months in which she tweets the most. Use the following code to create the chart:

[code language="r"]
ggplot(data = ew_tweets, aes(x = month(created_at, label = TRUE))) +
  geom_bar(aes(fill = ..count..)) +
  xlab("Month") + ylab("Number of tweets") +
  theme_minimal() +
  scale_fill_gradient(low = "cadetblue3", high = "chartreuse4")
[/code]

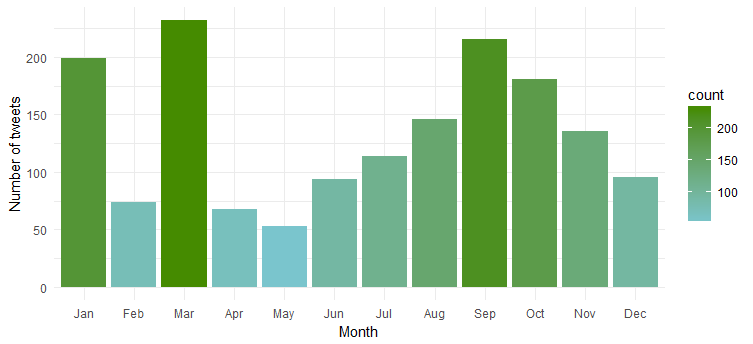

Clearly, she is most active in January, March and September.

Tweet frequency through a week

Is there a specific day of the week on which she is most active? Let’s plot the chart by executing the following code:

[code language="r"]
ggplot(data = ew_tweets, aes(x = wday(created_at, label = TRUE))) +
  geom_bar(aes(fill = ..count..)) +
  xlab("Day of the week") + ylab("Number of tweets") +
  theme_minimal() +
  scale_fill_gradient(low = "turquoise3", high = "darkgreen")
[/code]

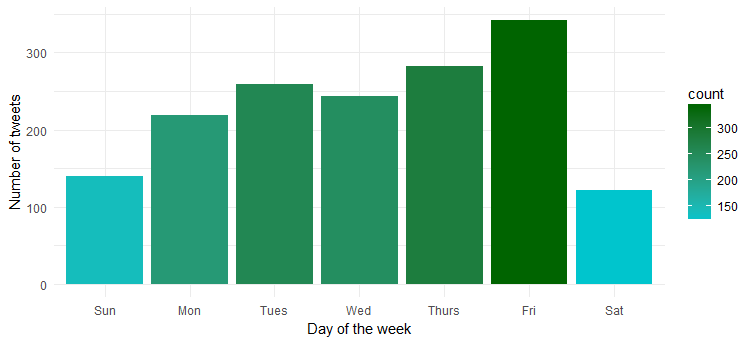

Hmm…she is most active on Friday. Probably getting ready to get into party mode?

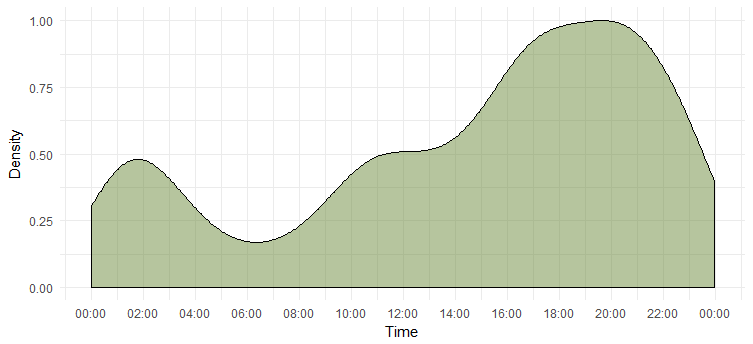

Tweet density through a day

We’ve figured out the most active day, but we don’t know the time at which she is most active. The following chart will give us the answer.

[code language="r"]
# package to store and format time of the day
install.packages("hms")

# package to add time breaks and labels
install.packages("scales")

library("hms")
library("scales")

# Extract only time from the timestamp, i.e., hour, minute and second
ew_tweets$time <- hms::hms(second(ew_tweets$created_at),
                           minute(ew_tweets$created_at),
                           hour(ew_tweets$created_at))

# Converting to `POSIXct` as ggplot isn’t compatible with `hms`
ew_tweets$time <- as.POSIXct(ew_tweets$time)

ggplot(data = ew_tweets) +
  geom_density(aes(x = time, y = ..scaled..),
               fill = "darkolivegreen4", alpha = 0.3) +
  xlab("Time") + ylab("Density") +
  scale_x_datetime(breaks = date_breaks("2 hours"),
                   labels = date_format("%H:%M")) +
  theme_minimal()
[/code]

This tells us that she is most active between 6 and 8 p.m. Note that the timezone is UTC (this can be checked using the `unclass` function). Keep this in mind when tweeting at Emma.

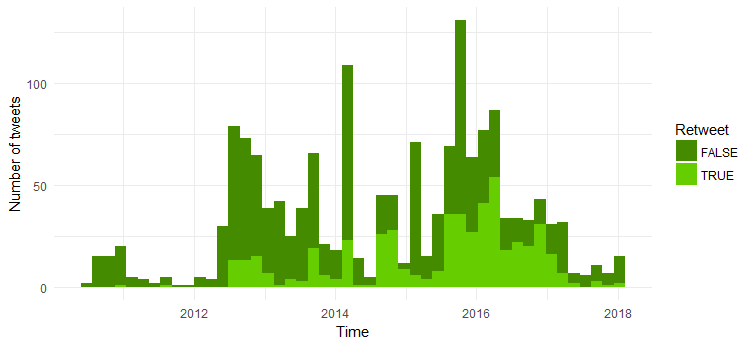
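Since the timestamps are in UTC, it can help to see what a given time means in your own timezone. Here is a minimal sketch in base R; the timestamp is invented and `Asia/Kolkata` is only an example zone, so substitute your own.

```r
# A made-up tweet time in UTC, standing in for one value of created_at
tweet_time_utc <- as.POSIXct("2018-03-01 19:30:00", tz = "UTC")

# The timezone is stored in the "tzone" attribute
# (this is what unclass() reveals)
print(attr(tweet_time_utc, "tzone"))

# Display the same instant in another timezone.
# 19:30 UTC corresponds to 01:00 on the next day in India (UTC+5:30).
local_time <- format(tweet_time_utc, format = "%Y-%m-%d %H:%M",
                     tz = "Asia/Kolkata", usetz = TRUE)
print(local_time)
```

Only the display changes here; the underlying instant stays the same, so the density chart itself would simply shift along the time axis.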

Comparison of the number of re-tweets and original tweets

Now we’ll compare the number of original tweets and re-tweets. Given below is the code:

[code language="r"]
ggplot(data = ew_tweets, aes(x = created_at, fill = is_retweet)) +
  geom_histogram(bins = 48) +
  xlab("Time") + ylab("Number of tweets") + theme_minimal() +
  scale_fill_manual(values = c("chartreuse4", "chartreuse3"),
                    name = "Retweet")
[/code]

The majority of the tweets are original tweets. It is interesting to see that the number of re-tweets increased from 2014 onwards.
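The same comparison can be expressed as numbers. The sketch below computes the overall share of re-tweets and the share per year in base R; the data frame is a toy example with invented values that only mimics the `created_at` and `is_retweet` columns.

```r
# Toy data mimicking two columns of ew_tweets (invented values)
tweets <- data.frame(
  created_at = as.POSIXct(
    c("2013-05-01 12:00:00", "2013-06-01 12:00:00",
      "2014-02-01 12:00:00", "2014-03-01 12:00:00",
      "2015-01-01 12:00:00"),
    tz = "UTC"
  ),
  is_retweet = c(FALSE, FALSE, TRUE, FALSE, TRUE)
)

# Overall share of re-tweets (the mean of a logical vector is the
# proportion of TRUE values)
retweet_share <- mean(tweets$is_retweet)
print(retweet_share)

# Share of re-tweets per year
share_by_year <- tapply(tweets$is_retweet,
                        format(tweets$created_at, "%Y"), mean)
print(share_by_year)
```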

Text Mining

Let’s now move on to a more interesting area: we’ll apply text mining techniques, including NLP, to find out the following:

1. Frequently used hashtags

2. Word cloud of the tweet texts

3. Sentiment analysis

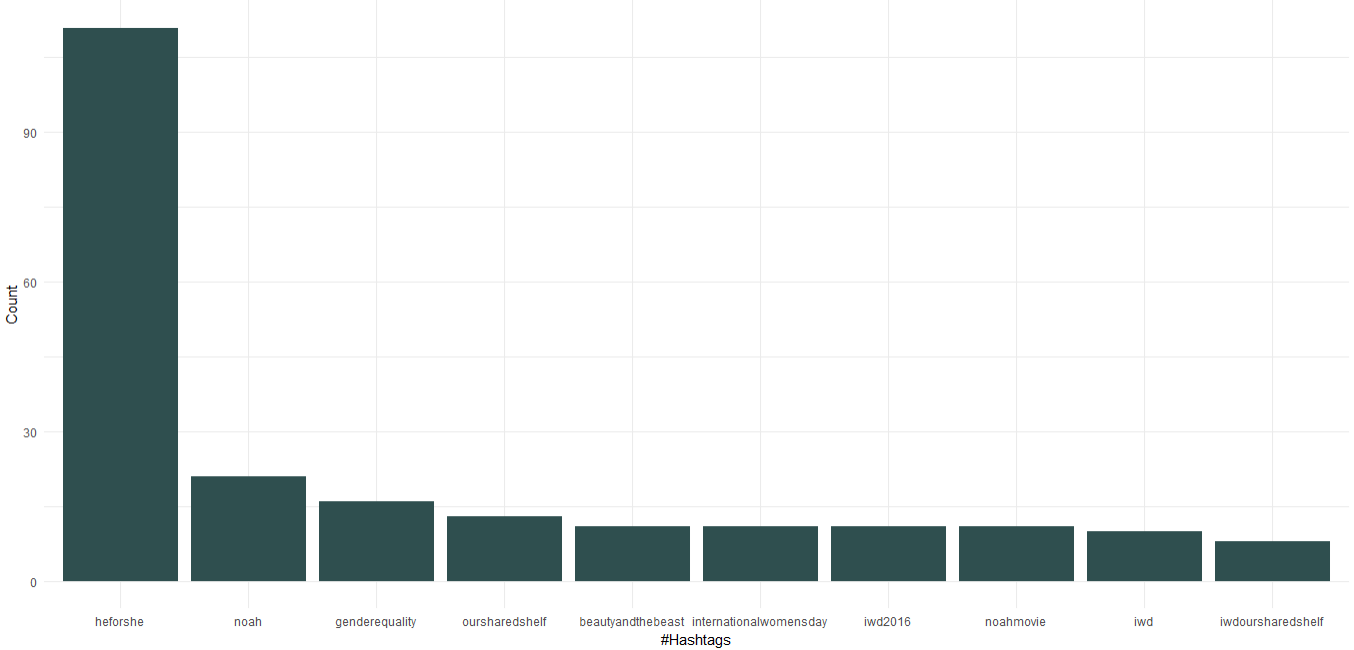

1. Frequently used hashtags

The downloaded dataset already has a column containing hashtags; we’ll be using that to find out the top 10 hashtags used by Emma. Given below is the code to create the chart for the hashtags:

[code language="r"]
# Package to easily work with data frames
install.packages("dplyr")
library("dplyr")

# Getting the hashtags from the list
ew_tags_split <- unlist(strsplit(as.character(unlist(ew_tweets$hashtags)),
                                 '^c\\(|,|"|\\)'))

# Dropping missing values and entries that are empty after trimming white space
ew_tags <- nchar(trimws(ew_tags_split)) > 0 & !is.na(ew_tags_split)

# Counting each hashtag (case-insensitively) and keeping the top 10
ew_tag_df <- as.data.frame(table(tolower(ew_tags_split[ew_tags])),
                           stringsAsFactors = FALSE, responseName = "n")
ew_tag_df <- head(arrange(ew_tag_df, desc(n)), 10)

ggplot(ew_tag_df, aes(x = reorder(Var1, -n), y = n)) +
  geom_bar(stat = "identity", fill = "darkslategray") +
  theme_minimal() +
  xlab("#Hashtags") + ylab("Count")
[/code]

We can see that, as a UN Women Goodwill Ambassador, Emma Watson has promoted the “HeForShe” campaign, which is focused on gender equality. Apart from that, she has promoted her book club called “Our Shared Shelf” and “International Women’s Day”. Coming to the movies, “Noah” and “Beauty and the Beast” feature in the top 10 hashtags.
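When the hashtags column is a list of character vectors (with `NA` for tweets without hashtags, which is an assumption about the data layout here), the counting step can be sketched without any string splitting. The list below is invented for illustration.

```r
# Toy list column standing in for ew_tweets$hashtags (invented values)
hashtags <- list(
  c("HeForShe", "IWD2016"),
  NA_character_,
  "heforshe",
  c("OurSharedShelf", "HeForShe")
)

# Flatten, normalize case and drop missing values
all_tags <- tolower(unlist(hashtags))
all_tags <- all_tags[!is.na(all_tags)]

# Count and sort in decreasing order; head() keeps at most 10 entries
tag_counts <- sort(table(all_tags), decreasing = TRUE)
print(head(tag_counts, 10))
```

Lower-casing before counting matters because “HeForShe” and “heforshe” would otherwise be treated as different hashtags.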

2. Word cloud

Now we’ll analyze the tweet text to find out the most frequent words and create a word cloud. Execute the following code to proceed:

[code language="r"]
# install text mining and word cloud packages
install.packages(c("tm", "wordcloud"))
library("tm")
library("wordcloud")

tweet_text <- ew_tweets$text

# Removing numbers, punctuation, links and runs of a repeated letter
tweet_text <- gsub('[[:digit:]]+', '', tweet_text)
tweet_text <- gsub('[[:punct:]]+', '', tweet_text)
tweet_text <- gsub("http[[:alnum:]]*", "", tweet_text)
# Note: this also drops legitimate double letters (e.g. "ll")
tweet_text <- gsub("([[:alpha:]])\\1+", "", tweet_text)

# creating a text corpus
docs <- Corpus(VectorSource(tweet_text))

# converting the encoding to UTF-8 to handle funny characters
docs <- tm_map(docs, content_transformer(function(x) iconv(enc2utf8(x), sub = "byte")))

# Converting the text to lower case
docs <- tm_map(docs, content_transformer(tolower))

# Removing common English stopwords
docs <- tm_map(docs, removeWords, stopwords("english"))

# Removing stopwords specified by us as a character vector
docs <- tm_map(docs, removeWords, c("amp"))

# creating term document matrix
tdm <- TermDocumentMatrix(docs)

# defining tdm as matrix
m <- as.matrix(tdm)

# getting word counts in decreasing order
word_freqs <- sort(rowSums(m), decreasing = TRUE)

# creating a data frame with words and their frequencies
ew_wf <- data.frame(word = names(word_freqs), freq = word_freqs)

# plotting wordcloud
set.seed(1234)
wordcloud(words = ew_wf$word, freq = ew_wf$freq,
          min.freq = 1, scale = c(1.8, .5),
          max.words = 200, random.order = FALSE, rot.per = 0.15,
          colors = brewer.pal(8, "Dark2"))
[/code]

Clearly, she has heavily promoted the “HeforShe” campaign. Other frequently used words are “thank”, “love”, “women”, “gender” and “UNWomen”. This is in line with the hashtags, which suggests that her Twitter activity is quite focused on women’s issues.

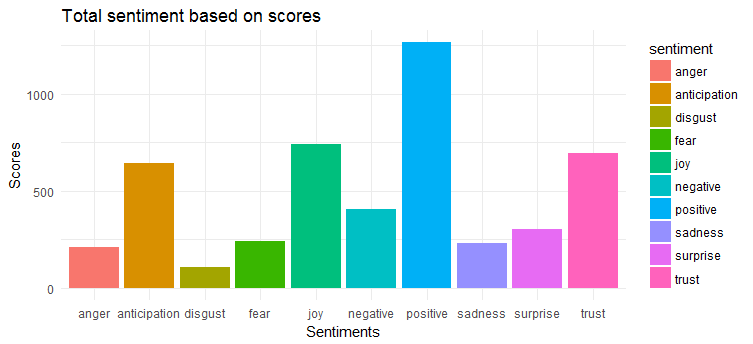

3. Sentiment analysis

For sentiment extraction and plotting we’ll use the syuzhet package. This package is based on an emotion lexicon which maps words to various emotions (joy, fear, anger, surprise, etc.) and to sentiment polarity (positive/negative). We’ll calculate the emotion scores based on the words present in the tweets and plot them.

[code language="r"]
install.packages("syuzhet")
library("syuzhet")

# Converting tweets to ASCII to tackle strange characters
tweet_text <- iconv(tweet_text, from = "UTF-8", to = "ASCII", sub = "")

# removing retweets
tweet_text <- gsub("(RT|via)((?:\\b\\W*@\\w+)+)", "", tweet_text)

# removing mentions
tweet_text <- gsub("@\\w+", "", tweet_text)

# one row per tweet, one column per emotion/polarity
ew_sentiment <- get_nrc_sentiment(tweet_text)

# total score per sentiment across all tweets
sentimentscores <- data.frame(colSums(ew_sentiment))
names(sentimentscores) <- "Score"
sentimentscores <- cbind("sentiment" = rownames(sentimentscores), sentimentscores)
rownames(sentimentscores) <- NULL

ggplot(data = sentimentscores, aes(x = sentiment, y = Score)) +
  geom_bar(aes(fill = sentiment), stat = "identity") +
  xlab("Sentiments") + ylab("Scores") +
  ggtitle("Total sentiment based on scores") +
  theme_minimal() +
  # applied after theme_minimal() so that it is not overridden
  theme(legend.position = "none")
[/code]

The resulting chart shows that the tweets have a largely positive sentiment. The top three most expressed emotions are ‘joy’, ‘trust’ and ‘anticipation’.
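To make the idea of lexicon-based scoring more concrete, here is a toy sketch in base R. The mini-lexicon and the function `score_emotions` are hypothetical and written only for this illustration; they are not the NRC lexicon or the syuzhet implementation.

```r
# Hypothetical mini-lexicon mapping words to emotions (NOT the NRC lexicon)
lexicon <- data.frame(
  word    = c("love", "thank", "happy", "afraid", "angry"),
  emotion = c("joy", "trust", "joy", "fear", "anger"),
  stringsAsFactors = FALSE
)

# Count how many words in the texts map to each emotion
score_emotions <- function(texts, lexicon) {
  # split into lower-case words on anything that is not a letter
  words <- unlist(strsplit(tolower(texts), "[^a-z]+"))
  # look up each word; words missing from the lexicon become NA
  matched <- lexicon$emotion[match(words, lexicon$word)]
  # tabulate, keeping emotions with a zero count
  table(factor(matched, levels = unique(lexicon$emotion)))
}

# Invented example texts
texts <- c("Thank you, I love this!", "So happy today", "I am not afraid")
scores <- score_emotions(texts, lexicon)
print(scores)
```

Note that “not afraid” still counts towards fear in this sketch: simple word matching ignores negation, which is worth keeping in mind when interpreting lexicon-based scores.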

Over to you

In this study we covered exploratory data analysis and text mining techniques to understand the tweeting patterns and underlying themes of the tweets posted by Emma Watson. Further analysis can be performed to find the most frequently mentioned Twitter users, create a network graph and classify the tweets using topic modelling.
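As a starting point for the first of these ideas, mentions can be pulled out of the raw tweet text with a regular expression. This is a minimal sketch in base R; the example texts are invented, and on the real data the input would be the unprocessed `ew_tweets$text` (before punctuation is removed).

```r
# Invented example texts standing in for the raw tweet text
texts <- c("Thank you @UN_Women and @HeForShe!",
           "RT @HeForShe: join us",
           "No mentions here")

# Extract every @handle from every text
matches <- regmatches(texts, gregexpr("@\\w+", texts))

# Flatten, normalize case, then count and sort
mentions <- tolower(unlist(matches))
mention_counts <- sort(table(mentions), decreasing = TRUE)
print(mention_counts)
```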

Follow this tutorial and share your findings in the comments section.