In the digital age, businesses rely heavily on data to make well-informed decisions. Yet the sheer volume of available data makes it hard to sort through it and find the information that matters. This is why data extraction techniques play such a vital role. Data extraction is the process of retrieving specific data from diverse sources and converting it into a structured format suitable for further analysis. In this guide, we explore the main techniques used for data extraction, the challenges it presents, and the best practices that lead to reliable results.

The Importance of Data Extraction

Data extraction holds a pivotal position in the data lifecycle because it turns raw, unstructured data into a form from which businesses can derive valuable insights. By extracting relevant information, organizations can gain a deeper understanding of their customers, discern market trends, and identify potential growth opportunities.

Data extraction consists of obtaining relevant information from structured and unstructured sources, such as databases, websites, documents, and social media. The extracted data is then transformed into a consistent structure and loaded into a target system, typically a database or data warehouse. Structured data of this kind streamlines further analysis and equips organizations to make well-grounded decisions.

Common Techniques for Data Extraction

Web Scraping

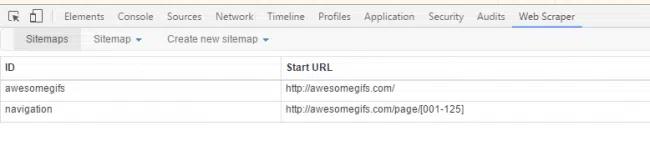

Web scraping is a widely used method for extracting data from websites. It involves automatically crawling web pages and parsing their HTML or XML to retrieve specific data points. Libraries and frameworks such as BeautifulSoup (for parsing HTML) and Scrapy (for crawling and scraping) are frequently used for this purpose.
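To make the parsing step concrete, here is a minimal sketch that uses Python's built-in `html.parser` module instead of BeautifulSoup, so it runs without extra dependencies. It works on an inline, hypothetical HTML fragment: the `product`, `name`, and `price` class names are assumptions for illustration, and a real scraper would first download the page and then adapt the parser to the site's actual markup.

```python
from html.parser import HTMLParser

# Hypothetical page fragment; a real scraper would download the page first
# (for example with urllib.request) and match the site's actual markup.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Desk Lamp</span><span class="price">$24.99</span></li>
  <li class="product"><span class="name">Office Chair</span><span class="price">$149.00</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects name/price pairs from <li class="product"> elements (assumed markup)."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._current = None  # dict for the product being parsed, else None
        self._field = None    # "name" or "price" while inside a matching <span>

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self._current = {}
        elif tag == "span" and self._current is not None:
            for field in ("name", "price"):
                if field in classes:
                    self._field = field

    def handle_data(self, data):
        # Keep only text that sits inside a name/price span of a product.
        if self._current is not None and self._field:
            self._current[self._field] = self._current.get(self._field, "") + data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current is not None:
            self.products.append(self._current)
            self._current = None


parser = ProductParser()
parser.feed(SAMPLE_HTML)
print(parser.products)
# [{'name': 'Desk Lamp', 'price': '$24.99'}, {'name': 'Office Chair', 'price': '$149.00'}]
```

An event-driven parser like this keeps very little state, which is why libraries such as BeautifulSoup, which build a navigable tree, are usually more convenient once the markup gets more complex.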

Database Extraction

Many businesses store their data in structured, relational databases. Data is extracted from these databases with SQL (Structured Query Language) queries that select specific columns or rows. For larger pipelines, Extract, Transform, Load (ETL) tools such as Informatica and Talend are commonly used to automate this step.
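A minimal sketch of query-based extraction, assuming a hypothetical `orders` table held in an in-memory SQLite database as a stand-in for a production system. It selects only the columns and rows it needs and passes the filter value as a query parameter rather than formatting it into the SQL string.

```python
import sqlite3

# In-memory database with a hypothetical "orders" table, standing in for a
# production database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, amount REAL, status TEXT)"
)
conn.executemany(
    "INSERT INTO orders (customer, amount, status) VALUES (?, ?, ?)",
    [
        ("alice", 120.0, "shipped"),
        ("bob", 35.5, "pending"),
        ("alice", 80.0, "shipped"),
        ("carol", 42.0, "cancelled"),
    ],
)


def extract_orders(conn, status):
    """Select only the needed columns and rows; '?' keeps the query parameterized."""
    query = "SELECT customer, amount FROM orders WHERE status = ? ORDER BY id"
    return conn.execute(query, (status,)).fetchall()


print(extract_orders(conn, "shipped"))  # [('alice', 120.0), ('alice', 80.0)]
```

The same pattern applies to other databases through their Python drivers. ETL tools generate and schedule queries like this one at scale.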

Text Extraction

Text extraction pulls data from unstructured text sources, such as documents, PDFs, or emails. Natural language processing (NLP) algorithms are typically used to identify and extract the relevant information, such as names, dates, or amounts.
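Full NLP pipelines are too large for a short example. The sketch below therefore swaps in a simpler, rule-based technique: regular expressions that pull well-formatted fields out of an email body. The patterns and the `INV-` invoice format are simplified assumptions. Rules like these work well for predictable formats but miss the variation that NLP models are designed to handle.

```python
import re

# Simplified patterns (assumptions); real-world formats are far more varied.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
DATE_RE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")  # ISO-style dates only
INVOICE_RE = re.compile(r"\bINV-\d+\b")          # hypothetical invoice-number format


def extract_fields(text):
    """Pull emails, dates, and invoice numbers out of free text."""
    return {
        "emails": EMAIL_RE.findall(text),
        "dates": DATE_RE.findall(text),
        "invoices": INVOICE_RE.findall(text),
    }


email_body = (
    "Hi, please send invoice INV-2041 to billing@example.com by 2024-03-15. "
    "Thanks, jane.doe@example.org"
)
print(extract_fields(email_body))
# {'emails': ['billing@example.com', 'jane.doe@example.org'], 'dates': ['2024-03-15'], 'invoices': ['INV-2041']}
```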

Extracting Data from Social Media

Companies can use social media data to conduct market research, analyze customer sentiment, and monitor their brands. This data can be extracted through the APIs that social media platforms provide or by scraping their web pages.
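Platform APIs typically return JSON and split large result sets into pages. The sketch below shows cursor-based pagination, a common pattern, but the response schema (`data`, `next_cursor`, `text`, `likes`) is hypothetical and not any real platform's API. `fetch_page` is a placeholder for the authenticated HTTP request a real client would make.

```python
import json

# Hypothetical API responses keyed by cursor; field names are assumptions,
# not any real platform's schema.
FAKE_PAGES = {
    None: '{"data": [{"id": "1", "text": "Love the new release!", "likes": 12}], "next_cursor": "abc"}',
    "abc": '{"data": [{"id": "2", "text": "Checkout keeps failing", "likes": 3}], "next_cursor": null}',
}


def fetch_page(cursor):
    """Placeholder for an authenticated HTTP call to a platform API."""
    return json.loads(FAKE_PAGES[cursor])


def extract_posts(fetch=fetch_page):
    """Follow the cursor from page to page, keeping only the fields we need."""
    posts, cursor = [], None
    while True:
        page = fetch(cursor)
        for item in page["data"]:
            posts.append({"id": item["id"], "text": item["text"], "likes": item.get("likes", 0)})
        cursor = page.get("next_cursor")
        if cursor is None:  # no more pages
            break
    return posts


for post in extract_posts():
    print(post["id"], post["likes"], post["text"])
```

Passing `fetch` as a parameter keeps the pagination logic separate from the transport, so the same loop can be tested offline and then pointed at a real client.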

Advanced Methods for Data Extraction

Natural Language Processing (NLP)

NLP techniques can be used to extract information from unstructured text. With methods such as topic modeling and text classification, businesses can derive valuable insights from large volumes of text data.
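To illustrate text classification, here is a sketch of a multinomial naive Bayes classifier written from scratch. Naive Bayes is our choice for the example, not a method the text prescribes. The labeled support tickets are hypothetical and far too few for real use. In practice a library such as scikit-learn, and much more training data, would be used.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesClassifier:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, docs, labels):
        self.doc_counts = Counter(labels)
        self.word_counts = {label: Counter() for label in self.doc_counts}
        for doc, label in zip(docs, labels):
            self.word_counts[label].update(tokenize(doc))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        self.n_docs = len(labels)
        return self

    def predict(self, doc):
        scores = {}
        for label, n in self.doc_counts.items():
            total = sum(self.word_counts[label].values())
            score = math.log(n / self.n_docs)  # log prior
            for word in tokenize(doc):
                # Smoothed log likelihood: unseen words get a small nonzero probability.
                score += math.log((self.word_counts[label][word] + 1) / (total + len(self.vocab)))
            scores[label] = score
        return max(scores, key=scores.get)


# Hypothetical labeled support tickets.
tickets = [
    ("I was charged twice on my invoice", "billing"),
    ("refund the extra charge on my card", "billing"),
    ("my package has not arrived yet", "shipping"),
    ("the delivery was late and the package damaged", "shipping"),
]
model = NaiveBayesClassifier().fit([t for t, _ in tickets], [label for _, label in tickets])
print(model.predict("why was my card charged twice"))  # billing
print(model.predict("the package is late"))            # shipping
```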

Image and Video Analysis

Extracting data from images and videos has become increasingly important. Computer vision techniques such as image classification and object detection make it possible to extract relevant data from visual sources.

Machine Learning

Machine learning algorithms can be trained to automatically extract specific data points from diverse sources. Leveraging techniques like supervised learning and deep learning, businesses can automate the data extraction process and enhance accuracy.
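As a toy illustration of supervised extraction, the sketch below trains a perceptron to label tokens as prices. The hand-crafted features and the tiny training set are assumptions chosen for readability. Production systems learn from far more data, usually with richer sequence models, but the idea is the same: learn the extraction rule from labeled examples instead of writing it by hand.

```python
CURRENCY_SYMBOLS = ("$", "€", "£")


def features(token):
    """Hand-crafted binary features for one token (an illustrative assumption)."""
    return [
        1,                                         # bias
        int(any(ch.isdigit() for ch in token)),   # contains a digit
        int(token.startswith(CURRENCY_SYMBOLS)),  # starts with a currency symbol
        int("." in token),                         # contains a decimal point
        int(token.isalpha()),                      # purely alphabetic
    ]


def predict(weights, x):
    return 1 if sum(w * xi for w, xi in zip(weights, x)) > 0 else 0


def train(examples, max_epochs=100):
    """Classic perceptron: nudge the weights toward every misclassified example."""
    weights = [0] * len(features(""))
    for _ in range(max_epochs):
        mistakes = 0
        for token, label in examples:
            x = features(token)
            error = label - predict(weights, x)  # +1, -1, or 0
            if error:
                weights = [w + error * xi for w, xi in zip(weights, x)]
                mistakes += 1
        if mistakes == 0:  # every training example is now classified correctly
            break
    return weights


# Hypothetical labeled tokens: 1 = price, 0 = anything else.
TRAINING = [("$19.99", 1), ("$5", 1), ("2024", 0), ("shipping", 0), ("3.5", 0), ("€12.00", 1)]
WEIGHTS = train(TRAINING)


def extract_prices(text):
    return [tok for tok in text.split() if predict(WEIGHTS, features(tok))]


print(extract_prices("Total: $42.50 for 3 items"))  # ['$42.50']
```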

Data Integration

Data extraction often involves combining information from multiple sources to build a cohesive picture. Data fusion merges records from various sources and transforms them into a consistent format, while data virtualization exposes those sources through a single access layer. Both approaches create a unified view of the data.
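A very small instance of merging sources is joining records on a normalized key. The sketch below combines two hypothetical extracts (a CRM list and a billing list) into one view keyed by email address. The field names are assumptions. Real data fusion also has to resolve conflicting values and fuzzy matches, which this sketch does not attempt.

```python
# Hypothetical extracts from two systems with different field names.
crm = [
    {"email": "Alice@Example.com ", "name": "Alice Smith"},
    {"email": "bob@example.com", "name": "Bob Jones"},
]
billing = [
    {"customer_email": "alice@example.com", "balance": 120.0},
    {"customer_email": "carol@example.com", "balance": 15.0},
]


def normalize_email(value):
    """Bring keys into a consistent format so records from both sources line up."""
    return value.strip().lower()


def integrate(crm, billing):
    unified = {}
    for rec in crm:
        key = normalize_email(rec["email"])
        unified.setdefault(key, {"email": key})["name"] = rec["name"]
    for rec in billing:
        key = normalize_email(rec["customer_email"])
        unified.setdefault(key, {"email": key})["balance"] = rec["balance"]
    return unified


for record in integrate(crm, billing).values():
    print(record)
```

Records present in only one source (Bob and Carol here) are kept with the fields that source provides, so gaps stay visible instead of silently disappearing.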

Challenges in Data Extraction

While data extraction techniques offer numerous advantages, organizations may encounter several challenges during the extraction process:

Data Quality: Ensuring the accuracy and reliability of extracted data can be challenging, particularly when dealing with unstructured or incomplete data sources.

Data Volume and Scalability: Extracting and processing large volumes of data can be time-consuming and resource-intensive. Organizations need to design efficient extraction workflows that continue to perform as data volumes grow.

Data Privacy and Compliance: Extracting data from external sources, such as websites and social media, raises concerns about data privacy and compliance with regulations like GDPR (General Data Protection Regulation).

Data Complexity: Unstructured data sources, such as text and images, can be difficult to extract and analyze. Advanced techniques such as NLP and computer vision may be necessary to manage this complexity.
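One common way to ease the volume and scalability challenge is to stream query results in fixed-size batches instead of loading everything into memory at once. The sketch below does this with the standard `sqlite3` cursor's `fetchmany`. The `events` table and the batch size are assumptions, and other database drivers that follow Python's DB-API offer the same method.

```python
import sqlite3
from typing import Iterator, List, Tuple


def extract_in_batches(conn, query, params=(), batch_size=1000) -> Iterator[List[Tuple]]:
    """Yield query results in fixed-size batches instead of loading every row at once."""
    cursor = conn.execute(query, params)
    while True:
        rows = cursor.fetchmany(batch_size)
        if not rows:
            break
        yield rows


# Hypothetical source table with 2,500 rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, value INTEGER)")
conn.executemany("INSERT INTO events (value) VALUES (?)", ((i,) for i in range(2500)))

total = 0
for batch in extract_in_batches(conn, "SELECT id, value FROM events", batch_size=1000):
    total += sum(value for _, value in batch)  # process each batch, then let it go
    print(len(batch))                          # 1000, 1000, 500
```

Because the function is a generator, memory use depends on the batch size rather than the size of the table.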

Best Practices for Data Extraction

To ensure successful data extraction and maximize the value derived from extracted data, organizations should adhere to these best practices:

Define Clear Objectives: Clearly defining the objectives of the data extraction process is crucial to ensure that the extracted data aligns with the business goals.

Data Quality Control: Implement measures to maintain data quality, such as data cleansing and validation techniques, to ensure the accuracy and reliability of extracted data.

Automate the Process: Automation tools and technologies streamline the data extraction process, reduce manual effort, and increase efficiency.

Data Privacy and Security: Ensure that data extraction processes comply with data privacy regulations and implement proper security measures to protect sensitive information.

Regular Monitoring and Maintenance: Regularly monitor the data extraction process, identify issues or discrepancies, and perform necessary maintenance tasks to ensure data integrity.
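The data quality control practice can be sketched as a small cleansing and validation step that runs on every extracted record. The required fields, the deliberately loose email pattern, and the rules themselves are assumptions for illustration. Keeping rejected records together with their reasons, rather than dropping them silently, also gives the monitoring step something concrete to track.

```python
import re

# Illustrative rules (assumptions): adjust to your own schema.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately loose check
REQUIRED = ("id", "email", "amount")


def cleanse(record):
    """Trim whitespace from strings and normalize email case."""
    cleaned = {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}
    if isinstance(cleaned.get("email"), str):
        cleaned["email"] = cleaned["email"].lower()
    return cleaned


def validate(record):
    """Return a list of problems; an empty list means the record passed."""
    errors = []
    for field in REQUIRED:
        if record.get(field) in (None, ""):
            errors.append(f"missing {field}")
    email = record.get("email")
    if email and not EMAIL_RE.match(email):
        errors.append("invalid email")
    amount = record.get("amount")
    if amount not in (None, ""):
        try:
            if float(amount) < 0:
                errors.append("negative amount")
        except (TypeError, ValueError):
            errors.append("non-numeric amount")
    return errors


def run_quality_checks(records):
    valid, rejected = [], []
    for raw in records:
        rec = cleanse(raw)
        errs = validate(rec)
        if errs:
            rejected.append((rec, errs))
        else:
            valid.append(rec)
    return valid, rejected


valid, rejected = run_quality_checks([
    {"id": 1, "email": " Alice@Example.com ", "amount": "19.99"},
    {"id": 2, "email": "not-an-email", "amount": "5"},
    {"id": 3, "email": "carol@example.com", "amount": ""},
])
print(len(valid), [errs for _, errs in rejected])  # 1 [['invalid email'], ['missing amount']]
```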

Conclusion

Data extraction techniques are indispensable for businesses that want to turn the vast amounts of available data into informed decisions. By employing the right extraction methods, organizations can unlock valuable insights and achieve their business objectives. Doing so successfully, however, requires acknowledging the challenges involved and following best practices, so that the extracted data delivers its full value.