The digital revolution has led organizations to consolidate a wide range of data sources, including unstructured data. It is also driving them to deliver fast, accurate intelligence across multiple channels. The drive to move up the value chain is pushing traditional businesses to increase operational efficiency by moving online.

Today, data analysts can get a clearer picture of a company's performance by leveraging data crawling techniques. Agile businesses continuously tap up-to-date data from news sites, blogs, government publications, and other web sources to gain insights, which they then use for timely notifications, analysis, and better decision-making.

Extracting web data with precision has traditionally been an expensive venture. New techniques such as automated web data extraction offer an advanced alternative to manual web scraping and hand-written scraper scripts. Automated web data extraction and analysis can be a cost-effective solution for organizations with limited expertise and IT infrastructure.

An automated web data collection solution can:

- React to market changes faster by detecting market-moving events and running targeted keyword searches

- Improve accuracy by eliminating manual intervention in 90 percent of data uploads

- Support in-depth research by targeting the precise sources and topics you want to monitor

- Support decision-making by collecting the operational data that feeds trend analysis

Automated web data monitoring and extraction services extract information in real time to support better decision-making. However, significant post-processing is required to make sense of the data: it needs to be structured and organized into XML, CSV, or other common formats before it is immediately usable. Some data crawling techniques also enable cost-effective custom research that informs strategic investment decisions.
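As an example of the post-processing step, here is a minimal Python sketch that serializes already-extracted records into CSV and XML using the standard `csv` and `xml.etree.ElementTree` modules. The record fields (`source`, `title`, `published`) and their values are hypothetical; a real pipeline would first need to clean and validate whatever the crawler returned.

```python
import csv
import io
import xml.etree.ElementTree as ET

# Hypothetical records, as they might look after extraction from web pages.
records = [
    {"source": "news", "title": "Quarterly results announced", "published": "2024-01-15"},
    {"source": "blog", "title": "Market outlook", "published": "2024-01-16"},
]

FIELDS = ["source", "title", "published"]


def to_csv(rows, fields):
    """Serialize a list of dicts to a CSV string with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=fields)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def to_xml(rows, fields):
    """Serialize a list of dicts to XML: <records><record>...</record></records>."""
    root = ET.Element("records")
    for row in rows:
        rec = ET.SubElement(root, "record")
        for field in fields:
            child = ET.SubElement(rec, field)
            child.text = str(row.get(field, ""))  # missing fields become empty elements
    return ET.tostring(root, encoding="unicode")


csv_text = to_csv(records, FIELDS)
xml_text = to_xml(records, FIELDS)
print(csv_text)
print(xml_text)
```

Keeping one explicit field list for both formats means the CSV header and the XML element names always agree.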

The goal is to tap the potential of each data set, whether its shelf life is days, weeks, or months. Using innovative data crawling tools to target the required data accurately provides real-time transparency and market visibility.

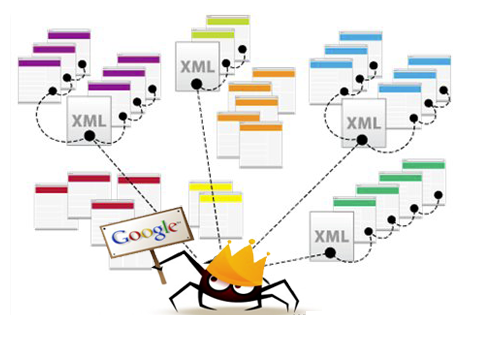

Crawler-based search engines rely on web crawling for operations such as storing pages in a database, indexing, ranking, and displaying results to the user. Data crawling helps these engines return better results when users search for the information they need.
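To illustrate the storing, indexing, and ranking steps, here is a toy inverted index in Python. It is a deliberate simplification under stated assumptions: pages are plain strings keyed by placeholder URLs, and "ranking" is just the summed count of query terms on each page. Production search engines use far richer ranking signals.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map each term to {url: number of times the term appears on that page}."""
    index = defaultdict(dict)
    for url, text in pages.items():
        for term in tokenize(text):
            index[term][url] = index[term].get(url, 0) + 1
    return index


def search(index, query):
    """Rank pages by the summed counts of the query terms they contain."""
    scores = defaultdict(int)
    for term in tokenize(query):
        for url, count in index.get(term, {}).items():
            scores[url] += count
    # Highest score first; ties broken alphabetically by URL for stable output.
    return sorted(scores, key=lambda url: (-scores[url], url))


# Hypothetical crawled pages (placeholder URLs).
pages = {
    "https://example.com/a": "Web crawling and indexing explained",
    "https://example.com/b": "Crawling the web: crawling at scale",
    "https://example.com/c": "Database tuning tips",
}
index = build_index(pages)
print(search(index, "web crawling"))  # ['https://example.com/b', 'https://example.com/a']
```

Page b ranks first because it mentions "crawling" twice, while page c never appears because it contains neither query term.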

The main function of a crawler is to download web pages, extract the links on each page, and follow those links. Crawlers are also used for other kinds of web page processing, such as page data mining and, by spammers, email address harvesting.
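The download, extract, follow loop described above can be sketched as a breadth-first crawl using only the Python standard library. This is a minimal sketch under assumptions: the `fetch` function is passed in so the crawler can run against the hypothetical in-memory `SITE` below instead of the network, and a real crawler would also need an HTTP client, robots.txt handling, rate limiting, and error handling.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collect the href targets of <a> tags in an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(base_url, html):
    """Return absolute URLs (with #fragments removed) for every link in the page."""
    parser = LinkExtractor()
    parser.feed(html)
    return [urldefrag(urljoin(base_url, href))[0] for href in parser.links]


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl: download a page, extract its links, follow them.

    `fetch` maps a URL to its HTML, or None if the page cannot be downloaded.
    """
    seen = {start_url}
    queue = deque([start_url])
    pages = {}
    while queue and len(pages) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # broken link or failed download
        pages[url] = html
        for link in extract_links(url, html):
            if link not in seen:  # never queue the same URL twice
                seen.add(link)
                queue.append(link)
    return pages


# A tiny hypothetical "web" standing in for real HTTP requests.
SITE = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog#top">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/about">About</a> <a href="/missing">Broken</a>',
}

result = crawl("https://example.com/", SITE.get)
print(sorted(result))
```

The `seen` set is what keeps the crawler from looping forever on pages that link back to each other, such as the home and about pages here.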

How Data Crawling Enhances Operational Efficiency

A crawler can be divided into two components:

- Spider

- Crawl Manager

The Spider (or Crawler) is the software that searches for and collects data on behalf of the search engine. It downloads pages, reads and analyzes their content, follows their links, stores the required data, and finally makes it accessible to other parts of the search engine. A single Spider cannot search the whole web, so each search engine needs several Spiders. The more Spiders are employed, the more of the web can be covered, and the more complete and accurate the information added to the database is likely to be.

The other important part of a crawler is the Crawl Manager, which commands and manages the Spiders. Its main job is to specify which pages the Spiders should review. It also sets the crawling strategy for features, links, media, and other elements, and stores the crawl results in a designated table in its database. As a result, search engines can store each website's details in their databases and base their rankings on that stored data.
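The division of labor between Spiders and the Crawl Manager can be sketched in Python. This is a simplified illustration, not how any particular search engine works: the `Spider` and `CrawlManager` classes, the in-memory `WEB` dictionary, and the dictionary used as a results "table" are all hypothetical stand-ins. The manager keeps the frontier of pages to review, hands each Spider at most one URL per round, runs the Spiders in parallel threads, and records each result along with the Spider that fetched it.

```python
from collections import deque
from concurrent.futures import ThreadPoolExecutor


class Spider:
    """Downloads the pages the Crawl Manager assigns to it."""

    def __init__(self, name, fetch):
        self.name = name
        self.fetch = fetch  # assumed callable: url -> (content, links) or None

    def visit(self, url):
        return url, self.name, self.fetch(url)


class CrawlManager:
    """Decides which pages the Spiders review and stores what they return."""

    def __init__(self, spiders, max_pages=100):
        self.spiders = spiders
        self.max_pages = max_pages
        self.frontier = deque()  # URLs waiting to be reviewed
        self.seen = set()        # URLs already scheduled, to avoid repeats
        self.table = {}          # stands in for the results table in a database

    def schedule(self, url):
        if url not in self.seen:
            self.seen.add(url)
            self.frontier.append(url)

    def run(self, seeds):
        for url in seeds:
            self.schedule(url)
        with ThreadPoolExecutor(max_workers=len(self.spiders)) as pool:
            while self.frontier and len(self.table) < self.max_pages:
                # Assign at most one URL per Spider in each round.
                room = min(len(self.spiders), self.max_pages - len(self.table))
                batch = [self.frontier.popleft() for _ in range(min(room, len(self.frontier)))]
                jobs = [pool.submit(s.visit, url) for s, url in zip(self.spiders, batch)]
                for job in jobs:
                    url, spider_name, result = job.result()
                    if result is None:  # fetch failed; skip this page
                        continue
                    content, links = result
                    self.table[url] = {"spider": spider_name, "content": content}
                    for link in links:
                        self.schedule(link)
        return self.table


# Hypothetical pages standing in for the web: url -> (content, outgoing links).
WEB = {
    "https://example.com/a": ("Page A", ["https://example.com/b", "https://example.com/c"]),
    "https://example.com/b": ("Page B", ["https://example.com/d"]),
    "https://example.com/c": ("Page C", ["https://example.com/a"]),
    "https://example.com/d": ("Page D", []),
}

manager = CrawlManager([Spider("spider-1", WEB.get), Spider("spider-2", WEB.get)])
table = manager.run(["https://example.com/a"])
for url, row in table.items():
    print(url, row["spider"])
```

Keeping deduplication and scheduling in the manager, rather than in each Spider, is what lets several Spiders work in parallel without fetching the same page twice.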

How to Increase Operational Efficiency

Every organization needs a proactive approach to improving customer service, optimizing business processes, and enhancing the operational efficiency of its systems. The major challenge is to integrate innovative technologies, analyze and interpret the data, and act on the resulting insights to make operations more efficient.

Ways to optimize server performance:

- To speed up queries and read operations, SQL Server maintains indexes on the data stored in its databases. Over time, however, these indexes can become fragmented, so it is important to schedule maintenance operations such as index defragmentation on a regular basis. Because these operations can temporarily prevent data from being written to or read from the indexes, schedule them during off-peak hours.

- Caching can store a large volume of frequently requested content, including query results and documents, so that it can be served without going back to the database each time. Site administrators can create their own cache profiles to meet users' needs.

- To avoid contention between search crawling and user traffic, add a separate server to handle crawl requests. This reduces the load on the user-facing web front-end servers, particularly during indexing operations.

- Contention between user traffic and database traffic can be reduced by isolating the connections between the front-end servers and SQL Server on separate physical networks or virtual LANs (VLANs).
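The index-maintenance advice can be made concrete with a minimal Python sketch that turns fragmentation readings into T-SQL `ALTER INDEX ... REORGANIZE` or `REBUILD` statements, and only emits them inside an off-peak window, since maintenance can interfere with normal reads and writes. It assumes the readings come from SQL Server's `sys.dm_db_index_physical_stats` view (its `avg_fragmentation_in_percent` column). The 5% and 30% thresholds are commonly cited starting points rather than fixed rules, and the table names, index names, and the 01:00 to 05:00 window are hypothetical. The sketch prints the statements; it does not connect to a database.

```python
from datetime import time

# Assumed thresholds on avg_fragmentation_in_percent; common starting points,
# not fixed rules, and worth tuning per workload.
REORGANIZE_ABOVE = 5.0
REBUILD_ABOVE = 30.0


def maintenance_statement(schema, table, index, fragmentation):
    """Return a T-SQL ALTER INDEX statement for one index, or None if none is needed."""
    if fragmentation > REBUILD_ABOVE:
        action = "REBUILD"
    elif fragmentation > REORGANIZE_ABOVE:
        action = "REORGANIZE"
    else:
        return None
    return f"ALTER INDEX [{index}] ON [{schema}].[{table}] {action};"


def maintenance_plan(rows):
    """Build statements for (schema, table, index, fragmentation_percent) rows."""
    plan = []
    for schema, table, index, fragmentation in rows:
        statement = maintenance_statement(schema, table, index, fragmentation)
        if statement is not None:
            plan.append(statement)
    return plan


def in_maintenance_window(now, start=time(1, 0), end=time(5, 0)):
    """True if `now` falls inside the (assumed) off-peak window [start, end).

    Also handles windows that wrap past midnight, e.g. 22:00 to 04:00.
    """
    if start <= end:
        return start <= now < end
    return now >= start or now < end


# Hypothetical fragmentation readings.
rows = [
    ("dbo", "Orders", "IX_Orders_CustomerId", 42.7),
    ("dbo", "Orders", "IX_Orders_OrderDate", 12.3),
    ("dbo", "Customers", "IX_Customers_Email", 1.8),
]

if in_maintenance_window(time(2, 30)):
    for statement in maintenance_plan(rows):
        print(statement)
```

Lightly fragmented indexes are skipped entirely, which keeps the nightly job short; in practice the plan would be run by a scheduler such as SQL Server Agent rather than printed.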

Businesses must boost operational efficiency to remain competitive, which today means reacting quickly to customer demand. A secure, reliable network built on intelligent routers and switches strengthens connectivity within your organization and supports seamless communication.

Effective, interactive collaboration between employees is as important to efficiency as server optimization and a reliable network. Technologies such as video conferencing and IP communications make collaboration easy and seamless.