How to Detect Web Scraping Challenges?

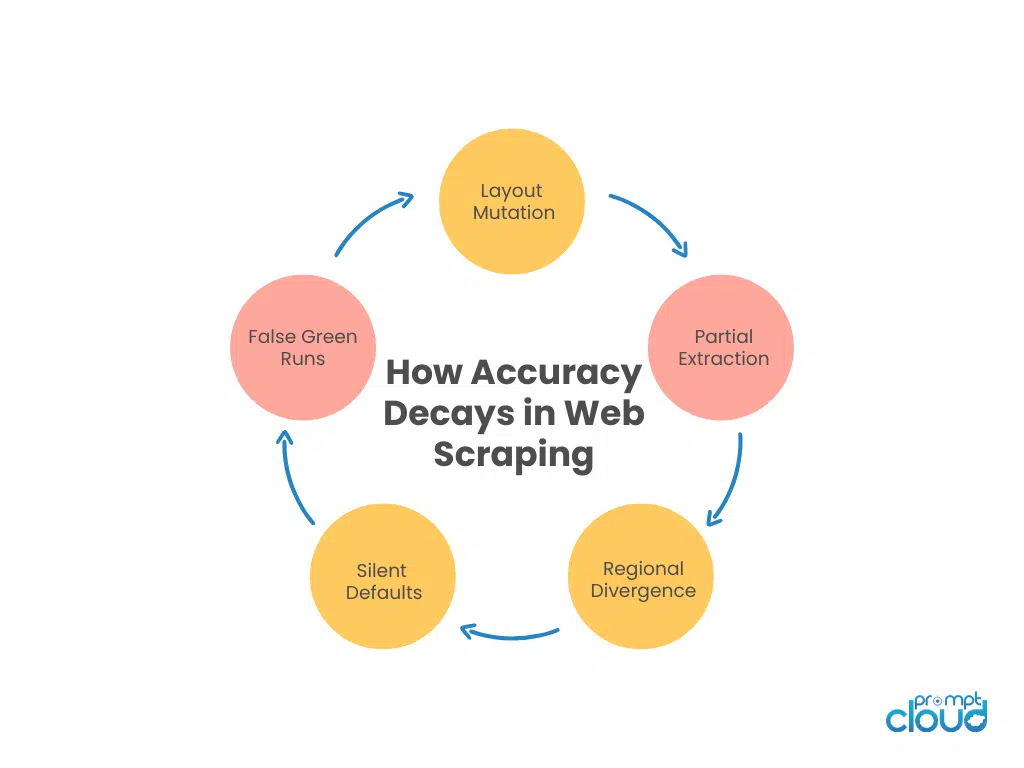

Most scraping failures are not blocks. They are accuracy failures disguised as success. Jobs complete. Dashboards stay green. Files are delivered on time. Meanwhile, fields drift, layouts mutate, regions diverge, and duplicates accumulate. If you don’t actively measure data accuracy in web scraping, you are trusting a system that has no idea whether it is right. This article breaks down the ten silent ways scraping accuracy decays.

What is Data Accuracy in Web Scraping?

Accuracy problems rarely look dramatic. Scrapers don’t explode. They don’t throw obvious exceptions. They return structured JSON and neatly formatted CSVs. Everything appears stable. Then, a product team asks why price changes seem exaggerated. Or an analytics team notices category distributions shifting strangely. Or a compliance audit uncovers inconsistent masking across regions.

By that point, the damage is already baked into decisions. Most articles ranking for data accuracy in web scraping focus on surface issues. Handling missing values. Cleaning duplicates. Basic validation. Useful, but incomplete.

The real problem is deeper.

Accuracy degrades through silent drift. Through partial extraction that still “passes.” Through HTML structure changes that keep selectors valid but shift meaning. Through the regional variance that your pipeline never accounted for. Through false confidence in successful runs. Maintaining data accuracy in web scraping is not about fixing errors. It’s about detecting when you are confidently wrong.

We’ll start with the most dangerous problem of all: Silent drift.

Many organizations begin web scraping with internal scripts, but maintaining crawler infrastructure, handling anti-bot protections, and monitoring data quality quickly becomes a full-time operational task.

Challenge 1: Silent Schema Drift in Web Scraping

Schema drift is often misunderstood as broken selectors or missing fields. That’s the easy version. The harder version keeps the schema intact. Field names stay the same. Types stay valid. Your structured data extraction logic keeps working. But semantics change.

A price field now includes tax. A rating shifts from a five-star scale to a ten-point scale. A status field introduces new values that your downstream system treats as unknown. Nothing fails. Your pipeline reports success. Data validation passes because the field exists and the type matches. Yet accuracy in web scraping has already been compromised.

This is why data consistency checks must go beyond presence and type.

Teams that care about web scraping data quality compare distributions over time. They monitor enum expansions. They track value ranges. They alert when patterns shift, not just when structure breaks. If your validation layer can’t detect meaning changes, your pipeline is fragile.

Next, we’ll move to something even more common and equally damaging: Partial extraction.

If you’re managing this at enterprise pipeline scale, see how schema drift compounds across ingestion layers and ownership boundaries → Scaling Web Data Pipelines at Enterprise Scale.

Challenge 2: Partial Data Extraction and Missing Data Issues

This one destroys data accuracy in web scraping faster than outright failures. A page loads. Your selector matches. Fields populate. The record gets stored. From a system perspective, extraction succeeded.

But only part of the page was actually captured. Modern websites load content in layers. Reviews load after scroll. Variants appear after interaction. Availability changes based on region detection. Consent gates hide elements until triggered. If your scraper captures the first stable DOM snapshot, you may be extracting only a subset of reality.

The danger is that partial extraction still produces structured output. No null values. No missing keys. Everything appears valid. Until someone compares against a real browser view. Missing data issues at scale rarely appear as empty fields. They show up as undercounted attributes, truncated text, or missing nested entities. Over time, that skews analytics, pricing models, and inventory tracking.

Strong data validation strategies don’t just check whether a field exists. They verify expected counts. Expected child elements. Expected cardinality. If a product normally has 12 reviews visible and you’re capturing three, that’s an accuracy issue even if nothing is technically missing. Web scraping data quality requires completeness logic that understands context, not just structure.

Next, we’ll examine a related but distinct problem: HTML structure changes that don’t break selectors but still corrupt data.

Challenge 3: HTML Structure Changes That Corrupt Extracted Data

When people think about HTML structure changes, they imagine broken XPaths. Selectors fail. Extraction errors spike. Jobs crash. In reality, most HTML changes are subtle. A site moves the price into a new container but leaves the old one in place for backward compatibility. A promotional price appears in the same node as the base price. A label shifts position but retains the same class name. Your selector still matches.

It just matches the wrong thing. This is where data reliability erodes without obvious scraping errors. Structured data extraction relies on assumptions about context. When layout shifts, those assumptions may no longer hold even if the DOM node exists. Your scraper happily captures a discounted banner instead of the actual price. Or extracts a summary rating instead of a user rating.

The field is populated. The job is successful. The data is wrong.

Teams that maintain data accuracy in web scraping implement sanity checks. Cross-field validation. Price consistency checks. Category-level outlier detection. They treat a layout change as a constant threat, not a rare event. If you only monitor selector failure rates, you are missing the majority of layout-induced accuracy problems.

Next, we’ll move into a challenge that scales quietly across regions: regional variance that produces multiple truths for the same URL.

Challenge 4: Regional Variance and Data Consistency Breakdowns

This one rarely shows up in tutorials, but it quietly undermines data accuracy in web scraping at scale.

The same URL does not always return the same content. Prices vary by country. Availability shifts by warehouse region. Promotions differ by IP. Even descriptions can change based on localization logic. If your scraping system rotates proxies aggressively or does not bind sessions to geography, you may be stitching together fields from different realities.

The result is internally inconsistent records.

- Price from Region A.

- Availability from Region B.

- Shipping time from Region C.

Every field is technically correct. Together, they never coexisted for any real user. This is a web scraping data quality problem that basic validation won’t catch. The types match. The fields are populated. There are no obvious missing data issues.

Maintaining data consistency checks across regions requires intentional design. Location-aware sessions. Consistent identity handling. Explicit tagging of region metadata. And most importantly, validation rules that understand when fields must align geographically. Without that, your pipeline becomes a collage engine.

Next, we’ll look at something even more common and far less obvious: duplicate records that look unique enough to pass.

Challenge 5: Duplicate Records in Web Scraping Pipelines

Duplicate records are easy to identify when they are exact matches. Same ID. Same URL. Same values. Enterprise pipelines rarely deal with duplicates that cleanly. More often, duplicates differ slightly. A tracking parameter changes. A title gains a prefix. A variant page produces overlapping entries. Or a site updates its internal identifiers without removing the old ones.

Basic deduplication logic misses these cases. The damage is cumulative. Counts inflate. Category distributions skew. Models overweight repeated signals. Analysts assume growth where none exists.

From a scraping perspective, nothing is wrong. The system collected exactly what was available. But from a data accuracy standpoint, you are amplifying noise. Effective duplicate control requires entity-level thinking. Similarity checks. Canonical record selection. Cross-field consistency analysis. It also requires monitoring duplicate growth over time, not just a one-time cleanup. Duplicate records are not just a storage inefficiency. They are a distortion multiplier.

Accuracy Slipping at Scale?

Many organizations begin web scraping with internal scripts, but maintaining crawler infrastructure, handling anti-bot protections, and monitoring data quality quickly becomes a full-time operational task.

Next, we’ll address something deceptively simple: missing data that appears acceptable because null rates stay low.

Challenge 6: Clustered Missing Data and Hidden Null Rate Issues

Most teams measure missing data with a simple metric: null percentage. If less than 5% of records are missing a field, it’s considered healthy. Move on.

That logic breaks down quickly in real systems. Missing data issues rarely distribute evenly. They cluster. One category stops returning attributes. One region hides pricing. One product type no longer exposes reviews. Globally, null rates stay low. Locally, accuracy collapses.

This is how web scraping data quality problems survive under the radar.

You might see 2% nulls overall. But in a high-value segment, the field could be missing 40% of the time. If your monitoring aggregates across the entire dataset, you’ll never see it. Maintaining data accuracy in web scraping requires segmented validation. By category. By region. By source. By crawl batch. Completeness needs to be measured contextually, not globally.

Another common pattern: fields don’t go null. They default. A missing price becomes 0. A missing rating becomes 5. That keeps null rates clean while silently corrupting analytics. If your pipeline treats non-null as valid, you are trusting the wrong metric.

Next, we’ll look at a challenge that feels reassuring but is often misleading: false confidence created by successful runs.

Challenge 7: Accuracy Monitoring vs Green Dashboards

This is where accuracy failures become psychological. Your monitoring system shows 100% job success. No scraping errors. No timeouts. Files delivered on schedule. From an operational standpoint, the pipeline is healthy.

But job success does not equal data accuracy in web scraping.A scraper can execute perfectly while extracting the wrong values. Layout shifts can misplace context. Regional logic can change content. Structured data extraction can target the wrong node.

None of that causes a job failure.

The danger is that green dashboards reduce scrutiny. Teams stop sampling records. They stop comparing against the ground truth. They trust the system because it appears stable. Maintaining accuracy requires adversarial thinking. Sampling outputs regularly. Comparing scraped data to manual checks. Tracking anomalies in business metrics that might originate upstream. If your system only measures whether it ran, not whether it was right, you are optimizing for uptime, not truth.

Next, we’ll move into something tightly linked to both validation and drift: data validation rules that are too shallow to catch real errors.

Challenge 8: Weak Data Validation Rules in Scraping Systems

Most scraping pipelines implement data validation. Type checks. Required fields. Basic format rules. Maybe even some range validation.

It looks disciplined. It feels robust. But shallow validation creates an illusion of safety. A price field passes because it’s numeric. A rating passes because it’s between 1 and 5. A date passes because it matches the ISO format. None of those checks confirms that the value is correct.

If a site suddenly shifts currency without changing the symbol, your numeric validation won’t catch it. If ratings flip scale but remain within bounds, your checks pass. If a layout change moves a promotional badge into the same node as a base value, your extraction logic still produces structured output.

The validation layer approves it. Maintaining data accuracy in web scraping requires relational validation, not just atomic checks. Cross-field consistency. Price should align with the currency. Availability should align with stock count. The discount should align with the base price. Review count should correlate with rating distribution. When those relationships break, something upstream changed.

Data consistency checks must understand how fields interact. Otherwise, you are verifying shape, not correctness.

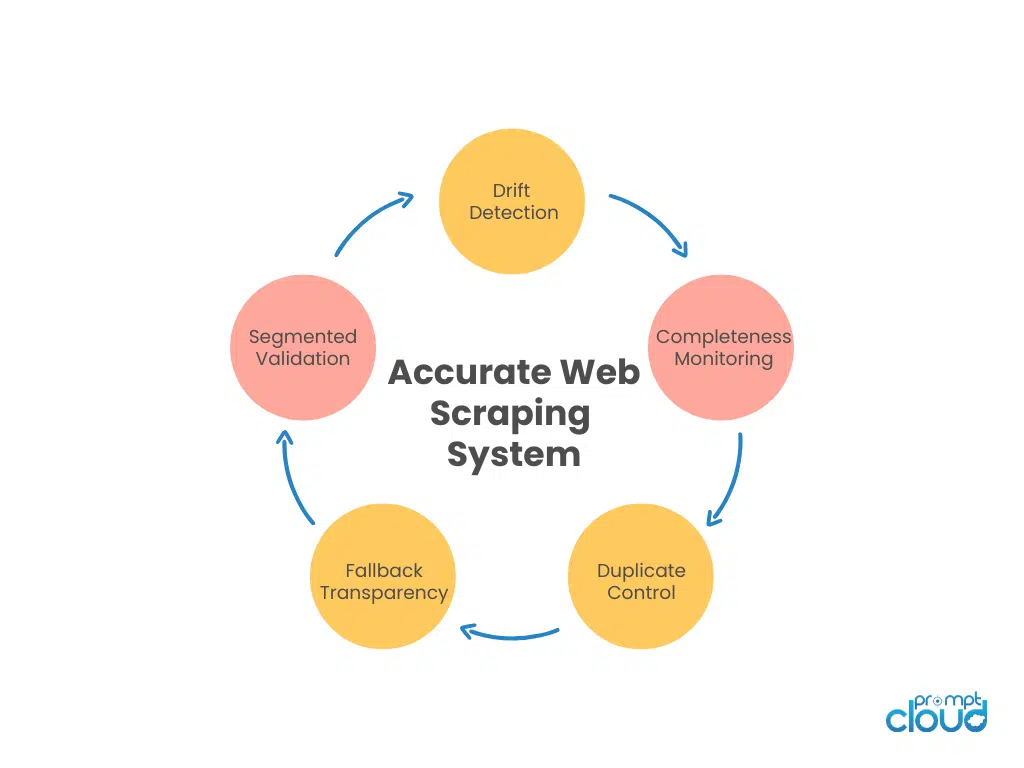

Figure 1: The core operational layers required to maintain data accuracy in web scraping at scale.

Next, we’ll look at a challenge that creeps in through scale and repetition: scraping errors that don’t look like errors.

Challenge 9: Scraping Errors That Insert False Values

Not all scraping errors throw exceptions.

Some return values look perfectly valid. A request times out, and your fallback logic inserts a default. A selector fails and returns the first matching sibling. A dynamic component doesn’t load, and the system captures a placeholder. From a structural perspective, everything works. From an accuracy standpoint, you are introducing synthetic data. This is one of the hardest threats to data reliability.

Fallback mechanisms are designed to keep pipelines flowing. But when they aren’t logged and monitored carefully, they contaminate datasets quietly. A single scraping error is tolerable. Thousands of silent fallbacks distort entire segments.

Maintaining web scraping data quality requires explicit error tagging. Records influenced by fallback logic should be marked. Retry decisions should be observable. Default substitutions should be counted and reviewed. If your pipeline hides its own uncertainty, you cannot measure accuracy honestly.

For example: a review section times out during rendering, and the fallback selector captures the product description instead. Same length. Same text format. No null value. Zero indication that anything went wrong. But every “review” record is now synthetic.

Next, we’ll close with a challenge that ties all others together: accuracy monitoring that lags behind the damage it’s meant to prevent.

Challenge 10: Delayed Accuracy Monitoring and Drift Detection

Most teams monitor data accuracy in web scraping retrospectively. Weekly reports. Monthly audits. Ad-hoc anomaly investigations. By the time a pattern is recognized, inaccurate data has already been consumed.

Accuracy monitoring must be continuous and proactive. Distribution tracking. Drift detection. Duplicate growth trends. Segment-level completeness. These signals need to update alongside ingestion, not after the fact. When monitoring lags, corrections become expensive. You need backfills. Reprocessing. Communication to stakeholders. Sometimes, even the reversal of decisions made on bad data. The longer inaccuracy lives undetected, the more institutional trust it erodes.

Accuracy is not a static attribute. It is a moving target. If your monitoring cadence is slower than the rate of change on the web, you are always behind.

Across enterprise scraping systems we monitor, silent fallback insertion accounts for roughly 18–25% of detected accuracy anomalies. Most were not caused by site blocking, but by internal fallback logic masking uncertainty.

Figure 2: The recurring cycle through which data accuracy in web scraping erodes without obvious scraping errors.

Accuracy Failure Patterns at a Glance

| Challenge | What It Looks Like | Why It Goes Undetected | What You Should Monitor Instead |

| Silent schema drift | Fields populated, but meaning shifts | Type checks pass | Value distribution shifts, enum expansion |

| Partial extraction | Records look complete but lack depth | No null spikes | Expected child count, nested cardinality |

| HTML structure changes | Correct selector, wrong context | Selector still matches | Cross-field consistency checks |

| Regional variance | Mixed geo values in the same record | Fields are valid individually | Region-tag alignment validation |

| Duplicate records | Slightly varied near-duplicates | URL-based dedupe only | Similarity score growth trends |

| Clustered missing data | Local completeness collapse | Global null rate looks fine | Segment-level fill rates |

| False green dashboards | Jobs succeed | Only uptime tracked | Business-metric anomaly correlation |

| Shallow validation | Fields formatted correctly | Atomic checks only | Relational rule validation |

| Silent fallback values | Defaults inserted quietly | Fallback not tagged | Fallback frequency tracking |

| Late accuracy monitoring | Errors discovered weekly | Lagging review cadence | Near real-time drift alerts |

The Way Forward in 2026

If you step back, every challenge we discussed points to the same uncomfortable truth. Accuracy in web scraping is not a property of the scraper. It is a property of the system around it.

You can have perfectly written extraction logic and still be wrong. You can use structured data extraction, headless browsers, proxy rotation, retries, and still ship inaccurate datasets. Because accuracy is not about whether you extracted something. It is about whether what you extracted still matches reality.

And reality moves. HTML structure changes without version numbers. Product schemas expand silently. Ratings scales adjust. Availability logic becomes region-aware. Promotions appear conditionally. None of these changes announce themselves. None of them throws obvious scraping errors.

The web does not promise stability. So if your pipeline assumes stability, data accuracy in web scraping becomes a matter of luck. What separates mature teams from fragile ones is not tooling sophistication. It is posture. Mature teams assume drift. They assume missing data issues will cluster. They assume duplicate records will slip through. They assume validation rules will miss something. And they build layers that question output continuously.

They monitor distributions, not just fields. They segment completeness, not just global null rates. They tag fallback logic. They track structured data extraction confidence. They compare across regions intentionally instead of accidentally mixing them. Most importantly, they treat green dashboards as suspicious, not comforting.

Because the most expensive accuracy failures are the ones discovered by customers, auditors, or executives. Not by the engineering team. There is also a cultural component that is rarely discussed.

Accuracy monitoring feels like overhead. It does not ship features. It does not increase coverage. It does not improve crawl speed. It exists to prevent embarrassment and rework. In fast-moving environments, that prevention work is often deprioritized. Until something breaks publicly. By then, fixing accuracy is not just a technical correction. It is a credibility repair exercise. This is why web scraping data quality cannot be reactive. Backfills and corrections are costly. Once inaccurate data has flowed into analytics, models, or pricing decisions, the cleanup effort multiplies.

The longer the drift lives undetected, the more systems internalize it as truth. And that is the real risk. Data accuracy in web scraping is not a one-time milestone. It is not something you achieve after implementing data validation or schema drift detection. It is a continuous negotiation between your assumptions and the web’s volatility.

You either institutionalize that negotiation, or you accept hidden risk. If your scraping system cannot explain why a value changed, if it cannot detect when completeness shifts by segment, if it cannot distinguish between extraction success and semantic correctness, then you are operating on optimism.

Accuracy demands skepticism. And in enterprise systems, skepticism is cheaper than being misplaced.

What separates reliable data teams is proactive quality control. They monitor for drift, validate continuously, and design pipelines for accuracy—not just collection. This is why managed web scraping services require built-in validation, structured governance, and dedicated maintenance oversight. Organizations reaching this realization often evaluate whether internal scraping workflows can truly deliver decision-grade data over time.

Trusted by enterprise data teams across retail, fintech, and AI platforms processing billions of records annually.

“PromptCloud reduced silent data drift incidents by over 40% within two quarters.”

Director of Data Engineering

Global Commerce Platform

Don’t Let Accuracy Failures Surface in Production

Many organizations begin web scraping with internal scripts, but maintaining crawler infrastructure, handling anti-bot protections, and monitoring data quality quickly becomes a full-time operational task.

How privacy-safe scraping affects data consistency and validation integrity

If accuracy is your main concern, these pieces go deeper into governance, compliance, and operational discipline that directly affect data reliability.

- How to implement privacy-safe scraping without breaking structure – When masking and anonymization aren’t applied consistently, they introduce subtle data inconsistencies. Read more: privacy-safe scraping with PII masking and anonymization

- What enterprise vendors are evaluated on in 2026 audits – Accuracy, traceability, and validation discipline are now part of vendor scrutiny. Read more: enterprise vendor compliance checklist 2026

- Why ethical governance frameworks reduce long-term drift risk – Governance is not just about compliance. It directly affects schema control and validation practices. Read more: ethical web data governance framework 2026

- What a real enterprise compliance audit uncovered about data reliability – See how structured validation and monitoring helped pass a large-scale audit. Read more: enterprise compliance audit success case study

A formal breakdown of accuracy, completeness, consistency, and reliability, useful for framing how scraping accuracy should be evaluated beyond just successful runs. Read more: DAMA Body of Knowledge – Data Quality Dimensions.

FAQs

Why is data accuracy in web scraping so hard to maintain?

Because most failures do not break pipelines. They preserve structure while degrading meaning.

How is web scraping data quality different from generic data cleaning?

Scraping accuracy must account for layout volatility, regional variance, and extraction logic drift.

Can validation rules guarantee accuracy?

No. Validation reduces risk but must include relational and distribution checks to catch semantic errors.

Are duplicate records always obvious?

Rarely. Near-duplicates and variant URLs often evade simple deduplication logic.

How often should accuracy monitoring run?

As close to real-time as feasible. Monitoring must move at least as fast as source changes.

What is the biggest false assumption teams make?

That successful runs equal accurate data. They don’t.

Don’t Let Accuracy Failures Surface in Production

Many organizations begin web scraping with internal scripts, but maintaining crawler infrastructure, handling anti-bot protections, and monitoring data quality quickly becomes a full-time operational task.

By then, cleanup is expensive.

PromptCloud designs scraping systems with built-in validation, segmented completeness monitoring, drift detection, and fallback transparency — so accuracy failures surface before they reach decision systems.

If your current pipeline cannot explain why a value changed or when completeness shifted by segment, it is operating on optimism.

Turn web scraping into a defensible data system.