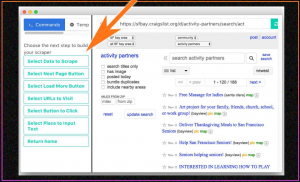

Portia was a visual tool that let users crawl websites without any programming knowledge. It was offered as a hosted service, but because usage of visual scrapers dwindled, it has been taken down and is no longer available. So how did people use Portia while it existed? You entered the pattern of URLs to be visited and then selected elements on those pages with point-and-click gestures, or by using CSS or XPath. Despite being easy to use, Portia had the following major problems:

- Compared with other open-source web scraping tools, it took a long time to master.

- Navigating websites was difficult to handle.

- You had to specify the target pages before you started crawling to keep Portia from visiting unnecessary URLs.

- There was no way to plug in a database to save your scraped data points.

What are the advantages of visual web scrapers?

When you have a one-time web scraping requirement, a visual web scraper can do the job, but using one as part of a business workflow is not recommended. If your business needs data from only a few static web pages, and only occasionally (say, once a month), someone on your team who knows what data has to be scraped can learn how a visual web scraper works within a matter of hours and then extract web data from time to time. Visual web crawlers are especially helpful for small businesses that lack a tech team and have minimal scraping requirements.

A visual web crawler does almost the same thing as someone clicking “inspect element” on a webpage and copy-pasting data from the HTML content. The difference is that with a visual web scraper, you click on a part of the webpage and the software copies the data into a location of your choice.
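One way to picture what happens behind such a click: the tool records a selector for the element you pointed at and later applies it to the page source. The snippet below is only a rough sketch of that idea using Python's standard library. The page fragment, the field names and the path expressions are all made up for illustration, and it assumes well-formed markup, which real-world HTML often is not.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed page fragment used only for illustration.
PAGE = """
<html>
  <body>
    <div class="product">
      <h1 class="title">Blue Kettle</h1>
      <span class="price">24.99</span>
    </div>
  </body>
</html>
"""

# What a click on the title or the price might be recorded as:
# a field name mapped to a path expression for the clicked element.
SELECTORS = {
    "title": ".//h1[@class='title']",
    "price": ".//span[@class='price']",
}


def extract(page_source, selectors):
    """Return the text of the first element matching each selector."""
    root = ET.fromstring(page_source)
    record = {}
    for field, path in selectors.items():
        node = root.find(path)
        has_text = node is not None and node.text
        record[field] = node.text.strip() if has_text else None
    return record


record = extract(PAGE, SELECTORS)
print(record)
```

The "location of your choice" would then be whatever you do with `record`, for example writing it to a CSV file.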

In which areas do visual web scrapers fall short?

Visual scrapers, however, fall short when you have some serious heavy lifting to do:

- Scraping might need to be an automated part of your business workflow.

- Data might need to be scraped across hundreds or thousands of pages and refreshed very frequently.

- A particular business module might need a live feed of scraped data.

In most of these cases, a code-based web scraper would come in much handier than a visual scraper.

Most mass-scraping projects need to crawl a large number of similar web pages to extract data about different items. These items can range from flight information on e-booking websites to product details on e-commerce websites. The approach in such scenarios is to study a few pages to understand the pattern in which data is stored, and then write code that can crawl not only pages with exactly the same structure but also pages with a similar structure. Also, while scraping all the pages available on a website, pages with a certain structure might need to be ignored. None of these customizations is possible with a visual scraper, so scraping a large number of pages with one is not recommended.
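As a rough illustration of that logic, here is a small Python sketch using only the standard library. The URL rules, the sample pages and the `title` and `price` class names are all hypothetical. The point is simply that one piece of code can decide which pages to skip and can pull the same fields out of pages whose layouts differ slightly.

```python
import re
from html.parser import HTMLParser

# Hypothetical URL rules for an imaginary shop.
INCLUDE = re.compile(r"^https://shop\.example\.com/products/")
EXCLUDE = re.compile(r"/products/(compare|reviews)/")


def should_scrape(url):
    """Keep item pages, skip pages whose structure we want to ignore."""
    return bool(INCLUDE.search(url)) and not EXCLUDE.search(url)


class FieldParser(HTMLParser):
    """Collect the first text found inside elements whose class
    attribute names one of the wanted fields, whatever the tag is."""

    def __init__(self, fields):
        super().__init__()
        self.fields = set(fields)
        self.current = None
        self.record = {}

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        for name in classes:
            if name in self.fields:
                self.current = name

    def handle_data(self, data):
        text = data.strip()
        if self.current and text:
            self.record.setdefault(self.current, text)
            self.current = None

    def handle_endtag(self, tag):
        # Leaving an element: stop waiting for text for the current field.
        self.current = None


def extract(html, fields=("title", "price")):
    """Return one record per page; missing fields come back as None."""
    parser = FieldParser(fields)
    parser.feed(html)
    parser.close()
    return {field: parser.record.get(field) for field in fields}


# Three made-up pages: same fields, slightly different structure.
PAGE_A = '<h1 class="title">Blue Kettle</h1><span class="price">24.99</span>'
PAGE_B = ('<div class="item"><h2 class="title big">Red Toaster</h2>'
          '<p class="price"><b>39.50</b></p></div>')
PAGE_C = '<h1 class="title">Gift Card</h1>'

for page in (PAGE_A, PAGE_B, PAGE_C):
    print(extract(page))
```

A production scraper would normally rely on a proper HTML parsing library rather than this hand-rolled parser, but the pattern of "URL rules plus one tolerant extractor" stays the same.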

Maintenance is another weak spot. Because the look and feel of websites changes every few weeks or months, you might need to retrain your visual web scraper every time a website’s user interface changes. With a code-based scraper, by contrast, a UI change often requires no changes at all, since the website may remain structurally the same. Even when a UI change does require a change in the scraper, the change is usually minimal and simple to make.
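To see why a code-based scraper can survive a redesign, consider the sketch below. It assumes, purely for illustration, that the site marks its data with `itemprop` attributes and keeps them across redesigns. Both page versions are invented, and the extractor looks only at those attributes, not at tags, classes or styling.

```python
from html.parser import HTMLParser


class ItempropParser(HTMLParser):
    """Collect the first text that follows each itemprop attribute."""

    def __init__(self):
        super().__init__()
        self.pending = None
        self.values = {}

    def handle_starttag(self, tag, attrs):
        prop = dict(attrs).get("itemprop")
        if prop:
            self.pending = prop

    def handle_data(self, data):
        text = data.strip()
        if self.pending and text:
            self.values[self.pending] = text
            self.pending = None


def extract(html):
    parser = ItempropParser()
    parser.feed(html)
    parser.close()
    return parser.values


# Hypothetical page before a redesign: table layout, inline styles.
OLD_LAYOUT = (
    '<table><tr>'
    '<td style="color:red" itemprop="name">Blue Kettle</td>'
    '<td itemprop="price">24.99</td>'
    '</tr></table>'
)

# The same page after a redesign: new tags, new classes, new nesting.
NEW_LAYOUT = (
    '<section class="card card--dark">'
    '<h2 class="card__title" itemprop="name">Blue Kettle</h2>'
    '<div class="card__footer"><span itemprop="price">24.99</span></div>'
    '</section>'
)

print(extract(OLD_LAYOUT))
print(extract(NEW_LAYOUT))
```

Both calls return the same record, so nothing in the scraper has to change. If the redesign had renamed or dropped the attributes, only the attribute lookup in `handle_starttag` would need adjusting.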

What other alternatives do we have?

There are many alternatives to Portia. Developers and web scraping teams all over the world use languages like Python, R and Golang to extract data from web pages. New ways are being developed to make the process faster. For example, the Golang package Colly supports parallel crawling and caching, and lets you configure custom settings like the following:

- The number of pages you want to crawl concurrently at any given time.

- The maximum depth the scraper should go once it starts scraping from a web page. For example, with a maximum depth of 3, it will crawl the top page, follow a URL found in it and crawl that page, then follow a URL found there and crawl that page too, but it will not follow any URL found in the third page.

- Checks on words present in URLs: you can require that any URL containing a particular word is scraped, or set exclusions so that URLs containing a particular word are never accessed by the scraper.

These are just a few of the hundreds of small features you get when you build a web scraper on your own.
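The three settings listed above can be sketched in a few lines of code. The example below is not Colly and does not use its API. It is a plain Python stand-in that crawls a made-up, in-memory site so that it runs without a network connection. The names `crawl`, `fetch_links`, `workers`, `allow` and `deny` are this sketch's own, and the start page is assumed to be exempt from the URL checks.

```python
import re
from concurrent.futures import ThreadPoolExecutor

# Hypothetical site: each URL maps to the links found on that page.
SITE = {
    "/": ["/products/a", "/blog/hello", "/login"],
    "/products/a": ["/products/b"],
    "/products/b": ["/products/c"],
    "/products/c": ["/products/d"],
    "/blog/hello": ["/products/z"],
}


def fetch_links(url):
    """Stand-in for downloading a page and pulling out its links."""
    return SITE.get(url, [])


def crawl(start, max_depth, workers, allow=None, deny=None):
    """Crawl level by level and return the URLs that were crawled.

    max_depth: the start page counts as depth 1
    workers:   how many pages are fetched concurrently
    allow:     if given, only follow URLs matching this pattern
    deny:      if given, never follow URLs matching this pattern
    """
    seen = {start}
    crawled = []
    frontier = [start]
    depth = 1
    with ThreadPoolExecutor(max_workers=workers) as pool:
        while frontier and depth <= max_depth:
            # Fetch every page of this level, `workers` at a time.
            results = list(pool.map(fetch_links, frontier))
            crawled.extend(frontier)
            next_frontier = []
            for links in results:
                for link in links:
                    if link in seen:
                        continue
                    if deny and deny.search(link):
                        continue
                    if allow and not allow.search(link):
                        continue
                    seen.add(link)
                    next_frontier.append(link)
            frontier = next_frontier
            depth += 1
    return crawled


pages = crawl(
    "/",
    max_depth=3,
    workers=2,
    allow=re.compile(r"^/products/"),
    deny=re.compile(r"login"),
)
print(pages)
```

With a maximum depth of 3, the crawl stops at `/products/b`: the link to `/products/c` is found on the third page but never followed, which matches the depth behaviour described above. Colly exposes comparable options of its own, so check its documentation for the exact names before relying on them.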

DaaS providers vs in-house team?

Businesses that lack a tech team, or even a single member with a basic understanding of a scripting language, should avoid building an in-house scraping team. The reason is simple: the money you would spend on recruiting developers and having them build and maintain a completely new web scraping system for your business needs would be massive. And if you are a small company and web scraping is not the fuel for your business (that is, your business is not centered on the data you crawl off the web), building an in-house team makes no sense.

The simple solution, in that case, is a DaaS provider that takes your requirements and delivers your data in a format of your choice. Our team at PromptCloud takes great pride in reducing web scraping to a two-step process for businesses and enterprises.

Conclusion

While visual tools are good for business teams, we can agree that web scraping is not just a simple business task. It is a task that needs to be efficient, fast and completely customizable. If you have large-volume web scraping requirements or would like to extract web data on a much larger scale, it’s recommended to use web scraping services.

If you aren’t adept at programming or your requirements are complex, you can use a fully managed service provider like PromptCloud to get clean data in an automated manner, without any technical hassle or having to learn any tool.