Web Scraping Guide: How to Scrape E-Commerce Websites (2026)

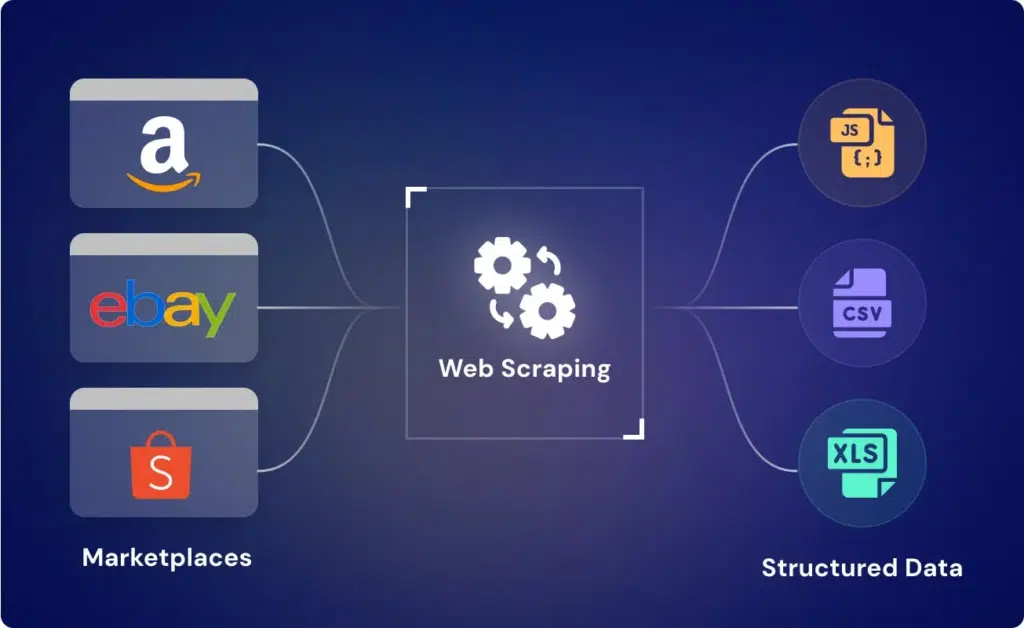

This web scraping guide shows how to extract product data from e-commerce websites, including prices, reviews, and availability, using practical methods and code. It also explains why most scraping setups fail at scale and what it takes to build reliable, production-ready data pipelines.

If you are operating in e-commerce, your biggest competitive advantage is not your product. It is how fast you understand the market.

Prices change daily. Inventory fluctuates. New products appear without notice. Customer sentiment shifts in real time. Most of this data is publicly available, but almost no team can track it manually at scale.

This is where web scraping becomes critical.

This web scraping guide focuses specifically on how to scrape e-commerce websites, not as a one-time script, but as a repeatable system for collecting product data, pricing signals, and competitive intelligence.

The goal is not just to extract data. The goal is to extract usable, structured, and reliable data that can feed pricing engines, analytics systems, or AI models.

Because in e-commerce, delayed or incorrect data does not just reduce insight. It directly impacts revenue decisions.

Stop fixing scrapers. Start getting reliable e-commerce data

Get structured, schema-ready web data delivered to your exact specifications, across any source, at whatever cadence your use case demands.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

Understanding E-Commerce Website Structure

Before writing a single line of code, the biggest leverage comes from understanding how e-commerce sites are actually built. Most scraping failures don’t happen because of bad code. They happen because the scraper is pointed at the wrong layer of the page.

How E-Commerce Pages Are Structured

At a high level, almost every e-commerce website follows a predictable hierarchy:

- Listing pages (category or search results)

- Product detail pages (PDPs)

- Supporting layers (APIs, scripts, dynamic loaders)

What looks like a simple product page is often a combination of:

- Static HTML (basic structure)

- JavaScript-rendered content (prices, stock, variants)

- Background API calls (reviews, recommendations)

If you scrape only the visible HTML, you often miss critical data.

The Hidden Layer Most Scrapers Miss

Modern e-commerce platforms rarely load everything directly in the HTML. Instead, they fetch data through APIs after the page loads.

This creates a key decision point:

- Scrape rendered HTML (simpler, less reliable)

- Intercept API responses (cleaner, more scalable)

Most beginners default to HTML scraping. Most production systems rely heavily on API-level extraction.
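To make the difference concrete, here is a minimal sketch of API-level extraction. It assumes a hypothetical JSON payload (a `products` list with `id`, `name`, `price`, and `in_stock` keys); real endpoints use their own field names, which you find by watching the browser's Network tab while the page loads.

```python
import json
from typing import Any, Dict, List

# Hypothetical payload, shaped like what a product-listing API might return.
# Real endpoints use different keys; inspect the Network tab to find them.
SAMPLE_PAYLOAD = """
{
  "products": [
    {"id": "SKU-1", "name": "Trail Shoe", "price": {"amount": 89.99, "currency": "USD"}, "in_stock": true},
    {"id": "SKU-2", "name": "Road Shoe", "price": {"amount": 120.0, "currency": "USD"}, "in_stock": false}
  ]
}
"""


def parse_api_payload(raw_json: str) -> List[Dict[str, Any]]:
    """Flatten a (hypothetical) product API response into flat records."""
    payload = json.loads(raw_json)
    records = []
    for item in payload.get("products", []):
        price = item.get("price") or {}
        records.append({
            "product_id": item.get("id"),
            "title": item.get("name"),
            "price": price.get("amount"),  # already numeric: no text parsing
            "currency": price.get("currency"),
            "in_stock": bool(item.get("in_stock")),
        })
    return records


if __name__ == "__main__":
    for record in parse_api_payload(SAMPLE_PAYLOAD):
        print(record)
```

Notice that the price arrives as a number and stock as a boolean. With HTML scraping, both would have to be recovered from display text.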

Pagination, Variants, and Data Depth

E-commerce data is rarely flat. A single product can have:

- Multiple variants (size, color, region-specific pricing)

- Dynamic availability

- Region-based pricing differences

Listing pages also introduce pagination and infinite scroll, which means:

If your scraper only captures page one, your dataset is already incomplete.
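A minimal sketch of exhaustive pagination, assuming numbered pages and a hypothetical `fetch_page(page)` callable that returns the product records for one listing page. Infinite-scroll pages often page through a background API with an offset or cursor parameter, so the same loop shape can apply there too.

```python
from typing import Callable, Dict, List


def crawl_all_pages(
    fetch_page: Callable[[int], List[Dict]],
    max_pages: int = 500,
) -> List[Dict]:
    """Request pages 1, 2, 3, ... until the listing runs out.

    `fetch_page` is a hypothetical callable; `max_pages` is a safety cap.
    """
    all_items: List[Dict] = []
    seen_ids = set()
    for page in range(1, max_pages + 1):
        items = fetch_page(page)
        if not items:
            break  # an empty page usually means we ran past the last page
        new_count = 0
        for item in items:
            product_id = item["product_id"]
            if product_id in seen_ids:
                continue  # duplicate, e.g. a sponsored product repeated on every page
            seen_ids.add(product_id)
            all_items.append(item)
            new_count += 1
        if new_count == 0:
            break  # site keeps serving the same items: stop instead of looping
    return all_items
```

The second stop condition matters because some sites return the last page again for any page number past the end, instead of an empty result.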

Why Structure Awareness Matters

Without understanding structure:

- You miss fields (incomplete datasets)

- You scrape wrong selectors (fragile pipelines)

- You over-scrape (wasted compute + higher block rates)

With structure awareness:

- You extract cleaner datasets

- You reduce maintenance overhead

- You design scalable pipelines from day one

Industry analysis suggests a majority of modern e-commerce websites rely on client-side JavaScript rendering for critical data like pricing and availability, making simple HTML scraping unreliable at scale.

How to Scrape an E-Commerce Website

Once you understand the page structure, the scraping workflow becomes much clearer. The mistake most beginners make is jumping straight into BeautifulSoup selectors without deciding where the data is actually coming from. On many modern commerce sites, the visible page is only a shell. Prices, variants, ratings, and inventory are often loaded through background requests after the page renders. Cloudflare’s browser rendering docs explicitly call out fully rendered HTML capture as useful for JavaScript-heavy pages and downstream scraping, which is exactly why a simple requests.get() approach is often not enough for e-commerce targets.

Need This at Enterprise Scale?

While Python-based scraping works for small product tracking or one-off use cases, e-commerce data pipelines at scale introduce constant site changes, anti-bot defenses, and reliability risks. Most enterprise teams evaluate total cost of ownership across build vs buy before committing long term.

Step 1: Decide the extraction layer first

Before scraping, inspect the page in the browser and identify whether the data lives in raw HTML, embedded JSON, or background API calls. This is the highest-leverage step in the entire workflow.

If product name, price, and availability are already present in the initial HTML response, a lightweight scraper is enough. If they appear only after the page loads, you either need browser automation or a cleaner route through the site’s network calls. This is why e-commerce scraping is not just about parsing pages. It is about choosing the right layer of extraction.
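One quick check for this step: many product pages embed schema.org `Product` data as JSON-LD inside the initial HTML. The sketch below uses only the Python standard library to look for it. If it finds a Product with offers, a lightweight scraper may be enough. It deliberately ignores `@graph` wrappers and list-valued `@type`, which some sites use, so treat a `None` result as "not found this way", not as proof that the data is loaded dynamically.

```python
import json
from html.parser import HTMLParser
from typing import Any, Dict, List, Optional


class JsonLdCollector(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # data may arrive in several chunks


def find_product_jsonld(html: str) -> Optional[Dict[str, Any]]:
    """Return the first schema.org Product object embedded in the HTML, if any."""
    collector = JsonLdCollector()
    collector.feed(html)
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is common; skip it
        candidates = data if isinstance(data, list) else [data]
        for obj in candidates:
            if isinstance(obj, dict) and obj.get("@type") == "Product":
                return obj
    return None
```

If nothing turns up, the next place to look is the Network tab for background API calls.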

Step 2: Start with one product page, not the whole site

A common mistake is scraping category pages first and trying to scale immediately. That creates noise too early.

Start with a single product detail page and confirm that you can reliably extract the core fields you actually need. In most e-commerce workflows, that means product title, current price, list price if available, stock status, rating, review count, brand, and SKU or product ID. Once those fields are stable on a single page, then you expand to listing pages, pagination, and category coverage.

This is also where you discover whether the site has variant complexity. A product page may show one visible price while the real data changes by size, color, seller, or region. If you do not catch that early, your dataset looks complete but is commercially misleading.
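A minimal sketch of one way to avoid that trap: store one row per variant instead of one row per product page. The input shape (a product dict with a `variants` list whose entries may override the parent price) is a hypothetical assumption; map it to whatever structure the target site actually exposes.

```python
from typing import Any, Dict, List


def flatten_variants(product: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Emit one row per variant so each size/color keeps its own price and stock.

    Assumes a hypothetical product dict with an optional "variants" list.
    """
    rows = []
    for variant in product.get("variants", []):
        variant_price = variant.get("price")
        rows.append({
            "product_id": product["product_id"],
            "variant_id": variant["variant_id"],
            "attributes": variant.get("attributes", {}),
            # Fall back to the parent price only when the variant has none.
            "price": variant_price if variant_price is not None else product.get("price"),
            "in_stock": variant.get("in_stock"),
        })
    if not rows:
        # Products without variants still produce exactly one row.
        rows.append({
            "product_id": product["product_id"],
            "variant_id": None,
            "attributes": {},
            "price": product.get("price"),
            "in_stock": product.get("in_stock"),
        })
    return rows
```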

Step 3: Handle dynamic content intentionally

Not every e-commerce site needs Selenium or Playwright. But many do need some form of rendering or API interception.

The right question is not “can I scrape this page?” The right question is “what is the cleanest way to get the correct data repeatedly?” If the site exposes structured JSON through network calls, scraping those endpoints is usually more stable than scraping rendered HTML. If the site depends heavily on client-side rendering, headless browsing becomes necessary.

The choice matters because modern web traffic is heavily bot-driven. Akamai reported that bots made up 42% of overall web traffic and noted that e-commerce has been among the sectors most affected by high-risk bot traffic. Operationally, this means the more aggressive your scraping pattern, the more likely you are to trigger defenses that degrade reliability.
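A minimal sketch of a less aggressive request pattern: sequential requests with a jittered pause, plus exponential backoff when the server signals throttling or errors. The 3-second interval, 4 attempts, and the set of retryable status codes are illustrative assumptions, not limits any particular site publishes. `fetch` and `fetch_one` are hypothetical callables; in practice they might wrap `requests.get(url, timeout=20)`.

```python
import random
import time
from typing import Callable, Dict, Iterable, Tuple

Response = Tuple[int, str]  # (status_code, body)

# Statuses that usually mean "slow down" or "try again later" (assumed set).
RETRYABLE_STATUSES = {429, 500, 502, 503, 504}


def fetch_with_backoff(
    fetch: Callable[[], Response],
    max_attempts: int = 4,
    base_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Response:
    """Call fetch(); on a retryable status wait 2s, 4s, 8s, ... (plus jitter) and retry."""
    status, body = fetch()
    for attempt in range(1, max_attempts):
        if status not in RETRYABLE_STATUSES:
            break
        delay = base_delay * (2 ** (attempt - 1)) + random.uniform(0, base_delay)
        sleep(delay)
        status, body = fetch()
    return status, body


def crawl_politely(
    urls: Iterable[str],
    fetch_one: Callable[[str], Response],
    min_interval: float = 3.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Response]:
    """Fetch URLs one at a time with a jittered pause between requests."""
    results: Dict[str, Response] = {}
    for i, url in enumerate(urls):
        if i > 0:
            # Fixed floor plus jitter so requests do not arrive on a rigid beat.
            sleep(min_interval + random.uniform(0, min_interval / 2))
        results[url] = fetch_with_backoff(lambda u=url: fetch_one(u), sleep=sleep)
    return results
```

The `sleep` parameter is injectable so the pacing logic can be tested without actually waiting.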

Step 4: Extract fields with normalization in mind

Scraping is only half the job. The other half is making the data usable.

A raw price string like $1,299.00 is not the same as a normalized numeric field. “In stock,” “Only 2 left,” and a green availability badge may all mean the same thing from a business perspective, but they arrive in different formats. The same product can appear on a listing page with one set of labels and on the PDP with another.

That means your scraper should not just collect text. It should be designed to convert raw web content into a repeatable schema. Otherwise, your analysis layer absorbs the inconsistency and becomes harder to trust.
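A minimal sketch of that conversion step, turning raw scraped strings into a fixed schema. The label patterns, the currency-symbol map, and the US-style number format are illustrative assumptions; extend them after reviewing the labels your target sites actually use.

```python
import re
from dataclasses import dataclass
from typing import Any, Dict, Optional, Tuple

# Assumption: "$" means USD. Stores pricing in CAD, AUD, etc. also use "$",
# so confirm the currency from the page or locale.
CURRENCY_SYMBOLS = {"$": "USD", "€": "EUR", "£": "GBP"}


@dataclass
class NormalizedProduct:
    title: Optional[str]
    price: Optional[float]
    currency: Optional[str]
    in_stock: Optional[bool]   # None = label not recognized, review manually
    units_left: Optional[int]  # only set for labels like "Only 2 left"


def parse_price_and_currency(text: Optional[str]) -> Tuple[Optional[float], Optional[str]]:
    """Return (amount, currency); assumes "1,299.00"-style number formatting."""
    if not text:
        return None, None
    currency = next((code for sym, code in CURRENCY_SYMBOLS.items() if sym in text), None)
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    amount = float(match.group(0).replace(",", "")) if match else None
    return amount, currency


def normalize_availability(text: Optional[str]) -> Tuple[Optional[bool], Optional[int]]:
    """Map free-text stock labels to (in_stock, units_left)."""
    if not text:
        return None, None
    # Check negative labels first: "unavailable" contains "available".
    if re.search(r"out of stock|sold out|unavailable", text, re.I):
        return False, None
    low = re.search(r"only (\d+) left", text, re.I)
    if low:
        return True, int(low.group(1))
    if re.search(r"in stock|available", text, re.I):
        return True, None
    return None, None  # unknown label: leave unset rather than guess


def normalize(raw: Dict[str, Any]) -> NormalizedProduct:
    title = " ".join(raw["title"].split()) if raw.get("title") else None
    price, currency = parse_price_and_currency(raw.get("price"))
    in_stock, units_left = normalize_availability(raw.get("availability"))
    return NormalizedProduct(title, price, currency, in_stock, units_left)
```

Here "In stock" and "Only 2 left" both become `in_stock=True`, while the scarcity signal survives separately in `units_left`.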

Step 5: Expand to listings and pagination only after validation

Once the product-page extraction is stable, move outward to category pages, search result pages, and pagination logic. This is where coverage errors usually begin.

The problem is rarely page one. The problem is incomplete pagination, infinite scroll handling, duplicate products across categories, and missing product URLs caused by lazy loading. If you do not validate record counts and category coverage as you scale, you end up with a partial dataset that looks complete enough to use.

That is why mature e-commerce scraping workflows treat validation as part of extraction, not something done later.
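As a minimal sketch of validation built into extraction, the functions below read the total a category page advertises and compare it with what was actually scraped, also counting duplicates and rows without a product ID. The `product_id` key, the "N results" wording, and the 2% tolerance (to absorb listings that change mid-crawl) are illustrative assumptions.

```python
import re
from collections import Counter
from typing import Any, Dict, List, Optional


def parse_result_count(text: str) -> Optional[int]:
    """Pull a total like "1,240 results" out of a category page header."""
    match = re.search(r"(\d[\d,]*)\s+(?:results|items|products)\b", text, re.I)
    return int(match.group(1).replace(",", "")) if match else None


def coverage_report(
    records: List[Dict[str, Any]],
    expected_count: Optional[int],
    tolerance: float = 0.02,
) -> Dict[str, Any]:
    """Summarize coverage of one category crawl against the advertised total."""
    ids = [r.get("product_id") for r in records]
    counts = Counter(pid for pid in ids if pid)
    report = {
        "scraped_rows": len(records),
        "unique_products": len(counts),
        "duplicate_rows": sum(c - 1 for c in counts.values()),
        "rows_without_id": sum(1 for pid in ids if not pid),
        "expected": expected_count,
        "complete": None,  # unknown when the site shows no total
    }
    if expected_count:
        report["complete"] = len(counts) >= expected_count * (1 - tolerance)
    return report
```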

Code Example: Scraping a Product Page with Python

For a beginner-friendly e-commerce scraper, the cleanest starting point is still requests for fetching and BeautifulSoup for parsing. Requests provides the basic HTTP workflow, and Beautiful Soup supports parser selection plus CSS-style selection for extracting fields from HTML.

The example below is intentionally simple, but it is structured the right way. It sets headers, fetches the page, parses HTML, extracts common e-commerce fields, and normalizes the output into a predictable dictionary. That matters because scraped data becomes much more useful once title, price, availability, and rating are returned in a consistent schema.

```python
import re
from typing import Any, Dict, Optional

import requests
from bs4 import BeautifulSoup


def clean_text(value: Optional[str]) -> Optional[str]:
    """Collapse runs of whitespace; return None for empty values."""
    if not value:
        return None
    return " ".join(value.split())


def parse_price(price_text: Optional[str]) -> Optional[float]:
    """Turn display text such as "$1,299.00" into 1299.0.

    Assumes US-style formatting (comma thousands separator, dot decimal).
    """
    if not price_text:
        return None
    # First number in the text: a digit, then digits/commas, then optional decimals
    match = re.search(r"\d[\d,]*(?:\.\d+)?", price_text)
    if not match:
        return None
    return float(match.group(0).replace(",", ""))


def scrape_product_page(url: str) -> Dict[str, Any]:
    headers = {
        "User-Agent": (
            "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
            "AppleWebKit/537.36 (KHTML, like Gecko) "
            "Chrome/124.0.0.0 Safari/537.36"
        ),
        "Accept-Language": "en-US,en;q=0.9",
    }

    response = requests.get(url, headers=headers, timeout=20)
    response.raise_for_status()  # fail loudly on 4xx/5xx instead of parsing an error page

    soup = BeautifulSoup(response.text, "html.parser")

    # These selectors are examples only.
    # Replace them after inspecting the actual target site.
    title = soup.select_one("h1")
    price = soup.select_one(".price, .product-price, [data-price]")
    availability = soup.select_one(
        ".availability, .stock, .inventory-status, [data-stock]"
    )
    rating = soup.select_one(".rating, .stars, [data-rating]")
    review_count = soup.select_one(".review-count, .ratings-count")

    data = {
        "url": url,
        "title": clean_text(title.get_text()) if title else None,
        "price_raw": clean_text(price.get_text()) if price else None,  # original text
        "price": parse_price(price.get_text()) if price else None,     # numeric value
        "availability": clean_text(availability.get_text()) if availability else None,
        "rating": clean_text(rating.get_text()) if rating else None,
        "review_count": clean_text(review_count.get_text()) if review_count else None,
    }
    return data


if __name__ == "__main__":
    product_url = "https://example.com/product-page"
    product_data = scrape_product_page(product_url)
    print(product_data)
```

What this code gets right

It starts with one product page, not the whole category tree. That is the right sequence for e-commerce scraping because product pages expose the actual extraction difficulty: price formatting, inventory labels, review blocks, and inconsistent page elements.

It also separates raw and normalized output. For example, price_raw preserves the original visible text, while price converts that value into a numeric field. That makes downstream analysis easier and gives you a recovery path if parsing logic needs adjustment later.

Just as importantly, the selectors are treated as site-specific, not universal. That is the right mindset. E-commerce scraping breaks when teams assume one site’s structure will generalize cleanly to another.

Where this code stops being enough

This approach works when product data is present in the initial HTML response. It becomes less reliable when the site loads prices, variants, or inventory through JavaScript or background API calls after the page renders. In those cases, the next step is usually one of two paths: intercept the network response directly, or render the page with a browser automation layer before parsing.

That is why this code is a good starting point, but not the final architecture.

Handling Dynamic Content, Pagination, and Anti-Bot Friction

Scaling e-commerce scraping is where most systems break. Not because extraction fails, but because data becomes incomplete or unreliable without obvious errors.

Modern e-commerce sites load data dynamically, paginate inconsistently, and actively manage bot traffic. If your scraper doesn’t account for this, you end up with datasets that look usable but are commercially wrong.

Where Things Break (and How to Fix It)

| Problem Area | What Actually Happens | Impact on Data | What You Should Do |
| --- | --- | --- | --- |
| Dynamic Content | Prices, stock, reviews loaded via JS or APIs | Missing or outdated fields | Prefer API interception or rendered scraping |
| Pagination | Infinite scroll, filters, hidden pages | Incomplete product coverage | Validate total product count per category |
| Product Variants | Multiple SKUs under one PDP (size, color, seller) | Partial or misleading product data | Extract all variant states explicitly |
| Anti-Bot Systems | Partial loads, silent blocking, altered responses | Inconsistent or corrupted datasets | Combine access strategy with data validation |

A scraper that runs successfully is not the same as a scraper that produces complete and decision-ready data.
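A minimal sketch of the "data validation" half of that last row: checks that flag a response which returned successfully but probably is not a genuine product page. The block-marker phrases, the minimum page length, and the expected snippet are illustrative assumptions; real challenge pages differ by vendor, and a marker like "captcha" can also appear on legitimate pages, so expect some false positives.

```python
import re
from typing import List

# Illustrative phrases seen on interstitial or challenge pages (assumed list).
BLOCK_MARKERS = [r"captcha", r"are you a robot", r"access denied", r"unusual traffic"]


def response_problems(
    status_code: int,
    html: str,
    required_snippets: List[str],
    min_length: int = 2000,
) -> List[str]:
    """Return reasons a fetched page should not be trusted (empty list = looks OK).

    `required_snippets` are strings you expect on every genuine product page,
    for example a price container class identified during inspection.
    """
    problems = []
    if status_code != 200:
        problems.append(f"status {status_code}")
    for marker in BLOCK_MARKERS:
        if re.search(marker, html, re.I):
            problems.append(f"block marker: {marker}")
    for snippet in required_snippets:
        if snippet not in html:
            problems.append(f"missing expected content: {snippet}")
    if len(html) < min_length:
        problems.append("page unusually short")
    return problems
```

Pages with problems are better quarantined and retried later than parsed, since parsing them yields rows with silently missing fields.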

What Data Can Be Extracted from E-Commerce Websites

E-commerce websites are not just product catalogs. They are continuous streams of market signals. The real value of scraping is not in collecting “product data,” but in capturing decision-grade inputs across pricing, demand, competition, and customer behavior.

Product and Catalog Data

At the most basic level, you can extract structured product information such as titles, descriptions, images, brand, category hierarchy, and SKUs. This forms the foundation of any dataset, but on its own, it has limited strategic value unless combined with pricing and availability signals.

Pricing and Promotion Signals

Pricing is one of the most critical datasets in e-commerce scraping.

You can extract current price, list price, discount percentage, bundled offers, and time-bound promotions. Over time, this becomes a pricing history dataset, which is essential for tracking competitor strategies, discount patterns, and dynamic pricing behavior.
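A minimal sketch of turning repeated price scrapes into those signals: a discount percentage from current and list price, and a list of change events from a price history. Representing the history as `(date, price)` tuples in date order is an assumption for illustration.

```python
from typing import List, Optional, Tuple


def discount_pct(current: Optional[float], list_price: Optional[float]) -> Optional[float]:
    """Discount off list price in percent, rounded to one decimal place."""
    if current is None or not list_price:
        return None
    # Clamp at 0 so a current price above list price is not a negative discount.
    return round(max(0.0, (list_price - current) / list_price * 100), 1)


def price_changes(history: List[Tuple[str, float]]) -> List[Tuple[str, float, float]]:
    """Given (date, price) snapshots in date order, return (date, old, new) per change."""
    changes = []
    for (_, previous), (date, price) in zip(history, history[1:]):
        if price != previous:
            changes.append((date, previous, price))
    return changes
```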

Availability and Inventory Indicators

Stock status is often underestimated but highly valuable.

Labels like “in stock,” “out of stock,” “limited availability,” or delivery timelines provide indirect signals about demand and supply constraints. When tracked over time, this data can reveal stockouts, replenishment cycles, and product velocity.
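A minimal sketch of deriving stockout periods from normalized availability snapshots, assuming one `(date, in_stock)` tuple per scrape in date order. The reported dates are only as precise as your scrape cadence: with daily scrapes, a stockout that started and ended within one day is invisible.

```python
from typing import List, Optional, Tuple


def stockout_periods(snapshots: List[Tuple[str, bool]]) -> List[Tuple[str, str]]:
    """Turn (date, in_stock) snapshots into (first_seen_out, back_in_stock) pairs.

    A stockout still open at the last snapshot is returned with "ongoing".
    """
    periods = []
    out_since: Optional[str] = None
    for date, in_stock in snapshots:
        if not in_stock and out_since is None:
            out_since = date  # first snapshot showing the product unavailable
        elif in_stock and out_since is not None:
            periods.append((out_since, date))  # replenished
            out_since = None
    if out_since is not None:
        periods.append((out_since, "ongoing"))
    return periods
```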

Reviews and Ratings

Customer feedback is a rich source of unstructured data.

You can extract ratings, review counts, and review text to understand sentiment, product perception, and recurring issues. This is especially useful for identifying gaps in competitor products or validating demand trends.

Seller and Marketplace Data

On multi-seller platforms, each product may be listed by multiple vendors.

You can capture seller names, pricing differences, fulfillment methods, and seller ratings. This helps in understanding marketplace dynamics, pricing competition, and distribution strategies.

Search and Ranking Data

Search results and category rankings reveal visibility, not just presence.

By tracking where products appear for specific keywords or within categories, you can measure share of shelf, discoverability, and how ranking changes over time. This is critical for understanding competitive positioning.
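A minimal sketch of one simple share-of-shelf measure: the fraction of the top-N search positions held by each brand, for one keyword on one scrape. This unweighted count is one common choice; weighting top positions more heavily is a reasonable alternative. The brand names in the usage example are hypothetical.

```python
from collections import Counter
from typing import Dict, List


def share_of_shelf(ranked_brands: List[str], top_n: int = 10) -> Dict[str, float]:
    """Fraction of the top-N result positions held by each brand.

    `ranked_brands` is the brand of each search result, in ranked order.
    """
    top = ranked_brands[:top_n]
    if not top:
        return {}
    counts = Counter(top)
    return {brand: count / len(top) for brand, count in counts.items()}


if __name__ == "__main__":
    serp = ["Acme", "Zephyr", "Acme", "Nova", "Acme",
            "Zephyr", "Other", "Acme", "Nova", "Acme", "Acme"]
    print(share_of_shelf(serp))  # Acme holds 5 of the top 10 positions
```

Storing these shares per keyword per day is what turns a single snapshot into a view of how visibility changes over time.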

Behavioral and Engagement Signals

Some platforms expose indirect engagement signals such as “bestseller” tags, “trending” labels, or “frequently bought together” sections. While these are not explicit metrics, they act as proxies for demand and consumer behavior, especially when tracked consistently.

Digital Shelf Monitoring

Track product rankings, category placement, and share of shelf across marketplaces to understand visibility and competitive positioning.

Lead and Seller Intelligence

Extract seller-level data including vendor names, pricing variations, and fulfillment methods to analyze marketplace dynamics.

Customer Sentiment Analysis

Aggregate reviews and ratings across products to identify trends, product gaps, and consumer preferences at scale.

Best Practices for Scraping E-Commerce Websites

E-commerce scraping is not about extracting data once. It is about consistently extracting the right data despite constant change. The difference between a working scraper and a reliable system comes down to how you design for failure from the start.

What Actually Works in Production

| Area | What Most Teams Do | What Actually Works |
| --- | --- | --- |
| Extraction Layer | Scrape visible HTML | Choose between HTML, API, or rendered layer |
| Data Quality | Assume output is correct | Validate fields, formats, and completeness |
| Change Handling | Fix scrapers after breakage | Monitor structure changes proactively |
| Coverage | Scrape first few pages | Validate full category and pagination depth |
| Scaling | Increase request volume | Optimize extraction logic and request patterns |
| Output | Store raw text | Normalize into structured, usable schema |

What Most Teams Miss

Reliable scraping is not about stronger code. It is about better system design.

If your pipeline cannot detect missing data, incomplete coverage, or structural changes, it will fail silently. And silent failures are the most expensive ones because they directly affect pricing, analytics, and decision-making without immediate visibility.
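A minimal sketch of one way to make those failures loud: compute per-field fill rates for each run and compare them with a known-good baseline run. A selector that breaks after a layout change typically shows up as a sudden drop in one field's fill rate while the scraper itself keeps "succeeding". The 10-point drop threshold is an illustrative assumption.

```python
from typing import Any, Dict, List


def fill_rates(records: List[Dict[str, Any]], fields: List[str]) -> Dict[str, float]:
    """Share of records where each field is present and non-empty."""
    if not records:
        return {field: 0.0 for field in fields}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / len(records)
        for field in fields
    }


def fill_rate_alerts(
    current: Dict[str, float],
    baseline: Dict[str, float],
    max_drop: float = 0.10,
) -> List[str]:
    """Flag fields whose fill rate fell more than `max_drop` below the baseline."""
    alerts = []
    for field, base in baseline.items():
        now = current.get(field, 0.0)
        if base - now > max_drop:
            alerts.append(f"{field}: {base:.0%} -> {now:.0%}")
    return alerts
```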

How PromptCloud Approaches This

PromptCloud builds scraping systems as data pipelines, not scripts. That means structured output, validation layers, and refresh cycles are designed upfront instead of being added after failures.

Instead of reacting to breakages, the focus is on maintaining data reliability at scale, with consistent schemas, monitoring, and delivery pipelines that integrate directly into business systems.

The Role of AI in E-Commerce Web Scraping

AI is starting to reshape how scraping systems operate, but the shift is subtle. It is not replacing scrapers. It is making them less brittle.

Moving Beyond Fixed Rules

Traditional scraping depends heavily on fixed selectors. You define extraction rules and expect the structure to remain stable. In e-commerce, that assumption breaks quickly.

AI reduces this dependency by identifying elements like price, availability, or ratings based on context, not just position. Even when layouts change, the system can still recognize key fields. This lowers maintenance overhead and reduces breakage across large-scale scraping setups.

Improving Data Quality, Not Just Extraction

Most scrapers focus on successful execution. They do not verify whether the data is actually correct.

AI introduces a layer of intelligence that can detect anomalies such as missing values, inconsistent formats, or unusual price movements. This is especially important in e-commerce, where small data errors can lead to incorrect pricing decisions or flawed analytics.
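AI-based checks are not the only starting point. The sketch below is a plain rule-based baseline (not an AI model) that flags a newly scraped price when it is missing, non-positive, or far from the median of recent prices. A $9.99 reading on a product that has sold near $100 is often a parsing error (for example a per-unit price or a misplaced decimal) rather than a real price move. The 50% threshold is an illustrative assumption.

```python
from statistics import median
from typing import List, Optional


def is_price_anomaly(
    history: List[float],
    new_price: Optional[float],
    max_ratio: float = 0.5,
) -> bool:
    """Flag a new price that is missing, non-positive, or deviates from the
    median of recent prices by more than `max_ratio` (0.5 = 50%)."""
    if new_price is None or new_price <= 0:
        return True
    if not history:
        return False  # nothing to compare against yet
    typical = median(history)
    if typical <= 0:
        return False  # history itself is unusable as a reference
    return abs(new_price - typical) / typical > max_ratio
```

Flagged values are better held for review than published, since a false alert costs far less than a wrong price feeding a pricing engine.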

Scaling Without Constant Maintenance

As scraping expands across categories, sites, and regions, maintaining rule-based systems becomes expensive.

AI helps reduce this operational burden by adapting to structural changes and identifying patterns across different page templates. Instead of rewriting selectors repeatedly, systems can adjust dynamically and maintain consistency at scale.

Where AI Still Needs a Strong Foundation

AI does not solve core scraping challenges on its own.

You still need to:

- Choose the right extraction layer

- Handle access and anti-bot constraints

- Design proper validation and monitoring

Without this foundation, AI becomes an add-on rather than a solution.

PromptCloud applies adaptive extraction logic and validation layers that detect structural changes before they affect data delivery, ensuring consistent, decision-ready datasets without ongoing scraper maintenance.

Why Managed Web Scraping Services Are Ideal for E-Commerce Data

E-commerce data looks simple on the surface. Product names, prices, reviews, availability. But once you try to collect it consistently across categories, regions, and competitors, it turns into a high-maintenance system problem.

This is where most DIY setups break down.

The Hidden Cost of DIY Scraping

Building a scraper is easy. Keeping it reliable is not.

As you scale, you start dealing with:

- Frequent website structure changes

- Dynamic content and API shifts

- Incomplete pagination and coverage gaps

- Data inconsistencies across categories

- Increasing anti-bot friction

At this point, engineering effort shifts from building features to fixing pipelines. The cost is not just infrastructure. It is the ongoing maintenance burden and the risk of bad data flowing into business decisions.

Why Managed Services Change the Equation

Managed web scraping services are designed for data reliability, not just extraction.

Instead of treating scraping as a script, they treat it as a pipeline with:

- Structured data delivery

- Defined schemas and normalization

- Continuous monitoring and change handling

- Coverage validation across categories

- Scheduled refresh cycles

This shifts the focus from “can we scrape this page?” to “can we trust this dataset?”

Built for Scale, Not Just Access

In e-commerce, scale is not just about volume. It is about consistency across thousands of products, multiple sources, and frequent updates.

Managed services handle:

- Multi-site scraping with standardized outputs

- Variant-level data extraction

- Region-specific pricing and availability

- High-frequency refresh without pipeline degradation

This is difficult to maintain internally without a dedicated data engineering effort.

When the Switch Typically Happens

Teams usually move to managed solutions when:

- Scraped data starts impacting pricing or revenue decisions

- Engineering time spent on maintenance increases significantly

- Data gaps or inconsistencies start affecting analytics

- Scaling to new sources becomes slow and unreliable

At this stage, the cost of unreliable data is higher than the cost of outsourcing the pipeline.

E-Commerce Marketplaces for Data Scraping in 2026

Not all e-commerce platforms behave the same way. In 2026, the complexity of scraping depends less on “how big the marketplace is” and more on how the data is structured, delivered, and protected.

Understanding these differences upfront saves a lot of wasted effort.

Global Marketplaces (High Scale, High Complexity)

Platforms like Amazon, Walmart, and eBay operate at massive scale with highly dynamic content layers.

They rely heavily on:

- Client-side rendering

- Frequent layout experimentation

- Region-based pricing and availability

- Strong anti-bot systems

For scraping, this means higher effort in maintaining coverage, handling variants, and ensuring data consistency across regions. These platforms are valuable because of their data richness, but they are also the most volatile.

Vertical and Niche Marketplaces (Cleaner Structure, Faster Wins)

Specialized marketplaces in categories like home goods, fashion, or pet supplies (for example Wayfair, Zalando, and Chewy) often have more predictable structures.

They typically offer:

- More stable HTML layouts

- Simpler pagination logic

- Less aggressive anti-bot mechanisms

These platforms are easier to scrape and are often used as initial data sources when building category-level intelligence or benchmarking datasets.

D2C Brand Websites (Structured but Fragmented)

Direct-to-consumer websites (Allbirds, Glossier) are structurally simpler but highly fragmented.

Each site has:

- Its own design system

- Unique product schemas

- Different naming conventions for similar data

This makes scaling across multiple D2C sites challenging. The issue is not extraction difficulty, but lack of standardization across sources, which increases normalization effort.

Aggregators and Comparison Platforms (High Signal Density)

Price comparison and aggregator platforms (Google Shopping, PriceGrabber) consolidate data from multiple sources into a single interface.

They provide:

- Cross-market pricing snapshots

- Product comparisons

- Availability across sellers

These are high-leverage sources for scraping because they reduce the need to crawl multiple individual sites. However, they also tend to implement stricter access controls due to the value of aggregated data.

What Changed in 2026

The biggest shift is not in platform types, but in how data is delivered and controlled:

- More platforms are moving toward API-driven or dynamic rendering models

- Personalization is affecting visible pricing and availability

- Anti-bot systems are becoming more behavior-based rather than rule-based

This means scraping strategies need to be more adaptive. Static extraction logic is no longer sufficient for maintaining reliable datasets across marketplaces.

Legal, Compliance, and Ethical Considerations in E-Commerce Web Scraping

Once scraping moves from experimentation to business use, the question shifts from “can we extract this data?” to “can we use this data safely and responsibly?”

This is where most teams underestimate risk.

Public Data Does Not Mean Free Use

A common assumption is that if data is visible on a website, it can be freely collected and used.

That is not always true.

E-commerce platforms often define how their data can be accessed through terms of service, usage policies, and access controls. Even when data is publicly visible, the way it is collected, stored, and used can introduce compliance risks if not handled carefully.

The key distinction is not just data availability, but data usage and intent.

Terms of Service vs Technical Feasibility

Just because a scraper can access a page does not mean it should.

Many teams treat scraping as a purely technical problem. In reality, it is also a contractual and compliance problem. Ignoring this leads to fragile setups that may work technically but create legal exposure over time.

Responsible scraping requires evaluating:

- Whether the data is intended for automated access

- Whether access patterns violate platform policies

- Whether extraction impacts the target website’s performance

This is where scraping shifts from engineering to governance.

Handling Personal and Sensitive Data

E-commerce platforms often include user-generated content such as reviews, profiles, or seller information. Some of this may qualify as personally identifiable information depending on how it is structured and used.

Collecting such data without proper safeguards can introduce privacy risks, especially when datasets are used for analytics or AI training.

A safer approach is to:

- Avoid collecting unnecessary personal data

- Mask or anonymize sensitive fields

- Limit usage to clearly defined business purposes

For a deeper view on handling sensitive data, see how privacy-safe scraping with PII masking and anonymization is approached in practice.
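A minimal sketch of those three practices applied to scraped reviews: keep only the fields a sentiment use case needs, mask emails and phone numbers in review text, and replace the reviewer name with a salted hash so duplicates can still be grouped. The kept fields, regexes, and salt handling are illustrative assumptions; the regexes are deliberately simple and will produce false positives (a date like 2026-01-01 can match the phone pattern). Note that a salted hash is pseudonymization, which GDPR still treats as personal data; dropping the field entirely is the stronger option.

```python
import hashlib
import re
from typing import Any, Dict

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize(value: str, salt: str) -> str:
    """Replace an identifier with a truncated salted SHA-256 hash."""
    return hashlib.sha256((salt + value).encode("utf-8")).hexdigest()[:16]


def sanitize_review(review: Dict[str, Any], salt: str) -> Dict[str, Any]:
    """Keep only the fields needed for sentiment analysis and mask identifiers."""
    text = review.get("text", "")
    text = EMAIL_RE.sub("[email]", text)  # mask emails before phone numbers
    text = PHONE_RE.sub("[phone]", text)
    return {
        "product_id": review.get("product_id"),
        "rating": review.get("rating"),
        "text": text,
        # The reviewer name itself is never stored.
        "reviewer_key": pseudonymize(review["reviewer"], salt) if review.get("reviewer") else None,
    }
```

Any field not explicitly listed in the output (such as a reviewer's location) is dropped by default, which is easier to audit than trying to strip sensitive fields one by one.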

Compliance Is an Ongoing Process, Not a One-Time Check

Scraping systems evolve. Websites change. Regulations shift.

Compliance is not something you validate once and forget. It needs to be built into how data pipelines operate.

That includes:

- Tracking what data is collected and why

- Maintaining auditability of datasets

- Ensuring consistent handling across sources

A structured approach to governance helps avoid reactive fixes later.

Vendor and Process Accountability

As scraping scales, many teams work with external vendors or internal pipelines that multiple teams depend on.

This introduces another layer of risk: who is responsible for compliance?

Clear vendor evaluation and process controls become important, especially when data feeds into critical systems.

Automating Compliance Where Possible

Manual compliance checks do not scale.

Modern scraping systems increasingly incorporate:

- Consent-aware data collection

- Rule-based filtering of sensitive fields

- Automated checks for policy violations

This reduces operational risk while maintaining data usability.

Data protection regulations like GDPR define how personal data can be collected, processed, and stored, even when it is publicly accessible. Understanding these principles is critical when scraping data that may include user-generated content or identifiable information.


FAQs

1. Is it legal to scrape e-commerce websites?

Legality depends on how data is collected and used, and it varies by jurisdiction. Collecting publicly available, non-personal data is generally lower risk, but violating terms of service, circumventing access controls, or collecting personal data introduces legal risk.

2. What is the best way to scrape product prices from e-commerce sites?

The most reliable approach is API interception or rendered scraping, since many sites load pricing dynamically using JavaScript.

3. Which Python libraries are commonly used for e-commerce scraping?

Requests and BeautifulSoup work for static pages, while Playwright or Selenium are used for dynamic, JavaScript-heavy websites.

4. How do you avoid getting blocked while scraping e-commerce websites?

Use controlled request rates, rotate access strategies, and validate data output to detect partial blocks or corrupted responses.

5. When should you move from DIY scraping to managed services?

When scraped data starts impacting pricing, analytics, or AI systems, and maintenance effort begins to outweigh the value of internal control.