Why the eBay API Is Useful, but Not Enough for Serious Market Intelligence

The eBay API is useful for structured marketplace operations, especially inventory and listing workflows. But it was not designed to give you full marketplace visibility. When teams need deeper pricing intelligence, richer listing data, historical tracking, or competitor monitoring, web scraping fills the gap.

- eBay’s official APIs are strong for seller-side workflows and structured search access

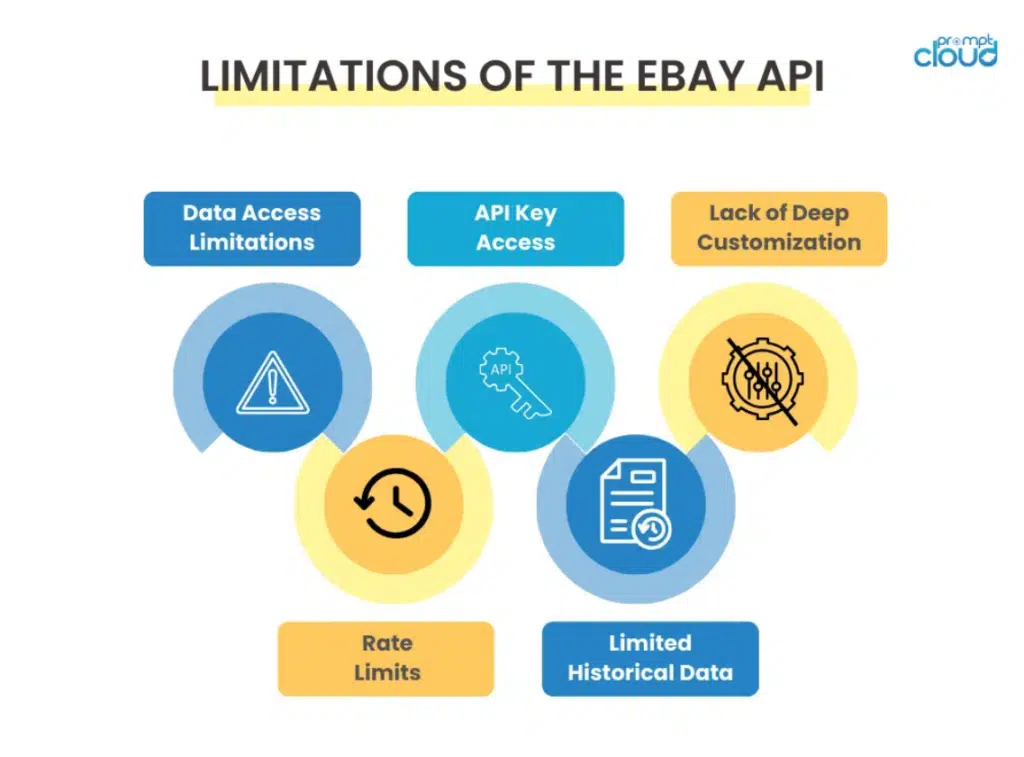

- eBay also enforces API call limits, and some search endpoints cap result sets, which affects large-scale monitoring use cases

- Web scraping becomes useful when the goal shifts from store management to market intelligence

eBay’s API is valuable if your main goal is to manage listings, inventory, and marketplace workflows in a structured way. eBay’s official documentation positions the Inventory API around creating and managing inventory item records and publishing those records as offers on eBay marketplaces. The Browse API, on the buy side, is designed for search and item discovery experiences.

That is useful, but it creates a common misunderstanding.

Many teams assume that if an API exists, it must already provide everything needed for pricing analysis, assortment benchmarking, listing-quality comparisons, and competitive monitoring. In practice, that is rarely true. APIs are designed around productized access patterns, not around every intelligence question an analyst, pricing team, or marketplace operator wants to answer.

This matters more on eBay because the marketplace is dynamic. Listings change, seller behavior changes, and pricing shifts quickly. Many competitive signals live in what buyers actually see on the page, which is not necessarily what official API responses expose in a flexible form.

There is also a scale issue. eBay explicitly limits API call usage, and the Browse API documentation notes that search methods can return a maximum result set of 10,000 items. For operational workflows, that can be manageable. For broad monitoring and deep comparative analysis, it creates obvious constraints.
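To make the scale constraint concrete, here is a rough back-of-the-envelope sketch. It assumes a page size of 200 items per search call (an illustrative assumption; check the current Browse API reference for the real maximum) together with the 10,000-item cap noted above. The function names are hypothetical.

```python
import math

# Assumptions for illustration only: 200 items per search call and the
# 10,000-item result-set cap described in the text above.
RESULT_SET_CAP = 10_000
PAGE_SIZE = 200


def calls_to_exhaust(total_matches: int, page_size: int = PAGE_SIZE,
                     cap: int = RESULT_SET_CAP) -> int:
    """Number of paged search calls needed to read every reachable item."""
    reachable = min(total_matches, cap)
    return math.ceil(reachable / page_size)


def coverage(total_matches: int, cap: int = RESULT_SET_CAP) -> float:
    """Fraction of matching listings that a single query can reach."""
    if total_matches <= 0:
        return 1.0
    return min(total_matches, cap) / total_matches


if __name__ == "__main__":
    # Compare a small, a boundary, and a large result set.
    for matches in (4_000, 10_000, 250_000):
        print(matches, calls_to_exhaust(matches), f"{coverage(matches):.1%}")
```

Under these assumptions, a query that matches far more listings than the cap reaches only a small fraction of them, which is why broad monitoring usually means splitting queries or adding another collection method.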

That is why the real question is not “API or scraping?” It is:

When is the eBay API enough, and when do you need scraping to go deeper?

What the eBay API Does Well, and Where It Starts to Fall Short

The eBay API is useful when your goal is operational control. It helps sellers and integrators manage listings, inventory records, offers, and structured marketplace interactions in a predictable format. That is exactly what official APIs are supposed to do.

But marketplace intelligence is a different problem.

The moment your questions shift from “How do I manage my listings?” to “How is the market moving?” the API starts to feel narrower. That is not a flaw in the platform. It is simply a design boundary. eBay’s APIs are optimized for official workflows and productized access, while pricing intelligence, assortment monitoring, listing-quality analysis, and historical tracking often require a wider view of what is actually happening on the marketplace. eBay’s own documentation makes that boundary visible: the Inventory API is built around creating and managing inventory records and offers, while the Browse API’s search methods return a maximum result set of 10,000 items and operate within platform call limits.

Successful eBay marketplace intelligence requires continuous tracking of pricing, listings, seller behavior, and category changes across large product sets. That kind of continuous collection is the foundation of modern ecommerce industry web data.

eBay API vs Web Scraping at a Glance

| Use Case | eBay API | Web Scraping |
| --- | --- | --- |
| Inventory and listing management | Strong fit | Not the right primary method |
| Structured seller-side workflows | Strong fit | Usually unnecessary |
| Marketplace search and item discovery | Useful, but bounded by endpoint design and result limits | More flexible for wide monitoring |
| Large-scale competitor price tracking | Limited by access patterns and call limits | Better suited |
| Historical dataset building | Limited unless you store data over time yourself | Better for continuous archival |
| Rich listing-content analysis | Partial | Stronger |
| Monitoring listing changes across many sellers | Harder to operationalize through API alone | More adaptable |
| Custom data fields for niche intelligence | Limited to exposed fields | Highly customizable |

Where the eBay API Is Strong

The API is strongest when the job is transactional or operational. If you need to manage inventory, update offers, publish listings, or integrate eBay workflows into internal systems, the API is the cleaner choice. It gives you structured responses, official documentation, and a more stable path for workflows that eBay explicitly supports.

This is especially useful for:

- inventory synchronization

- listing creation and updates

- order and seller workflow automation

- structured search and catalog-linked operations

In short, the API is excellent for running your eBay presence.

Where It Starts to Fall Short

The gaps appear when you need deeper visibility into the marketplace itself.

For example, pricing teams often want to monitor broad competitor movement across categories, not just pull a bounded set of search responses. Merchandising teams may want to analyze how sellers write titles, structure descriptions, use keyword placement, bundle products, or shift offer positioning over time. Strategy teams may want to track assortment changes, listing churn, or repeated changes in item presentation.

These are not always questions the API is built to answer directly.

The limitation is not just about missing fields. It is also about scale, flexibility, and perspective. Once the use case becomes continuous market intelligence rather than structured marketplace management, the API begins to feel more like one input than a complete solution.

Why This Distinction Matters

A lot of teams choose the wrong approach because they frame the problem incorrectly.

If you think the problem is “access eBay data,” the API may seem sufficient. If the real problem is “build a deeper view of pricing, competition, listing behavior, and market shifts,” then API access alone is often not enough.

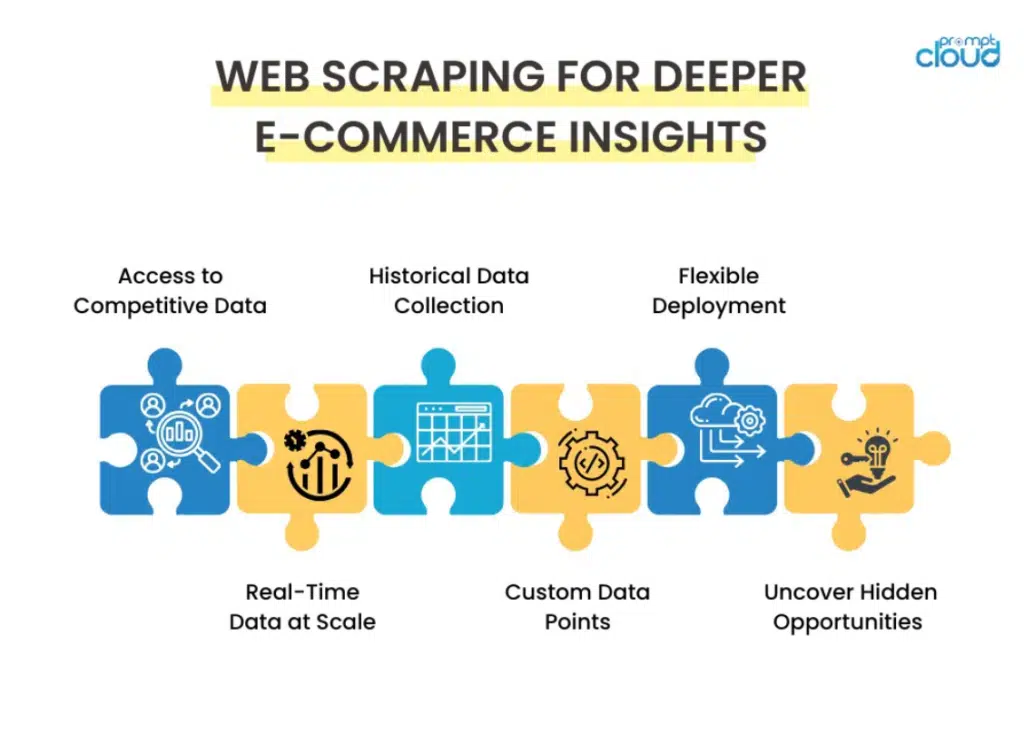

That is where web scraping becomes valuable, not as a replacement for the API, but as a way to capture the marketplace signals the API does not fully expose or support at the depth required.

Stop relying on incomplete, outdated marketplace data for e-commerce decisions.

Get structured, schema-ready web data delivered to your exact specifications, across any source, refreshed on your schedule.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

How Web Scraping Extends eBay Data Beyond the API

The eBay API is useful when you need structured access to the parts of the marketplace eBay explicitly supports through its developer stack. But once the goal shifts from operations to intelligence, the gaps become obvious. The Inventory API is built to create and manage inventory records and turn them into offers, while the Browse API is designed around search and discovery. Even there, search responses are constrained: eBay documents that Browse API search methods can return a maximum of 10,000 items in one result set, and standard call limits apply across APIs.

That is where web scraping becomes useful, not as a replacement for the API, but as the layer that captures richer marketplace behavior.

Scraping Gives You a Broader Competitor View

If you are tracking a few known SKUs or managing your own listings, the API may be enough. But competitor intelligence usually requires a wider view. Teams want to monitor how rival sellers position products, change pricing, rewrite titles, adjust descriptions, add urgency language, or shift category presence over time.

Those are not always the questions the API is built to answer directly. Web scraping lets you collect the marketplace as it appears to buyers, which is often the most useful version of the truth when you are analyzing competitive behavior.

Listing Content Becomes a Data Source, Not Just a Page

One of the biggest differences is depth. APIs tend to return structured fields that are useful for applications. Scraping lets you analyze the actual listing presentation: title construction, keyword density, image count, promotional messaging, shipping promises, variation handling, seller badges, and review signals.

That matters because on eBay, commercial performance is influenced not just by price, but by how a listing is framed. If you only use API-accessible fields, you often miss the qualitative layer that shapes buyer response.
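As a minimal sketch of treating listing presentation as data, the example below pulls a title and an image count out of a page using only Python's standard library. The sample markup is invented for illustration and is not eBay's real page structure; the class and function names are hypothetical too.

```python
from html.parser import HTMLParser

# Hypothetical sample markup, NOT eBay's actual HTML.
SAMPLE_HTML = (
    "<html><body><h1>Vintage  Camera <span>Lens</span> 50mm</h1>"
    '<img src="a.jpg"><img src="b.jpg"/></body></html>'
)


class ListingFeatureParser(HTMLParser):
    """Collects the text inside <h1> and counts <img> tags."""

    def __init__(self):
        super().__init__()
        self.title_parts = []
        self.image_count = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self._in_title = True
        elif tag == "img":
            self.image_count += 1

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title_parts.append(data)


def listing_features(html: str) -> dict:
    """Return simple presentation features for one listing page."""
    parser = ListingFeatureParser()
    parser.feed(html)
    parser.close()
    # Collapse repeated whitespace so word counts are stable.
    title = " ".join("".join(parser.title_parts).split())
    return {
        "title": title,
        "title_words": len(title.split()),
        "image_count": parser.image_count,
    }


if __name__ == "__main__":
    print(listing_features(SAMPLE_HTML))
```

A production extractor would need selectors matched to the real page and would have to tolerate layout changes, but the idea is the same: each visible element becomes a field you can compare across sellers.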

Scraping Makes Historical Tracking Easier to Build

The current eBay API is more useful for live workflows than for long-horizon market archiving. If you want to understand how categories evolve, how price corridors shift, or how listing quality changes across weeks and months, you need a way to capture and store snapshots over time.

That is one of the strongest uses for scraping. It gives teams the ability to build their own history layer instead of depending only on real-time endpoint responses. This becomes especially important when pricing teams, marketplace analysts, or merchandising teams want trendlines rather than one-time pulls.
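A history layer can start very small. The sketch below stores dated listing snapshots in SQLite and reads back a price trend. The table layout, field names, and helper functions are assumptions made for illustration, not a prescribed schema.

```python
import sqlite3
from datetime import date


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a snapshot store; in-memory by default."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS snapshots (
               item_id     TEXT NOT NULL,
               captured_on TEXT NOT NULL,   -- ISO date, sorts chronologically
               price       REAL NOT NULL,
               title       TEXT NOT NULL,
               PRIMARY KEY (item_id, captured_on)
           )"""
    )
    return conn


def record_snapshot(conn, item_id: str, captured_on: date,
                    price: float, title: str) -> None:
    """Store one observation; a re-run on the same day overwrites it."""
    conn.execute(
        "INSERT OR REPLACE INTO snapshots VALUES (?, ?, ?, ?)",
        (item_id, captured_on.isoformat(), price, title),
    )
    conn.commit()


def price_history(conn, item_id: str) -> list:
    """Return (date, price) pairs for one item, oldest first."""
    rows = conn.execute(
        "SELECT captured_on, price FROM snapshots "
        "WHERE item_id = ? ORDER BY captured_on",
        (item_id,),
    )
    return [(captured_on, price) for captured_on, price in rows]


def price_change(conn, item_id: str):
    """Latest price minus earliest price, or None with fewer than 2 points."""
    history = price_history(conn, item_id)
    if len(history) < 2:
        return None
    return history[-1][1] - history[0][1]
```

Keying on item and capture date keeps repeated runs idempotent, and because every row is dated, trendlines come from a simple ordered query rather than from any single live response.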

Custom Data Collection Becomes Possible

Official APIs expose official fields. That is their strength, but also their limit.

Scraping becomes valuable when the business question is more specific than the API design. You may want to track whether sellers are using financing language, compare image-gallery depth by category, identify repeated bundles, monitor delivery-message changes, or detect how frequently certain value propositions appear in titles.

Those are not standard API use cases. They are market intelligence use cases. Scraping gives you the freedom to collect around the question, not just around the endpoint.
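Collecting around the question can be as simple as a set of text patterns. The following sketch flags financing, bundle, and urgency language in listing text and reports how often each appears. The pattern lists are illustrative assumptions and would need tuning for a real category.

```python
import re

# Hypothetical patterns chosen for illustration; extend per category.
SIGNAL_PATTERNS = {
    "financing": re.compile(r"\b(financing|pay later|installments?)\b", re.I),
    "bundle": re.compile(r"\b(bundle|lot of \d+|\d+[- ]pack)\b", re.I),
    "urgency": re.compile(r"\b(limited stock|last one|ends soon)\b", re.I),
}


def detect_signals(text: str) -> dict:
    """Return which signals appear in one title or description."""
    return {name: bool(pattern.search(text))
            for name, pattern in SIGNAL_PATTERNS.items()}


def signal_rates(texts) -> dict:
    """Share of texts in which each signal appears (0.0 to 1.0)."""
    texts = list(texts)
    if not texts:
        return {name: 0.0 for name in SIGNAL_PATTERNS}
    counts = {name: 0 for name in SIGNAL_PATTERNS}
    for text in texts:
        for name, hit in detect_signals(text).items():
            counts[name] += hit  # True counts as 1
    return {name: counts[name] / len(texts) for name in counts}


if __name__ == "__main__":
    titles = [
        "Console bundle",
        "Phone case",
        "Laptop, financing available",
        "4 pack socks ends soon",
    ]
    print(signal_rates(titles))
```

Run over scraped titles for a category, the rates give a quick view of how common each value proposition is, and they can be recomputed per snapshot to see how messaging shifts.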

API Plus Scraping Is Usually the Better Model

For most serious e-commerce teams, this is not an either-or decision.

The API is cleaner for official workflows such as inventory and listing operations. Scraping is stronger for competitor tracking, listing analysis, historical monitoring, and custom intelligence extraction. Used together, they create a much fuller view of the marketplace than either approach alone.

That is the real strategic takeaway. The eBay API helps you manage your presence. Web scraping helps you understand the market around it.
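One way to picture the combined model is a join on item ID: structured fields come from the API, and presentation fields come from scraping. The sketch below assumes both sources have already been fetched into lists of dictionaries; the field names and the rule that API values win on conflicts are illustrative choices, not a required design.

```python
def merge_records(api_records, scraped_records):
    """Enrich API records with scraped fields that share the same item_id.

    When both sources provide the same key, the API value is kept.
    """
    scraped_by_id = {record["item_id"]: record for record in scraped_records}
    merged = []
    for api_record in api_records:
        extra = scraped_by_id.get(api_record["item_id"], {})
        row = {**extra, **api_record}  # later mapping (API) takes precedence
        row["has_scraped_data"] = bool(extra)
        merged.append(row)
    return merged


if __name__ == "__main__":
    api = [{"item_id": "1", "price": 10.0, "title": "API title"}]
    scraped = [{"item_id": "1", "title": "Scraped title", "image_count": 6}]
    print(merge_records(api, scraped))
```

Keeping a `has_scraped_data` flag makes gaps visible, so analysts can tell an item with no enrichment apart from one whose scraped fields are simply empty.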

When API Access Is Enough, and When You Need Both

Not every eBay data project needs scraping.

If your goal is to manage your own listings, sync inventory, or automate seller workflows, the eBay API is the better starting point. That is what it was built for. The API gives you structured access, predictable schemas, and official support for marketplace operations. For many seller-side workflows, that is enough.

The problem starts when the use case becomes analytical rather than operational.

If you are trying to understand how pricing moves across a category, how competitors rewrite listings, how assortment shifts over time, or how marketplace presentation affects conversions, the API alone usually stops short. eBay’s own developer documentation makes that boundary clear. Some Browse API search methods cap a result set at 10,000 items, and API usage is still subject to platform call limits. That is manageable for many applications, but it becomes restrictive for broad monitoring and large-scale intelligence work.

Use the eBay API When the Goal Is Operational Efficiency

The API is usually enough when you need to:

- manage inventory and offers

- automate listing updates

- connect eBay workflows to internal systems

- retrieve structured data for bounded application use cases

This is where official API access is at its best. It is cleaner, more stable, and easier to integrate into day-to-day marketplace operations.

Add Scraping When the Goal Is Marketplace Intelligence

Scraping becomes valuable when the business question moves beyond exposed fields and standard endpoint responses.

This typically includes:

- competitor price monitoring across large sets of listings

- title, description, and merchandising analysis

- historical tracking of listing changes

- custom extraction of signals not exposed in official fields

- broader category-level or seller-level monitoring

At that point, scraping is not replacing the API. It is extending your visibility.

The Better Model for Most Teams Is Hybrid

For serious e-commerce teams, the strongest setup is usually a hybrid one.

Use the API for what it does best: structured marketplace workflows. Use scraping for what the API does not fully cover: competitor intelligence, broader listing analysis, change tracking, and custom market signals.

That gives you two different kinds of leverage:

- operational efficiency from the API

- strategic visibility from scraping

That is the combination that turns eBay data from a workflow input into a real intelligence layer.

How Managed Web Data Collection Helps Teams Go Beyond eBay API Limits

The gap between API access and real marketplace intelligence usually appears when teams try to scale. Pulling structured data for seller workflows is one thing. Monitoring a marketplace with 135 million active buyers and roughly 2.5 billion live listings is something else entirely. At that scale, even small blind spots in pricing, assortment, seller behavior, or listing quality can distort decisions quickly. eBay’s own materials highlight the scale of the marketplace, while its developer docs also make the operational boundaries clear, including Browse API result-set caps of 10,000 items and standard API usage limits.

It Turns Marketplace Pages Into Usable Competitive Data

Most teams do not need more raw pages. They need clean, structured datasets they can actually use. A managed approach helps extract and organize marketplace signals that sit beyond standard API workflows, including competitor pricing movement, seller assortment changes, listing-title shifts, promotional messaging, and category-level changes over time.

The goal is not just extraction. It is making the data usable for pricing, merchandising, and competitive analysis teams without forcing them to build and maintain custom scraping infrastructure internally.

It Supports Historical and Continuous Monitoring

One of the biggest weaknesses in API-only approaches is that they are stronger for live access than for long-horizon tracking. If you want to understand how a category changes over weeks or months, or how sellers repeatedly reposition offers, you need a way to collect and store data continuously.

That is where managed collection becomes more practical. It makes it easier to build a historical layer instead of depending only on point-in-time responses. And in e-commerce, the real insight often comes from change over time, not from one isolated snapshot.

It Reduces the QA and Reliability Burden

At marketplace scale, scraping is not just about grabbing data. It is about handling page changes, maintaining extraction logic, monitoring data quality, and making sure what gets delivered is consistent enough to trust. This is where the quality layer matters. Production-grade collection needs QA checks, schema consistency, and delivery discipline.
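As a rough illustration of what a QA check can look like, the sketch below validates each scraped record against a small schema before it is delivered. The required fields and rules are assumptions for the example; a real pipeline would define its own.

```python
# Hypothetical schema: field name -> accepted type(s).
REQUIRED_FIELDS = {
    "item_id": str,
    "title": str,
    "price": (int, float),
    "currency": str,
}


def validate_record(record: dict) -> list:
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            problems.append(f"wrong type for {field}: {actual}")
    price = record.get("price")
    if isinstance(price, (int, float)) and price <= 0:
        problems.append("price must be positive")
    return problems


def split_valid(records):
    """Separate records that pass validation from those that do not."""
    valid, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            valid.append(record)
    return valid, rejected
```

Tracking the rejected share per run is a cheap early warning: a sudden jump usually means the page layout changed and the extraction logic needs attention.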

It Makes Custom Data Collection Practical

Official APIs are limited to official fields. Managed scraping makes it easier to collect around the actual business question instead.

That could mean tracking:

- how sellers frame urgency

- how often titles are rewritten

- what differentiators appear in item descriptions

- how listing quality varies across sellers or categories

These are often the signals that matter most for e-commerce strategy, even though they sit outside standard endpoint outputs.
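To show how one of these signals might be measured, the sketch below counts title rewrites per listing from a series of dated snapshots. The tuple layout and function name are hypothetical, and changes in case or spacing alone are deliberately not counted as rewrites.

```python
def count_title_rewrites(snapshots) -> dict:
    """Count title changes per item.

    snapshots: iterable of (item_id, captured_on, title) tuples, where
    captured_on is an ISO date string so that sorting is chronological.
    """
    rewrites = {}
    last_title = {}
    ordered = sorted(snapshots, key=lambda snap: (snap[0], snap[1]))
    for item_id, _captured_on, title in ordered:
        # Normalize case and whitespace so cosmetic noise is ignored.
        normalized = " ".join(title.lower().split())
        if item_id not in last_title:
            rewrites[item_id] = 0
        elif normalized != last_title[item_id]:
            rewrites[item_id] += 1
        last_title[item_id] = normalized
    return rewrites


if __name__ == "__main__":
    history = [
        ("a", "2026-01-01", "Red Shoe"),
        ("a", "2026-01-02", "red  shoe"),
        ("a", "2026-01-03", "Red Shoe NEW"),
        ("b", "2026-01-01", "Hat"),
    ]
    print(count_title_rewrites(history))
```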

The Real Value Is Operational Focus

Teams often underestimate the cost of managing marketplace intelligence internally. Once they are dealing with extraction logic, QA, storage, historical snapshots, and downstream delivery, the real bottleneck is no longer access. It is maintenance.

PromptCloud helps remove that burden by turning complex marketplace collection into structured, reusable data delivery, so internal teams can spend more time analyzing the market and less time keeping the pipeline alive.

Why API Plus Scraping Is the Smarter Model for eBay Intelligence in 2026

The API Helps You Operate. Scraping Helps You See the Market.

That is the cleanest way to think about it.

The eBay API is strong when the job is operational: inventory, offers, listing workflows, structured integrations. Scraping becomes valuable when the job is analytical: pricing intelligence, listing comparisons, seller monitoring, historical tracking, and category-level change detection.

Trying to force one method to do both usually leads to a weaker outcome.

Marketplace Scale Changes the Equation

This distinction matters even more on eBay because the marketplace is large and constantly moving. At the end of 2025, eBay reported 135 million active buyers and 2.5 billion live listings globally. That level of scale makes it hard to rely on bounded endpoint responses alone if the goal is broad market visibility. eBay’s own Browse API documentation also notes that search methods can return a maximum of 10,000 items in a result set, which is enough for many use cases but clearly not a full-market lens.

A Hybrid Model Creates Better E-Commerce Intelligence

The smarter setup for most teams is not API or scraping. It is API plus scraping.

Use the API where structured, official access makes the most sense. Use scraping where the questions become more open-ended and competitive:

- how pricing shifts across sellers

- how listings are rewritten over time

- how category positioning changes

- how marketplace presentation affects buyer response

That hybrid model gives you both control and context.

This Is Where Better Data Becomes a Competitive Edge

The real advantage is not simply collecting more eBay data. It is building a clearer, more complete view of how the marketplace behaves.

Teams that can combine structured API access with deeper marketplace scraping are in a better position to:

- spot price movement earlier

- track competitive shifts more accurately

- build stronger historical benchmarks

- make faster merchandising and strategy decisions

That is what turns eBay data from an operational input into a real intelligence layer.

If you’re building eBay intelligence infrastructure, explore how ecommerce industry web data supports large-scale marketplace monitoring, historical tracking, and competitive data collection.



FAQs

1. Can I use the eBay API for competitor price tracking?

You can use the eBay API for some search and item-level monitoring, but it is not always enough for broad competitor price tracking across large categories or shifting seller sets. eBay’s Browse API search methods can return a maximum of 10,000 items in a result set, which makes full-category monitoring harder at scale.

2. What data can web scraping collect from eBay that APIs often miss?

Web scraping can capture listing-level details that are useful for marketplace intelligence, such as pricing presentation, seller messaging, shipping language, title changes, image counts, and other visible page elements. This makes it useful when the goal is deeper competitive analysis rather than just structured operational access.

3. Is eBay web scraping better than the eBay API for historical data analysis?

For historical analysis, scraping is often more practical because it lets teams collect and store marketplace snapshots over time. Official APIs are usually stronger for live access and structured workflows, while scraped datasets are better suited for building your own history layer for trend analysis.

4. What is the best way to collect eBay data for market research?

The best approach for market research is usually a hybrid model: use the eBay API for official, structured marketplace access and use web scraping for broader competitor monitoring, listing analysis, and custom data points. This gives you both operational clarity and richer market visibility.

5. What are the main limitations of the eBay API for large-scale data collection?

The main limitations are endpoint design, result-set caps, and API call limits. eBay documents default call limits across its APIs, and some search methods also cap the total number of items you can retrieve in one result set, which can restrict broad marketplace analysis.