**TL;DR**

Enterprise audit success is not about passing an audit. It is about proving, over time, that controls work under pressure. The most reliable way to measure that success is through compliance case studies that show repeatable outcomes, reduced friction, and growing trust signals across regulators, partners, and internal teams.

Enterprise Audit in 2026

Most enterprises say they passed the audit. That statement hides more than it reveals. Passing an audit usually means one thing. On a specific day, with specific evidence prepared, nothing obviously failed. It does not tell you whether controls are durable. It does not tell you whether teams understand them. And it definitely does not tell you whether the next audit will be easier or harder.

That gap is where most audit programs quietly struggle. Enterprise audits are supposed to reduce risk and build trust. In reality, many turn into stressful, one-off events. Teams scramble. Evidence is stitched together. Controls are explained instead of demonstrated. Everyone exhales when it is over, then goes back to business as usual. That is not audit success. That is survival.

Measuring enterprise audit success requires a different lens. Instead of asking “Did we pass?”, you look at outcomes over time. Did audit preparation get easier? Did questions become more predictable? Did regulators or partners ask fewer follow-ups? Did internal teams start trusting the controls instead of working around them? This is where compliance case studies matter.

They show what success looks like in motion, not in theory. They capture how controls behave under real operating conditions. They surface trust signals that do not show up on a checklist but matter deeply in enterprise environments.

This article breaks down how to measure enterprise audit success using those signals. We will look at what actually changes when audits are working, how to recognize regulatory success early, and why the best audit programs feel almost boring to the people running them.

Why Passing an Audit Is a Weak Success Metric

“Did we pass?” is the wrong question. It sounds reasonable. It feels definitive. And it gives leadership something simple to report. But as a measure of enterprise audit success, it barely holds up.

Passing an audit only tells you that, at a specific point in time, the organization could produce acceptable answers. It says nothing about how hard that was. Or who paid the cost. Or whether the same answers would exist six months later without another scramble.

In many enterprises, audit prep is a seasonal event. Controls exist, but only a few people know where they live. Evidence is technically available, but not easily accessible. Policies are approved, but rarely referenced in daily work. When auditors arrive, teams go into retrieval mode instead of operating mode.

A weak success metric hides fragility. If every audit requires heroics, late nights, or quiet exceptions, the system is brittle. It may pass today and fail tomorrow, or worse, the teams behind it remember how close it came and start avoiding scrutiny altogether. Strong audit programs behave differently. They surface issues early. They make questions predictable. They turn audits into verification exercises rather than investigations. When controls are embedded into workflows, people stop treating audits as interruptions and start treating them as confirmations.

This is also where enterprise audits diverge from smaller compliance efforts. At scale, success is not about one clean report. It is about whether the organization can demonstrate consistency across teams, regions, and systems without rewriting the story each time. If passing is the only metric, teams optimize for passing. If durability is the metric, teams build controls that hold up even when nobody is watching.


What Enterprise Audit Success Actually Looks Like in Practice

When audit programs are working, you can feel it long before the report arrives. There is less urgency. Fewer last-minute requests. Fewer internal emails that start with “urgent.” Teams know where evidence lives. Controls are referenced naturally, not dusted off for review week.

Audits become predictable, not dramatic

In successful enterprise audit programs, auditors stop surprising teams.

Questions repeat year over year. Scope changes are incremental, not disruptive. Follow-ups shrink instead of multiply. That predictability is a signal. It means controls are stable and understood. When audits feel unpredictable, it usually means the system underneath is changing faster than governance can keep up.

Evidence is produced, not assembled

Strong programs do not “prepare” evidence. They retrieve it. Logs exist because systems generate them. Approvals exist because workflows require them. Documentation is current because it is used operationally, not because it is needed once a year.

This is where enterprises that automate compliance controls gain an advantage. When consent handling, access restrictions, and review checkpoints are built into pipelines, audits become verification exercises instead of archaeology. This shift is especially visible in teams that have invested in structured compliance automation for web data collection, where consent and usage rules are enforced continuously rather than explained retroactively.

Controls survive scrutiny across teams

Enterprise audit success shows up when the same answers hold across departments.

Legal, security, data, and product teams describe controls in similar terms. Not identical language, but consistent logic. That alignment means governance is shared, not siloed. When answers diverge, auditors notice. Quickly.

Trust signals increase over time

One of the clearest success indicators is external trust.

Regulators ask fewer clarifying questions. Partners shorten diligence cycles. Customers ask about controls once, not repeatedly. Internal stakeholders stop bypassing governance because they trust it to work. These trust signals rarely appear in audit reports, but they are the real output of successful enterprise audits.

Issues surface earlier and feel smaller

Ironically, strong audit programs find more issues.

The difference is timing. Issues are caught during design reviews, pilot rollouts, or scope changes. They are handled when they are small. Teams that measure success by “no findings” often discover problems late, when they are expensive and public. Enterprise audit success is not the absence of findings. It is the absence of surprises.

Figure 1: Practical signals that indicate an enterprise audit program is working as intended.

Using Compliance Case Studies to Measure Audit Maturity

Metrics alone rarely capture audit maturity. Case studies do.

A compliance case study shows how controls behave in real conditions. Not during a planned audit window, but while systems are running, teams are shipping, and requirements are shifting. That is why mature enterprises rely on case studies to judge whether their audit programs are actually working.

What a useful compliance case study includes

A strong compliance case study does not read like a success story. It reads like a trace. It shows:

- what triggered the audit or review

- which controls were already in place

- where friction appeared

- how evidence was produced

- what changed afterward

If a case study skips over tension or trade-offs, it is not useful for measuring audit success. Real maturity shows up in how teams respond when assumptions are tested.

Example: Large-scale web crawling with built-in compliance controls

One of the more telling examples comes from a large enterprise running continuous web crawling across multiple jurisdictions to support market intelligence and risk monitoring use cases. The challenge was not data access. It was governance.

The crawling program had to operate across regions with different privacy expectations, handle varying site policies, and remain auditable without slowing down delivery teams. Early audits revealed a pattern that will sound familiar. Controls existed, but they lived in documents. Evidence was available, but assembling it took time. Audit outcomes depended too heavily on individual contributors. Instead of tightening documentation, the organization changed how compliance showed up in the system.

Robots directives were interpreted consistently at the crawl orchestration layer. Consent signals were logged automatically. Sensitive fields were filtered before storage. Every crawl job carried metadata explaining purpose, scope, and ownership. When the next audit cycle arrived, something different happened.

Auditors did not ask for explanations. They asked for confirmation. Evidence was retrieved, not prepared. Follow-up questions dropped sharply. Internal teams stopped treating audits as interruptions because controls were no longer external to their workflows. That shift, not the final report, was the success signal.
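As a rough illustration of the controls in this case study, the sketch below attaches purpose, scope, and ownership to a crawl job, checks a URL against a robots.txt body, and emits a log line for the decision. The `CrawlJob` fields, the `audit_record` helper, and the example bot name are hypothetical and are not details of the program described above. Only `urllib.robotparser` is a real standard-library module.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from urllib.robotparser import RobotFileParser


@dataclass(frozen=True)
class CrawlJob:
    """Hypothetical job record; the field names are illustrative."""
    job_id: str
    purpose: str
    scope: str
    owner: str
    user_agent: str


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Evaluate a robots.txt body with the standard-library parser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def audit_record(job: CrawlJob, url: str, allowed: bool) -> str:
    """Serialize one crawl decision as a JSON line for later retrieval."""
    entry = asdict(job)
    entry["url"] = url
    entry["allowed"] = allowed
    entry["decided_at"] = datetime.now(timezone.utc).isoformat()
    return json.dumps(entry, sort_keys=True)
```

Because the decision and the job metadata are written at the moment the crawler acts, answering "why does this dataset exist?" becomes a log lookup rather than a reconstruction exercise.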

What this case study reveals about maturity

This example highlights several traits of mature audit programs.

- First, compliance controls moved closer to execution. They were no longer enforced after the fact. They were enforced by design.

- Second, ownership became clearer. When auditors asked why a dataset existed, there was a name and a purpose attached to it. No translation layer was required.

- Third, audit outcomes became repeatable. The same answers held across cycles because the system, not the people, carried the logic.

This is what regulatory success looks like in practice. Not fewer rules, but fewer surprises.

How to use case studies as measurement tools

Enterprises can use compliance case studies to measure audit success by asking a few simple questions.

Did audit preparation time decrease? Did the number of ad hoc clarifications drop? Did controls survive team changes? Did external trust improve? If the answers improve year over year, audit maturity is increasing, even if individual findings still exist. That is the difference between compliance as an event and compliance as a capability.
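One way to make these questions answerable is to keep a small record per audit cycle and compare cycles in order. The sketch below is a minimal example under assumed names: `AuditCycle`, its fields, and the "strictly decreasing" definition of improvement are illustrative choices, not a standard.

```python
from dataclasses import dataclass


@dataclass
class AuditCycle:
    """Hypothetical per-cycle record; the fields are illustrative."""
    year: int
    prep_hours: float
    follow_ups: int


def strictly_decreasing(values: list[float]) -> bool:
    """True when every value is lower than the one before it."""
    if len(values) < 2:
        return False  # a single cycle cannot show a trend
    return all(later < earlier for earlier, later in zip(values, values[1:]))


def maturity_trend(cycles: list[AuditCycle]) -> dict[str, bool]:
    """Report, per signal, whether it improved in every consecutive cycle."""
    ordered = sorted(cycles, key=lambda c: c.year)
    return {
        "prep_hours": strictly_decreasing([c.prep_hours for c in ordered]),
        "follow_ups": strictly_decreasing([float(c.follow_ups) for c in ordered]),
    }
```

A stricter or looser rule (for example, tolerating one flat year) may fit better in practice. The point is that the comparison is made against recorded numbers rather than recollection.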

Audit Metrics That Actually Signal Regulatory Success

If you want to measure enterprise audit success, you need metrics that reflect reality. Not vanity metrics like “number of policies.” Not optics metrics like “zero findings.” Regulatory success is about repeatability, evidence quality, and reduced uncertainty over time. The best trust signals are the ones that show your program is getting easier to audit, not just better at preparing.

The metrics that matter

| Metric | What it tells you | How to measure it without guesswork | What “good” looks like over time |
| --- | --- | --- | --- |
| Audit prep time | Whether evidence is embedded or assembled | Total hours spent across teams per audit cycle | Downward trend, fewer peak weeks |
| Follow-up questions | Whether controls are clear and complete | Count of auditor follow-ups after initial evidence | Drops each cycle, more predictable asks |
| Evidence retrieval speed | Whether systems produce proof on demand | Time to retrieve logs, access records, approvals | Minutes or hours, not days |
| Exception volume | Whether governance is working or bypassed | Number of exceptions raised and approved | Fewer exceptions, tighter scoping |
| Exception aging | Whether exceptions are controlled | % of exceptions past expiry date | Near zero, exceptions close on time |
| Change-to-review coverage | Whether drift is caught early | % of material changes reviewed before rollout | Moves toward 100% |
| Control failure rate | Whether controls work under pressure | Incidents where controls failed or were bypassed | Fewer failures, faster detection |
| Remediation cycle time | Whether issues lead to durable fixes | Avg time from finding to verified fix | Shrinks over time |
| Partner diligence cycle time | Whether trust is increasing externally | Time partners take to approve security or compliance | Shorter cycles, fewer repeat requests |
| Internal trust signals | Whether teams rely on the system | Survey or proxy metrics, reduced workarounds | Fewer bypasses, fewer “special requests” |

This table is the heart of the measurement approach. It focuses on whether governance is functioning as an operating system, not a document store.
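Three of the rows above reduce to simple arithmetic once the underlying records exist. The sketch below shows one possible calculation for exception aging, change-to-review coverage, and remediation cycle time. The record types and field names are assumptions made for illustration, and "past expiry" is interpreted here as still open after the expiry date.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ExceptionRecord:
    expires_on: date
    closed_on: Optional[date] = None  # None means still open


@dataclass
class Change:
    material: bool
    reviewed_before_rollout: bool


@dataclass
class Finding:
    found_on: date
    verified_fix_on: Optional[date] = None  # None means not yet fixed


def exception_aging_pct(records: list[ExceptionRecord], today: date) -> float:
    """Percent of all exceptions that are still open after their expiry date."""
    if not records:
        return 0.0
    overdue = sum(1 for r in records if r.closed_on is None and r.expires_on < today)
    return 100.0 * overdue / len(records)


def change_review_coverage_pct(changes: list[Change]) -> float:
    """Percent of material changes that were reviewed before rollout."""
    material = [c for c in changes if c.material]
    if not material:
        return 100.0  # nothing material to review
    reviewed = sum(1 for c in material if c.reviewed_before_rollout)
    return 100.0 * reviewed / len(material)


def avg_remediation_days(findings: list[Finding]) -> Optional[float]:
    """Average days from finding to verified fix, over fixed findings only."""
    durations = [
        (f.verified_fix_on - f.found_on).days
        for f in findings
        if f.verified_fix_on is not None
    ]
    return sum(durations) / len(durations) if durations else None
```

Tracking the same three numbers each cycle, with the same definitions, matters more than the exact definitions chosen.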

A quick reality check

Some teams chase zero findings. That usually backfires. A mature program still finds issues. It just finds them earlier, fixes them faster, and documents them better. Auditors respond positively to that pattern because it is consistent and defensible. Regulatory success is not silence. It is control.

How Trust Signals Show Up in Web Data Compliance

Trust is not something auditors explicitly score, but they absolutely react to it. In enterprise web data programs, trust signals show up in subtle ways. They appear in the tone of questions, the depth of scrutiny, and the amount of explanation required. Teams that track these signals can often predict audit outcomes long before the formal review ends.

Regulators shift from investigation to verification

One of the clearest trust signals is how regulators engage. Early on, reviews feel exploratory. Auditors ask broad questions. They probe intent. They request context repeatedly. This usually means they are still trying to understand how much confidence they should place in the system.

As trust builds, the nature of engagement changes. Questions become narrower. Auditors reference prior discussions. They ask whether controls are still in place rather than whether they exist at all. That shift is not accidental. It happens when evidence is consistent across cycles and explanations do not change depending on who is asked.

Platform interactions become less adversarial

In web data compliance, platforms are often the first external signal. When crawling and extraction programs lack discipline, platform interactions tend to escalate. Blocks appear suddenly. Requests for clarification arrive without warning. Policies are interpreted differently by different teams.

Mature programs see fewer surprises. Robots directives are handled consistently. Consent mechanisms are respected automatically. Sensitive data handling does not depend on manual judgment. Over time, this reduces friction and builds credibility, even when disagreements arise.

Internal teams stop second-guessing governance

Trust signals also appear inside the organization. When governance is weak, teams look for workarounds. They ask for exceptions preemptively. They bypass review because they assume it will slow them down. As audit programs mature, behavior changes. Teams involve governance earlier. Reviews feel predictable. Decisions feel fair. Controls stop being seen as blockers and start being treated as guardrails. That internal trust matters more than most audit reports.

Sensitive data handling becomes boring

Another quiet signal is how sensitive data is treated. In immature systems, privacy and PII handling generate constant discussion. Who can access what? Is masking applied? How is the data stored?

In mature programs, these questions fade. Controls are applied automatically. Pipelines enforce masking and minimization by default. Teams stop debating because the system already decided. That boredom is a success indicator. It means privacy is no longer fragile.
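A minimal sketch of what "masking and minimization by default" could look like in a pipeline step follows. The allow-list, the masked field name, and the email pattern are hypothetical. The unsalted hash is used only to keep the example short and should not be read as a recommended anonymization method.

```python
import hashlib
import re

# Hypothetical allow-list: any field not named here is dropped (minimization).
ALLOWED_FIELDS = {"url", "title", "price", "contact_email"}
# Hypothetical set of allowed fields whose values are always masked.
MASKED_FIELDS = {"contact_email"}
# Simple illustrative pattern; it does not cover every valid address.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask(value: str) -> str:
    """Replace a value with a short, stable token (unsalted, for brevity)."""
    digest = hashlib.sha256(value.encode("utf-8")).hexdigest()
    return f"masked:{digest[:12]}"


def minimize_and_mask(record: dict) -> dict:
    """Drop fields outside the allow-list, then mask or redact what remains."""
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    for key, value in list(kept.items()):
        if key in MASKED_FIELDS:
            kept[key] = mask(str(value))
        elif isinstance(value, str):
            # Redact email addresses that appear inside free text.
            kept[key] = EMAIL_RE.sub("[redacted-email]", value)
    return kept
```

Running every record through a step like this before storage means the masking decision is made once, in code, instead of being debated per dataset.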

Trust compounds when audits feel routine

The strongest trust signal is routine. When audits stop feeling like events and start feeling like checkpoints, governance has become part of the operating rhythm. Regulators notice. Partners notice. Internal teams notice. That is when enterprise audit success becomes sustainable, not just reportable.

Common Mistakes When Measuring Enterprise Audit Success

Most enterprises fail at measuring audit success for the same reason they fail at audits in the first place. They measure what is easy to report, not what actually matters. These mistakes are subtle. And common.

Treating the audit report as the outcome

An audit report is a snapshot. Teams often treat it like a scorecard. Passed. Minor findings. Closed. That framing ends the conversation too early. The report reflects what auditors saw, not how the system behaved over time. If preparation required special effort or selective evidence, the report hides that cost. Audit success lives in the months between reports, not the document itself.

Optimizing for zero findings

Zero findings feels like success. It rarely is. Programs that chase clean reports often discourage issue surfacing. Teams delay raising concerns. Exceptions are handled informally. Problems stay invisible until they become unavoidable. Mature audit programs expect findings. They just expect them to be scoped, documented, and resolved predictably. If findings disappear overnight without structural change, something else is happening.

Ignoring how hard audits are on teams

The human cost of an audit is part of the measurement. If teams burn out every cycle, the system is not healthy. If only a few people can answer audit questions, knowledge is too concentrated. If preparation pulls engineers away from delivery repeatedly, controls are not embedded. These signals rarely appear in dashboards. They show up in calendars, late nights, and quiet resentment. Ignoring them guarantees future failure.

Measuring policies instead of behavior

Counting policies is easy. Measuring behavior is harder. Many programs report success because documentation exists. Policies are approved. Training is completed. Acknowledgements are logged. None of that proves controls are followed. Behavior shows up in logs, workflows, and enforcement. That is what auditors ultimately care about, even if they do not phrase it that way.

Failing to connect audits to vendor reality

Enterprise audits do not stop at internal systems. Vendors, data providers, and partners expand the risk surface. Measuring audit success without including third-party behavior gives a false sense of control. Programs that mature start treating vendor governance as part of audit measurement, not a separate exercise. This is where structured vendor reviews and repeatable checklists become critical to understanding real exposure.

Missing the long view

The biggest mistake is treating audits as isolated events. Audit success should compound. Preparation should get easier. Questions should narrow. Trust should increase. If each cycle feels like starting over, measurement is failing even if reports look clean.

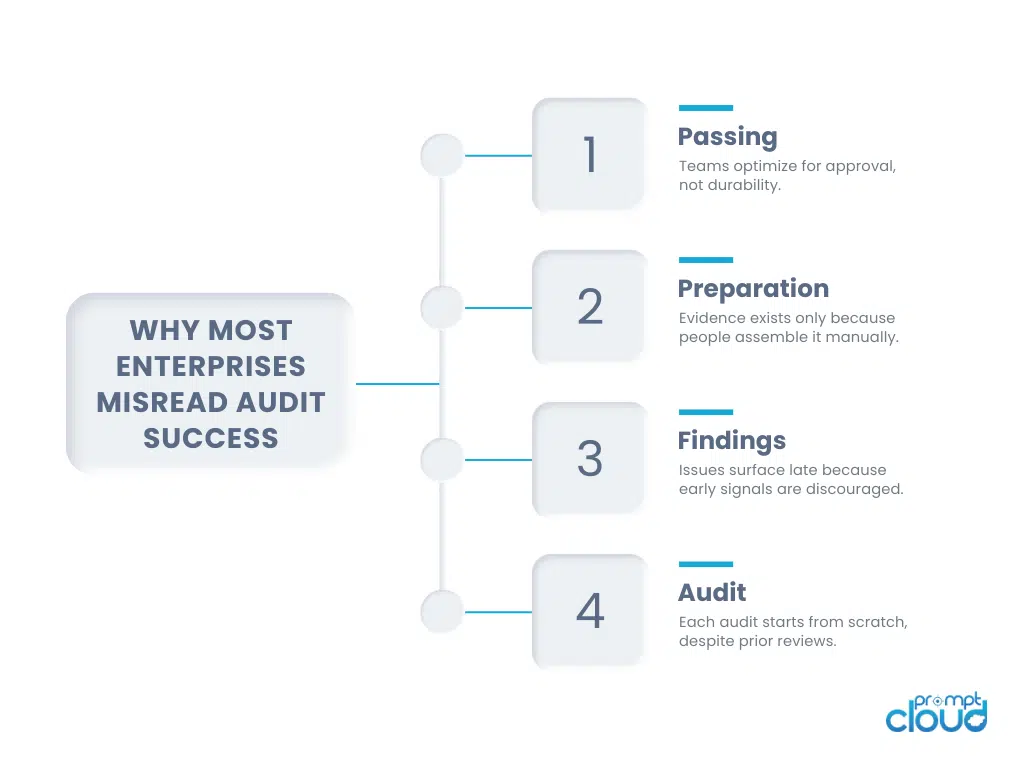

Figure 2: The common patterns that cause enterprises to misinterpret audit outcomes as success.

When Audit Success Becomes a Capability

The strongest signal of enterprise audit success is not what happens during the audit window. It is what happens when nobody is watching. When controls are embedded, teams stop preparing for audits and start operating within them. Evidence exists because systems generate it. Decisions are explainable because governance shaped them earlier. Reviews feel routine because nothing has to be reconstructed.

That is the shift most enterprises underestimate. Audit programs fail when they are treated as compliance theater. They succeed when they behave like infrastructure. Quiet. Predictable. Boring in the best way.

This is why measuring success through compliance case studies works better than relying on pass rates or reports. Case studies capture motion. They show whether controls hold when scope changes, when vendors rotate, when data volumes grow, and when teams move fast. They also surface trust signals that formal metrics miss. Fewer follow-up questions. Shorter diligence cycles. Less internal resistance. Less fear of scrutiny.

Those signals compound. Strong audit programs reduce friction across the business. They make growth safer. They make regulatory conversations calmer. They give leadership confidence without demanding heroics from teams. That is what regulatory success looks like in practice.

For a regulator-backed perspective on measuring governance maturity and compliance effectiveness over time, refer to: UK National Audit Office on measuring regulatory effectiveness and assurance.


FAQs

What is a compliance case study in enterprise audits?

It is a real-world account of how controls behave during audits, changes, and operational pressure. It shows maturity over time, not just a single outcome.

How do you measure audit success beyond passing?

By tracking preparation effort, follow-up volume, evidence retrieval speed, and trust signals across cycles. Ease and predictability matter more than clean reports.

Why do audits feel harder as enterprises scale?

Because controls are often documented but not embedded. As systems and vendors grow, manual governance breaks down.

Can audit programs still have findings and be successful?

Yes. Mature programs expect findings. The difference is that issues are scoped, documented, and resolved without disruption.

How do vendors affect enterprise audit success?

Vendors expand the risk surface. If they are not governed consistently, internal audit strength is undermined no matter how good internal controls are.