How to Make a Web Crawler in Python (Step-by-Step Guide)

This guide shows how to make a web crawler in Python using Scrapy, from a basic spider to structured data extraction with pagination. More importantly, it explains why most crawlers fail in production, including silent data quality issues, selector breakage, pagination drift, and anti-bot escalation.

A production-ready crawler requires more than code. It needs a system with layers for discovery, extraction, validation, and monitoring. A decision framework at the end helps you identify when DIY crawlers become unreliable and costly to maintain.

Most tutorials on how to make a web crawler stop at a basic script. They show you how to crawl a page, extract a few fields, and print the output. It works on day one. Then it breaks.

The reason is that real-world web data is not static:

- Pages change structure

- JavaScript loads content dynamically

- Anti-bot systems block requests

- Data formats shift across runs

So the real question is not just how to make a web crawler. It is how to build one that continues working after the first run. This is where most teams fail.

They build crawlers as scripts. But in reality, a crawler is just one layer of a larger data pipeline. Reliable web data systems need several layers: acquisition, structuring, validation, and monitoring.

Web Crawler vs Web Scraper: What Actually Matters in Real Systems

Most explanations stop at definitions. That is not useful. The real distinction only shows up when you try to build something that runs continuously, not just once.

The Oversimplified Version

- A web crawler discovers URLs

- A web scraper extracts data

Technically correct. Practically incomplete. In real systems, they are not separate tools. They are interdependent layers in a data pipeline.

The System-Level View

| Layer | Role | What It Handles | Typical Breakpoint |
| --- | --- | --- | --- |
| Crawler | Discovery | Finds pages, builds URL graph | Missed pages, crawl loops |
| Fetcher | Retrieval | Sends requests, manages headers, retries | Blocks, rate limits |
| Scraper (Parser) | Extraction | Pulls structured data from HTML/DOM | Selector failures |
| Normalizer | Structuring | Cleans and standardizes fields | Schema inconsistencies |
| Delivery Layer | Output | API, database, files | Data gaps, duplication |

A crawler without extraction is just mapping URLs. A scraper without crawling is blind to new data.

What Changes at Scale

At small scale:

- URLs are predefined

- Selectors are hardcoded

- Failures are visible

At scale:

- New pages appear continuously

- Page structures change silently

- Downstream systems depend on consistency

This is where the distinction becomes operational.

Mental Model Shift

Most teams think:

“We need a scraper”

What they actually need:

“A system that continuously discovers, extracts, validates, and delivers data”

This is a pipeline problem, not a script problem.

Where Teams Misallocate Effort

| Mistake | What They Focus On | What Actually Breaks |
| --- | --- | --- |
| Over-invest in crawling | Speed, concurrency | Data quality downstream |
| Hardcoded extraction | Static selectors | Breaks on layout changes |
| No change detection | Assume stability | Silent data corruption |
| No validation | Trust raw output | Incorrect data enters systems |

Most failures do not happen in crawling. They happen in extraction and data consistency.

Example Failure Scenario

- Crawler continues to run without issues

- Scraper misses a structural change (for example, price format)

- Pipeline still delivers output

- Dashboards reflect incorrect data

No alerts. No errors. Just incorrect decisions.

The Correct Way to Think About It

Instead of separating crawler and scraper, think in terms of system reliability:

| Stage | Key Question |
| --- | --- |
| Discovery | Are all relevant pages being captured? |
| Extraction | Are the right fields being extracted correctly? |
| Validation | Is the data complete and accurate? |
| Delivery | Is the output usable downstream? |

Bottom Line

The crawler ensures coverage. The scraper ensures accuracy. The pipeline ensures reliability. The real decision is not crawler versus scraper. It is how these components work together under continuous change.

How to Make a Web Crawler Using Python

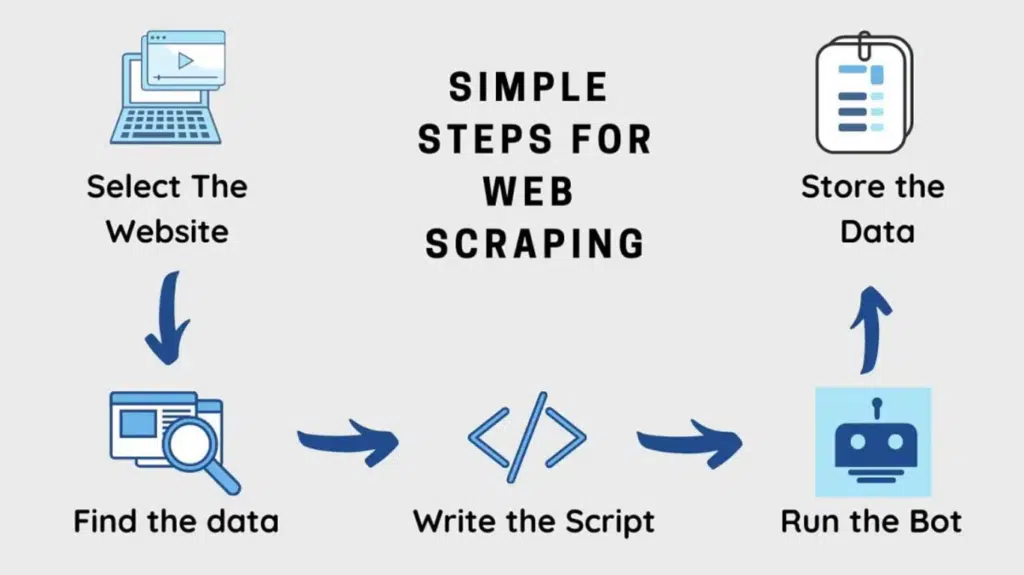

If your goal is to learn the mechanics, a basic crawler is enough. If your goal is to collect usable web data, you need to make a few correct decisions before you write code.

Choose the Right Build Path First

There is no single best way to make a web crawler in Python. The right choice depends on site complexity, crawl depth, and how often the data needs to be refreshed.

| Approach | Best For | Weakness |
| --- | --- | --- |
| Requests + BeautifulSoup | Small static sites, one-off jobs | Weak for multi-page crawling and maintenance |
| Scrapy | Structured crawling, pagination, repeatable extraction | Requires some setup and framework familiarity |
| Playwright or Selenium | JavaScript-heavy pages, rendered content | Slower, heavier, costlier to run |

For this guide, Scrapy is the right choice. It gives you URL discovery, parsing, request handling, and output pipelines in one framework. More importantly, it forces cleaner structure than an ad hoc script.

Step 1: Install Scrapy

Install Scrapy:

    pip install scrapy

Create a new project:

    scrapy startproject crawler_project
    cd crawler_project

This gives you a base project with separate files for spiders, settings, and pipelines.

Step 2: Create a Spider

Inside the spiders folder (crawler_project/spiders/ relative to the project root), create a file called example_spider.py:

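    import scrapy


    class ExampleSpider(scrapy.Spider):
        name = "example_crawler"
        # Keep followed links on this domain so the crawl does not wander off-site.
        allowed_domains = ["example.com"]
        start_urls = ["https://example.com"]

        def parse(self, response):
            # Emit one record per fetched page.
            yield {
                "url": response.url,
                "title": response.css("title::text").get(),
            }

            # Queue every link on the page; Scrapy filters duplicate requests.
            for href in response.css("a::attr(href)").getall():
                yield response.follow(href, callback=self.parse)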

This does three things:

- Starts from an initial URL

- Extracts a simple field from the page

- Follows links to continue crawling

That is the minimum viable crawler.

What This Code Is Actually Doing

| Component | Purpose |
| --- | --- |
| name | Unique spider identifier |
| start_urls | Crawl entry point |
| parse() | Default callback for each fetched page |
| response.css() | Selects elements from the page |
| response.follow() | Follows discovered links |

This is where crawler and scraper behavior start to overlap. The spider discovers pages and extracts data in the same flow.

Step 3: Run the Crawler

From the project root, run:

    scrapy crawl example_crawler -o output.json

This will:

- start crawling from the seed URL

- follow discovered links

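- save extracted output into output.json (`-o` appends to an existing file, which can leave a JSON file invalid across reruns; recent Scrapy versions accept `-O` to overwrite instead)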

At this point, you have a working crawler. But it is still generic and too loose for real extraction.

Step 4: Extract Specific Data Instead of Just Titles

A crawler becomes useful only when the output is structured around a use case.

For example, if you are crawling listing pages:

    import scrapy


    class ProductSpider(scrapy.Spider):
        name = "product_crawler"
        start_urls = ["https://example.com/products"]

        def parse(self, response):
            # Each product card on the listing page becomes one record.
            for product in response.css("div.product-card"):
                yield {
                    "name": product.css("h2::text").get(),
                    "price": product.css(".price::text").get(),
                    "product_url": product.css("a::attr(href)").get(),
                }

            # Follow the "next" link until pagination runs out.
            next_page = response.css("a.next::attr(href)").get()
            if next_page:
                yield response.follow(next_page, callback=self.parse)

Now the crawler is doing something closer to a real use case:

- finding repeated entities on a page

- extracting defined fields

- handling pagination

This is the point where most tutorials stop. That is also where most problems begin.

What This Still Does Not Solve

Even if this crawler runs successfully, it is still fragile.

It can break when:

- the website changes class names

- pagination logic changes

- fields become optional

- prices appear in multiple formats

- the site starts rate-limiting requests

A script that runs is not the same thing as a crawler you can rely on.

A Better Way to Think About This Section

What you have built so far is not a production crawler. It is a functional prototype. That distinction matters.

| Stage | What You Have |
| --- | --- |
| Basic script | One page, one output |
| Functional crawler | Multiple pages, structured extraction |
| Reliable crawler system | Monitoring, validation, retries, schema control |

Most teams assume the second stage is enough. It usually is not.

When This Approach Works Well

Scrapy is a strong fit when:

- the target site is mostly server-rendered

- pagination is predictable

- the crawl path is link-driven

- the required fields are visible in HTML

- the data does not require browser simulation

If those conditions hold, Scrapy gives a clean path from crawl logic to repeatable output. If they do not, forcing Scrapy alone can waste time.

Why Most Web Crawlers Break in Production

A crawler usually does not fail the way people expect.

It does not always crash. It often keeps running and returns bad data.

That is the real problem.

A broken crawler is obvious. A crawler that runs successfully while extracting incomplete, stale, or misformatted data is far more dangerous.

The Core Mistake

Most teams treat crawling as a coding task.

In reality, it is a maintenance problem.

Getting the first version working is easy. Keeping it accurate across changing page structures, pagination rules, and field formats is where the effort compounds.

Where Breakage Actually Happens

| Failure Point | What Changes | What Happens |
| --- | --- | --- |
| HTML structure | CSS classes, nesting, element order | Selectors stop matching |
| Pagination logic | Next-page links, lazy loading, infinite scroll | Crawl depth drops |
| Field formatting | Prices, dates, availability labels | Output becomes inconsistent |
| Site behavior | Rate limits, IP throttling, bot checks | Requests fail or degrade |
| Content rendering | Data shifts behind JavaScript | Static requests miss fields |
| URL patterns | Canonicals, redirects, query parameters | Duplicate or missed pages |

Most of these failures do not look dramatic in logs. They show up later as bad records, missing coverage, or strange reporting gaps.
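The URL-pattern row in the table above is one of the easier ones to guard against. The following is a minimal sketch, using only the standard library, of normalizing URLs before deduplication. The `TRACKING_PARAMS` set and the choice to strip trailing slashes are assumptions for illustration; some sites treat `/products` and `/products/` as different pages.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that do not change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref"}


def canonicalize(url: str) -> str:
    """Return a normalized form of `url` suitable for duplicate detection."""
    parts = urlsplit(url)
    # Keep only meaningful query parameters, sorted for a stable order.
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    )
    # Assumption: a trailing slash does not identify a different page.
    path = parts.path.rstrip("/") or "/"
    # Lowercase scheme and host, and drop the fragment entirely.
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), "")
    )
```

Two URLs that differ only in tracking parameters, host casing, or fragment then map to the same key, so the crawler can skip the second one.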

Silent Failures Are the Default

This is what a production failure often looks like:

- crawl job completes successfully

- row count drops 28 percent

- price field fill rate falls from 97 percent to 61 percent

- no alert is triggered

- downstream team notices the issue days later

That is why success logs are not enough. A crawler needs quality checks, not just execution logs.
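One way to catch the scenario above is to compare each run against the previous one. This is a rough sketch, not a full monitoring setup: records are assumed to be plain dictionaries, and the 10 percent and 5 percent thresholds are illustrative defaults you would tune per source.

```python
def fill_rate(records, field):
    """Share of records where `field` is present and non-empty."""
    if not records:
        return 0.0
    filled = sum(1 for record in records if record.get(field) not in (None, ""))
    return filled / len(records)


def compare_runs(previous, current, field, max_row_drop=0.10, max_fill_drop=0.05):
    """Return a list of alert messages; an empty list means the run looks healthy."""
    alerts = []
    if previous:
        # Relative drop in the number of records between runs.
        row_drop = 1 - len(current) / len(previous)
        if row_drop > max_row_drop:
            alerts.append(f"row count dropped {row_drop:.0%}")
    # Absolute drop in the share of records that carry the field.
    fill_drop = fill_rate(previous, field) - fill_rate(current, field)
    if fill_drop > max_fill_drop:
        alerts.append(f"{field} fill rate dropped {fill_drop:.0%}")
    return alerts
```

Wiring the returned alerts into email, chat, or a pager is left out here; the point is that the check runs after every crawl, even when the job itself reports success.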

The Four Breakpoints That Matter Most

1. Selectors Break Quietly

A site redesign does not need to be dramatic to break extraction.

A class name changes. A container moves one level deeper. A field appears in a different block for mobile or regional templates. Your crawler still fetches the page, but extraction quality collapses.

This is why hardcoded selectors are fine for prototypes and weak for long-running systems.
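A common mitigation is to try several selectors in order and record which one matched. The sketch below is framework-agnostic: `select` is any callable that takes a selector string and returns text or `None`. The selectors in `PRICE_SELECTORS` are hypothetical examples, not selectors from a real site.

```python
def extract_with_fallbacks(select, selectors):
    """Try each selector in order; return (value, selector_used) or (None, None)."""
    for selector in selectors:
        value = select(selector)
        if value and value.strip():
            return value.strip(), selector
    return None, None


# Hypothetical selectors for a price field, most preferred first.
PRICE_SELECTORS = [
    ".price::text",
    "span.product-price::text",
    "[itemprop=price]::attr(content)",
]
```

Inside a Scrapy callback, `select` could be something like `lambda css: product.css(css).get()`. Logging the selector that matched gives an early signal: if the first choice stops matching across a whole run, the layout has probably changed even though values are still coming through.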

2. Pagination and Discovery Drift

A crawler can keep extracting perfectly from the pages it finds while missing a large part of the site.

This happens when:

- page numbers become cursor-based

- listings load only after interaction

- category structures change

- internal linking patterns shift

Now you have an extraction system that is accurate on incomplete coverage. That produces false confidence.
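Coverage needs its own check, independent of extraction quality. One option, assuming the site publishes an XML sitemap in the standard sitemaps.org format, is to compare the URLs the crawler reached against the URLs the sitemap lists. This is a sketch of that comparison; fetching the sitemap is left out.

```python
import xml.etree.ElementTree as ET

# Namespace used by the standard sitemap protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> set:
    """Extract every <loc> value from a <urlset> sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def coverage_report(sitemap_xml, crawled_urls):
    """Return (coverage ratio, sorted list of sitemap URLs the crawl missed)."""
    expected = sitemap_urls(sitemap_xml)
    missing = expected - set(crawled_urls)
    coverage = 1 - len(missing) / len(expected) if expected else 0.0
    return coverage, sorted(missing)
```

A sitemap is not always complete or current, so treat the ratio as a trend to watch rather than an exact measure.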

3. Data Format Drift

Even when fields still exist, their structure changes.

Examples:

- price becomes ₹1,299 on one page and 1,299 INR on another

- availability changes from In Stock to Only 2 left

- review count becomes embedded inside a different label

- dates change from relative text to full timestamps

The crawler still returns output, but downstream systems now receive inconsistent data types and values.
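Normalizing at extraction time keeps this drift out of downstream systems. Below is a minimal sketch that handles the two price formats mentioned above. The `CURRENCY_SYMBOLS` mapping is a hypothetical starting point, and the parser assumes commas are thousands separators, which does not hold for formats such as `1.299,00`.

```python
import re
from decimal import Decimal

# Hypothetical symbol-to-code mapping; extend it for the currencies you see.
CURRENCY_SYMBOLS = {"₹": "INR", "$": "USD", "€": "EUR"}


def parse_price(raw: str):
    """Return (amount, currency_code) or None if no number is found."""
    text = raw.strip()
    currency = None
    for symbol, code in CURRENCY_SYMBOLS.items():
        if symbol in text:
            currency = code
    # An explicit three-letter code such as "INR" takes precedence over a symbol.
    code_match = re.search(r"\b([A-Z]{3})\b", text)
    if code_match:
        currency = code_match.group(1)
    number = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    if not number:
        return None
    # Assumption: commas are thousands separators.
    return Decimal(number.group(0).replace(",", "")), currency
```

With this in place, `₹1,299` and `1,299 INR` both produce the same amount and currency code, and a value with no number in it returns `None` so it can be counted as missing instead of passed through as text.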

4. Anti-Bot Escalation

This is where many DIY crawlers hit a wall.

A site may allow low-frequency crawling at first, then start:

- rate limiting requests

- blocking repeated headers

- challenging browser fingerprints

- returning incomplete HTML to automated traffic

The crawler looks unstable, but the actual issue is that the site behavior has changed based on detection.
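Polite request settings will not get around bot detection, but they lower the odds of triggering rate limits in the first place. The fragment below shows Scrapy settings that control this behavior. The setting names are Scrapy's own; the values and the user agent string are assumptions to adjust per site.

```python
# settings.py (fragment)

ROBOTSTXT_OBEY = True                # honor the site's robots.txt rules
DOWNLOAD_DELAY = 1.0                 # seconds between requests to the same site (assumed value)
CONCURRENT_REQUESTS_PER_DOMAIN = 2   # cap parallel requests per domain (assumed value)

# AutoThrottle adjusts the delay based on how quickly the server responds.
AUTOTHROTTLE_ENABLED = True
AUTOTHROTTLE_START_DELAY = 1.0
AUTOTHROTTLE_MAX_DELAY = 30.0

# Hypothetical identity; use a real contact URL so site owners can reach you.
USER_AGENT = "example-crawler (+https://example.com/contact)"
```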

What Working Should Actually Mean

A crawler is not working because it returned JSON. It is working only if these conditions hold:

| Metric | What Good Looks Like |
| --- | --- |
| Coverage | Expected pages are being discovered |
| Freshness | Data is updated within the required window |
| Completeness | Required fields remain populated |
| Consistency | Formats stay stable across records |
| Accuracy | Extracted values match source content |

This is the line most tutorials miss. They measure execution. Production systems measure data quality.

When This Breaks Faster Than Expected

Web crawlers tend to break faster when:

- the site is JavaScript-heavy

- the source changes layout frequently

- the business needs daily or hourly refreshes

- multiple sites are being crawled at once

- downstream teams depend on stable schemas

That last point matters most. The moment analytics, pricing, or monitoring systems depend on crawler output, fragility becomes expensive.

Sites increasingly use bot detection and access control mechanisms. Crawlers that ignore crawl policies or send aggressive request patterns are more likely to be blocked or throttled.

Google's crawler guidelines, published on Google Search Central, outline how automated systems are expected to behave and respect site controls.

Comparison: Prototype vs Production Reality

| Dimension | Prototype Crawler | Production Crawler |
| --- | --- | --- |
| Goal | Prove extraction works | Deliver stable data continuously |
| Monitoring | Manual checks | Automated alerts |
| Extraction logic | Hardcoded selectors | Maintained and validated |
| Failure handling | Retry and rerun | Diagnose, alert, recover |
| Success criteria | Job finished | Data quality stayed intact |

That is the transition point where “how to make a web crawler” becomes a systems question, not just a Python question.

Web Crawler Architecture: What You Need Beyond the Spider

The spider is the smallest part of the system.

Most guides stop at the spider because it is visible and easy to implement. But a reliable crawler is not a single script. It is a system with defined layers, each handling a different failure mode.

The Minimum Architecture for a Reliable Crawler

At a system level, a working crawler setup looks like this:

| Layer | Purpose | What It Solves |
| --- | --- | --- |
| URL Queue | Manages crawl targets | Prevents duplication, controls crawl scope |
| Fetch Layer | Handles requests | Retries, headers, throttling |
| Parsing Layer | Extracts data | Converts HTML into structured fields |
| Normalization | Cleans and standardizes data | Ensures consistency across records |
| Validation Layer | Checks data quality | Detects missing or invalid fields |
| Storage Layer | Saves output | Structured delivery for downstream use |
| Monitoring | Tracks performance and quality | Detects failures early |

Most DIY crawlers only implement the first three layers. That is why they break quickly.

How a Real Crawl Flow Works

A proper crawl is not linear. It is iterative and state-driven.

- Seed URLs are added to a queue

- URLs are fetched with controlled request logic

- Responses are parsed for data and new links

- New URLs are added back to the queue

- Extracted data is normalized and validated

- Clean data is stored and delivered

This loop continues until coverage conditions are met. The difference between a script and a system is not the logic. It is how the state is managed across runs.
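The queue, fetch, parse, and re-queue part of that loop can be sketched with the standard library alone. This is an illustration of the control flow, not a replacement for Scrapy's scheduler. The `fetch` argument is a caller-supplied function (an assumption made here so the loop stays independent of any HTTP library), and the crawl is limited to the seed URL's host.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class LinkParser(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(seed, fetch, max_pages=100):
    """Breadth-first crawl restricted to the seed's host.

    `fetch` maps a URL to HTML text, or returns None on failure.
    """
    host = urlsplit(seed).netloc
    queue, seen, pages = deque([seed]), {seed}, {}
    while queue and len(pages) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # a real system would record the failure for a later retry
        pages[url] = html
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop fragments before deduplicating.
            link = urljoin(url, href).split("#")[0]
            if urlsplit(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append(link)
    return pages
```

Here `seen` lives in memory, so it is lost when the process exits. Persisting it between runs is what turns this loop into the stateful system described above.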

Where Scrapy Fits in This Architecture

Scrapy handles some parts of this system out of the box:

| Capability | Covered by Scrapy |
| --- | --- |
| Request scheduling | Yes |
| Link following | Yes |
| Basic parsing | Yes |
| Output pipelines | Yes |

What it does not fully handle:

- schema validation

- change detection

- data quality monitoring

- cross-run consistency

- multi-source coordination

This is why Scrapy is a strong starting point, but not a complete solution.

The Missing Layer Most Teams Ignore: Validation

A crawler without validation is operating blind. At minimum, validation should check:

| Check Type | Example |
| --- | --- |
| Completeness | Is price missing in more than 5 percent of records |
| Format | Are prices consistently numeric |
| Range | Are values within expected bounds |
| Schema | Are all required fields present |

Without this, incorrect data flows downstream without detection.
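The four checks in the table can be sketched as two small functions: one that inspects a single record and one that looks at the batch. The required fields match the product spider from Step 4; the price bounds and the 5 percent threshold are assumed values, and prices are expected to be already normalized to plain numbers.

```python
REQUIRED_FIELDS = ("name", "price", "product_url")


def validate_record(record, price_range=(0, 1_000_000)):
    """Return a list of problems for one record; an empty list means it passed."""
    problems = []
    # Schema check: every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing {field}")
    price = record.get("price")
    if price not in (None, ""):
        try:
            value = float(price)  # format check: the price must be numeric
        except (TypeError, ValueError):
            problems.append("price not numeric")
        else:
            low, high = price_range  # range check against assumed bounds
            if not low < value <= high:
                problems.append("price out of range")
    return problems


def missing_rate_exceeded(records, field="price", threshold=0.05):
    """Completeness check: True if more than `threshold` of records lack `field`."""
    if not records:
        return True  # an empty batch is itself a failure signal
    missing = sum(1 for record in records if record.get(field) in (None, ""))
    return missing / len(records) > threshold
```

Records with problems can be dropped, quarantined, or flagged depending on how strict the downstream consumer needs to be. The batch check is the one that should raise an alert.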

State Management Is the Real Complexity

A crawler needs to remember:

- which URLs were already visited

- which pages failed

- which records were already extracted

- what changed between runs

If you ignore state, you will get:

- duplicate data

- missed updates

- inconsistent datasets

This is where most “working crawlers” start failing at scale.
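A small persistent store covers most of the list above. The sketch below uses SQLite from the standard library to remember each URL's status and a hash of its content, so a later run can tell new, changed, and unchanged pages apart. The class name, table layout, and file name are hypothetical, and hashing the whole page is a simplification: rotating ads or timestamps will register as changes.

```python
import hashlib
import sqlite3


class CrawlState:
    """Persists per-URL status and a content hash between runs."""

    def __init__(self, path="crawl_state.db"):  # hypothetical file name
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS pages ("
            "url TEXT PRIMARY KEY, status TEXT, content_hash TEXT)"
        )

    def record(self, url, html):
        """Store the page and return 'new', 'changed', or 'unchanged'."""
        digest = hashlib.sha256(html.encode("utf-8")).hexdigest()
        row = self.db.execute(
            "SELECT content_hash FROM pages WHERE url = ?", (url,)
        ).fetchone()
        self.db.execute(
            "INSERT OR REPLACE INTO pages VALUES (?, 'ok', ?)", (url, digest)
        )
        self.db.commit()
        # A URL that only ever failed has no stored hash, so treat it as new.
        if row is None or row[0] is None:
            return "new"
        return "unchanged" if row[0] == digest else "changed"

    def mark_failed(self, url):
        """Flag a URL as failed so a later run can retry it."""
        self.db.execute(
            "INSERT OR IGNORE INTO pages (url, status) VALUES (?, 'failed')", (url,)
        )
        self.db.execute("UPDATE pages SET status = 'failed' WHERE url = ?", (url,))
        self.db.commit()

    def failed_urls(self):
        rows = self.db.execute("SELECT url FROM pages WHERE status = 'failed'")
        return [row[0] for row in rows]
```

At the start of a run, `failed_urls()` gives the retry list. During the run, only pages reported as new or changed need to be re-extracted.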

Architecture Comparison: Script vs System

| Component | Script-Based Crawler | System-Based Crawler |
| --- | --- | --- |
| URL tracking | In-memory or none | Persistent queue |
| Retry logic | Basic or manual | Automated with backoff |
| Data validation | None | Structured checks |
| Monitoring | Logs only | Metrics + alerts |
| Data storage | Flat files | Structured storage |
| Change handling | Manual fixes | Designed for updates |

The difference is not complexity for its own sake. It is resilience under change.
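As one concrete example from the table, "automated with backoff" can be as small as the helper below. It is a generic sketch rather than Scrapy's built-in retry middleware: `func` is any zero-argument callable, the delay doubles after each failure up to a cap, and random jitter is added so parallel workers do not retry in lockstep. The default values are assumptions.

```python
import random
import time


def retry_with_backoff(func, attempts=4, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call `func()` until it succeeds or `attempts` tries have been used.

    Waits base_delay * 2**n seconds (capped at max_delay) plus jitter between tries.
    """
    for attempt in range(attempts):
        try:
            return func()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
```

In real use you would catch only the errors worth retrying, such as timeouts or HTTP 429 and 5xx responses, rather than every exception. The `sleep` parameter exists so the wait can be replaced in tests.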

When You Need This Architecture

You do not need full architecture for every use case.

You do need it when:

- data is collected on a schedule (daily or more frequent)

- multiple pages or categories are involved

- data feeds analytics, pricing, or business decisions

- multiple sources are being crawled

- data consistency matters over time

If those conditions apply, a script will not hold.

When to Scale Beyond DIY Web Crawlers

Building your own crawler works. For a while. The problem is not getting data. The problem is keeping data reliable as complexity increases. Most teams do not decide to scale beyond DIY. They are forced into it after repeated failures.

When DIY Crawlers Still Make Sense

There are clear scenarios where building your own crawler is the right choice.

| Scenario | Why DIY Works |
| --- | --- |
| One-time data extraction | No need for long-term maintenance |
| Small number of pages | Low crawl complexity |
| Static websites | Minimal structural changes |
| Internal experimentation | Speed matters more than reliability |
| Non-critical use cases | Data errors have low impact |

If your use case fits this, a Scrapy-based setup is enough.

Where DIY Starts Breaking Down

As soon as you move beyond basic use cases, complexity increases non-linearly.

| Trigger | What Changes | Impact |
| --- | --- | --- |
| More websites | Different structures, rules | More maintenance overhead |
| Higher frequency | Daily or hourly crawling | Stability issues surface |
| Larger datasets | Thousands of pages | Performance bottlenecks |
| Business dependency | Data feeds decisions | Accuracy becomes critical |
| Dynamic content | JS-rendered pages | Requires browser automation |

At this stage, the cost is no longer just engineering time. It becomes an operational risk.

The Hidden Cost of DIY Crawlers

Most teams underestimate the ongoing cost.

| Cost Area | What It Looks Like |
| --- | --- |
| Maintenance | Fixing broken selectors weekly |
| Monitoring | Manually checking outputs |
| Debugging | Investigating missing or incorrect data |
| Infra | Managing proxies, retries, scaling |
| Rework | Rebuilding pipelines when requirements change |

The crawler itself is not expensive. Keeping it reliable is.

Comparison: DIY vs Managed Approach

| Dimension | DIY Crawlers | Managed Data Pipeline |
| --- | --- | --- |
| Initial setup | Low to moderate | Minimal |
| Ongoing maintenance | High | Handled externally |
| Data reliability | Variable | Consistent |
| Scalability | Limited | Designed for scale |
| Monitoring | Manual | Automated |
| Time to value | Slower over time | Faster |

The decision is not technical. It is economic.

The Inflection Point

Teams usually hit a breaking point when:

- engineering time spent on maintenance exceeds time spent using data

- data inconsistencies start affecting reporting or decision-making

- crawl coverage becomes unpredictable

- multiple stakeholders depend on the same dataset

At this point, continuing with DIY becomes inefficient.

PromptCloud operates managed web data pipelines that handle infrastructure, anti-bot systems, schema validation, and structured dataset delivery. This removes the operational overhead that makes DIY crawlers unreliable at scale.

What Changes After This Point

The focus shifts from:

“Can we extract this data?”

to

“Can we trust and maintain this data continuously?”

That shift requires:

- structured pipelines

- validation layers

- monitoring systems

- scalable infrastructure

These are not incremental upgrades. They are system-level changes.

Practical Decision Framework

Use this to decide:

| Question | If Yes |
| --- | --- |
| Is the data used regularly (daily or more)? | Move beyond DIY |
| Does the data feed business decisions? | Move beyond DIY |
| Are multiple sites involved? | Move beyond DIY |
| Are you fixing crawler issues frequently? | Move beyond DIY |
| Do you need consistent schemas over time? | Move beyond DIY |

If you answered yes to three or more, DIY is likely already costing more than it should.

Should You Build a Web Crawler or Not

Building a web crawler is straightforward. Keeping it useful is not.

If your goal is learning or running a one-time extraction, a Python-based crawler using Scrapy is enough. You get control, flexibility, and a clear understanding of how web data flows from source to output.

But that model breaks the moment requirements shift from “get some data” to “depend on this data.”

The transition is subtle. At first, the crawler works. Then:

- selectors start breaking

- data formats become inconsistent

- coverage drops without visibility

- maintenance effort increases week over week

This is where most teams miscalculate. They treat crawling as a build problem, when it is actually a reliability and maintenance problem.

Related Reading

- For real-world applications in retail and pricing, see web scraping use cases in ecommerce

- To understand infrastructure cost tradeoffs, refer to AWS EC2 on demand vs reserved instance price calculator

- For monitoring use cases, read keeping track of media mentions using web crawling

- To see how this feeds analytics systems, check decoding digital shelf analytics and the data powering it

Stop Maintaining Crawlers. Start Receiving Structured Data

Get structured, schema-ready web data delivered to your exact specifications, across any source, at whatever cadence your use case demands.

PromptCloud delivers structured web data pipelines that handle crawling, extraction, validation, and delivery without ongoing engineering effort.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

FAQs

1. What is the difference between a web crawler and a web spider?

A web crawler and web spider refer to the same concept. Both are automated programs that browse websites by following links and discovering new pages. The term “spider” is just older terminology.

2. Can I build a web crawler without using frameworks like Scrapy?

Yes, you can use libraries like Requests and BeautifulSoup. However, without a framework, you will need to manually handle link discovery, retries, throttling, and output pipelines, which becomes difficult as scale increases.

3. How do web crawlers handle JavaScript-heavy websites?

Crawlers that only download raw HTML cannot see content that JavaScript renders in the browser. For such cases, tools like Playwright or Selenium are used to simulate a browser environment and render the page before extraction.

4. Is web crawling legal?

Web crawling is generally legal when done responsibly and in compliance with a website’s terms of service and robots.txt guidelines. However, scraping restricted or sensitive data without permission can lead to legal risks depending on jurisdiction.

5. Why does my web crawler return incomplete or inconsistent data?

This usually happens due to changes in website structure, dynamic content loading, pagination issues, or inconsistent data formats. Without validation and monitoring, these issues often go unnoticed in crawler outputs.