Big Data Democratization via Web Scraping

If we had to put the democratization of data in line with the classroom definition of democracy, it would read: data by the people, for the people, of the people. Makes a lot of sense, doesn’t it? It resonates with the general feeling we have these days about easy access to data for our daily tasks, thanks to the internet revolution and, now, social media.

Big Data web Crawling

By the people:

Most public data on the web consists of the sentiments, analyses and other information contributed by some group of users.

Of the people:

Although the “of” here does not literally mean that the data is owned by anyone, all such data on the internet relates either to the user group itself or to its views on things.

For the people:

Most of this data is presented through channels such as social media and news sites for public benefit, be it travel tips, daily news feeds or product price comparisons.

Essentially, data democratization has come to mean that, by leveraging cloud computing, the mostly user-generated data on the internet has become accessible to businesses in every industry, big or small, for their own internal use (commercial or not). This democratization has been put to use for unearthing hidden patterns in large datasets. Use cases have evolved with the consumer internet landscape, and Big Data is now being used in ways that were quite unanticipated.

With respect to democratization, we’ve also heard enough about how data analytics is moving beyond the data analysts within companies and becoming available even to people who are not tech-savvy. But did anyone mention the DaaS providers who aid in the very first phase, data acquisition? Data scraping or web crawling (whatever your lingo is) has become an inseparable part of data democratization, especially at large scale. The first step in putting public data to use is acquiring it, which is where setting up web crawlers internally or partnering with DaaS providers comes into play. This blog guides you towards making a choice. Companies do not, and should not, always crunch all the data on the web. Certain channels are of more interest to the community than the rest, and therein lies the barrier: identifying the sources with higher ROI and acquiring their data in a machine-readable format.

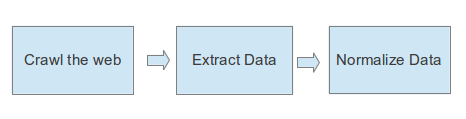

DaaS providers usually help with the entire data acquisition pipeline, starting from picking the right sources and continuing through crawl, extraction, dedup and data normalization based on specific requirements. Once the data has been acquired, it’s most likely published on another channel. This network effect bolsters the democracy.
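To make the crawl, extraction, dedup and normalization stages concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions, not how any particular DaaS provider implements its pipeline: the `PAGES` dictionary stands in for real HTTP fetching, and the function names, record fields and example URLs are all hypothetical. The sketch also normalizes before deduplicating, which is a choice made here so that records differing only in whitespace or case collapse into one.

```python
import hashlib
import re
from html.parser import HTMLParser

# Hypothetical in-memory "web": URL -> raw HTML.
# A real crawler would fetch these pages over HTTP instead.
PAGES = {
    "https://example.com/a": "<html><title> Cheap  Flights </title><body>Price: $120</body></html>",
    "https://example.com/b": "<html><title>Cheap Flights</title><body>Price: $120</body></html>",
    "https://example.com/c": "<html><title>Hotel Deals</title><body>Price: $80</body></html>",
}


class TitleParser(HTMLParser):
    """Collects the text found inside the <title> element."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self.in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def crawl(urls):
    """Crawl stage: yield (url, html) pairs from the stubbed page store."""
    for url in urls:
        yield url, PAGES[url]


def extract(url, html):
    """Extraction stage: pull the raw title and the first dollar price."""
    parser = TitleParser()
    parser.feed(html)
    match = re.search(r"\$(\d+(?:\.\d+)?)", html)
    return {"url": url, "title": parser.title, "price": match.group(1) if match else None}


def normalize(record):
    """Normalization stage: collapse whitespace, lowercase, parse the price."""
    title = " ".join(record["title"].split()).lower()
    price = float(record["price"]) if record["price"] is not None else None
    return {"url": record["url"], "title": title, "price_usd": price}


def dedup(records):
    """Dedup stage: keep the first record seen for each (title, price) pair."""
    seen = set()
    for record in records:
        key = hashlib.sha256(f"{record['title']}|{record['price_usd']}".encode()).hexdigest()
        if key not in seen:
            seen.add(key)
            yield record


def run_pipeline(urls):
    """Chain the stages and return structured records."""
    extracted = (extract(url, html) for url, html in crawl(urls))
    normalized = (normalize(record) for record in extracted)
    return list(dedup(normalized))


if __name__ == "__main__":
    for row in run_pipeline(sorted(PAGES)):
        print(row)
```

Running the pipeline over the three stub pages keeps two records, because the first two pages describe the same offer once whitespace and case are normalized.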

Steps in Data Acquisition Pipeline

Note: PromptCloud only delivers structured data as per the schema provided.

So while democratization may refer to easy access to the computing resources needed to draw patterns from Big Data, it can equally mean getting the right data in the right format at the right intervals. In fact, DaaS providers have themselves used this democracy to empower it further.