Artificial Intelligence (AI) and Big Data are a few of the most popular topics of this decade. However, it was only recently that we have started seeing AI and Big Data in action. Thanks to web scraping and data extraction software. In this blog, we will be seeing Artificial Intelligence in web data extraction.

What has changed in AI and big data extraction? The ever-increasing computing power has made web data extraction feasible and efficient. Today, advanced web scraping solutions, data extraction tools can help you access data even without the technical know-how. Artificial intelligence is proving to be the right solution for aggregating huge data sets from the web, with minimal manual interference.

Artificial Intelligence vs Machine Learning

There is a stark difference between Machine Learning (ML) and Artificial Intelligence (AI). In Machine Learning, you teach the machine to perform a specific task within narrowly defined rules, along with some training examples. The training and rules are necessary for the machine learning system to achieve a level of success.

Whereas, Artificial intelligence, does the teaching itself with a minimal number of rules and loose training. It can then go on to create its own set of rules based on the exposure it gets. Thus making AI the continued learning process.

How does continuous learning happen in Artificial Intelligence? The answer is artificial neural networks. Artificial neural networks and deep learning are used in AI for speech and object recognition, image segmentation, modeling language, and human motion.

Artificial Intelligence in Web Data Extraction

The web is a giant repository where data is vast and abundant. The possibilities that come with this amount of web data can be groundbreaking. The challenge is to navigate through this unstructured pile of information and make data extracting easier. Even with the advanced web scraping technologies, data extraction is a time-consuming process. But things are about to change.

Researchers from the Massachusetts Institute of Technology recently released a paper on an Artificial Intelligence system that can extract information from the web and teach itself how to extract data on its own. The research paper introduces an information extraction system that can extract structured data from unstructured documents automatically.

To put it simply, the AI system can think like humans while looking at a document. When humans cannot find a particular piece of information in a document, we find alternative sources to fill the gap. This adds to our knowledge of the topic in question. The AI system works just like this, web scraping data on related topics and filling in the gaps in the information structure.

AI System Works on Rewards and Penalties

The working of this AI-based website data extraction system involves classifying the data with a ‘Confidence score’. This confidence score determines the probability of the classification being statistically correct and derived from the patterns in the training data. If the confidence score doesn’t meet the set threshold, the system will automatically search the web for more relevant data.

Once the adequate confidence score achieved by extracting new data from the web and integrating it with the current document, it will deem the task successful. If the confidence score is not met, the process continues until the most relevant web data extracted.

This type of learning mechanism is called ‘Reinforcement learning’ and works by the notion of learning by reward. It’s very similar to how humans learn. Since there can be a lot of uncertainty associated with the data being merged, especially where contrasting information involved. The rewards are given based on the accuracy of the information. With the training provided, the AI learns how to optimally merge different pieces of extracted data together so that the answers we get from the system is as accurate as possible.

Al’s Web Data Extraction in Action

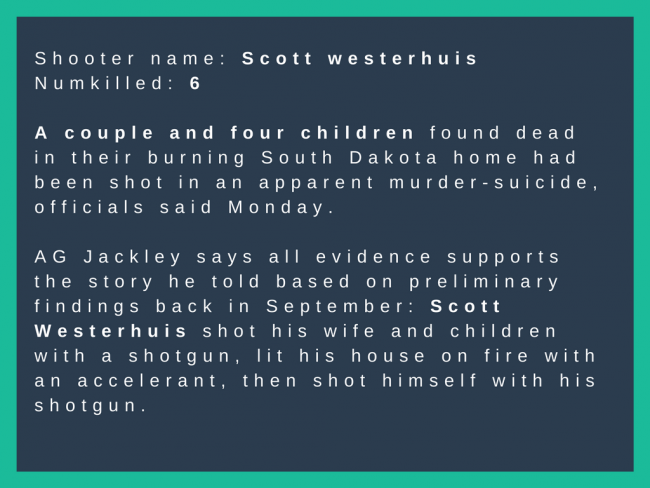

To test how well the artificial intelligence system can extract data from the web, researchers gave it a test task. The system was to analyze various data sources on mass shootings in the USA and extract the name of the shooter. The number of injured, fatalities, and location. The performance was, in fact, mind-blowing as it could pull up the accurate data the way it needed while beating conventionally taught data extraction mechanisms by more than 10 percent!

The Future of Web Scraping and Data Extraction

With the ever-increasing need for data and the challenges associated with acquiring it, AI could be what’s missing link in the equation. The research is promising and hints at a future where intelligent bots with human sight, can read, crawl and extract information. This done only to tell us the bits we need to know.

The Artificial Intelligence system could be the game-changer. An advanced system like this will not only save time but also enables us to make use of the abundance of information out there on the web. Looking at the bigger picture, this new research is only a step towards creating the truly intelligent web spider that can master web scraping. This done to solve information gaps in a fraction of time.