How Web Data Powers High-Quality Training for ChatGPT and LLMs

LLMs like ChatGPT rarely fail because of model limitations alone. More often, they fail because of poor, stale, or unstructured training data.

Web data solves this by providing:

- Real-time, continuously updating information

- Diverse, behavior-rich language patterns

- Domain-specific depth that internal datasets lack

But the advantage is not in accessing web data.

The advantage is in building reliable pipelines that convert raw web data into high-quality training datasets at scale.

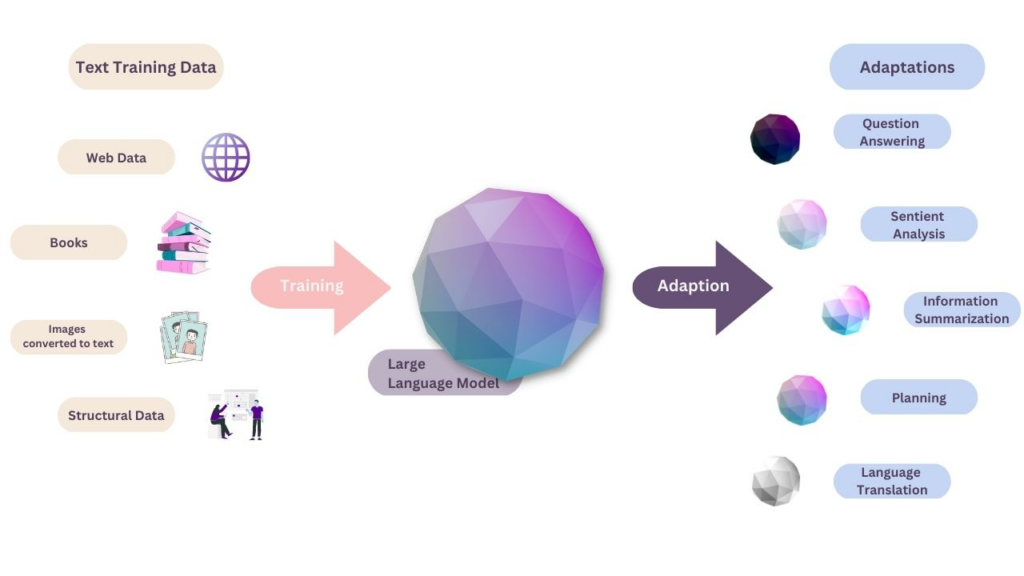

Large language models like ChatGPT are often evaluated based on model architecture and scale. In practice, performance is far more dependent on the quality and freshness of training data.

Most discussions of web data for LLM training emphasize its diversity and scale. However, the real challenge is not access to data, but whether that data remains relevant, accurate, and usable over time.

Static datasets degrade quickly. Synthetic datasets lack real-world variability. Internal datasets are limited in scope. This creates a gap between what models are capable of and how they perform in real-world applications.

Web data fills this gap by introducing continuously evolving language patterns, real-world context, and domain-specific depth. But the advantage is not in collecting web data—it is in building systems that can reliably convert raw web data into structured, high-quality training datasets at scale.

This is where web data shifts from being a dataset to becoming infrastructure.

Stop training models on inconsistent, outdated data.

Get structured, schema-ready web data delivered to your exact specifications, across any source, at whatever cadence your use case demands.

• No contracts. • No credit card required. • No scraping infrastructure to maintain.

How Web Data Improves ChatGPT Training

Web Data Improves LLM Performance Through Signal Extraction, Not Volume

Most content online frames web data as a volume advantage. More data, more coverage, better models.

That’s incorrect.

LLMs do not improve simply because they see more data. They improve because of better signal density within that data.

Raw web data is noisy. It contains duplicates, low-quality content, spam, conflicting information, and irrelevant text. If ingested directly, it degrades model performance instead of improving it.

The real value of web data comes from the ability to extract high-signal patterns from unstructured, real-world content.

Image Credit: Renaissance Rachel

This includes:

- How users phrase intent across contexts

- How terminology evolves within industries

- How sentiment, tone, and meaning shift based on usage

Without filtering, web data increases noise. With proper processing, it becomes a continuous source of training signal.
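As a rough illustration of what "proper processing" can mean at the document level, here is a minimal Python sketch of heuristic quality filtering. The three signals (word count, share of non-letter characters, share of repeated lines), their thresholds, and the function names are illustrative assumptions, not values from any specific production pipeline.

```python
import re

# Illustrative thresholds: assumptions to tune per corpus, not recommended values.
MIN_WORDS = 50
MAX_NON_ALPHA_RATIO = 0.3   # share of non-space characters that are not letters
MAX_DUP_LINE_RATIO = 0.3    # share of non-empty lines that repeat an earlier line


def quality_signals(text: str) -> dict:
    """Compute a few cheap document-level quality signals."""
    words = re.findall(r"\w+", text)
    chars = [c for c in text if not c.isspace()]
    non_alpha = sum(1 for c in chars if not c.isalpha())  # digits, symbols, punctuation
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    dup_lines = len(lines) - len(set(lines))
    return {
        "word_count": len(words),
        "non_alpha_ratio": non_alpha / len(chars) if chars else 1.0,
        "dup_line_ratio": dup_lines / len(lines) if lines else 1.0,
    }


def passes_quality_filter(text: str) -> bool:
    """Keep a document only if every signal is within its threshold."""
    s = quality_signals(text)
    return (
        s["word_count"] >= MIN_WORDS
        and s["non_alpha_ratio"] <= MAX_NON_ALPHA_RATIO
        and s["dup_line_ratio"] <= MAX_DUP_LINE_RATIO
    )
```

Usage is a single pass over a batch, e.g. `kept = [d for d in docs if passes_quality_filter(d)]`. Rule-based filters like this are cheap and transparent; teams often layer learned quality classifiers on top of them.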

The Web Data Pipeline That Powers ChatGPT Training

To convert raw web data into usable ChatGPT training data, teams need a structured pipeline. This is where most implementations fail.

The pipeline is not a single step. It is a system:

| Stage | What Happens | Failure Risk |
| --- | --- | --- |
| Collection | Data extracted from websites, forums, marketplaces, etc. | Incomplete coverage, blocked access |
| Cleaning | Removal of noise, duplicates, irrelevant content | Poor filtering reduces signal quality |
| Structuring | Converting unstructured text into usable formats | Inconsistent schemas, parsing errors |
| Validation | Ensuring accuracy, consistency, freshness | Data drift, outdated information |
| Updating | Continuous refresh of datasets | Pipeline breaks, stale data |

Each stage directly impacts the final training dataset. A failure at any layer introduces compounding errors into the model.
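One way to make a failure at any layer visible is to run each stage as a separate function and record how many records survive it. The sketch below is a minimal, hypothetical Python skeleton: the stage functions (`clean`, `structure`, `validate`) and the record fields (`url`, `text`, `fetched_at`) are assumptions for illustration, and collection is stubbed with in-memory pages rather than real HTTP requests.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Record:
    url: str
    text: str
    fetched_at: str  # ISO-8601 date string, e.g. "2024-05-01" (assumed format)


def clean(pages: Iterable[dict]) -> list[dict]:
    """Drop empty pages and repeat URLs; trim surrounding whitespace."""
    seen, out = set(), []
    for p in pages:
        text = (p.get("text") or "").strip()
        if text and p["url"] not in seen:
            seen.add(p["url"])
            out.append({**p, "text": text})
    return out


def structure(pages: list[dict]) -> list[Record]:
    """Map loosely shaped dicts onto a fixed record type."""
    return [Record(p["url"], p["text"], p.get("fetched_at", "")) for p in pages]


def validate(records: list[Record]) -> list[Record]:
    """Reject records with a non-HTTP URL or a missing fetch date."""
    return [r for r in records if r.url.startswith("http") and r.fetched_at]


def run_pipeline(pages: list[dict], stages: list[Callable]) -> tuple[list, dict]:
    """Run stages in order and count survivors after each one."""
    counts = {"collected": len(pages)}
    data = pages
    for stage in stages:
        data = stage(data)
        counts[stage.__name__] = len(data)
    return data, counts
```

Calling `run_pipeline(pages, [clean, structure, validate])` returns the final records plus per-stage counts. The updating stage would rerun this on a schedule, and a sudden drop in one stage's count is an early sign that a source or an extractor has broken.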

Where Most Teams Get It Wrong

Most teams treat web scraping as a collection problem. They optimize for:

- Number of sources

- Crawl frequency

- Data volume

But they underinvest in:

- Data validation

- Schema consistency

- Change detection

- Continuous updates

This leads to a predictable failure pattern:

| Assumption | Reality |
| --- | --- |
| More data improves models | More noise reduces accuracy |
| Scrapers scale linearly | Maintenance cost grows faster than source count |
| Data once collected remains useful | Data decays rapidly |
| If a pipeline works once, it keeps working | Websites change constantly |

This is why many LLM systems show strong early performance and then degrade over time.

Why Web Data Works Specifically for ChatGPT Training

ChatGPT and similar LLMs depend heavily on contextual understanding and language variability. Web data directly improves both.

Unlike curated datasets, web data captures:

- Conversational patterns from forums and communities

- Domain expertise from niche publications

- Real-time updates from news and evolving topics

This allows models to:

- Handle ambiguity better

- Generate responses that align with user intent

- Adapt to changing language patterns

The result is not just better answers, but more relevant and context-aware interactions.

The Strategic Insight

Web data does not improve ChatGPT because it is large.

It improves ChatGPT because it is:

- Continuously updated

- Behaviorally rich

- Contextually diverse

But this only holds true if the data is processed correctly.

Without a pipeline, web data is noise.

With a pipeline, it becomes a competitive advantage in model performance.

Challenges of Using Web Data for LLM Training (and Why Most Pipelines Break)

Web Data Looks Like an Advantage—Until You Try to Scale It

At a conceptual level, using web data for ChatGPT training sounds straightforward. The web offers unlimited, continuously updating information across domains. In practice, turning that into a reliable training dataset is where most teams fail.

The gap between data availability and data usability becomes visible only at scale.

What works for a handful of sources breaks when expanded to hundreds or thousands. Pipelines that initially deliver value begin to show cracks in the form of missing data, inconsistent formats, and silent quality degradation. This is not a tooling issue. It is a systems problem.

Challenge 1: Noise Overpowers Signal Without Strong Filtering

Web data is inherently unstructured and inconsistent. It includes duplicates, spam, outdated content, and conflicting information across sources. Without strong filtering and normalization, this noise gets embedded into training datasets.

For LLMs, this has direct consequences. Models trained on noisy data struggle with:

- Inconsistent outputs

- Lower factual accuracy

- Increased hallucination rates

The challenge is not collecting more data, but preserving signal quality as data volume increases.
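Duplicates are usually the first noise source to address. Below is a minimal Python sketch, assuming the documents fit in memory: exact duplicates are caught by hashing normalized text, and near-duplicates by comparing word-shingle sets with Jaccard similarity. The shingle size of 3 and the 0.8 threshold are illustrative assumptions. At web scale, the pairwise comparison here is typically replaced by an approximate method such as MinHash with locality-sensitive hashing, which this sketch does not implement.

```python
import hashlib
import re


def normalize_text(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles for a document."""
    words = normalize_text(text).split()
    if len(words) < k:
        return {" ".join(words)}
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    return len(a & b) / len(a | b) if a | b else 1.0


def deduplicate(docs: list[str], threshold: float = 0.8) -> list[str]:
    """Keep the first occurrence of each exact or near-duplicate document.

    Pairwise comparison is O(n^2): fine for a sketch, not for web scale.
    """
    seen_hashes = set()
    kept, kept_shingles = [], []
    for doc in docs:
        digest = hashlib.sha256(normalize_text(doc).encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            continue  # exact duplicate after normalization
        sh = shingles(doc)
        if any(jaccard(sh, prev) >= threshold for prev in kept_shingles):
            continue  # near-duplicate of a document already kept
        seen_hashes.add(digest)
        kept.append(doc)
        kept_shingles.append(sh)
    return kept
```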

Challenge 2: Legal and Compliance Risks Are Often Ignored Early

Most teams treat compliance as a later-stage concern. By the time it becomes a priority, data pipelines are already built on questionable sourcing practices.

Web data collection must account for:

- Website terms of service

- Data privacy regulations such as GDPR and CCPA

- Ethical data usage standards

Ignoring this introduces long-term risk, especially for enterprise AI applications.

For a deeper breakdown of compliance considerations, refer to Is Web Scraping Legal?
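Terms of service and privacy regulations need legal review, but one machine-readable signal can be checked in code: a site's robots.txt. The sketch below uses Python's standard-library `urllib.robotparser` (`parse()`, `can_fetch()`, and `crawl_delay()`); the user-agent string `ExampleTrainingBot` and the sample rules are hypothetical. A robots.txt allowance is not a substitute for reviewing terms of service or GDPR/CCPA obligations.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. In practice you would fetch
# https://<host>/robots.txt, e.g. with RobotFileParser.set_url() and read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Crawl-delay: 5
"""

USER_AGENT = "ExampleTrainingBot"  # hypothetical crawler name


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been retrieved."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed_urls(urls: list[str], parser: RobotFileParser) -> list[str]:
    """Filter a crawl frontier down to URLs robots.txt permits for our agent."""
    return [u for u in urls if parser.can_fetch(USER_AGENT, u)]


parser = build_parser(ROBOTS_TXT)
frontier = [
    "https://shop.example/products/123",
    "https://shop.example/account/orders",
]
print(allowed_urls(frontier, parser))   # ['https://shop.example/products/123']
print(parser.crawl_delay(USER_AGENT))   # 5
```

Running this check before URLs enter the crawl queue, rather than after collection, keeps disallowed content out of the dataset from the start.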

Challenge 3: Crawling Complexity Increases Non-Linearly

As coverage expands, crawling becomes significantly more complex. Websites differ in structure, rendering methods, anti-bot mechanisms, and update frequency.

What starts as a manageable system quickly evolves into a high-maintenance operation. Issues such as:

- Frequent DOM changes

- JavaScript-heavy content

- Rate limits and blocking

- Dynamic content rendering

create constant friction.

This is where most teams underestimate effort. The technical overhead grows faster than the value derived from the data.

For a closer look at these operational challenges, see Few Pains of Web Crawling.
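Rate limits and transient failures are commonly handled with retries that back off exponentially. Below is a minimal Python sketch, assuming a caller-supplied `fetch` function that returns an HTTP status code and a body. The function is hypothetical, and any HTTP client could sit behind it. Treating 429 and common 5xx codes as retryable is a widespread convention, and the delay values are illustrative. The aim is to respect a site's limits, not to get around its access controls.

```python
import random
import time
from typing import Callable, Optional, Tuple

RETRYABLE = {429, 500, 502, 503, 504}  # rate-limited or transient server errors


def fetch_with_backoff(
    url: str,
    fetch: Callable[[str], Tuple[int, str]],
    max_retries: int = 4,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[str]:
    """Return the body on success, or None once retries are exhausted.

    Waits base_delay * 2**attempt seconds (capped, with jitter) between attempts.
    Non-retryable statuses (e.g. 403, 404) return None immediately.
    """
    for attempt in range(max_retries + 1):
        status, body = fetch(url)
        if status == 200:
            return body
        if status not in RETRYABLE or attempt == max_retries:
            return None
        delay = min(max_delay, base_delay * 2 ** attempt)
        sleep(delay * random.uniform(0.5, 1.0))  # jitter avoids synchronized retries
    return None
```

Injecting `sleep` keeps the function testable without real waiting. In a real crawler, the same wrapper would sit behind a per-domain scheduler that also enforces any crawl delay the site requests.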

Challenge 4: Data Structure Inconsistency Breaks Downstream Systems

Even when data is successfully collected, it rarely arrives in a usable format. Different sources represent similar information in completely different ways.

Without consistent structuring:

- Training datasets become fragmented

- Schema mismatches increase preprocessing effort

- Model training pipelines slow down

This is particularly critical for ChatGPT training, where consistency across datasets directly impacts model learning quality.
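Schema mismatches usually show up as the same fact encoded differently per source. The minimal Python sketch below maps two hypothetical source formats for a product listing onto one target schema, so downstream training jobs see a single shape. The field names, price strings, date formats, and the USD-only assumption are all illustrative.

```python
from datetime import datetime
from decimal import Decimal

# Target schema (assumed): {"title": str, "price_usd": Decimal, "listed_on": "YYYY-MM-DD"}


def parse_price(raw: str) -> Decimal:
    """Accept '$1,299.00' or '1299 USD' style strings (USD only in this sketch)."""
    cleaned = raw.replace("$", "").replace("USD", "").replace(",", "").strip()
    return Decimal(cleaned)


def from_source_a(rec: dict) -> dict:
    # Hypothetical source A: {"name": ..., "price": "$1,299.00", "date": "05/01/2024"}
    return {
        "title": rec["name"].strip(),
        "price_usd": parse_price(rec["price"]),
        "listed_on": datetime.strptime(rec["date"], "%m/%d/%Y").date().isoformat(),
    }


def from_source_b(rec: dict) -> dict:
    # Hypothetical source B: {"productTitle": ..., "cost": "1299 USD", "listed": "2024-05-01T09:30:00"}
    return {
        "title": rec["productTitle"].strip(),
        "price_usd": parse_price(rec["cost"]),
        "listed_on": datetime.fromisoformat(rec["listed"]).date().isoformat(),
    }


ADAPTERS = {"source_a": from_source_a, "source_b": from_source_b}


def to_common_schema(source: str, rec: dict) -> dict:
    """Dispatch to the per-source adapter; unknown sources raise KeyError."""
    return ADAPTERS[source](rec)
```

Keeping one small adapter per source isolates layout-specific logic. When a site changes, only its adapter needs updating, and the target schema stays stable.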

Challenge 5: Data Decay Is Faster Than Most Teams Expect

Web data is not static. Content changes, pages are updated, and information becomes outdated quickly.

This introduces a hidden challenge: data decay.

| Data State | Impact on LLMs |
| --- | --- |
| Fresh data | High relevance and accuracy |
| Partially outdated data | Inconsistent responses |
| Stale data | Incorrect or misleading outputs |

Most pipelines are designed for collection, not for continuous refresh and validation. As a result, models trained on these datasets degrade over time.
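A pipeline can only refresh what it can measure. The minimal Python sketch below tags each record with one of the three states from the table above, based on the time since the record was last verified, and queues the non-fresh records for re-crawling. The 7-day and 30-day cutoffs and the `last_verified` field are assumptions. Appropriate windows differ by domain: pricing data may go stale in hours, while reference content may stay current for months.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Illustrative cutoffs (assumptions): tune per data type.
FRESH_WINDOW = timedelta(days=7)
STALE_AFTER = timedelta(days=30)


def freshness_state(last_verified: datetime, now: Optional[datetime] = None) -> str:
    """Classify a record as fresh, partially outdated, or stale by its age.

    Timestamps are expected to be timezone-aware (UTC).
    """
    if now is None:
        now = datetime.now(timezone.utc)
    age = now - last_verified
    if age <= FRESH_WINDOW:
        return "fresh"
    if age <= STALE_AFTER:
        return "partially_outdated"
    return "stale"


def refresh_queue(records: list[dict], now: Optional[datetime] = None) -> list[dict]:
    """Return records that are no longer fresh, stalest first, for re-crawling."""
    if now is None:
        now = datetime.now(timezone.utc)
    due = [r for r in records if freshness_state(r["last_verified"], now) != "fresh"]
    return sorted(due, key=lambda r: r["last_verified"])
```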

The Bigger Problem: Fragmented Data Systems

Individually, each of these challenges is manageable. Together, they create a fragmented system where:

- Data is collected but not validated

- Data is structured but not updated

- Data is refreshed but not normalized

This fragmentation leads to unreliable training datasets and unpredictable model performance.

This is also where web data intersects with broader enterprise data strategy. As highlighted in How Big Data Is Changing Enterprise IT, organizations are shifting from isolated data processes to integrated, scalable data systems.

Web data for LLM training needs to follow the same evolution.

What High-Quality ChatGPT Training Data Actually Looks Like

High-Quality Training Data Is Defined by Signal Integrity, Not Source Count

Most teams assume that more sources automatically lead to better training datasets. In reality, high-performing LLMs are trained on data that maintains consistency, relevance, and structure over time, not just breadth.

A widely cited issue in LLM performance is hallucination, which is often linked to poor-quality or outdated training data. According to research from IBM, data quality directly impacts AI model accuracy, reliability, and bias, making it one of the most critical factors in enterprise AI performance.

This shifts the focus from “how much data you have” to how reliable and usable that data is for training.

Need This at Enterprise Scale?

While building in-house web data pipelines works for small-scale experiments, enterprise AI and LLM training introduce challenges in maintaining data quality, freshness, and pipeline reliability across thousands of sources. Most enterprise teams evaluate build vs. managed data infrastructure to determine total cost of ownership.

Core Characteristics of High-Quality ChatGPT Training Data

1. Contextual Relevance Over Generic Coverage

High-quality datasets prioritize context-rich content over broad but shallow information. This includes domain-specific sources, real user interactions, and industry-relevant language patterns.

Generic datasets may help models respond, but they fail when deeper understanding or domain accuracy is required. Contextual relevance ensures that models can interpret intent, not just language.

2. Structured and Consistent Data Formats

Raw web data is inherently unstructured. Without normalization, similar data points appear in multiple formats across sources, leading to inconsistencies during training.

High-quality datasets enforce:

- Standardized schemas

- Clean text formatting

- Consistent labeling and categorization

This reduces preprocessing complexity and improves how models learn relationships between data points.
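In practice, "clean text formatting" and "standardized schemas" often come down to a small normalization step applied before records are written out. The minimal Python sketch below uses only the standard library. It unescapes HTML entities, applies Unicode NFKC normalization, drops control and format characters, collapses whitespace, and writes one JSON object per line (JSONL, a common interchange format for training data). The field names `source`, `category`, and `text` are illustrative assumptions.

```python
import html
import json
import re
import unicodedata


def clean_text(raw: str) -> str:
    """Normalize scraped text into a consistent, model-friendly form."""
    text = html.unescape(raw)                   # "&amp;" -> "&", "&nbsp;" -> U+00A0
    text = unicodedata.normalize("NFKC", text)  # fold compatibility forms (U+00A0 -> space, ligatures)
    # Drop control/format characters (category C*), keeping newlines and tabs.
    text = "".join(ch for ch in text if ch in "\n\t" or unicodedata.category(ch)[0] != "C")
    text = re.sub(r"[ \t]+", " ", text)         # collapse runs of spaces and tabs
    text = re.sub(r" ?\n ?", "\n", text)        # trim spaces around line breaks
    text = re.sub(r"\n{3,}", "\n\n", text)      # keep at most one blank line
    return text.strip()


def to_jsonl_line(source: str, category: str, raw_text: str) -> str:
    """Emit one standardized record as a single JSON line."""
    record = {
        "source": source,
        "category": category.strip().lower(),  # consistent labeling
        "text": clean_text(raw_text),
    }
    return json.dumps(record, ensure_ascii=False)
```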

3. Continuous Freshness and Update Cycles

Training data is not a one-time input. It requires continuous updates to reflect changes in language, trends, and domain knowledge.

The impact of freshness is measurable:

| Data Freshness Level | Model Outcome |
| --- | --- |
| Continuously updated | High relevance and adaptability |
| Periodically updated | Partial accuracy, delayed relevance |
| Static datasets | Rapid performance degradation |

This is especially critical for applications that rely on current information, such as market intelligence, customer interactions, and decision-support systems.

4. Noise Reduction and Signal Preservation

High-quality training data minimizes:

- Duplicate content

- Low-value or spam content

- Conflicting or misleading information

At the same time, it preserves:

- Intent signals

- Sentiment variations

- Contextual nuance

This balance is what allows ChatGPT to generate responses that are not only accurate, but also aligned with user intent.

5. Validation and Quality Assurance Layers

Data quality cannot be assumed. It must be validated continuously.

High-quality pipelines include:

- Accuracy checks across sources

- Schema validation

- Change detection mechanisms

- Human-in-the-loop sampling where required

Without validation, even well-structured datasets degrade over time.
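Two of the layers listed above, schema validation and human-in-the-loop sampling, are simple to sketch. The Python below is a minimal illustration under stated assumptions: the field specification is hypothetical, and the 2% review rate is an arbitrary example, not a recommended figure. Cross-source accuracy checks and change detection would run alongside it.

```python
import random

# Hypothetical schema: field name -> expected Python type.
SCHEMA = {"url": str, "title": str, "text": str, "fetched_at": str}


def schema_errors(record: dict) -> list[str]:
    """Return human-readable problems; an empty list means the record passes."""
    errors = []
    for field, expected in SCHEMA.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            errors.append(f"{field}: expected {expected.__name__}, got {type(record[field]).__name__}")
        elif expected is str and not record[field].strip():
            errors.append(f"{field}: empty string")
    return errors


def split_batch(records: list[dict], review_rate: float = 0.02, seed: int = 0):
    """Separate valid from invalid records and draw a random sample for human review."""
    valid, invalid = [], []
    for r in records:
        (invalid if schema_errors(r) else valid).append(r)
    rng = random.Random(seed)  # seeded so the review sample is reproducible
    k = min(len(valid), max(1, round(len(valid) * review_rate))) if valid else 0
    review_sample = rng.sample(valid, k)
    return valid, invalid, review_sample
```

Keeping the error messages, rather than just a pass/fail flag, makes it easier to see whether failures cluster on one source or one field.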

Download the AI Pipeline Troubleshooting Playbook to identify where your web data pipelines are silently breaking and impacting LLM performance.

Why DIY Web Data Pipelines Fail for LLM Training

Build vs Managed Web Data Pipelines: Where the Gap Actually Appears

At an early stage, building an in-house web data pipeline for ChatGPT training looks efficient. Engineering teams can set up crawlers, define extraction logic, and start collecting data quickly. For small datasets or limited use cases, this approach works.

The problem starts when the system needs to scale.

As the number of sources increases and update frequency rises, the pipeline begins to absorb hidden complexity. Maintenance effort grows, data quality becomes inconsistent, and reliability drops. What initially feels like a cost-saving decision turns into a long-term operational burden.

This is not because internal teams lack capability. It is because web data systems behave differently from traditional data pipelines. They operate in an environment that is constantly changing and largely outside your control.

Where DIY Pipelines Break in Practice

1. Maintenance Overhead Scales Faster Than Data Value

Every new source introduces variability in structure, format, and behavior. Websites change layouts, introduce anti-bot mechanisms, or modify content delivery patterns. Each change requires updates to extraction logic.

Over time, engineering effort shifts from building product features to maintaining scraping infrastructure.
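Layout changes often fail silently: the scraper keeps running, but a selector stops matching and a field starts coming back empty. The minimal Python sketch below shows one common guard. It compares each field's fill rate in the latest batch against a baseline and flags sharp drops. The 20-percentage-point tolerance and the field names are illustrative assumptions.

```python
def fill_rates(batch: list[dict], fields: list[str]) -> dict[str, float]:
    """Share of records in which each field is present and non-empty."""
    if not batch:
        return {f: 0.0 for f in fields}
    return {
        f: sum(1 for r in batch if r.get(f) not in (None, "", [])) / len(batch)
        for f in fields
    }


def broken_fields(
    baseline: dict[str, float], current: dict[str, float], tolerance: float = 0.2
) -> list[str]:
    """Fields whose fill rate dropped by more than `tolerance` versus the baseline."""
    return [f for f, base in baseline.items() if base - current.get(f, 0.0) > tolerance]
```

The baseline would typically come from recent healthy runs of the same source. A flagged field points to the extractor that needs updating, before incomplete records reach a training set.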

2. Data Quality Becomes Inconsistent Across Sources

Without centralized validation and normalization, data collected from multiple sources varies in format and accuracy. This inconsistency propagates into training datasets, affecting model performance.

For ChatGPT training, even small inconsistencies in data structure can lead to:

- Poor generalization

- Conflicting outputs

- Increased preprocessing overhead
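A centralized check can at least surface disagreement between sources before it reaches a training set. The minimal Python sketch below assumes that records from different sources have already been normalized to a shared entity key and shared field names (both hypothetical here). It groups values by entity and field and reports every field where sources disagree, so that conflicts can be resolved or dropped rather than trained on.

```python
from collections import defaultdict


def find_conflicts(records: list[dict], key: str, fields: list[str]) -> dict:
    """Map (entity, field) -> {source: value} wherever sources disagree.

    Assumes each record has a "source" field and hashable field values.
    """
    values = defaultdict(dict)  # (entity, field) -> {source: value}
    for rec in records:
        for field in fields:
            if field in rec:
                values[(rec[key], field)][rec["source"]] = rec[field]
    return {
        k: by_source
        for k, by_source in values.items()
        if len(set(by_source.values())) > 1  # more than one distinct value
    }
```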

3. Freshness Is Hard to Maintain at Scale

Keeping data updated is not just about re-running scrapers. It requires:

- Detecting when data has changed

- Prioritizing high-impact updates

- Avoiding redundant collection

Most DIY systems are designed for extraction, not for continuous synchronization. As a result, datasets become partially outdated without teams realizing it.
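The three requirements above can be approximated with content fingerprints. The minimal Python sketch below hashes normalized page content, compares each hash with the fingerprint stored from the previous crawl, and orders only new or changed pages by a caller-supplied priority weight. The `priority` field and the in-memory `previous` store are assumptions. This avoids re-processing and re-ingesting unchanged pages. Avoiding re-downloading them in the first place requires HTTP conditional requests (`ETag`/`If-None-Match`, `Last-Modified`/`If-Modified-Since`), which are not shown.

```python
import hashlib
import re


def fingerprint(content: str) -> str:
    """Hash content after collapsing whitespace so formatting-only edits are ignored."""
    normalized = re.sub(r"\s+", " ", content).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def plan_updates(pages: list[dict], previous: dict[str, str]) -> list[dict]:
    """Return new or changed pages, highest priority first.

    pages: [{"url": ..., "content": ..., "priority": float}] (assumed shape)
    previous: url -> fingerprint from the last crawl (updated in place)
    """
    changed = []
    for page in pages:
        fp = fingerprint(page["content"])
        if previous.get(page["url"]) != fp:
            changed.append(page)
            previous[page["url"]] = fp
    return sorted(changed, key=lambda p: p.get("priority", 0.0), reverse=True)
```

Whitespace-only normalization is deliberately conservative. Real pages often carry volatile boilerplate (timestamps, ad slots), so teams usually fingerprint only the extracted fields rather than the raw page.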

4. Infrastructure Complexity Is Underestimated

Scaling web data pipelines introduces challenges that go beyond scraping logic:

- Distributed crawling systems

- Proxy and IP rotation

- Handling dynamic and JavaScript-heavy content

- Failure recovery and monitoring

These are infrastructure problems, not scripting problems. They require sustained investment to manage effectively.

Build vs Managed Approach: A Practical Comparison

| Dimension | DIY Pipeline | Managed Web Data Service |
| --- | --- | --- |
| Setup speed | Fast for small use cases | Structured onboarding |
| Scalability | Breaks under high source volume | Designed for scale |
| Maintenance effort | High and continuous | Offloaded |
| Data quality consistency | Variable | Standardized |
| Freshness management | Manual and reactive | Automated and monitored |
| Total cost over time | Increases unpredictably | More predictable |

The Strategic Trade-Off

The decision is not simply build vs buy. It is a trade-off between:

- Control vs reliability

- Short-term efficiency vs long-term scalability

- Engineering time vs data quality assurance

Most teams initially optimize for control and speed. Over time, they realize that maintaining web data pipelines requires a level of operational commitment that diverts focus from core product development.

Why This Matters Specifically for ChatGPT Training

ChatGPT and other LLMs are highly sensitive to data quality, structure, and freshness. Any inconsistency in the data pipeline directly affects model performance.

This means that unreliable pipelines do not just create engineering overhead. They create:

- Model drift

- Reduced accuracy

- Poor user experience

In this context, web data pipelines are not just data infrastructure. They are model performance infrastructure.

How PromptCloud Enables Reliable Web Data Pipelines for LLM Training

From Extraction to Reliability: Where PromptCloud Fits

By this stage, the challenge is clear. Web data improves ChatGPT training, but only when it is consistently accurate, structured, and continuously updated.

This is where most internal pipelines fail.

PromptCloud is designed specifically to solve this gap. Instead of treating web scraping as a one-time extraction task, it delivers end-to-end web data pipelines that ensure data remains usable despite constant change across sources.

This matters because poor data quality is not just a technical issue. According to IBM, poor data quality costs organizations an average of $12.9 million per year, driven by inefficiencies, rework, and incorrect outputs. In LLM systems, this translates directly into degraded model performance.

What PromptCloud’s Web Data Pipeline Delivers

1. Continuous Data Collection Without Pipeline Breaks

PromptCloud’s infrastructure is designed to handle dynamic websites, anti-bot systems, and structural changes without constant manual intervention.

Instead of reacting to failures, the system is built to adapt to change and maintain continuity, ensuring that training datasets are consistently available.

2. Clean, Normalized, and Model-Ready Data

Raw web data is noisy and inconsistent. PromptCloud processes this data into structured, standardized formats that are directly usable for ChatGPT training.

This includes:

- Removal of duplicates and irrelevant content

- Schema standardization across sources

- Consistent formatting for downstream use

The result is high-signal datasets, not raw dumps.

3. Structured Outputs Aligned with LLM Training Needs

PromptCloud delivers data in formats that align with training pipelines, reducing preprocessing effort for engineering teams.

Instead of spending cycles on cleaning and structuring, teams can focus on:

- Model development

- Fine-tuning

- Application-level innovation

4. Built-In Quality Validation and Monitoring

Data quality is continuously monitored through validation layers that detect inconsistencies, anomalies, and freshness gaps.

This ensures that datasets do not silently degrade over time—a common issue in DIY pipelines.

5. Scalable Infrastructure Without Operational Overhead

PromptCloud handles the infrastructure complexity required to scale web data pipelines, including distributed crawling, access management, and large-scale data processing.

This removes the need for internal teams to maintain:

- Scraping infrastructure

- Proxy networks

- Monitoring systems

Why This Matters for ChatGPT and LLM Performance

For LLMs, the impact of data quality is direct and compounding.

| Without PromptCloud | With PromptCloud |
| --- | --- |
| Fragmented, inconsistent data | Structured, standardized datasets |
| Manual pipeline maintenance | Fully managed data delivery |
| Data freshness issues | Continuous updates |
| High preprocessing effort | Ready-to-use data |

PromptCloud effectively shifts your team's focus from:

- Data collection → data reliability

- Engineering overhead → data consumption


FAQs

1. What is web data for ChatGPT training and why is it important?

Web data for ChatGPT training refers to real-world content collected from websites, forums, and digital platforms to improve language models. It is important because it provides up-to-date, diverse, and context-rich information that helps LLMs generate more accurate and relevant responses.

2. How does web data improve LLM training data quality?

Web data improves LLM training data by adding real-world language patterns, domain-specific knowledge, and continuously updated information. This helps models better understand context, reduce generic responses, and improve accuracy across different use cases.

3. Does web data help reduce hallucinations in ChatGPT?

Yes, high-quality web data can reduce hallucinations in ChatGPT by improving factual grounding. When training datasets are accurate, structured, and regularly updated, models are less likely to generate incorrect or misleading outputs.

4. What challenges come with using web data for LLM training?

The main challenges include filtering noisy or irrelevant data, maintaining data freshness, ensuring compliance with regulations, and structuring unstructured content into usable training datasets. Without proper pipelines, these issues can degrade model performance.

5. How do enterprises collect and use web data for AI and machine learning?

Enterprises collect web data using scalable data pipelines that extract, clean, and structure information from multiple sources. This data is then used to train and update AI and machine learning models for applications such as chatbots, analytics, and decision systems.