Screen Scraping Isn’t What It Used to Be

Screen scraping — extracting data from rendered browser output — still works for specific fallback scenarios, but it’s no longer the primary approach in modern web data systems. Websites are now dynamic, API-driven, and actively protected by anti-bot systems. Reliable data extraction in 2026 requires hybrid architectures combining API interception, DOM parsing, and screen scraping as a last layer, with validation and monitoring built in. This article covers what screen scraping actually is today, why it breaks at scale, the five key technical shifts changing the field, and when to move from scripts to a managed data pipeline.

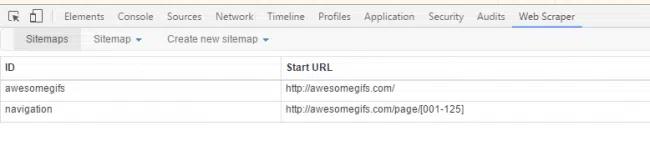

Screen scraping used to be simple. You could load a webpage, inspect the HTML, write a few selectors, and extract the data you needed. For static sites and small workflows, that approach still works. But that version of screen scraping no longer reflects how the web actually behaves.

Modern websites are dynamic, heavily scripted, and designed to control how data is accessed. Content is rendered through JavaScript, APIs are hidden behind layers, and anti-bot systems actively monitor traffic patterns. What looks like a page is often just a shell that loads data asynchronously.

This shift has changed the nature of data extraction. What used to be an extraction problem is now an access, reliability, and system design problem, which has pushed web scraping closer to data engineering than scripting. The focus is no longer on extracting fields, but on building pipelines that can handle change, validate outputs, and deliver consistent datasets over time.

Screen scraping, in its traditional sense, interacts with what is visible on the screen. It mimics a user, reads rendered output, and extracts information from it. That approach still has its place, especially when APIs are unavailable or systems are locked down.

However, relying only on screen scraping today introduces limitations:

- higher fragility when page structures change

- increased resource cost due to browser rendering

- difficulty scaling across multiple sources

- higher risk of detection and blocking

This is why most modern systems have moved beyond pure screen scraping. They combine multiple layers: crawling, API interception, DOM parsing, and validation pipelines. The goal is no longer just to extract data, but to ensure that data remains consistent, structured, and usable over time.

What Is a Screen Scraper?

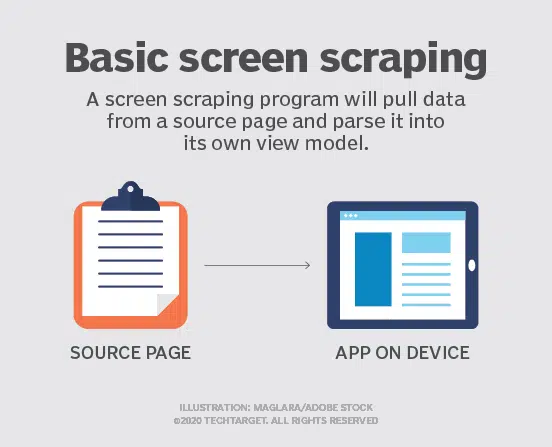

A screen scraper is no longer just a tool that “reads what’s on the screen.” That definition is outdated.

In practice, a screen scraper is a fallback extraction method used when direct data access is unavailable. It operates at the presentation layer, meaning it captures data from rendered output rather than structured sources like APIs or databases.

This distinction matters.

Traditional screen scrapers interact with:

- HTML rendered in the browser

- visual elements such as tables, lists, and text blocks

- sometimes even pixel-level data in legacy systems

They simulate user behavior, navigate interfaces, and extract information based on what is visible.

That approach still works in specific scenarios:

- legacy systems with no API access

- websites where data is only available post-render

- environments where backend endpoints are inaccessible

But in modern web ecosystems, this is rarely the most efficient path.

Most websites today separate data from presentation. The browser assembles the interface using API calls, client-side scripts, and dynamic rendering layers. A pure screen scraping approach ignores this underlying structure and instead extracts data from the most unstable layer.

That creates three core problems:

- fragility: small UI changes break extraction logic

- inefficiency: rendering full pages increases compute cost

- inconsistency: output varies depending on load states and dynamic content

Because of this, screen scraping is now best understood as one technique within a broader extraction strategy, not the strategy itself.

Modern systems prioritize:

- direct API capture when available

- structured DOM parsing over visual extraction

- hybrid approaches that combine multiple methods

Stop fixing scrapers. Start receiving reliable data

PromptCloud delivers structured web data pipelines without scraper maintenance.

Why Traditional Screen Scraping Breaks at Scale

Most teams don’t start with scale in mind. They build a scraper that works for a few pages, validate the output, and then expand it across more sources. The problem is that what works at a small scale rarely holds when the system becomes a dependency.

This is where traditional screen scraping starts to break. The first issue is change frequency. Websites are constantly updated. A minor UI change, a renamed class, or a layout shift can disrupt extraction logic. At a small scale, this is manageable. At scale, it turns into continuous maintenance.

The second issue is rendering overhead. Screen scraping relies on fully loading and rendering pages, often through headless browsers. This increases latency and infrastructure cost significantly when you are processing thousands or millions of pages.

Then comes access instability. As request volume increases, websites begin to detect patterns. Rate limits, CAPTCHAs, and IP blocks become more frequent. What was once a simple request pipeline turns into a system that requires proxy management, session handling, and behavioral tuning.

According to Imperva, nearly 47% of all internet traffic now comes from bots, making detection and blocking increasingly sophisticated — and stable scraping access harder to maintain at scale.

But the most critical issue is silent data failure. Scrapers often continue running even when they are broken. Fields may go missing, values may shift, or partial data may be extracted without triggering errors. Without validation layers, these issues go unnoticed and propagate into downstream systems.
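One way to catch this kind of silent failure is to compare how often each field is populated in the current run against a previous run. The sketch below is a minimal illustration, not a production monitor: the function names, the sample records, and the 20% drop threshold are all assumptions made for the example.

```python
from typing import Iterable


def fill_rates(records: Iterable[dict], fields: list[str]) -> dict[str, float]:
    """Fraction of records in which each field is present and non-empty."""
    records = list(records)
    if not records:
        return {f: 0.0 for f in fields}
    return {
        f: sum(1 for r in records if r.get(f) not in (None, "")) / len(records)
        for f in fields
    }


def silent_failures(baseline: dict[str, float],
                    current: dict[str, float],
                    max_drop: float = 0.2) -> list[str]:
    """Fields whose fill rate fell by more than max_drop versus the baseline."""
    return [f for f, base in baseline.items()
            if base - current.get(f, 0.0) > max_drop]


# Hypothetical data: the scraper still "runs", but price is no longer captured.
previous_run = [{"title": "A", "price": "9.99"}, {"title": "B", "price": "4.50"}]
current_run = [{"title": "A", "price": ""}, {"title": "B", "price": None}]
fields = ["title", "price"]

flagged = silent_failures(fill_rates(previous_run, fields),
                          fill_rates(current_run, fields))
print(flagged)
```

The run itself raises no error, which is the point: only the comparison against a baseline shows that a field has gone missing.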

This is where most teams misdiagnose the problem. They try to fix selectors or adjust scripts, assuming the issue is technical. In reality, the problem is structural. They are using a method designed for extraction as if it were a system designed for reliability.

Key Innovations Changing Web Data Extraction

The shift away from traditional screen scraping is being driven by a set of clear technological changes. These are not incremental improvements. They redefine how data is accessed, extracted, and maintained over time.

What matters now is not just extraction capability, but resilience, adaptability, and data reliability.

AI-Led Parsing and Adaptive Extraction

One of the biggest shifts is the use of AI to reduce dependency on rigid selectors.

Earlier, extraction relied heavily on fixed rules. If a class name changed or an element moved, the scraper broke. AI-led systems change this by understanding patterns rather than exact structures.

They can:

- identify similar data points across layout variations

- adapt to minor structural changes without manual updates

- distinguish between primary content and noise

This does not eliminate failures, but it reduces the frequency of manual intervention. The focus moves from fixing breakages to monitoring system behavior.

API Interception Over UI Extraction

Many modern websites do not embed their data in the initial HTML. The visible interface is often assembled from API calls made after the page loads.

Instead of extracting from rendered pages, newer systems intercept these network requests directly.

This approach offers clear advantages:

- cleaner, structured data

- lower compute cost compared to full page rendering

- reduced dependency on UI structure

Screen scraping still plays a role when APIs are inaccessible, but API-first extraction has become the preferred path wherever possible.
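As a rough illustration of why captured API responses are easier to work with than rendered pages, the sketch below filters a list of already-captured network responses and parses the JSON ones. The shape of the `captured` entries (`url`, `content_type`, `body`), the `/api/products` path, and the `items` key are assumptions for the example; how responses get captured depends on the browser or proxy tooling in use.

```python
import json


def extract_json_payloads(captured: list[dict], path_hint: str) -> list:
    """Parse JSON bodies from captured responses whose URL contains path_hint."""
    payloads = []
    for resp in captured:
        is_target = path_hint in resp.get("url", "")
        is_json = "json" in resp.get("content_type", "")
        if not (is_target and is_json):
            continue
        try:
            payloads.append(json.loads(resp["body"]))
        except (KeyError, json.JSONDecodeError):
            continue  # skip missing, truncated, or malformed bodies
    return payloads


# Hypothetical capture log: one HTML shell and one backend data call.
captured = [
    {"url": "https://example.com/", "content_type": "text/html",
     "body": "<html><div id='app'></div></html>"},
    {"url": "https://example.com/api/products?page=1",
     "content_type": "application/json",
     "body": '{"items": [{"name": "Widget", "price": 9.99}]}'},
]

payloads = extract_json_payloads(captured, "/api/products")
items = [item for p in payloads if isinstance(p, dict)
         for item in p.get("items", [])]
print(items)
```

The records arrive already structured, so no selectors are involved and a layout change on the page would not affect this step.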

Hybrid Extraction Architectures

No single method works across all websites.

This has led to hybrid systems that combine:

- crawling to discover pages

- API capture for structured data

- DOM parsing for fallback extraction

- browser automation when necessary

The system dynamically chooses the most efficient method based on the source. This flexibility is what allows modern pipelines to scale across diverse websites without breaking frequently.

Anti-Bot Adaptation as a System Layer

Avoiding detection is no longer a workaround. It is a core part of the architecture.

Advanced systems manage:

- request patterns and timing

- IP rotation and geo-distribution

- session persistence and headers

The goal is not to “bypass” detection once, but to maintain stable access over long periods. This shifts the problem from tactical fixes to system-level design.

Built-In Data Quality and Validation Layers

Extraction is no longer considered complete when data is collected.

Modern pipelines include validation mechanisms that check:

- schema consistency across runs

- completeness of extracted fields

- duplication and anomalies

This ensures that data remains usable even as source websites change. Without this layer, scale only amplifies errors.
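The three checks listed above can be sketched in a few lines. This is a minimal example under stated assumptions: the `REQUIRED_FIELDS` schema, the use of `url` as the duplicate key, and the sample batch are hypothetical, and a real pipeline would likely add range and anomaly checks on top.

```python
# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"url": str, "title": str, "price": float}


def validate_batch(records: list[dict]) -> tuple[list[dict], list[tuple[int, str]]]:
    """Split a batch into valid records and (index, reason) issues."""
    valid, issues, seen_urls = [], [], set()
    for i, rec in enumerate(records):
        # Completeness: every required field must be present and non-empty.
        missing = [f for f in REQUIRED_FIELDS if rec.get(f) in (None, "")]
        if missing:
            issues.append((i, f"missing: {', '.join(missing)}"))
            continue
        # Schema consistency: values must have the expected type.
        wrong = [f for f, t in REQUIRED_FIELDS.items() if not isinstance(rec[f], t)]
        if wrong:
            issues.append((i, f"wrong type: {', '.join(wrong)}"))
            continue
        # Duplication: keep only the first record per URL.
        if rec["url"] in seen_urls:
            issues.append((i, "duplicate url"))
            continue
        seen_urls.add(rec["url"])
        valid.append(rec)
    return valid, issues


batch = [
    {"url": "https://example.com/a", "title": "A", "price": 9.99},
    {"url": "https://example.com/a", "title": "A", "price": 9.99},   # duplicate
    {"url": "https://example.com/b", "title": "", "price": 4.5},     # empty title
    {"url": "https://example.com/c", "title": "C", "price": "4.50"}, # price as text
]
valid, issues = validate_batch(batch)
print(len(valid), issues)
```

Returning the issues alongside the valid records, rather than raising on the first problem, lets a pipeline keep delivering good rows while the rejected ones are counted and monitored.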

The Role of Screen Scraping in Modern Data Pipelines

Screen scraping has not disappeared. It has been repositioned.

In modern data architectures, it is no longer the primary method of extraction. Instead, it functions as a fallback layer within a broader system, used when more efficient or stable methods are not available.

This distinction is important because most real-world pipelines do not rely on a single approach.

A typical system today might:

- use crawlers to discover and prioritize pages

- capture API responses for structured data

- parse the DOM for predictable elements

- fall back to screen scraping when data is only available post-render

Screen scraping fits into this last layer.

It becomes relevant in scenarios where:

- data is rendered dynamically with no accessible API

- content is embedded within complex UI components

- legacy systems expose data only through visual interfaces

In these cases, screen scraping is still effective. But it comes with tradeoffs.

Compared to other methods, it is:

- slower due to full page rendering

- more sensitive to UI changes

- harder to standardize across multiple sources

This is why it is rarely used in isolation at scale. Instead, it is integrated into hybrid pipelines that can switch strategies depending on the source. The system decides whether to use API extraction, DOM parsing, or screen scraping based on efficiency and reliability.

This layered approach reduces dependency on any single method. It also ensures that when one method fails, another can take over without disrupting the entire pipeline.

The key shift is conceptual. Screen scraping is no longer the foundation of web data extraction. It is a supporting technique within a system designed for adaptability and continuity. That is how modern data pipelines stay resilient as the web continues to evolve.

Screen Scraping vs Modern Web Data Extraction Approaches

The real shift in this space is not about better scraping tools. It is about choosing the right extraction method based on the system requirement.

Most teams still default to screen scraping because it is familiar. But in modern pipelines, it is just one of several approaches, each with a different role, cost structure, and reliability profile.

Understanding this difference is what prevents long-term architectural mistakes.

Screen scraping operates at the presentation layer, while newer approaches aim to access data closer to the source.

Here is how they compare in real systems:

| Approach | How It Works | Strength | Limitation | When to Use |
| --- | --- | --- | --- | --- |
| Screen Scraping | Extracts data from rendered UI | Works when no API exists | Fragile, high cost at scale | Last-resort fallback |
| DOM Parsing | Extracts from HTML structure | Faster than rendering | Breaks on structural changes | Semi-stable pages |
| API Extraction | Captures backend data calls | Clean, structured data | Not always accessible | Preferred default |
| Hybrid Systems | Combines multiple methods | High reliability | Requires system design | Enterprise-scale pipelines |

The key takeaway is not that one method replaces another.

It is that modern systems orchestrate multiple methods together.

A page might initially be accessed via an API. If that fails, the system may fall back to DOM parsing. If the data is only visible after rendering, screen scraping is used as the final layer.
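The fallback order described above can be expressed as an ordered list of extractors that are tried until one returns data. The sketch below uses stub functions in place of real extractors; `from_api`, `from_dom`, and `from_rendered_page` are hypothetical names, and their behavior is hard-coded purely to show the control flow.

```python
from typing import Callable, Optional

Extractor = Callable[[str], Optional[dict]]


def extract_with_fallback(url: str, extractors: list[tuple[str, Extractor]]):
    """Try each extractor in order; return (method, data) for the first success."""
    for name, extractor in extractors:
        try:
            data = extractor(url)
        except Exception:
            continue  # a failing layer should not stop the chain
        if data:
            return name, data
    return None, None


# Stub extractors standing in for real implementations (assumptions).
def from_api(url: str) -> Optional[dict]:
    raise ConnectionError("endpoint not accessible")


def from_dom(url: str) -> Optional[dict]:
    return None  # data is not present in the static HTML


def from_rendered_page(url: str) -> Optional[dict]:
    return {"title": "Example"}


chain = [
    ("api", from_api),
    ("dom", from_dom),
    ("screen_scraping", from_rendered_page),
]
result = extract_with_fallback("https://example.com/item/1", chain)
print(result)
```

Recording which method succeeded is useful in practice: a source that keeps landing on the last layer is a candidate for closer inspection, since that is the most expensive path.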

This hierarchy is intentional.

It prioritizes:

- efficiency first

- stability second

- fallback coverage third

Teams that rely only on screen scraping miss this optimization layer. They end up solving every problem at the most expensive and fragile level of the stack.

That is why the conversation has shifted from “how to scrape” to “how to choose the right extraction path for each source.”

That decision is what defines whether a system scales or breaks.

Legal, Compliance, and Ethical Considerations

As web data extraction evolves, the technical challenges are no longer the only concern. Legal and compliance risks are now equally important, especially as data pipelines move from experimentation to business-critical systems.

Screen scraping, in particular, sits in a sensitive position because it interacts with the presentation layer, often without explicit data access agreements.

The first layer of risk comes from website terms of service. Many platforms explicitly restrict automated data collection. Ignoring these terms may not break your scraper, but it can expose the business to legal action.

The second layer is data ownership and usage rights. Extracting data is one thing. Storing, redistributing, or commercializing it is another. The risk increases significantly when scraped data is used in products, analytics platforms, or AI models.

Then comes privacy regulation.

Frameworks like GDPR and CCPA have changed how organizations must think about data collection. Scraping personal or identifiable data without consent introduces direct compliance risk. Even if the data is publicly accessible, how it is processed and stored matters.

Beyond regulation, there is also operational ethics.

Aggressive scraping can:

- overload target websites

- degrade service for other users

- trigger defensive mechanisms that disrupt access

Responsible systems account for this by:

- respecting rate limits

- following robots directives where applicable

- avoiding unnecessary request volume
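A small sketch of the first two points, using Python's standard `urllib.robotparser`. The robots rules are parsed from an inline list so the example runs without network access; the `example-bot` user agent, the rules themselves, the one-second default delay, and the `crawl` helper are assumptions for illustration.

```python
import time
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-bot"  # hypothetical crawler name

# Parse robots rules from text; in practice these would come from the site's robots.txt.
robots = RobotFileParser()
robots.parse([
    "User-agent: *",
    "Disallow: /private/",
    "Crawl-delay: 2",
])

# Use the site's requested delay if it declares one, else a conservative default.
delay = robots.crawl_delay(USER_AGENT) or 1.0


def crawl(urls, fetch, sleep=time.sleep):
    """Fetch only URLs permitted by robots rules, pausing between requests."""
    results = {}
    for url in urls:
        if not robots.can_fetch(USER_AGENT, url):
            continue  # skip disallowed paths entirely
        results[url] = fetch(url)
        sleep(delay)
    return results
```

The `fetch` and `sleep` arguments are injected so the pacing logic can be exercised without real requests or real waiting, for example `crawl(urls, fetch=my_http_get)` in a pipeline and a no-op `sleep` in tests.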

What is changing in modern systems is that compliance is no longer an afterthought.

It is being built into the pipeline itself.

This includes:

- access control policies

- audit logs for data collection

- filters to exclude sensitive data

- governance layers that define what can and cannot be collected
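As a rough sketch of how a sensitive-data filter and an audit log might sit inside a pipeline step, the example below drops blocked fields and redacts email-like strings while recording each action. The `BLOCKED_FIELDS` policy, the simplified email pattern, and the log entry shape are assumptions; a real governance layer would be driven by the organization's own policies.

```python
import re
from datetime import datetime, timezone

BLOCKED_FIELDS = {"email", "phone"}  # hypothetical collection policy
# Deliberately simple pattern for illustration; not a full email validator.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def apply_policy(record: dict, audit_log: list) -> dict:
    """Return a copy of record with sensitive data removed, logging each action."""
    cleaned = {}
    for field, value in record.items():
        if field in BLOCKED_FIELDS:
            audit_log.append(_entry(field, "dropped"))
            continue
        if isinstance(value, str) and EMAIL_PATTERN.search(value):
            value = EMAIL_PATTERN.sub("[redacted]", value)
            audit_log.append(_entry(field, "redacted"))
        cleaned[field] = value
    return cleaned


def _entry(field: str, action: str) -> dict:
    """Build one audit log entry with a UTC timestamp."""
    return {"field": field, "action": action,
            "at": datetime.now(timezone.utc).isoformat()}


audit_log = []
record = {"name": "Acme", "email": "a@b.com", "bio": "Contact sales@acme.com today"}
cleaned = apply_policy(record, audit_log)
print(cleaned)
```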

The Road Ahead: What Will Define the Next Phase

The next phase of web data extraction will not be defined by faster scrapers. It will be defined by systems that can operate continuously in unstable environments.

The web is becoming more dynamic, more protected, and more fragmented. Extraction systems are adapting in response.

The first shift is toward event-driven data collection.

Instead of scraping on fixed schedules, systems are moving toward detecting changes and triggering extraction when something actually updates. This reduces unnecessary load, improves freshness, and aligns data collection with real-world changes.
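One simple way to approximate this is to fingerprint each source's content and trigger extraction only when the fingerprint changes. The sketch below hashes whitespace-normalized content with SHA-256; the function names and the in-memory `last_seen` store are assumptions, and real systems may rely on other signals such as sitemaps, feeds, or HTTP caching headers.

```python
import hashlib


def fingerprint(content: str) -> str:
    """Hash content after collapsing whitespace, so formatting noise is ignored."""
    normalized = " ".join(content.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def changed_sources(snapshots: dict[str, str], last_seen: dict[str, str]) -> list[str]:
    """Return URLs whose content differs from the stored fingerprint, updating the store."""
    changed = []
    for url, content in snapshots.items():
        digest = fingerprint(content)
        if last_seen.get(url) != digest:
            changed.append(url)
            last_seen[url] = digest
    return changed


# Hypothetical snapshots taken at two points in time.
store: dict[str, str] = {}
first = changed_sources({"https://example.com/p/1": "Price: 10"}, store)
second = changed_sources({"https://example.com/p/1": "Price:   10"}, store)
third = changed_sources({"https://example.com/p/1": "Price: 12"}, store)
print(first, second, third)
```

Only the first and third checks would trigger extraction here: the second snapshot differs in whitespace alone, which the normalization step treats as unchanged.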

The second shift is deeper integration with AI systems.

Data is no longer just collected for dashboards. It feeds directly into models, decision engines, and automated workflows. This increases the cost of bad data. As a result, pipelines are being designed with stronger validation, lineage tracking, and feedback loops.

Another key trend is infrastructure abstraction.

Teams are moving away from managing scraping infrastructure internally. Instead, they are focusing on consuming structured datasets while external systems handle crawling, extraction, and maintenance. This is similar to how cloud computing replaced on-premise infrastructure.

There is also a growing emphasis on data freshness and continuity.

It is no longer enough to collect data once. Systems need to ensure:

- consistent update cycles

- minimal data gaps

- reliable historical tracking

This is especially critical for use cases like pricing intelligence, financial monitoring, and AI training pipelines.

Finally, access control will continue to tighten.

Websites are investing more in anti-bot technologies and controlled data exposure. This will push extraction systems to become more adaptive, but it will also increase the importance of compliant and sustainable data strategies.

PromptCloud operates in this model, delivering structured web datasets through managed pipelines while handling crawling, extraction, and maintenance externally.

The Future of Screen Scraping and Web Data Extraction

Traditional screen scraping stops at pulling fields off a rendered page. Modern extraction systems take a different approach. They prioritize direct data access where possible, combine multiple extraction methods, and add layers for validation, monitoring, and governance. The goal is not just to get data, but to ensure that the data remains usable over time.

That is the real transition.

The problem has moved from extraction to reliability. From writing scripts to operating pipelines. From accessing data once to maintaining it continuously.

Teams that recognize this early build systems that scale with the web. Teams that do not end up maintaining brittle workflows that break as soon as the environment changes.

The future of web data extraction will not be defined by better scrapers. It will be defined by systems that can adapt, validate, and deliver data consistently, even as the sources themselves keep evolving.

FAQs

1. What is the difference between screen scraping and data scraping?

Screen scraping extracts data from what is visually rendered on a screen, often using browser automation. Data scraping, on the other hand, targets structured sources like HTML or APIs.

In modern systems, data scraping is preferred because it is faster and more stable, while screen scraping is used only when no direct data access exists.

2. Why do screen scrapers fail on dynamic websites?

Dynamic websites load content using JavaScript after the initial page request. Scrapers that read only the static HTML miss this data or capture incomplete outputs.

To handle this, systems either use headless browsers to render pages fully or intercept backend API calls directly.

3. How much data can you realistically scrape at scale?

The limitation is not volume, but infrastructure and access stability.

At scale, scraping requires:

- distributed request handling

- proxy and session management

- failure recovery systems

Without these, even moderate-scale scraping becomes unreliable.

4. What are the biggest challenges in maintaining scraping systems long-term?

The most common issues are:

- frequent website structure changes

- increasing anti-bot protections

- silent data quality degradation

The long-term challenge is not building the scraper, but maintaining consistent output without constant manual fixes.

5. When should you stop using DIY scraping and move to a managed solution?

This shift typically happens when:

- scraping becomes business-critical

- engineering time is spent on maintenance instead of development

- data accuracy directly impacts decisions or models

At this point, the cost of maintaining internal scraping systems often exceeds the cost of adopting a managed data pipeline.