This post is about DIY web scraping tools. If you are looking for a fully customizable web scraping solution, you can add your project on CrawlBoard.

How to Extract Data from a Website Using Google Docs?

We’ve been using Google docs for creating spreadsheets and other documents for all this while. Did you know that Google’s own office suite could do a lot more than that? It is actually easy to crawl data from websites using Google docs by learning a few simple functions.

Thankfully, Google docs web scraping method doesn’t require you to be a programmer or technical person at all. If you are fairly used to Google sheets, you can learn this easy hack and level up your web scraping skills. We will be using two Google docs functions for this; ImportHTML and ImportFeed. Translating the text from one language to another, reading RSS feeds and monitoring website changes are a few of the great applications of this handy feature. Let’s get started with the tutorial!

Web Scraping using Google Sheets

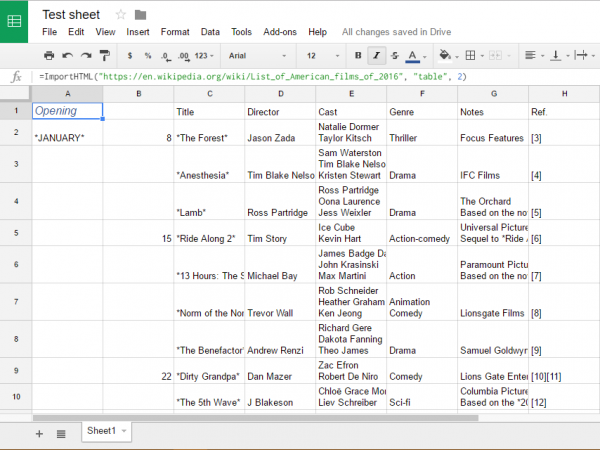

ImportHTML is a handy Google formula that can be used to fetch tables and lists from web pages on the internet into a Google sheet.

Step 1:

Let’s begin with an example. Create a new spreadsheet file inside Google docs.

Step 2:

Double click on a random cell to get into the edit mode.

Step 3:

Copy and paste the following function into the selected cell and press ‘Enter’:

=ImportHTML(“https://en.wikipedia.org/wiki/List_of_American_films_of_2016”, “table”, 2)

When you press enter, Google docs will immediately start importing the second table from the Wikipedia page in question into the current Google sheet. You can also replace the value of the third parameter in this formula from 2 to something else, like 3 or 4. In that case, Google docs will fetch the third or fourth table from the page instead of the second. This way, you can automate fetching data from tables on any web page on the internet.

It is not just that you can fetch into Google docs using the importHTML function. This function can also be used to import HTML lists that are made using the

-

- or <0l> tags which make it somewhat versatile.

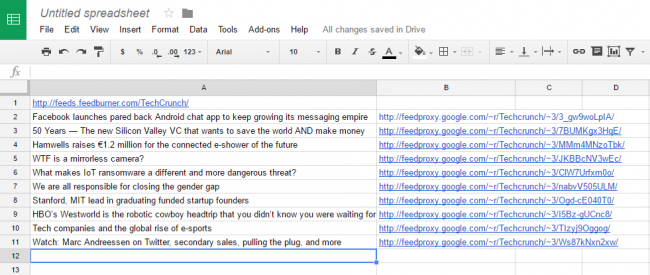

Fetching RSS feeds into Google sheets

Fetching RSS feeds into your Google sheets is made possible by the ImportFeed function. Here is how to go about doing it.

Step 1:

Open a new Google sheet and type in the URL of the RSS feed in cell A1.

Step 2:

Type =ImportFeed(A1, “items title”, FALSE, 10) in cell A2

(This will fetch the titles of the newest 10 posts in that feed into column A)

Step 3:

TYPE =ImportFeed(A1, “items url”, FALSE, 10) in cell B2

(This will fetch the permalinks of these posts in column B)

Step 4:

Type =HyperLink(B2, A2) in cell C2

(This function will combine the titles and URLs to form clickable hyperlinks in the sheet)

Step 5:

Copy the formula in cell C2 into cells C3 until C11

If you want to track price fluctuations for some products on an ecommerce website, the ImportXML function can be used along with these to achieve the same. We will come up with the next part of this series soon, where that will be discussed.

Bottom line

These Google Docs functions can come in handy if you are looking for data to do some research on the web or want to create a database of your own for small to medium requirements. However, this won’t be suitable for more complicated web data extraction requirements. This function would require the data to be presented on the webpage in the table format. For more complex web scraping requirements, it’s better to rely on a web scraping service provider. Moreover, it is always recommended to outsource the scraping process when it comes to large scale data acquisition for business.