PromptCloud Inc, 16192 Coastal Highway, Lewes, DE 19958, USA

How it Works

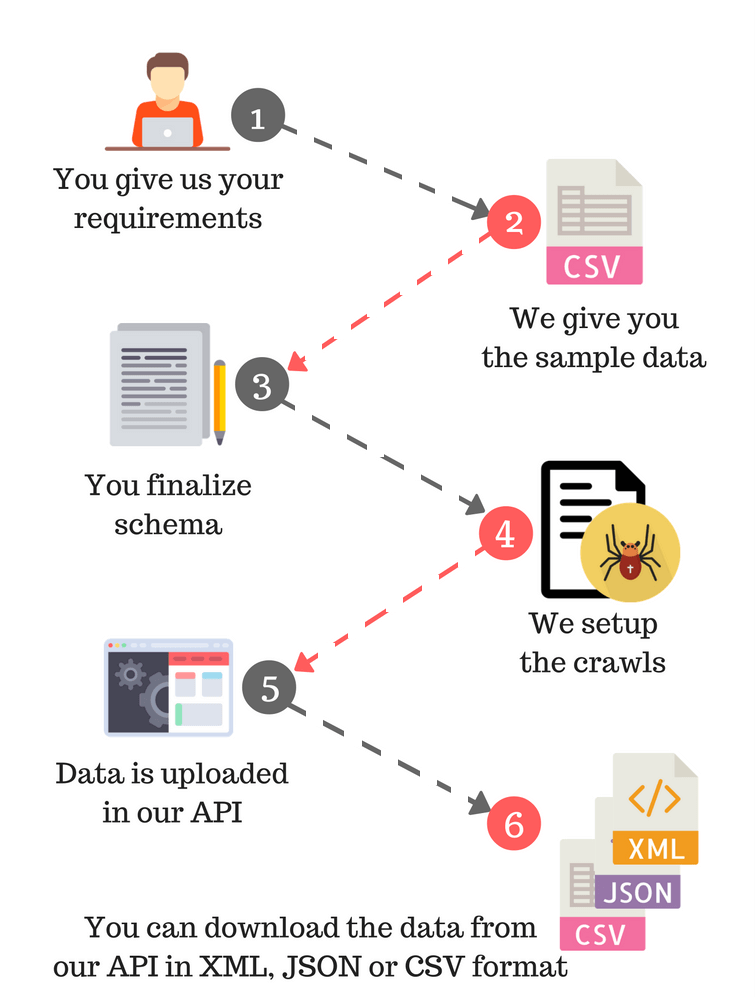

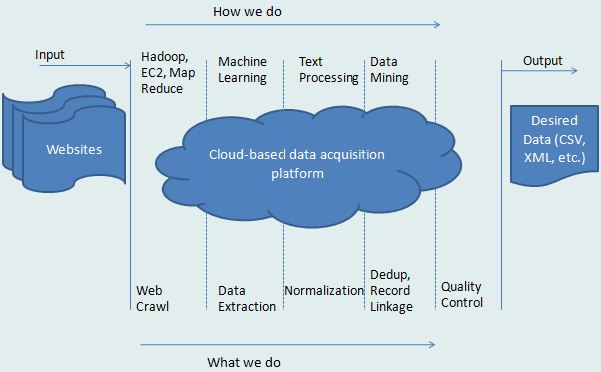

As a DaaS (Data as a Service) provider, we keep the setup process simple. All you have to do is provide us with your requirements, which mainly consist of three parts:

1. Sites to crawl

2. Fields to be extracted

3. Desired frequency of crawls

Once you have provided your requirements, we send you a set of sample data that shows what the final output will look like. You can then finalize the schema and tell us if any changes are needed. After the schema is finalized, we set up custom crawlers to acquire structured data sets at scale from the list of sites you provide, in your desired schema and at predefined intervals. Data can be delivered in XML, JSON, or CSV format via our RESTful API, or downloaded directly from CrawlBoard. We can also push data to your FTP, SFTP, AWS, Dropbox, Azure, Box, or Google Drive account. Data output can be normalized based on client-specified rules.
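To make the three inputs and the three delivery formats concrete, here is a minimal Python sketch. It is illustrative only and does not use or describe PromptCloud's actual API: the `CrawlRequirements` class, the field names, the sample record, and the helper functions are all hypothetical, and only the Python standard library is used.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET
from dataclasses import dataclass


@dataclass
class CrawlRequirements:
    """Hypothetical container for the three inputs a client provides."""
    sites: list[str]    # sites to crawl
    fields: list[str]   # fields to be extracted (the schema)
    frequency: str      # desired crawl frequency; the value format is assumed


def to_json(records: list[dict[str, str]]) -> str:
    """Serialize records as a JSON array."""
    return json.dumps(records, indent=2)


def to_csv(records: list[dict[str, str]], fields: list[str]) -> str:
    """Serialize records as CSV with a header row in schema order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fields, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_xml(records: list[dict[str, str]]) -> str:
    """Serialize records as XML, one <record> element per row."""
    root = ET.Element("records")
    for record in records:
        item = ET.SubElement(root, "record")
        for name, value in record.items():
            ET.SubElement(item, name).text = str(value)
    return ET.tostring(root, encoding="unicode")


# Made-up example values, not real client data.
requirements = CrawlRequirements(
    sites=["https://example.com"],
    fields=["title", "price"],
    frequency="daily",
)
sample_records = [{"title": "Widget", "price": "19.99"}]

print(to_json(sample_records))
print(to_csv(sample_records, requirements.fields))
print(to_xml(sample_records))
```

The same list of records feeds all three serializers, which mirrors the idea that the schema is fixed once and the output format is only a delivery choice.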

Yes, it’s this simple. You don’t have to be involved in any part of the process after giving us your requirements. Just sit back and receive the data you asked for, regularly and on time.

Technologies We Use

Our service has been designed to account for a variety of use cases and the non-uniform formats found on the web. It deep crawls the web and uses machine learning components for extraction. Client-specific normalization can also be added to the pipeline.
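As a rough illustration of what a client-specific normalization step could look like, the sketch below applies per-field rules to one extracted record. This is an assumption about the general shape of such a step, not a description of PromptCloud's pipeline; the rule names, field names, and sample values are hypothetical.

```python
from typing import Callable

# A rule is any function that takes a raw string and returns a cleaned string.
Rule = Callable[[str], str]


def strip_currency(value: str) -> str:
    """Remove a dollar sign and thousands separators from a price string."""
    return value.replace("$", "").replace(",", "").strip()


def normalize_record(
    record: dict[str, str], rules: dict[str, list[Rule]]
) -> dict[str, str]:
    """Apply each field's rules in order; fields without rules pass through."""
    normalized = {}
    for field, value in record.items():
        for rule in rules.get(field, []):
            value = rule(value)
        normalized[field] = value
    return normalized


# Hypothetical client rules, keyed by field name.
client_rules: dict[str, list[Rule]] = {
    "title": [str.strip],
    "price": [strip_currency],
    "currency": [str.upper],
}

raw_record = {"title": "  Widget ", "price": "$1,299.00", "currency": "usd"}
clean_record = normalize_record(raw_record, client_rules)
print(clean_record)
```

Keeping the rules in a plain mapping means a client's rule set can change without touching the extraction code that produces the raw records.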

Built on Open-Source

It all started with open-source technologies. PromptCloud has gradually built on top of them to design its own state-of-the-art crawler, which handles both scale and failovers.

What Our Clients Say

With PromptCloud, it became evident that focus on quality and timeliness were paramount. They have helped us grow our business without compromises.

The team at PromptCloud has done an excellent job in providing me with a custom set of scraping data from multiple websites. I would have no hesitation in recommending them.

PromptCloud was by far the best data provider we have worked with. They really understand the domain space, and have worked very diligently to meet our requirements in record time.